Energy optimization conversations in data centers often revolve around advanced cooling technologies, yet the underlying physics of heat movement receives far less attention than it deserves. Operators continue to invest in sophisticated systems while overlooking the subtle degradation of heat exchange surfaces that quietly undermines performance. This disconnect creates a gap between installed cooling capacity and actual thermal effectiveness within operational environments. As workloads intensify and thermal densities increase, the margin for inefficiency narrows without drawing visible alarms. Heat transfer inefficiency rarely triggers immediate system failures, which allows it to persist unnoticed over extended operational periods. The result emerges not as a sudden breakdown but as a gradual escalation in energy consumption that resists easy diagnosis.

Thermal energy always follows predictable physical laws, yet real-world systems deviate due to material conditions and operational wear. Surface degradation, fluid impurities, and airflow disruptions introduce resistance that directly interferes with heat exchange processes. Engineers often interpret rising energy consumption as a need for additional capacity rather than a sign of declining efficiency. This misinterpretation shifts investments toward expansion instead of optimization, which compounds the problem over time. Heat transfer, unlike mechanical components, does not fail dramatically but erodes progressively through micro-level inefficiencies. Recognizing this pattern requires a shift in perspective from equipment-centric thinking to thermodynamic analysis.

Modern infrastructure amplifies this issue due to increasing computational intensity and compact system design. High-density racks generate concentrated heat loads that demand consistent and efficient removal to maintain operational stability. Any disruption in thermal pathways introduces localized stress that spreads across the system in cascading effects. Operators frequently compensate by lowering temperature setpoints, which increases energy demand without addressing the root cause. This pattern masks inefficiency rather than resolving it, creating a cycle of escalating energy use. Understanding heat transfer as the foundational layer of cooling efficiency becomes essential for reversing this trend.

Heat Transfer Losses: The Invisible Driver of Rising Energy Intensity

Heat transfer losses develop silently within systems that appear to function normally under standard monitoring conditions. These losses arise when thermal energy encounters resistance during its movement from heat sources to cooling media. Even minor surface degradation or fluid contamination can disrupt this process enough to raise energy consumption measurably, with the size of the increase depending on system conditions and the severity of the degradation. The system compensates by drawing more power to maintain target temperatures, which creates a hidden energy burden. Because IT loads remain stable, operators often attribute the increased consumption to external factors rather than internal inefficiencies. This disconnect allows the problem to persist without targeted intervention.

Thermal resistance acts as a barrier that slows the transfer of heat, forcing cooling systems to operate longer and harder. Heat exchangers, coils, and piping networks experience gradual performance decline as deposits accumulate over time. These changes rarely produce immediate operational alarms, which makes them difficult to detect through conventional monitoring systems. Energy intensity increases incrementally, often blending into baseline variability rather than standing out as an anomaly. This gradual escalation creates a compounding effect that becomes significant over extended periods. Identifying these losses requires a deeper focus on thermodynamic performance metrics rather than surface-level indicators.

Operational environments further complicate detection because multiple systems interact simultaneously within cooling chains. A slight inefficiency in one component influences the performance of others, creating a network of interdependent effects. This interconnected behavior obscures the origin of inefficiency and complicates troubleshooting efforts. Engineers may optimize individual components without realizing that underlying heat transfer issues persist. The system continues to consume excess energy despite apparent improvements in isolated areas. Addressing heat transfer losses at their source offers a more effective pathway to reducing overall energy intensity.

The Thermodynamic Penalty of Fouling in Cooling Systems

Fouling introduces a measurable thermodynamic penalty by increasing resistance at the interface where heat exchange occurs. Deposits such as mineral scaling, biological growth, and particulate accumulation create insulating layers that disrupt thermal conductivity. These layers reduce the efficiency of heat exchangers by limiting direct contact between surfaces and cooling media. As resistance increases, systems require higher energy input to maintain the same level of thermal performance. This relationship directly links physical surface conditions to energy consumption patterns. The impact remains present across various cooling technologies, although the magnitude of performance degradation varies depending on system design and operating conditions.
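The penalty described above can be illustrated with the standard series-resistance relation for a heat exchanger, in which a fouling resistance is added to the reciprocal of the clean overall heat transfer coefficient. The sketch below is only an illustration under stated assumptions: the function names are hypothetical, and the clean coefficient and fouling resistances are round example values, not measurements from any real system.

```python
def fouled_u(u_clean: float, r_fouling: float) -> float:
    """Overall heat transfer coefficient (W/m^2.K) once a fouling
    resistance (m^2.K/W) is added in series with the clean surface."""
    if u_clean <= 0 or r_fouling < 0:
        raise ValueError("u_clean must be positive and r_fouling non-negative")
    return 1.0 / (1.0 / u_clean + r_fouling)


def capacity_loss_fraction(u_clean: float, r_fouling: float) -> float:
    """Fraction of the clean coefficient lost to the fouling layer."""
    return 1.0 - fouled_u(u_clean, r_fouling) / u_clean


if __name__ == "__main__":
    u_clean = 2000.0  # assumed illustrative value, W/m^2.K
    for r_f in (0.0, 0.0001, 0.0002, 0.0005):  # assumed values, m^2.K/W
        loss = capacity_loss_fraction(u_clean, r_f)
        print(f"R_f={r_f:.4f}  U={fouled_u(u_clean, r_f):7.1f}  loss={loss:.1%}")
```

With these example numbers, a fouling resistance of 0.0005 m^2.K/W halves the overall coefficient, which is why a thin deposit can matter far more than its thickness suggests.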

Cooling systems rely on clean and conductive surfaces to facilitate efficient heat exchange under continuous operation. Even slight fouling can alter flow dynamics, which further reduces heat transfer efficiency. Fluid pathways become restricted, leading to uneven temperature distribution and localized inefficiencies. These disruptions force pumps and chillers to compensate by increasing operational intensity. Over time, this compensation becomes normalized within the system, masking the underlying inefficiency. Addressing fouling requires proactive maintenance strategies rather than reactive responses to performance decline.

Thermodynamic penalties extend beyond individual components and influence the entire cooling ecosystem. Increased resistance in one section creates a ripple effect that alters system balance and performance. Chillers operate under higher loads, pumps experience increased strain, and airflow systems adjust to compensate for uneven heat removal. These adjustments consume additional energy without improving overall efficiency. The system achieves stability at the cost of increased energy expenditure. Eliminating fouling restores thermal pathways and reduces the need for compensatory energy use.

Cooling systems function as interconnected chains where each component influences the performance of others. A small inefficiency in heat transfer at one point can amplify as it propagates through the system. This amplification occurs because downstream components must compensate for upstream performance losses. Pumps increase flow rates, chillers operate longer cycles, and fans adjust speeds to maintain thermal balance. Each adjustment adds incremental energy consumption that accumulates across the system. The result is a disproportionate increase in total energy use relative to the initial inefficiency.
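One reason the compensation is disproportionate can be sketched with the idealized affinity laws for pumps and fans, under which shaft power scales with the cube of flow. This is a simplification: it assumes a variable-speed machine on a fixed system curve with no static head, and the function name is hypothetical.

```python
def affinity_power_ratio(flow_ratio: float) -> float:
    """Idealized affinity law: power ratio equals flow ratio cubed.

    Assumes a variable-speed pump or fan on an unchanged system curve
    with no static head; real machines deviate from this.
    """
    if flow_ratio <= 0:
        raise ValueError("flow_ratio must be positive")
    return flow_ratio ** 3


if __name__ == "__main__":
    for extra_flow in (0.05, 0.10, 0.20):  # assumed compensation levels
        ratio = affinity_power_ratio(1.0 + extra_flow)
        print(f"+{extra_flow:.0%} flow -> +{ratio - 1.0:.1%} power")
```

Under these assumptions, pushing 10 percent more flow to offset a heat transfer shortfall costs roughly 33 percent more pump or fan power.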

Amplification effects often remain hidden because monitoring systems focus on individual component performance rather than system-wide interactions. Engineers may observe acceptable performance metrics in isolated units while overlooking cumulative inefficiencies. This fragmented view prevents accurate diagnosis of energy consumption patterns. Heat transfer inefficiency acts as a multiplier within these systems, magnifying its impact beyond its immediate location. Addressing the root cause requires a holistic understanding of system dynamics rather than isolated optimization efforts. This approach aligns energy management with thermodynamic principles rather than mechanical adjustments.

System design further influences amplification by determining how effectively components interact under varying conditions. Poorly balanced systems exhibit greater sensitivity to minor inefficiencies, which increases the risk of energy escalation. High-density environments intensify this effect due to concentrated heat loads and limited thermal margins. Small disruptions in heat transfer can trigger significant compensatory responses across the system. These responses create feedback loops that reinforce inefficiency rather than resolving it. Optimizing heat transfer at the source reduces amplification and stabilizes overall system performance.

Cooling systems exist to facilitate heat removal, yet discussions often focus on mechanical components rather than the physics of heat exchange. This perspective overlooks the fundamental role of heat transfer in determining system efficiency. Cooling energy consumption directly reflects how effectively heat moves through the system from source to rejection point. When heat transfer efficiency declines, systems must expend more energy to achieve the same outcome. This relationship positions heat transfer as a foundational determinant of cooling energy performance alongside system design, airflow management, and control strategies. Recognizing this connection shifts optimization strategies toward maintaining thermal pathways.

Mechanical improvements alone cannot fully compensate for poor heat transfer conditions within the system, although they may partially mitigate performance losses. Advanced chillers and high-performance fans still depend on efficient thermal exchange to operate effectively. Without proper heat transfer, these components operate under increased stress and reduced efficiency. This dynamic creates a mismatch between technological capability and actual performance outcomes. Engineers often attempt to resolve this mismatch through additional capacity rather than addressing underlying inefficiencies. Focusing on heat transfer restores alignment between system design and operational performance.

Reframing cooling energy as a heat transfer problem encourages a more precise approach to energy optimization. It highlights the importance of maintaining clean surfaces, balanced flow dynamics, and consistent thermal conductivity. This perspective integrates thermodynamics into operational decision-making rather than treating it as a background concept. Heat transfer efficiency becomes a measurable and actionable parameter within energy management strategies. Improving this parameter reduces energy consumption without requiring major infrastructure changes. This approach offers a more sustainable pathway to long-term efficiency gains.

The Shrinking Margin Between Heat Generation and Heat Rejection

AI-driven workloads continue to increase computational intensity, which compresses the thermal margin between heat generation and heat rejection within data center environments. Systems now operate closer to their thermal limits, leaving minimal room for inefficiency in heat transfer processes. Even slight resistance in thermal pathways can disrupt equilibrium and force cooling systems to compensate through increased energy use. This compression transforms minor inefficiencies into significant operational challenges that affect overall energy performance. Engineers must manage heat removal with greater precision as margins narrow across infrastructure layers. Maintaining optimal heat transfer efficiency becomes critical for sustaining stable operations under these conditions.

Thermal density amplifies the consequences of inefficient heat transfer because localized heat accumulation spreads quickly in tightly packed environments. Components experience higher stress when heat removal does not match heat generation rates in real time. This imbalance forces systems to operate defensively by increasing cooling intensity beyond optimal levels. Such responses stabilize temperatures but introduce additional energy consumption that could have been avoided. The shrinking margin eliminates tolerance for gradual degradation in thermal performance. Operators must treat heat transfer efficiency as a continuously managed parameter rather than a static design feature.

High-performance computing environments illustrate how sensitive systems become when thermal margins compress. Small inefficiencies that once remained inconsequential now trigger measurable impacts on system stability and energy use. Cooling systems respond dynamically, often overshooting requirements to prevent overheating risks. This behavior reflects a lack of precise heat transfer control rather than insufficient cooling capacity. Addressing the root cause requires restoring efficient thermal pathways rather than increasing mechanical output. Effective management of heat transfer ensures that shrinking margins do not translate into escalating energy demand.

Overcooling emerges as a common response to inefficient heat transfer within data center environments. Operators lower temperature setpoints to counteract uneven or insufficient heat removal across systems. This approach creates a buffer against thermal instability but introduces unnecessary energy consumption. Cooling systems operate beyond their optimal range, which reduces efficiency and increases operational costs. The root issue remains unresolved because heat transfer inefficiencies persist beneath the surface. Addressing overcooling requires identifying and correcting the underlying thermal resistance within the system.
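The energy cost of a lower setpoint can be approximated with the Carnot limit on a chiller's coefficient of performance, which falls as the temperature lift grows. The sketch below is a rough illustration only: it treats the chilled-water and heat-rejection temperatures as stand-ins for evaporating and condensing temperatures, and it assumes a real chiller reaches a fixed fraction of the Carnot value. The temperatures, the 0.5 fraction, and the function names are all assumptions.

```python
def carnot_cop(cold_c: float, hot_c: float) -> float:
    """Ideal cooling COP between a cold and a hot temperature (deg C)."""
    cold_k = cold_c + 273.15
    hot_k = hot_c + 273.15
    if hot_k <= cold_k:
        raise ValueError("hot side must be warmer than cold side")
    return cold_k / (hot_k - cold_k)


def compressor_power_kw(heat_load_kw: float, cold_c: float, hot_c: float,
                        carnot_fraction: float = 0.5) -> float:
    """Electrical power needed to remove heat_load_kw, assuming the
    chiller achieves a fixed fraction of the Carnot COP."""
    return heat_load_kw / (carnot_fraction * carnot_cop(cold_c, hot_c))


if __name__ == "__main__":
    load_kw, reject_c = 1000.0, 35.0  # assumed heat load and rejection temp
    baseline = compressor_power_kw(load_kw, 12.0, reject_c)
    overcooled = compressor_power_kw(load_kw, 7.0, reject_c)
    print(f"12 C setpoint: {baseline:.0f} kW")
    print(f" 7 C setpoint: {overcooled:.0f} kW")
    print(f"increase: {overcooled / baseline - 1.0:.1%}")
```

In this idealized model, dropping the cold side from 12 C to 7 C against a 35 C rejection temperature raises compressor power by about 24 percent for the same heat load, regardless of the assumed Carnot fraction.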

Cooling systems rely on precise heat exchange to maintain stable operating conditions without excessive energy use. When heat transfer efficiency declines, systems struggle to achieve uniform temperature distribution across infrastructure. This imbalance leads to localized hotspots that trigger compensatory cooling measures. Operators may interpret these hotspots as indicators of insufficient cooling capacity rather than inefficient heat transfer, particularly in environments with limited thermal diagnostics. The response involves increasing cooling intensity instead of improving thermal pathways. This pattern perpetuates energy waste while masking the actual source of inefficiency.

Overcooling also affects system longevity by introducing unnecessary thermal stress on components. Rapid fluctuations in temperature create conditions that accelerate material wear and reduce reliability. These effects compound operational challenges and increase maintenance requirements over time. Energy consumption rises as systems work harder to maintain artificially low temperatures. Eliminating the need for overcooling requires restoring consistent and efficient heat transfer across all components. This approach aligns energy use with actual thermal requirements rather than compensatory adjustments.

Restoring Thermal Conductivity vs. Expanding Cooling Capacity

Organizations often face a choice between improving existing system performance and expanding cooling infrastructure to meet rising demands. Expanding capacity appears to offer a straightforward solution, yet it does not address underlying inefficiencies in heat transfer. Additional cooling systems increase complexity and energy consumption without resolving root causes. Restoring thermal conductivity within existing systems provides a more effective approach to energy optimization. Clean surfaces and efficient heat exchange pathways enable systems to operate at designed performance levels. This strategy reduces the need for costly and energy-intensive infrastructure expansion. (https://www.energy.gov/eere/amo/articles/understanding-heat-exchanger-fouling)

Thermal conductivity determines how effectively heat moves through materials and interfaces within cooling systems. Deposits and surface degradation lower the effective conductivity of the heat path, which reduces overall system efficiency and forces compensatory energy use. Restoring conductivity involves removing deposits, improving fluid quality, and maintaining optimal operating conditions. These actions enhance heat transfer without requiring significant capital investment. The resulting efficiency gains can, in many cases, exceed those achieved through capacity expansion alone, depending on baseline system conditions and operational constraints. Focusing on thermal conductivity aligns operational improvements with fundamental physical principles.

Capacity expansion introduces additional challenges related to integration and system balance. New components must interact seamlessly with existing infrastructure to achieve intended performance outcomes. Any mismatch can create further inefficiencies that offset potential benefits. Improving heat transfer efficiency within current systems avoids these complications and delivers immediate results. This approach emphasizes optimization over expansion, which supports sustainable energy management practices. Restoring thermal conductivity offers a direct pathway to reducing energy consumption while maintaining operational stability.

The Energy Cost of Temperature Gradients Inside Infrastructure

Temperature gradients develop when heat removal occurs unevenly across different parts of a system. These gradients create zones of varying thermal intensity that disrupt overall efficiency. Hotspots emerge in areas where heat transfer remains insufficient, while other regions experience excessive cooling. This imbalance forces systems to compensate by increasing overall cooling output. The result is higher energy consumption without achieving uniform thermal conditions. Managing temperature gradients requires consistent and efficient heat transfer across all components.

Uneven heat distribution affects airflow dynamics within data center environments. Air moves preferentially through paths of least resistance, which can bypass areas with higher thermal loads. This behavior reduces the effectiveness of cooling systems and increases reliance on compensatory measures. Operators often respond by increasing airflow rates, which raises energy consumption further. Addressing the root cause involves improving heat transfer at the source rather than adjusting airflow patterns. Balanced thermal conditions enhance system efficiency and reduce unnecessary energy use.

Temperature gradients also influence equipment performance and reliability over time. Components exposed to higher temperatures experience increased stress and reduced lifespan. Cooling systems must work harder to maintain acceptable operating conditions in these areas. This dynamic creates a cycle of inefficiency that extends across the entire infrastructure. Eliminating gradients through improved heat transfer restores balance and reduces energy demand. Consistent thermal conditions support both operational stability and energy optimization.

Heat Transfer Optimization as a Load Reduction Strategy

Heat transfer optimization offers a unique approach to reducing energy demand without altering computational output. Efficient heat exchange allows systems to maintain target temperatures with lower energy input. This dynamic effectively reduces the load on cooling infrastructure while preserving performance levels. Operators often focus on efficiency as a ratio rather than as a mechanism for demand reduction. Improving heat transfer shifts this perspective by directly lowering the energy required for cooling operations. This approach aligns efficiency improvements with tangible reductions in energy consumption.

Cooling systems consume energy in proportion to the effort required to remove heat from infrastructure. When heat transfer efficiency improves, the effort required decreases accordingly. This relationship creates an opportunity to reduce overall energy demand without compromising system functionality. Optimized heat transfer reduces the need for high-intensity cooling cycles and excessive airflow. Systems operate closer to their optimal efficiency range, which enhances performance consistency. This strategy transforms efficiency from a passive metric into an active tool for energy management.

Demand reduction through heat transfer optimization also supports scalability in high-growth environments. As workloads increase, efficient thermal management ensures that additional energy demand remains controlled. Systems can accommodate higher computational loads without proportional increases in cooling energy. This capability enhances operational flexibility and reduces long-term infrastructure costs. Maintaining efficient heat transfer becomes a critical factor in sustainable growth strategies. The approach integrates thermodynamics with energy management in a practical and measurable way.

Waste Heat as an Untapped Energy Asset

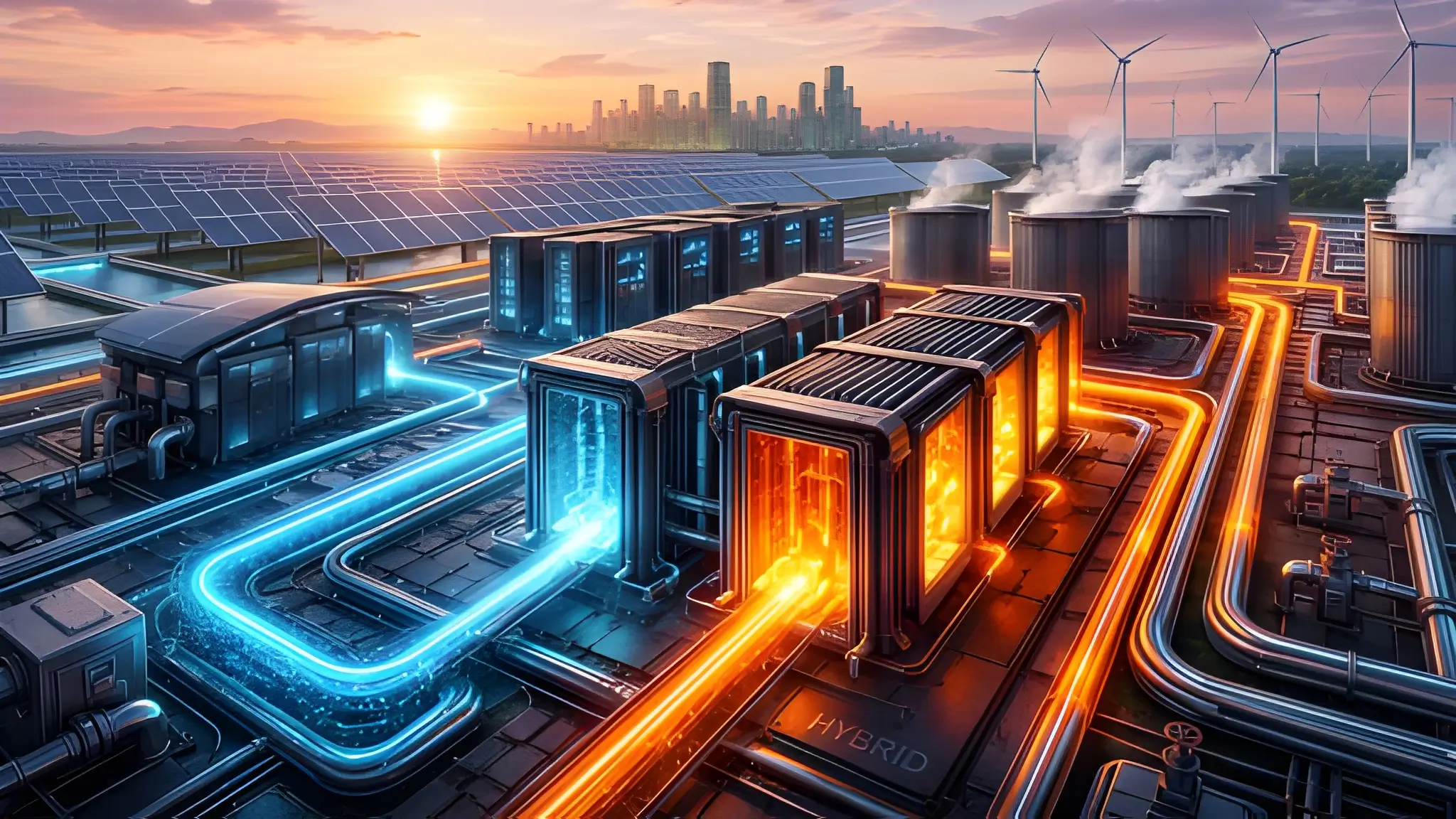

Waste heat represents a significant byproduct of data center operations, yet it often remains underutilized due to inefficient heat transfer processes. Efficient heat exchange enables the capture and redirection of thermal energy for secondary uses. This capability transforms waste heat from a liability into a valuable resource. Systems designed with optimized heat transfer can support applications such as district heating or industrial processes. The effectiveness of these applications depends on the quality and consistency of heat extraction. Improving heat transfer efficiency enhances the viability of waste heat recovery initiatives.
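The amount of heat available for recovery from a liquid loop follows from the sensible heat relation Q = m * cp * dT. The sketch below assumes a water loop with a specific heat of about 4.186 kJ/kg.K; the flow rate, the temperatures, and the function name are hypothetical example values rather than data from any facility.

```python
WATER_CP_KJ_PER_KG_K = 4.186  # approximate specific heat of liquid water


def recoverable_heat_kw(mass_flow_kg_s: float, supply_c: float,
                        return_c: float,
                        cp_kj_per_kg_k: float = WATER_CP_KJ_PER_KG_K) -> float:
    """Sensible heat (kW) released when the loop cools from supply_c
    to return_c across a recovery heat exchanger."""
    if mass_flow_kg_s < 0:
        raise ValueError("mass flow must be non-negative")
    return mass_flow_kg_s * cp_kj_per_kg_k * (supply_c - return_c)


if __name__ == "__main__":
    # Assumed example: 10 kg/s of water cooled from 45 C to 30 C.
    print(f"{recoverable_heat_kw(10.0, 45.0, 30.0):.1f} kW")
```

For the assumed 10 kg/s loop cooled by 15 K, the relation gives about 628 kW. Because the result scales directly with the temperature drop, any resistance that narrows the usable temperature difference reduces the recoverable heat by the same proportion.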

Heat recovery systems rely on stable thermal output to function effectively within integrated energy networks. Inconsistent or inefficient heat transfer reduces the reliability of recovered energy streams. This limitation discourages investment in recovery infrastructure and limits potential benefits. Enhancing heat transfer ensures that thermal energy remains accessible and usable for secondary applications. This approach aligns data center operations with broader energy sustainability goals. Waste heat utilization becomes feasible when thermal pathways operate at optimal efficiency levels.

The integration of waste heat recovery systems introduces additional considerations related to system design and operation. Efficient heat transfer supports seamless integration by providing consistent thermal output. This consistency enables reliable performance across interconnected systems. Operators can leverage recovered heat to offset energy demand in other areas, which improves overall efficiency. The success of these initiatives depends on maintaining high-quality heat exchange processes. Optimizing heat transfer unlocks the full potential of waste heat as an energy asset.

From Thermal Resistance to Energy Waste: Quantifying the Link

Thermal resistance directly influences the amount of energy required to move heat through a system. Increased resistance slows heat transfer and forces cooling systems to compensate through higher energy input. This relationship establishes a clear link between physical conditions and energy consumption patterns. Engineers can quantify this link by analyzing changes in system performance relative to thermal resistance levels. Even small increases in resistance can produce noticeable effects on energy demand. Understanding this relationship provides a foundation for targeted optimization efforts.

Heat transfer efficiency depends on maintaining low resistance across all interfaces within cooling systems. Deposits, surface degradation, and flow disruptions contribute to increased resistance over time. These factors reduce the effectiveness of heat exchange and require compensatory energy use. Systems operate under higher loads to achieve the same thermal outcomes, which increases overall consumption. Quantifying these effects enables more precise identification of inefficiencies. This approach supports data-driven decision-making in energy management strategies.
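One way to put a number on this resistance is to back it out of operating data: compute the observed overall coefficient from the heat duty, the exchanger area, and the log mean temperature difference, then compare it with the clean design value. This is a minimal sketch under stated assumptions. The function names and the example readings are hypothetical, and a real assessment would also need to account for flow rate changes and sensor uncertainty.

```python
import math


def lmtd(dt_one_end: float, dt_other_end: float) -> float:
    """Log mean temperature difference from the terminal differences (K)."""
    if dt_one_end <= 0 or dt_other_end <= 0:
        raise ValueError("terminal temperature differences must be positive")
    if math.isclose(dt_one_end, dt_other_end):
        return dt_one_end  # limit of the formula when both ends match
    return (dt_one_end - dt_other_end) / math.log(dt_one_end / dt_other_end)


def observed_u(duty_w: float, area_m2: float,
               dt_one_end: float, dt_other_end: float) -> float:
    """Overall coefficient (W/m^2.K) implied by measured duty and temperatures."""
    return duty_w / (area_m2 * lmtd(dt_one_end, dt_other_end))


def fouling_resistance(u_observed: float, u_clean: float) -> float:
    """Extra resistance (m^2.K/W) relative to the clean design coefficient."""
    return 1.0 / u_observed - 1.0 / u_clean


if __name__ == "__main__":
    # Assumed example readings: 500 kW duty, 50 m^2 area, 12 K and 6 K ends.
    u_now = observed_u(500_000.0, 50.0, 12.0, 6.0)
    print(f"observed U: {u_now:.0f} W/m^2.K")
    print(f"fouling resistance: {fouling_resistance(u_now, 2000.0):.6f} m^2.K/W")
```

Tracking the resulting resistance over time, rather than a single reading, gives a trend that can indicate when cleaning is likely to pay back.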

The translation of thermal resistance into energy waste highlights the importance of proactive maintenance and optimization. Addressing resistance at its source reduces the need for compensatory energy use across the system. This approach improves both efficiency and reliability within data center operations. Engineers can implement targeted interventions that restore optimal heat transfer conditions. These interventions deliver measurable reductions in energy consumption without requiring major infrastructure changes. The link between resistance and energy waste underscores the value of thermodynamic analysis in operational planning.

Energy Efficiency Begins at the Point of Heat Exchange

Energy efficiency in data centers often focuses on advanced technologies, yet the foundation of optimization lies in the efficiency of heat exchange processes. Cooling systems depend on effective heat transfer to operate at their intended performance levels. When this foundation weakens, even the most advanced technologies struggle to deliver expected outcomes. Restoring heat transfer efficiency addresses the root cause of energy inefficiency within these systems. This approach shifts the focus from expansion to optimization, which supports sustainable energy management practices. Understanding heat transfer as the primary lever transforms how operators approach energy optimization.

Thermodynamic principles provide a consistent framework for analyzing and improving system performance. Heat transfer efficiency serves as a measurable parameter that reflects the effectiveness of cooling operations. Improving this parameter reduces energy consumption while maintaining operational stability. Engineers can achieve these improvements through targeted maintenance and optimization strategies. This approach aligns operational practices with fundamental physical laws rather than relying solely on technological upgrades. Energy efficiency becomes a direct outcome of maintaining optimal thermal pathways.

The future of energy optimization in data centers depends on integrating thermodynamic analysis into everyday operations. Operators must prioritize heat transfer efficiency as a core component of system performance. This perspective enables more precise and effective management of energy consumption across infrastructure layers. Restoring and maintaining efficient heat exchange processes offers a scalable and sustainable pathway to optimization in many operational scenarios, though capacity expansion may still be required in constrained environments. The most impactful improvements often occur at the point where heat meets resistance. Energy efficiency begins not with cooling technology, but with the physics of heat transfer itself.