When Climate Stops Being the Deciding Factor

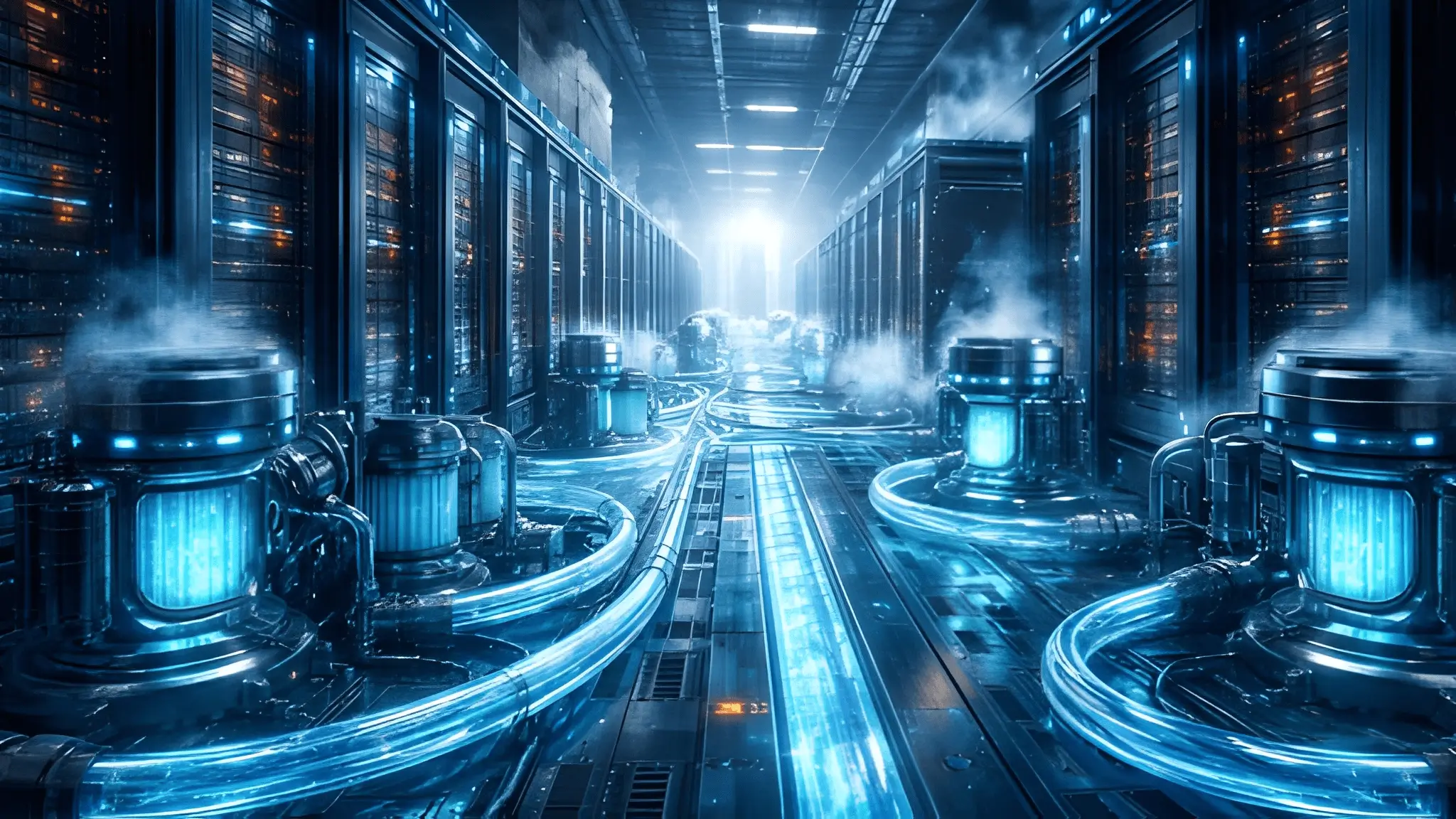

The geography of compute once followed temperature maps with near-mechanical predictability, as operators aligned deployments with colder regions to exploit ambient air for thermal efficiency. That logic anchored decades of infrastructure planning and shaped entire clusters in northern latitudes. Liquid cooling weakens that dependency by shifting thermal management from external conditions to controlled internal systems, although climate still influences efficiency outcomes. Engineers can now partially decouple cooling performance from outside air, keeping thermal regulation more consistent despite climate variability. This transition loosens one of the oldest constraints in site selection and introduces a new layer of design autonomy. Infrastructure planning now begins with system capability rather than environmental compromise.

Free cooling once defined efficiency benchmarks because it reduced mechanical intervention in thermal control systems. That advantage weakens when liquid cooling systems handle heat directly at the chip or rack level with higher precision. Direct-to-chip and immersion systems absorb thermal loads before heat disperses into ambient air, reducing reliance on outside temperature gradients. Cooling loops run as closed systems that keep performance more consistent across seasonal fluctuations. Operators depend far less on cold air availability to sustain efficiency at scale. Infrastructure planning evolves from climate exploitation to thermal engineering.

Thermal Density Redefines Priorities

Modern compute workloads, especially AI-driven systems, generate heat densities that air cooling struggles to handle effectively. Liquid cooling addresses this by enabling higher thermal transfer rates within compact footprints. That capability reduces the relevance of external environmental support systems. Designers prioritize internal cooling architecture over site-level climate advantages. High-density racks now operate efficiently in regions previously considered unsuitable. The shift reflects a broader move toward performance-led infrastructure planning.
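The gap in thermal transfer capability can be illustrated with the basic heat balance Q = m_dot * c_p * ΔT. The sketch below compares the volume of water and of air needed to carry the same heat away. The 100 kW rack and 10 K temperature rise are hypothetical inputs, and the fluid properties are approximate room-temperature values; it is an order-of-magnitude illustration, not a sizing tool.

```python
# Sketch: coolant flow needed to carry a rack's heat, using Q = m_dot * c_p * dT.
# Fluid properties are approximate textbook values near room temperature;
# the rack heat load and temperature rise are hypothetical inputs.

WATER_CP = 4186.0      # J/(kg*K), specific heat of water (approx.)
WATER_DENSITY = 997.0  # kg/m^3 (approx., ~25 C)
AIR_CP = 1005.0        # J/(kg*K), specific heat of dry air (approx.)
AIR_DENSITY = 1.2      # kg/m^3 (approx., sea level, ~20 C)


def volumetric_flow(heat_w: float, cp: float, density: float, delta_t: float) -> float:
    """Return the volumetric flow (m^3/s) needed to absorb heat_w watts
    with a coolant temperature rise of delta_t kelvin."""
    mass_flow = heat_w / (cp * delta_t)  # kg/s
    return mass_flow / density


if __name__ == "__main__":
    rack_heat_w = 100_000.0  # hypothetical high-density rack
    delta_t = 10.0           # hypothetical supply-to-return temperature rise

    water = volumetric_flow(rack_heat_w, WATER_CP, WATER_DENSITY, delta_t)
    air = volumetric_flow(rack_heat_w, AIR_CP, AIR_DENSITY, delta_t)
    print(f"water: {water * 1000:.2f} L/s")
    print(f"air:   {air:.2f} m^3/s")
    print(f"air needs ~{air / water:,.0f}x the volume flow of water")
```

Under these assumptions water carries the load with a few litres per second, while air needs several cubic metres per second, a difference of more than three orders of magnitude. That ratio is what lets liquid loops serve dense racks in compact footprints.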

Energy availability now shapes location strategy more decisively than ambient temperature ever did. Data centers increasingly depend on uninterrupted power supply to sustain high-density workloads and cooling systems simultaneously. Liquid cooling systems require stable energy input to maintain circulation, heat exchange, and control mechanisms. Operators evaluate grid resilience, energy scalability, and long-term supply reliability as primary site selection criteria. Climate advantages fade when power infrastructure determines operational continuity. Site selection transforms into an energy-first equation driven by infrastructure robustness.

Power grids now function as foundational components of data center ecosystems rather than external dependencies. Liquid cooling systems integrate tightly with electrical infrastructure, requiring consistent load handling and redundancy planning. Engineers assess grid stability, transmission capacity, and proximity to generation sources during site evaluation. Regions with strong energy frameworks attract deployments regardless of climatic conditions. The shift reflects a deeper integration between compute infrastructure and energy systems. Location decisions align with power availability rather than weather patterns.

Cooling Efficiency Meets Power Demand

Liquid cooling reduces airflow requirements but increases reliance on pumps, heat exchangers, and control systems. These components demand stable electrical input to maintain performance consistency. Operators balance cooling efficiency gains with power consumption profiles to optimize overall system design. Energy becomes both a constraint and an enabler in infrastructure planning. The interplay between cooling and power reshapes traditional efficiency metrics. Site selection evolves toward integrated energy and cooling strategies.
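The electrical side of that trade-off can be estimated with the standard hydraulic relation P = Q * Δp / η (flow times pressure drop, divided by efficiency). The sketch below uses a hypothetical loop: the flow, pressure drop, efficiency, and heat load are illustrative assumptions, and real designs work from manufacturer pump curves and measured loop losses.

```python
# Sketch: electrical power a coolant pump draws, P = Q * dp / eta.
# All inputs are hypothetical; real loops are sized from manufacturer
# pump curves and measured pressure drops.


def pump_power_w(flow_m3_s: float, pressure_drop_pa: float, efficiency: float) -> float:
    """Electrical input power (W) for a pump moving flow_m3_s against
    pressure_drop_pa, with combined pump/motor efficiency in (0, 1]."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return flow_m3_s * pressure_drop_pa / efficiency


if __name__ == "__main__":
    flow = 0.0024            # m^3/s, about 2.4 L/s (hypothetical loop)
    dp = 150_000.0           # Pa, hypothetical loop pressure drop (1.5 bar)
    eta = 0.6                # hypothetical combined pump and motor efficiency
    heat_load_w = 100_000.0  # hypothetical rack heat removed by this loop

    p = pump_power_w(flow, dp, eta)
    print(f"pump draw: {p:.0f} W ({p / heat_load_w:.1%} of the heat load)")
```

The draw is small relative to the heat moved, but it is continuous: if the pump loses power, circulation and therefore heat removal stop, which is why stable electrical input matters as much as the efficiency figure.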

Liquid cooling expands the geographical footprint of data center deployments by enabling operations in diverse climates. Regions previously excluded due to high ambient temperatures now support high-density compute infrastructure. Engineers design systems that keep thermal stability largely independent of external environmental conditions. This capability opens new markets and redistributes infrastructure across broader geographic zones. Location strategy shifts from limitation to opportunity as cooling independence increases. The data center map evolves toward a more distributed and flexible model.

Unlocking New Regions

Warm and humid regions once posed significant challenges for traditional cooling systems. Liquid cooling mitigates these challenges by isolating heat transfer processes from ambient air conditions. Operators deploy infrastructure in areas with strong connectivity and power availability, facing far fewer climate constraints than air cooling imposed. This expansion supports regional compute demand and reduces latency for end users. Infrastructure distribution becomes more aligned with demand patterns rather than environmental limitations. The shift reflects a broader transformation in global compute architecture.

Decentralizing Compute Infrastructure

Cooling independence supports decentralized deployment strategies that bring compute closer to users. Edge computing environments benefit from liquid cooling due to compact design and high efficiency. Operators build smaller facilities in diverse locations without relying on favorable climates. This approach enhances responsiveness and reduces dependency on centralized hubs. Infrastructure planning becomes more granular and adaptive. The data center ecosystem moves toward distributed resilience.

Infrastructure design now focuses on managing heat generation rather than avoiding it through location choices. Liquid cooling systems enable engineers to address thermal challenges directly within the hardware environment. Designers integrate cooling solutions into system architecture from the outset. This approach contrasts with traditional strategies that relied on external conditions to mitigate heat. Engineering becomes the primary tool for thermal management. Site selection reflects design capability rather than environmental compromise.

Engineering-First Thermal Strategies

Engineers now prioritize thermal pathways, fluid dynamics, and heat exchange efficiency in system design. Liquid cooling systems operate with precise control over temperature gradients and flow rates. This precision allows consistent performance across varying environmental conditions. Designers optimize cooling loops to match workload demands and hardware configurations. Thermal management becomes a core engineering discipline within infrastructure planning. The shift elevates design complexity and capability.

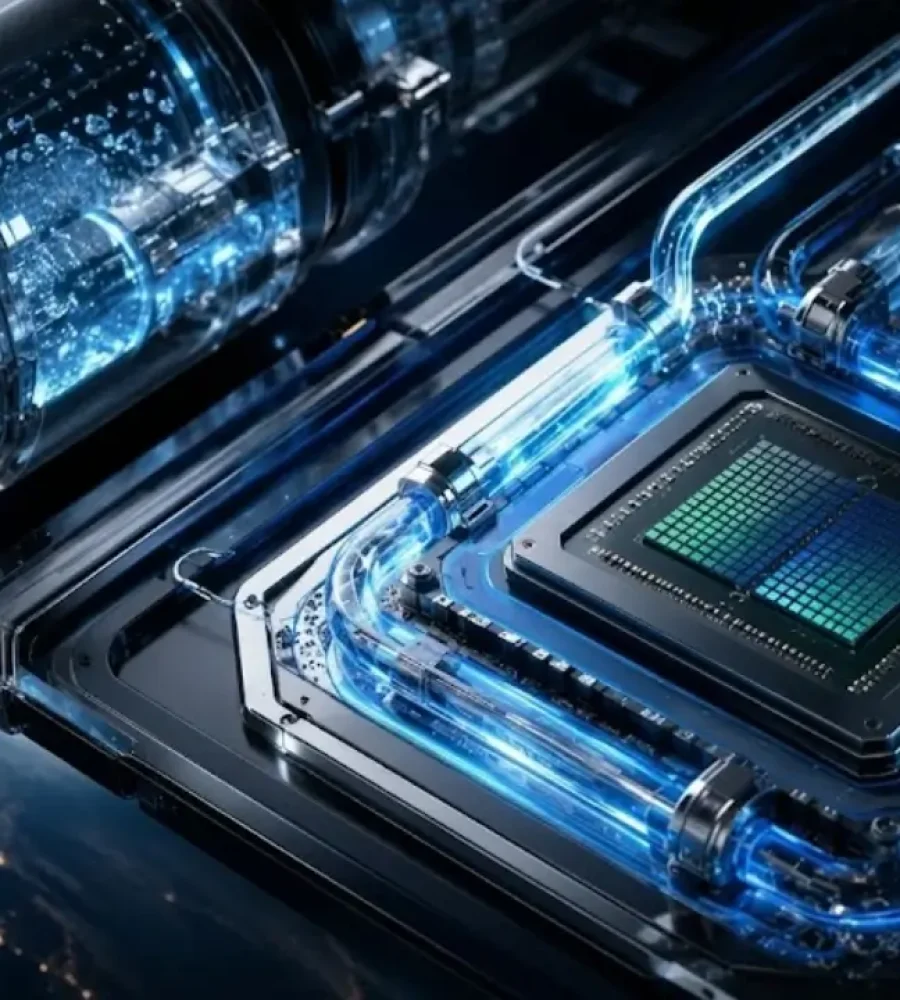

Integration with Hardware Design

Liquid cooling integrates directly with processors, GPUs, and memory systems to manage heat at the source. This integration reduces thermal resistance and improves efficiency. Hardware manufacturers design components with liquid cooling compatibility in mind. Infrastructure planning aligns with hardware capabilities rather than external conditions. The relationship between cooling and compute becomes more tightly coupled. System performance benefits from this integration.

Cooling strategies now shift from managing airflow to controlling fluid dynamics within closed systems. This transition changes the infrastructure requirements for data center design and operation. Liquid cooling systems rely on pumps, piping, and heat exchangers rather than large-scale air circulation systems. Engineers design facilities with different spatial and mechanical considerations. Site selection reflects these new requirements and constraints. The shift represents a fundamental reset in infrastructure planning.

Infrastructure Transformation

Facilities designed for liquid cooling require different layouts, materials, and operational practices. Engineers plan for fluid distribution networks and heat rejection systems within the building structure. This transformation affects construction timelines and design methodologies. Infrastructure becomes more specialized and performance-driven. Site selection aligns with the ability to support these systems effectively. The evolution reflects a deeper integration of engineering principles into location strategy.

Liquid cooling systems change maintenance practices and operational workflows within data centers. Operators monitor fluid quality, flow rates, and system integrity to ensure consistent performance. This approach requires new skill sets and operational frameworks. Infrastructure planning includes considerations for maintenance accessibility and system resilience. Site selection incorporates these operational requirements. The shift reflects a broader transformation in data center management.

Hot Climates, Cool Compute: Breaking the Geography Myth

High ambient temperatures once imposed strict limitations on data center deployment, as air cooling systems struggled to maintain efficiency under thermal stress. Liquid cooling reduces that constraint by isolating thermal exchange within engineered systems that remain less affected by external heat levels, though ambient conditions still influence overall efficiency. Operators now deploy infrastructure in regions where temperature variability once disrupted performance consistency. This shift reframes climate from a primary limiting factor into one of several considerations within broader infrastructure planning. Cooling resilience enables sustained performance even in environments with prolonged heat exposure. Infrastructure expansion now aligns with demand and capability rather than climate restrictions.

Thermal Stability in Extreme Environments

Liquid cooling systems maintain stable operating conditions by managing heat transfer directly at the component level. Coolant absorbs thermal energy efficiently and transports it away without reliance on ambient air circulation. Engineers design systems to function consistently across a wide range of environmental conditions. This stability supports deployments in regions with high temperature fluctuations. Infrastructure performance becomes more predictable and far less sensitive to external climate. The shift strengthens confidence in non-traditional deployment regions.

Redefining Environmental Constraints

Traditional environmental constraints lose relevance as liquid cooling systems largely decouple performance from external conditions. Operators evaluate locations based on infrastructure readiness rather than temperature suitability. Regions previously considered high-risk now present viable opportunities for high-density deployments. This redefinition expands the global footprint of data center infrastructure. Planning strategies evolve to incorporate broader geographic possibilities. The industry moves toward a more flexible and inclusive approach to site selection.

Energy infrastructure now functions as the central determinant of data center viability, replacing climate as the dominant constraint. Liquid cooling systems depend on reliable power to sustain pumps, monitoring systems, and heat exchange processes. Operators prioritize proximity to strong electrical grids and generation sources during site evaluation. This shift elevates energy infrastructure from a supporting role to a primary design consideration. Cooling performance now aligns with energy availability rather than environmental conditions. Site selection becomes intrinsically linked to power ecosystem strength.

Power Availability as Thermal Backbone

Liquid cooling systems transform electrical energy into thermal management capability through continuous fluid circulation. Engineers design systems that rely on uninterrupted power to maintain cooling efficiency. Any disruption in energy supply directly impacts thermal stability and system performance. Operators therefore prioritize regions with robust and resilient power infrastructure. This dependency redefines the relationship between cooling and energy systems. Infrastructure planning integrates power and cooling into a unified framework.
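To put a rough number on that dependency, the sketch below estimates how long a stored volume of coolant could absorb a facility's heat if the heat-rejection plant stopped, using t = m * c_p * ΔT / P. It assumes the pumps themselves stay on backup power and keep circulating the buffer; the function name, buffer volume, allowable temperature rise, and heat load are all hypothetical.

```python
# Sketch: thermal ride-through from a coolant buffer, t = m * c_p * dT / P.
# Assumes pumps stay on backup power and keep circulating the buffer while
# heat rejection is offline. All inputs are hypothetical illustrations.

WATER_CP = 4186.0      # J/(kg*K), approximate
WATER_DENSITY = 997.0  # kg/m^3, approximate (~25 C)


def ride_through_seconds(buffer_m3: float, allowable_rise_k: float, heat_load_w: float) -> float:
    """Seconds until the buffered coolant warms by allowable_rise_k under heat_load_w."""
    stored_j = buffer_m3 * WATER_DENSITY * WATER_CP * allowable_rise_k
    return stored_j / heat_load_w


if __name__ == "__main__":
    t = ride_through_seconds(buffer_m3=2.0, allowable_rise_k=8.0, heat_load_w=500_000.0)
    print(f"ride-through: {t:.0f} s (~{t / 60:.1f} min)")
```

Even a sizeable buffer buys only minutes at high load under these assumptions, and none at all if circulation stops. That is one reason designs often place pumps and controls on uninterruptible power.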

Grid Proximity Over Climate Advantage

Site selection increasingly favors locations near energy generation and transmission hubs. Operators evaluate grid capacity, redundancy, and scalability as primary factors in deployment decisions. Climate advantages no longer outweigh the importance of reliable power access. This shift reflects a broader transformation in infrastructure priorities. Data centers align more closely with energy ecosystems than environmental conditions. Geography evolves into an energy-centric consideration.

Data center site selection now reflects a multi-variable engineering challenge rather than a climate-driven decision. Engineers analyze factors such as power availability, connectivity, structural feasibility, and cooling system integration. Liquid cooling enables more precise control over thermal conditions, reducing but not eliminating dependence on environmental factors. This shift introduces a more analytical and data-driven approach to location strategy. Site evaluation increasingly focuses on optimizing infrastructure compatibility alongside environmental considerations rather than relying primarily on favorable climates. The transformation elevates engineering as the primary driver of decision-making.

Data-Driven Location Analysis

Modern site selection incorporates advanced modeling and simulation techniques to evaluate infrastructure performance. Engineers assess thermal behavior, energy consumption, and system resilience under various scenarios. Liquid cooling systems provide predictable performance metrics that support accurate modeling. This capability enhances decision-making and reduces uncertainty in site evaluation. Infrastructure planning becomes more precise and evidence-based. The approach reflects a shift toward analytical rigor in location strategy.

Infrastructure Compatibility as Key Metric

Site viability now depends on the ability to support complex cooling and power systems. Engineers evaluate structural capacity, water availability for heat rejection, and integration with existing infrastructure. Liquid cooling systems require specific conditions that influence site selection criteria. Operators prioritize compatibility over environmental advantages. This focus ensures efficient deployment and long-term operational stability. Infrastructure alignment becomes the defining factor in location decisions.

Liquid cooling can support more standardized deployments by reducing the need for climate-specific design adaptations, although deployment speed also depends on regulatory, supply chain, and infrastructure factors. Operators can standardize infrastructure across diverse regions without extensive modifications for environmental conditions. This capability enables faster expansion into markets that were previously overlooked. Deployment strategies become more agile and responsive to demand. Infrastructure rollout aligns with business objectives rather than environmental constraints. The shift supports rapid growth in emerging regions.

Standardization Across Regions

Liquid cooling systems allow consistent design frameworks that apply across multiple geographic locations. Engineers can replicate proven configurations with fewer adaptations to local climate variations, although heat rejection equipment is still sized for local conditions. This standardization can simplify construction processes and reduce complexity in certain deployment scenarios. Operators can achieve faster deployment cycles and improved scalability. Infrastructure planning becomes more efficient and predictable. The approach enhances global expansion strategies.

Unlocking Emerging Markets

Regions with growing digital demand but challenging climates now attract data center investments. Liquid cooling lowers barriers that previously limited infrastructure development in these areas. Operators deploy facilities closer to end users, improving service delivery and latency. This expansion supports regional economic growth and digital transformation. Infrastructure distribution becomes more balanced and inclusive. The shift reflects a broader democratization of compute resources.

AI workloads introduce new requirements for data center infrastructure, including higher power density and advanced cooling systems. Liquid cooling supports these requirements by enabling efficient thermal management at scale. Operators adopt industrial planning methodologies to optimize site selection for AI deployments. This approach emphasizes throughput, scalability, and infrastructure integration. Location strategy evolves into a structured and repeatable process. The shift reflects the increasing complexity of modern compute environments.

Throughput-Centric Planning

AI infrastructure demands continuous and high-volume data processing capabilities. Engineers design systems to maximize throughput while maintaining thermal stability. Liquid cooling enables higher density deployments that support these requirements. Site selection prioritizes locations that can sustain intensive workloads. Infrastructure planning aligns with performance objectives rather than environmental conditions. The approach enhances operational efficiency.

Scalability as Core Requirement

Operators design data centers with scalability in mind to accommodate growing AI workloads. Liquid cooling systems support modular expansion without significant redesign. Engineers evaluate sites based on their ability to support future growth. This focus ensures long-term viability and adaptability. Infrastructure planning becomes forward-looking and flexible. The shift reflects the dynamic nature of AI-driven demand.

Does Geography Still Matter—or Just Matter Differently?

Geography continues to influence data center site selection, but its role has evolved significantly. Climate no longer dominates decision-making as liquid cooling systems mitigate environmental constraints. Operators now consider factors such as power availability, connectivity, regulatory environment, and proximity to users. This multi-variable approach redefines the concept of location advantage. Geography becomes a composite of infrastructure and operational considerations. The shift reflects a more nuanced understanding of site selection.

Modern site selection involves balancing multiple factors that influence infrastructure performance and efficiency. Engineers evaluate power, connectivity, latency, and regulatory frameworks alongside cooling capabilities. Liquid cooling reduces the weight of climate in this equation. Operators prioritize locations that offer the best combination of these variables. Infrastructure planning becomes more complex and strategic. The approach enhances decision-making accuracy.
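One simple way to make that balance explicit is a weighted scoring model. The sketch below is illustrative only: the criteria, weights, site names, and 0-10 scores are hypothetical, and real evaluations typically apply hard constraints (for example, a minimum grid capacity) before any scoring.

```python
# Sketch: weighted multi-criteria scoring of candidate sites.
# Criteria names, weights, and 0-10 scores are all hypothetical; in practice
# they would come from grid studies, fiber maps, and permitting reviews.

from dataclasses import dataclass

WEIGHTS = {
    "power": 0.35,
    "connectivity": 0.25,
    "latency_to_users": 0.15,
    "regulatory": 0.15,
    "climate": 0.10,  # still counted, but weighted below power and connectivity
}


@dataclass
class Site:
    name: str
    scores: dict  # criterion -> score on a 0-10 scale


def weighted_score(site: Site, weights: dict = WEIGHTS) -> float:
    """Weighted average of criterion scores; a missing criterion raises KeyError."""
    total_weight = sum(weights.values())
    return sum(site.scores[c] * w for c, w in weights.items()) / total_weight


def rank_sites(sites: list, weights: dict = WEIGHTS) -> list:
    """Return (name, score) pairs sorted from best to worst."""
    ranked = [(s.name, weighted_score(s, weights)) for s in sites]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    candidates = [
        Site("cool_remote", {"power": 5, "connectivity": 4, "latency_to_users": 3,
                             "regulatory": 7, "climate": 9}),
        Site("warm_metro", {"power": 9, "connectivity": 9, "latency_to_users": 9,
                            "regulatory": 6, "climate": 3}),
    ]
    for name, score in rank_sites(candidates):
        print(f"{name}: {score:.2f}")
```

With climate weighted low, the hypothetical warm metro site outranks the cool remote one despite its poor climate score. Changing the weights shows how sensitive a ranking is to the priorities an operator chooses.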

Regulatory and Connectivity Factors

Regulatory environments and network connectivity play critical roles in site selection. Operators assess compliance requirements, data sovereignty laws, and connectivity infrastructure. Liquid cooling allows flexibility in choosing locations that meet these criteria. This capability expands the range of viable deployment regions. Infrastructure planning aligns with both technical and regulatory considerations. The shift reflects the evolving landscape of global data infrastructure.

Thermal management no longer remains confined within the walls of the data center as liquid cooling introduces a broader systems perspective. Engineers now consider how heat rejection integrates with surrounding infrastructure and environmental systems. Cooling loops extend beyond internal circulation to interact with external heat exchange mechanisms. This approach creates a more dynamic relationship between the facility and its location. Site planning includes evaluating how effectively heat can be transferred, reused, or dissipated within the local ecosystem. Infrastructure decisions now reflect a convergence of thermal engineering and regional capability.

External Heat Rejection Strategies

Liquid cooling systems rely on efficient heat rejection processes to maintain internal thermal balance. Engineers design systems that transfer heat from coolant loops to external exchangers such as cooling towers or dry coolers. These exchangers still depend on outdoor conditions: evaporative cooling towers are limited by wet-bulb temperature and dry coolers by dry-bulb temperature. Because liquid loops can run at higher coolant temperatures than the chilled water typical of air-cooled facilities, however, heat can often be rejected without mechanical chilling across a wider range of climates, which adds flexibility in site selection. Operators evaluate locations based on their ability to support these external systems effectively. Heat rejection becomes a localized engineering challenge rather than a hard climate limitation. Infrastructure planning incorporates these requirements into site evaluation.
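A minimal sketch of why coolant temperature matters for heat rejection, assuming a dry cooler can only deliver coolant within a fixed approach of the outdoor dry-bulb temperature. The function name, the hourly temperatures, the 5 K approach, and the supply setpoints are hypothetical; the model ignores the extra approach of any intermediate heat exchanger.

```python
# Sketch: which hours a dry cooler alone can meet a coolant supply setpoint.
# Simplifying assumption: the dry cooler delivers coolant no colder than
# ambient dry-bulb + a fixed approach temperature. Setpoints, approach, and
# the temperature series are hypothetical.


def dry_cooling_hours(ambient_c: list, supply_setpoint_c: float, approach_k: float) -> int:
    """Count hours where ambient + approach <= required supply temperature."""
    return sum(1 for t in ambient_c if t + approach_k <= supply_setpoint_c)


if __name__ == "__main__":
    # Hypothetical hourly dry-bulb readings (deg C) for a hot-climate day
    hot_day = [28, 27, 27, 26, 26, 27, 29, 31, 33, 35, 37, 38,
               39, 40, 40, 39, 38, 36, 34, 32, 31, 30, 29, 28]
    approach = 5.0  # K, hypothetical dry-cooler approach

    for setpoint in (18.0, 32.0, 45.0):  # chilled-water style vs warm-water loops
        hours = dry_cooling_hours(hot_day, setpoint, approach)
        print(f"supply {setpoint:.0f} C: {hours}/24 h without mechanical chilling")
```

On this hypothetical hot day, a cold 18 C supply needs chillers around the clock, while a 45 C warm-water loop can reject all its heat through dry coolers. Ambient temperature still matters; raising the loop temperature changes how much.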

Heat Reuse and Regional Integration

Recovered heat from liquid cooling systems presents opportunities for integration with local energy systems. Engineers design facilities that channel waste heat into district heating networks or industrial processes. This capability adds a new dimension to site selection by aligning infrastructure with regional energy use. Operators consider how effectively heat can be reused within the surrounding environment. This integration enhances overall system efficiency and sustainability. Location strategy evolves to include thermal symbiosis with local ecosystems.
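A rough annual estimate helps judge whether a site's reuse opportunity is meaningful. The sketch below assumes, hypothetically, that nearly all IT power becomes heat, that a fixed fraction is captured by the liquid loop, and that a local network could take it; the load, utilization, capture fraction, and demand figures are illustrative, not measured.

```python
# Sketch: annual heat a liquid-cooled facility could offer a reuse network.
# Hypothetical assumptions: nearly all IT power becomes heat, a fixed fraction
# is captured by the liquid loop, and the network can take it when available.

HOURS_PER_YEAR = 8760


def reusable_heat_mwh(it_load_mw: float, utilization: float, capture_fraction: float) -> float:
    """Recoverable heat in MWh per year."""
    return it_load_mw * utilization * capture_fraction * HOURS_PER_YEAR


def demand_coverage(supply_mwh: float, demand_mwh: float) -> float:
    """Share of a network's annual heat demand the facility could cover (capped at 1)."""
    return min(1.0, supply_mwh / demand_mwh)


if __name__ == "__main__":
    supply = reusable_heat_mwh(it_load_mw=5.0, utilization=0.7, capture_fraction=0.7)
    local_demand = 40_000.0  # hypothetical district heating demand, MWh/year
    print(f"recoverable heat: {supply:,.0f} MWh/year")
    print(f"covers {demand_coverage(supply, local_demand):.0%} of local demand")
```

An annual total hides the seasonal mismatch between steady data center output and heating demand that peaks in winter, so a real assessment would compare hourly or monthly profiles.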

Water management now plays a central role in many data center designs, since liquid cooling systems depend on controlled fluid circulation that often runs alongside existing air-based approaches. Engineers evaluate water availability, quality, and treatment requirements during site selection. This shift introduces new considerations that complement and, in some cases, partially replace traditional airflow-based strategies. Infrastructure planning integrates water systems into the core design of the facility. Operators prioritize locations that support sustainable water use and efficient cooling loops. The transition reflects a broader redefinition of resource dependency in data center operations.

Closed-Loop Cooling Architectures

Liquid cooling systems often operate within closed-loop configurations that minimize water consumption and environmental impact. Engineers design these systems to recycle coolant efficiently while maintaining thermal performance. This approach reduces dependence on continuous water supply from external sources. Operators evaluate sites based on their ability to support closed-loop infrastructure. Water strategy becomes a function of engineering design rather than resource abundance. Infrastructure planning aligns with sustainability objectives.
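The difference in water dependence can be sized with the latent heat of evaporation. The sketch below gives an approximate upper bound on the water an open evaporative cooling tower evaporates when all heat leaves as latent heat, compared with a closed loop rejecting heat through dry coolers, which evaporates essentially none. The 1 MW load is hypothetical, the latent heat is taken as roughly 2.45 MJ/kg (water near 20 C), and tower blowdown, drift, and sensible heat transfer are ignored.

```python
# Sketch: approximate upper bound on water evaporated when heat is rejected
# entirely as latent heat (open cooling tower), versus a closed loop with dry
# coolers, which evaporates essentially none.
# Simplifications: latent heat ~2.45 MJ/kg (water near 20 C); blowdown,
# drift, and sensible heat transfer in the tower are ignored.

LATENT_HEAT_J_PER_KG = 2.45e6
SECONDS_PER_HOUR = 3600


def evaporation_m3_per_hour(heat_rejected_w: float) -> float:
    """Water evaporated (m^3/h, taking 1000 kg/m^3) if all heat leaves as latent heat."""
    kg_per_s = heat_rejected_w / LATENT_HEAT_J_PER_KG
    return kg_per_s * SECONDS_PER_HOUR / 1000.0


if __name__ == "__main__":
    load_w = 1_000_000.0  # hypothetical 1 MW of rejected heat
    print(f"evaporative tower: ~{evaporation_m3_per_hour(load_w):.2f} m^3/h")
    print("closed loop + dry cooler: ~0 m^3/h evaporated")
```

Roughly 1.5 cubic metres per hour per megawatt, sustained year-round, is the kind of figure that turns water availability into a site selection criterion; closed loops with dry heat rejection trade that water for larger heat exchangers and more fan energy.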

Water Quality and System Integrity

Fluid quality directly influences the performance and longevity of liquid cooling systems. Engineers implement filtration, treatment, and monitoring processes to maintain system integrity. Site selection includes assessing local water conditions and potential treatment requirements. Operators prioritize locations that minimize risks associated with contamination or corrosion. This focus ensures reliable operation and reduces maintenance complexity. Infrastructure planning incorporates water quality as a critical factor.

Facility design increasingly adapts to support liquid cooling systems rather than relying primarily on environmental conditions to manage heat. Engineers focus on structural integrity, load distribution, and spatial configuration to accommodate advanced cooling infrastructure. This shift transforms how data centers are built and operated. Site selection includes evaluating the feasibility of constructing facilities that support these requirements. Infrastructure planning becomes more aligned with engineering capabilities than environmental advantages. The evolution reflects a deeper integration of design and performance.

Load-Bearing and Spatial Considerations

Liquid cooling systems introduce additional weight and structural requirements due to fluid distribution networks and equipment. Engineers design facilities to support these loads without compromising safety or performance. Site evaluation includes assessing soil stability, building materials, and construction feasibility. Operators prioritize locations that enable efficient structural design. Infrastructure planning integrates these considerations into site selection. The shift emphasizes engineering precision in facility development.

Modular and Scalable Construction

Liquid cooling supports modular construction approaches that enable incremental expansion of data center capacity. Engineers design facilities with scalability in mind to accommodate future growth. This approach reduces construction complexity and accelerates deployment timelines. Operators evaluate sites based on their ability to support modular infrastructure. Infrastructure planning becomes more flexible and adaptive. The evolution reflects changing demands in data center development.

As climate constraints diminish, proximity to users and network infrastructure becomes a more significant factor in site selection. Operators prioritize locations that minimize latency and enhance service delivery. Liquid cooling enables deployments in regions closer to demand centers with fewer thermal limitations. This shift supports the growth of edge computing and distributed infrastructure. Site selection aligns with connectivity and user proximity rather than environmental conditions. The evolution reflects changing priorities in data center strategy.

Network Infrastructure Integration

Connectivity plays a critical role in determining the effectiveness of data center deployments. Engineers evaluate proximity to fiber networks, exchange points, and communication hubs. Liquid cooling allows flexibility in choosing locations that optimize connectivity. Operators align infrastructure with network ecosystems to enhance performance. Site selection becomes a function of connectivity rather than climate. Infrastructure planning integrates these considerations.

Edge Deployment Acceleration

Compact, high-efficiency liquid cooling suits the small footprints of edge sites. Operators deploy smaller facilities closer to end users to reduce latency. This approach enhances responsiveness and supports real-time applications. Liquid cooling enables these deployments in diverse geographic locations. Infrastructure planning becomes more decentralized and adaptive. The shift reflects the growing importance of edge computing.

Risk assessment in data center site selection increasingly focuses on infrastructure reliability alongside environmental conditions. Operators evaluate risks associated with power supply, water systems, and structural integrity. Liquid cooling reduces certain exposure to climate-related risks by providing more controlled thermal management, though environmental risks remain relevant. This shift changes how operators approach resilience and contingency planning. Site selection incorporates a broader range of risk factors. Infrastructure planning becomes more comprehensive and strategic.

Infrastructure Resilience

Resilience now depends on the robustness of power, cooling, and connectivity systems. Engineers design infrastructure to withstand disruptions and maintain operational continuity. Liquid cooling systems contribute to resilience by providing consistent thermal management. Operators prioritize locations with reliable infrastructure networks. Site selection aligns with resilience objectives. The shift reflects evolving risk management strategies.

Redundancy and Failover Design

Redundancy remains a critical component of data center design, ensuring continuous operation during system failures. Engineers implement backup systems for power and cooling to maintain performance. Liquid cooling systems integrate with redundancy frameworks to enhance reliability. Operators evaluate sites based on their ability to support these systems. Infrastructure planning incorporates failover strategies into site selection. The approach strengthens operational resilience.
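The value of an extra unit can be quantified with a simple k-out-of-n availability model. The sketch below compares a pump group that needs both of two pumps running (N) with one that needs two of three (N+1). It assumes independent failures and identical unit availability, and the 0.99 figure is hypothetical; common-cause failures such as a shared power feed are not captured.

```python
# Sketch: availability of a group that needs `required` of `installed` units,
# assuming independent failures and identical per-unit availability.

from math import comb


def k_of_n_availability(required: int, installed: int, unit_availability: float) -> float:
    """Probability that at least `required` of `installed` units are available."""
    a = unit_availability
    return sum(comb(installed, k) * a**k * (1 - a) ** (installed - k)
               for k in range(required, installed + 1))


if __name__ == "__main__":
    a = 0.99  # hypothetical availability of a single pump
    print(f"N   (2 of 2): {k_of_n_availability(2, 2, a):.6f}")
    print(f"N+1 (2 of 3): {k_of_n_availability(2, 3, a):.6f}")
```

Under these assumptions one spare pump cuts the expected unavailable time by more than an order of magnitude, which is why redundancy planning extends to the cooling loop and not only to power.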

Liquid cooling is beginning to influence the economic dynamics of data center development by altering cost structures and investment priorities. Operators allocate resources toward advanced cooling systems and energy infrastructure rather than climate-based advantages. This shift changes how projects are evaluated and financed. Site selection reflects cost efficiency in infrastructure deployment and operation. Economic models are gradually evolving to align with new technological capabilities. The transformation reshapes the financial landscape of data center development.

Capital Allocation Priorities

Investment strategies now prioritize infrastructure components that support high-density computing and efficient cooling. Liquid cooling systems require upfront capital but can deliver long-term operational benefits. Operators evaluate sites based on their ability to support these investments. Infrastructure planning aligns with financial objectives. The shift reflects changing priorities in capital allocation. Economic considerations become more closely tied to engineering capabilities.

Operational Cost Optimization

Liquid cooling systems can improve energy efficiency and lower operational costs over time. Engineers design systems to minimize energy consumption while maintaining performance. Operators evaluate sites based on their potential for cost optimization. Infrastructure planning incorporates long-term financial considerations. The approach enhances sustainability and profitability. Economic models adapt to new technological realities.
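A common way to frame these savings is Power Usage Effectiveness (PUE), defined as total facility energy divided by IT equipment energy, so the non-IT overhead is IT energy times (PUE - 1). The sketch below compares two assumed PUE values for a hypothetical 1 MW IT load at a hypothetical electricity price; the numbers illustrate the arithmetic, not typical results for any particular cooling technology.

```python
# Sketch: annual facility overhead energy cost implied by PUE,
# where PUE = total facility energy / IT equipment energy.
# IT load, PUE values, and electricity price are hypothetical.

HOURS_PER_YEAR = 8760


def annual_overhead_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Cost of non-IT energy (cooling, power conversion, etc.) over one year."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    overhead_kwh = it_load_kw * (pue - 1.0) * HOURS_PER_YEAR
    return overhead_kwh * price_per_kwh


if __name__ == "__main__":
    it_kw = 1_000.0  # hypothetical 1 MW IT load
    price = 0.08     # hypothetical price per kWh
    baseline = annual_overhead_cost(it_kw, 1.5, price)  # assumed baseline PUE
    improved = annual_overhead_cost(it_kw, 1.2, price)  # assumed improved PUE
    print(f"annual overhead savings: {baseline - improved:,.0f} per year")
```

Because the saving scales with IT load, hours, and local energy price, the same efficiency gain is worth more at a large site with expensive power, which ties cooling choices back to site economics.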

The Shift from Climate Advantage to Infrastructure Advantage

The evolution of liquid cooling marks a structural shift in how data centers approach location strategy, moving away from climate-driven decisions toward infrastructure-led planning. Operators now prioritize power availability, engineering capability, and system integration while still accounting for environmental conditions. Liquid cooling enables more consistent performance across diverse geographies, reducing but not removing dependence on traditional climate advantages. This transformation expands the global footprint of data center infrastructure and supports more distributed deployment models. Geography continues to matter, but its influence now reflects a broader set of variables that define operational success. The industry enters a phase where infrastructure advantage replaces climate advantage as the defining factor in site selection.

Redefining Location Advantage

Location advantage now depends on the ability to support advanced infrastructure systems rather than favorable environmental conditions. Engineers design data centers to operate efficiently across a wide range of climates. Operators evaluate sites based on infrastructure readiness and long-term scalability. This shift reflects a deeper integration of engineering and planning processes. Infrastructure capability becomes the primary determinant of success. The concept of geography evolves accordingly.

The Future of Site Selection

Future site selection strategies will continue to emphasize infrastructure integration and performance optimization. Liquid cooling will play a central role in enabling this transformation. Operators will adopt more analytical and data-driven approaches to location planning. This evolution will support the growing demands of AI and high-performance computing. Infrastructure planning will become increasingly sophisticated and adaptive. The shift represents a new era in data center development.