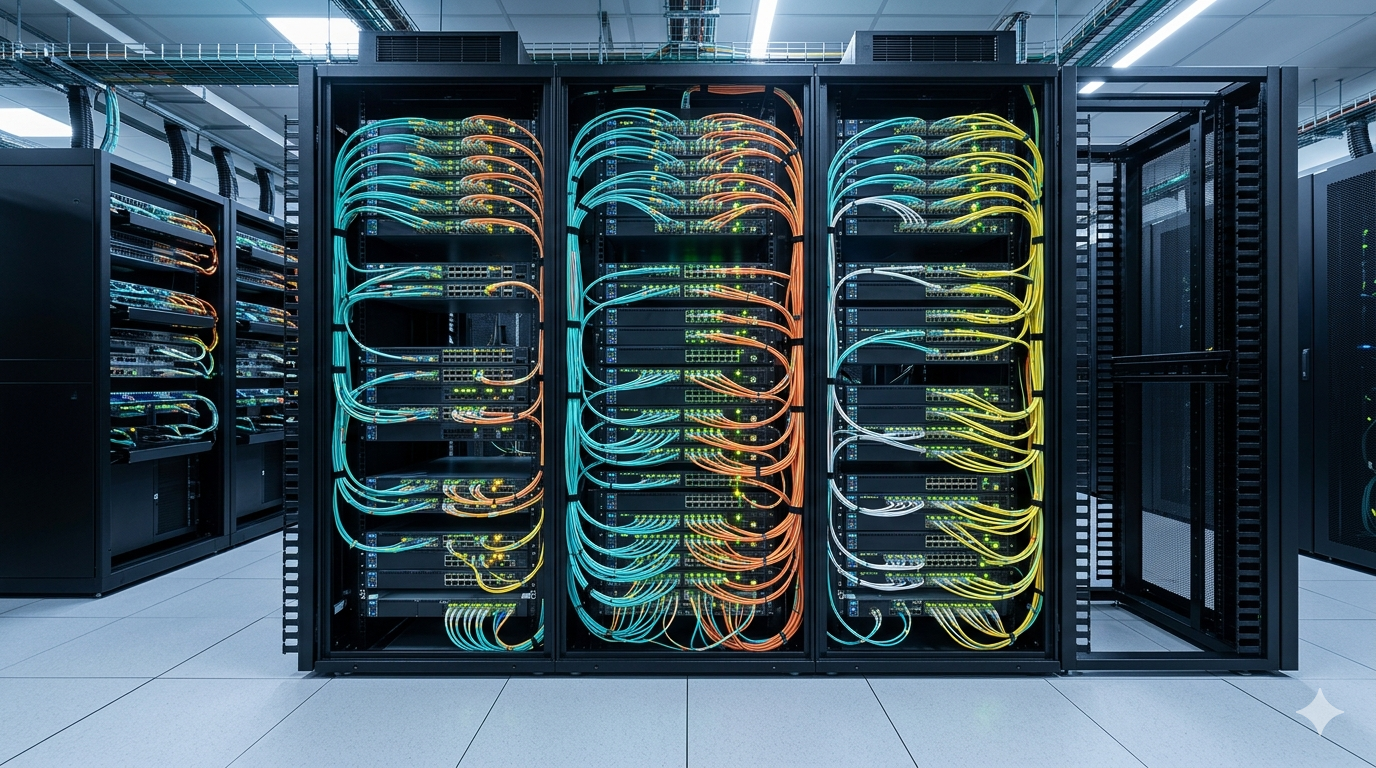

The networking infrastructure inside AI data centers was designed around a specific workload profile: large-scale model training that moves enormous volumes of data between GPU clusters in predictable, high-bandwidth bursts. That profile shaped the switch architectures, fabric topologies, and bandwidth provisioning strategies that most current AI infrastructure uses. Agentic AI workloads behave differently. They generate traffic patterns that the existing networking model was not built to handle efficiently, and operators who have not yet recognised this distinction are building infrastructure that will underperform for the workloads it is meant to serve.

Agentic AI systems run multiple models simultaneously, coordinate tasks across specialised components, query external data sources in real time, and maintain state across long-running interactions. Each of those activities generates network traffic with different latency requirements, different burst characteristics, and different failure tolerance profiles than a training run. Understanding what changes in the network as AI shifts from training-centric to agentic is essential for anyone designing or procuring AI data center infrastructure in 2026.

The Traffic Pattern That Changes Everything

Training workloads generate what the industry calls east-west traffic, moving data laterally between GPU nodes within a cluster at very high bandwidth and relatively predictable timing. The network fabric for training optimises for throughput and minimises latency within the cluster boundary. Most AI data center networking, including InfiniBand and high-performance Ethernet fabrics, reflects this optimisation.

Agentic workloads generate a fundamentally different traffic mix. A single agentic task might invoke a reasoning model, a retrieval system, a code execution environment, and an external API call in sequence, with each step depending on the output of the previous one. That sequential dependency creates latency sensitivity at each hop that training workloads do not have. Furthermore, the traffic flows in agentic systems cross more boundaries: between models, between clusters, between on-premises and cloud environments, and between the data center and external services.

Analyses of east-west GPU fabric traffic patterns in neocloud environments document how fabric congestion emerges when traffic shifts from bulk transfer to latency-sensitive sequential flows. That congestion dynamic is precisely what agentic workloads introduce at scale. Consequently, a network fabric that performs well for training can degrade significantly under agentic load without any change in the underlying hardware.

Why Latency Sensitivity Compounds at Scale

The latency sensitivity of agentic workloads compounds as the number of agents and model calls in a workflow increases. A workflow that chains five sequential model invocations, each adding 10 milliseconds of network latency, accumulates 50 milliseconds of latency that the end-to-end response time directly absorbs. At small scale that is manageable. Across thousands of concurrent agentic sessions on a large inference cluster, every session pays that penalty on every workflow, and the accumulated waiting time shows up as lower throughput and slower responses for users.
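The arithmetic behind the five-hop example can be sketched in a few lines of Python. The function names and the figure of 2,000 concurrent sessions are illustrative assumptions (the text says only "thousands"), and the model assumes every hop runs strictly in sequence with no overlap.

```python
def chained_network_latency_ms(hops: int, per_hop_ms: float) -> float:
    """Network latency added to one workflow whose hops run strictly in sequence."""
    return hops * per_hop_ms


def aggregate_wait_seconds(sessions: int, hops: int, per_hop_ms: float) -> float:
    """Total session-time spent waiting on the network when every
    concurrent session runs one such workflow."""
    return sessions * chained_network_latency_ms(hops, per_hop_ms) / 1000.0


# Figures from the text: five sequential invocations at 10 ms each.
per_workflow_ms = chained_network_latency_ms(hops=5, per_hop_ms=10.0)

# Assumed figure: 2,000 concurrent sessions, each running one workflow.
total_wait_s = aggregate_wait_seconds(sessions=2000, hops=5, per_hop_ms=10.0)

print(f"per workflow: {per_workflow_ms} ms, across sessions: {total_wait_s} s")
```

Under these assumptions each workflow carries 50 ms of network latency, and one round of workflows across the 2,000 sessions accounts for 100 session-seconds of waiting. Real workflows may overlap some calls, so this is an upper-bound style estimate rather than a measurement.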

Additionally, agentic systems often need to maintain session state across multiple model calls. That statefulness requires either low-latency access to shared memory or efficient state transfer over the network fabric. Neither is trivial at scale, and neither is well-served by network architectures optimised purely for stateless bulk data movement between training nodes.

How Inference Infrastructure Must Adapt

Beyond GPUs, the hidden architecture powering the AI revolution includes a networking layer that is as critical to AI performance as the compute layer itself. For agentic inference specifically, three network characteristics matter above all others: latency consistency, topology flexibility, and bandwidth efficiency at small message sizes.

Latency consistency means the network delivers predictable latency rather than just low average latency. Agentic workflows tolerate occasional high latency poorly because sequential dependencies mean that one slow hop delays the entire workflow. A network that averages 5 milliseconds but occasionally spikes to 50 milliseconds is worse for agentic workloads than one that consistently delivers 8 milliseconds, even though its average is better.
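A small model makes the point about tails concrete. The sketch below assumes a five-hop sequential workflow, independent hops, and a "spiky" network that delivers 4 ms on 98% of hops and 50 ms on the other 2% (about 4.9 ms on average, close to the 5 ms in the example above). The spike probability, the 4 ms baseline, and all function names are assumptions chosen for illustration.

```python
from math import comb


def workflow_latency_distribution(hops, base_ms, spike_ms, spike_prob):
    """Exact distribution of total latency for `hops` sequential hops.

    Returns a list of (total_ms, probability) for k = 0..hops spikes,
    in ascending order of latency (assumes spike_ms > base_ms).
    """
    dist = []
    for k in range(hops + 1):
        prob = comb(hops, k) * spike_prob**k * (1 - spike_prob) ** (hops - k)
        total = k * spike_ms + (hops - k) * base_ms
        dist.append((total, prob))
    return dist


def percentile(dist, q):
    """Smallest total latency whose cumulative probability reaches q."""
    cumulative = 0.0
    for total, prob in dist:
        cumulative += prob
        if cumulative >= q:
            return total
    return dist[-1][0]  # guard against floating-point shortfall


HOPS = 5
spiky = workflow_latency_distribution(HOPS, base_ms=4.0, spike_ms=50.0, spike_prob=0.02)
steady_total_ms = HOPS * 8.0  # the consistent 8 ms network has no spread

print("spiky  p50:", percentile(spiky, 0.50), "ms")
print("spiky  p99:", percentile(spiky, 0.99), "ms")
print("steady all:", steady_total_ms, "ms")
```

With these assumed numbers the spiky network wins at the median (20 ms against 40 ms) but loses at the 99th percentile (66 ms against 40 ms), because roughly one workflow in ten hits at least one spike. Longer chains make the gap wider, which is why the tail, not the mean, is the figure to watch.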

Topology flexibility matters because agentic systems do not have fixed communication patterns. Training jobs communicate within a defined cluster topology. Agentic systems communicate dynamically depending on which models and services a given task requires. Network fabrics that can adapt routing and bandwidth allocation to dynamic traffic patterns handle agentic workloads more efficiently than static topologies optimised for predictable training traffic.

The Small Message Problem

Bandwidth efficiency at small message sizes addresses a specific characteristic of agentic traffic that training workloads do not share. Training data moves in large blocks that amortise network overhead across substantial payloads. Agentic model invocations often move small payloads: a prompt, a context window excerpt, a tool call result. These small messages carry proportionally higher network overhead per byte of useful data, reducing effective throughput and increasing per-transaction latency on fabrics designed for large transfers.
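The overhead effect can be estimated with a rough framing model. The sketch below assumes plain TCP over IPv4 over Ethernet with a 1500-byte MTU and no header options, and it ignores handshakes, acknowledgements, and encryption. Production AI fabrics often use RDMA transports with different framing, so the numbers are illustrative only; the function names are hypothetical.

```python
ETH_WIRE_OVERHEAD = 38  # preamble+SFD 8, header 14, FCS 4, inter-frame gap 12
IP_TCP_HEADERS = 40     # IPv4 header 20 + TCP header 20, no options
MTU = 1500              # assumed standard Ethernet MTU
MIN_ETH_PAYLOAD = 46    # shorter frames are padded up to this size
MSS = MTU - IP_TCP_HEADERS  # 1460 bytes of application data per full packet


def wire_bytes(payload_bytes: int) -> int:
    """Bytes occupied on the wire to carry `payload_bytes` of application data."""
    full_packets, remainder = divmod(payload_bytes, MSS)
    total = full_packets * (MTU + ETH_WIRE_OVERHEAD)
    if remainder:
        frame_payload = max(MIN_ETH_PAYLOAD, remainder + IP_TCP_HEADERS)
        total += frame_payload + ETH_WIRE_OVERHEAD
    return total


def efficiency(payload_bytes: int) -> float:
    """Fraction of wire bytes that is useful payload (payload must be > 0)."""
    return payload_bytes / wire_bytes(payload_bytes)


for size in (200, 4096, 1_048_576):  # a short tool result, a prompt, a bulk block
    print(f"{size:>9} B payload -> {efficiency(size):.1%} efficient")
```

Under this model a 200-byte tool result uses 278 bytes on the wire, about 72% efficiency, while a 1 MiB transfer reaches roughly 95%. Header overhead is only part of the cost: per-message processing in NICs and switches, which this sketch does not model, also weighs more heavily on small transfers.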

The power efficiency challenge of faster data center networks shows that network efficiency at small message sizes also carries power implications. Fabrics that process large numbers of small transactions consume more power per useful byte than those handling bulk transfers, adding to the already significant power density challenge of high-performance AI inference infrastructure.

What Operators Need to Change

The practical response to agentic networking requirements involves three areas: fabric architecture, switching hardware selection, and software-defined traffic management.

On fabric architecture, operators building new AI inference infrastructure should evaluate spine-leaf topologies with lower oversubscription ratios than training-focused designs typically use. Lower oversubscription reduces the likelihood of congestion under bursty agentic traffic patterns. Additionally, fabrics that support adaptive routing, which dynamically selects paths based on current congestion rather than static configuration, handle the variable traffic patterns of agentic workloads more effectively.
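Oversubscription on a leaf switch is conventionally expressed as server-facing bandwidth divided by spine-facing bandwidth. A minimal sketch of that calculation follows; the port counts and speeds are hypothetical and do not describe any particular product or reference design.

```python
def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Leaf oversubscription: total downlink capacity / total uplink capacity.

    1.0 means non-blocking; higher values mean servers can offer more
    traffic than the uplinks can carry toward the spine.
    """
    return (down_ports * down_gbps) / (up_ports * up_gbps)


# Hypothetical leaf: 48 x 100G server ports with 8 x 400G uplinks.
tighter = oversubscription_ratio(48, 100, 8, 400)

# Same leaf with 12 x 400G uplinks.
non_blocking = oversubscription_ratio(48, 100, 12, 400)

print(f"8 uplinks:  {tighter}:1")
print(f"12 uplinks: {non_blocking}:1")
```

In this example, moving from 8 to 12 uplinks takes the leaf from 1.5:1 to 1:1. The ratio describes worst-case contention only; whether bursty agentic traffic actually congests the uplinks depends on how often the bursts coincide.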

Corning’s AI network density breakthroughs reflect how optical interconnect capacity is expanding to support the higher port counts and bandwidth densities that agentic inference infrastructure requires. Switching hardware selection should prioritise low and consistent latency over peak throughput in inference environments, reversing the training-era preference for maximum bandwidth above other characteristics.

The Software Layer Cannot Be an Afterthought

Software-defined traffic management becomes essential in agentic environments because static network configuration cannot adapt to dynamic workload patterns. Operators need visibility into traffic flows at the application layer, not just the network layer, to understand which model invocations and service calls are generating congestion and where latency is accumulating in agentic workflows.
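Application-layer visibility can start with something as simple as timing each step of a workflow. The sketch below is a minimal illustration using only the Python standard library; the `WorkflowTrace` class and the step names are hypothetical, and the `time.sleep` calls stand in for real model and service calls. It records wall-clock time per step, which includes compute as well as network time, so separating the two would need additional instrumentation.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class WorkflowTrace:
    """Records how long each named step of one agentic workflow takes."""

    def __init__(self):
        self.spans = []  # list of (step_name, seconds)

    @contextmanager
    def step(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the span even if the step raises.
            self.spans.append((name, time.perf_counter() - start))

    def totals(self):
        """Total seconds per step name, summed across repeated calls."""
        out = defaultdict(float)
        for name, seconds in self.spans:
            out[name] += seconds
        return dict(out)

    def slowest(self):
        """Name of the step that accumulated the most time."""
        totals = self.totals()
        return max(totals, key=totals.get)


trace = WorkflowTrace()
with trace.step("retrieval"):        # placeholder for a retrieval call
    time.sleep(0.01)
with trace.step("reasoning_model"):  # placeholder for a model invocation
    time.sleep(0.03)
with trace.step("external_api"):     # placeholder for an outbound API call
    time.sleep(0.02)

for name, seconds in trace.totals().items():
    print(f"{name}: {seconds * 1000:.1f} ms")
print("slowest step:", trace.slowest())
```

Aggregating such per-step timings across many sessions is one way to see which invocations dominate end-to-end latency before correlating them with fabric-level telemetry.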

The argument that AI compute beyond chips is now about controlling the stack holds that operators who control the full software and hardware stack, from application to network fabric, will achieve better performance and efficiency than those who treat networking as a commodity layer beneath the AI application. That argument applies with particular force to agentic inference, where the interaction between application-level workflow design and network-level traffic patterns determines whether the infrastructure performs as designed or chronically underperforms against its theoretical specifications.

The operators designing AI data center networking for agentic workloads today are building for a workload profile that will define AI infrastructure requirements for the rest of this decade. The ones who carry forward training-era networking assumptions into inference-era deployment will discover the mismatch in production, at the worst possible time.