Discussions of large language model performance usually center on parameter counts, token throughput, or specialized model architectures. A more fundamental constraint often escapes attention outside infrastructure teams: heat. As AI workloads push GPUs to extreme power densities, thermal throttling has become a serious risk to both performance and cost efficiency in real-world LLM deployments.

Understanding why thermal throttling occurs, why it affects LLM inference so acutely, how it reshapes hardware economics, and how modern data centers can mitigate it is now essential for anyone running AI at scale.

The Physics of the Heat Wall

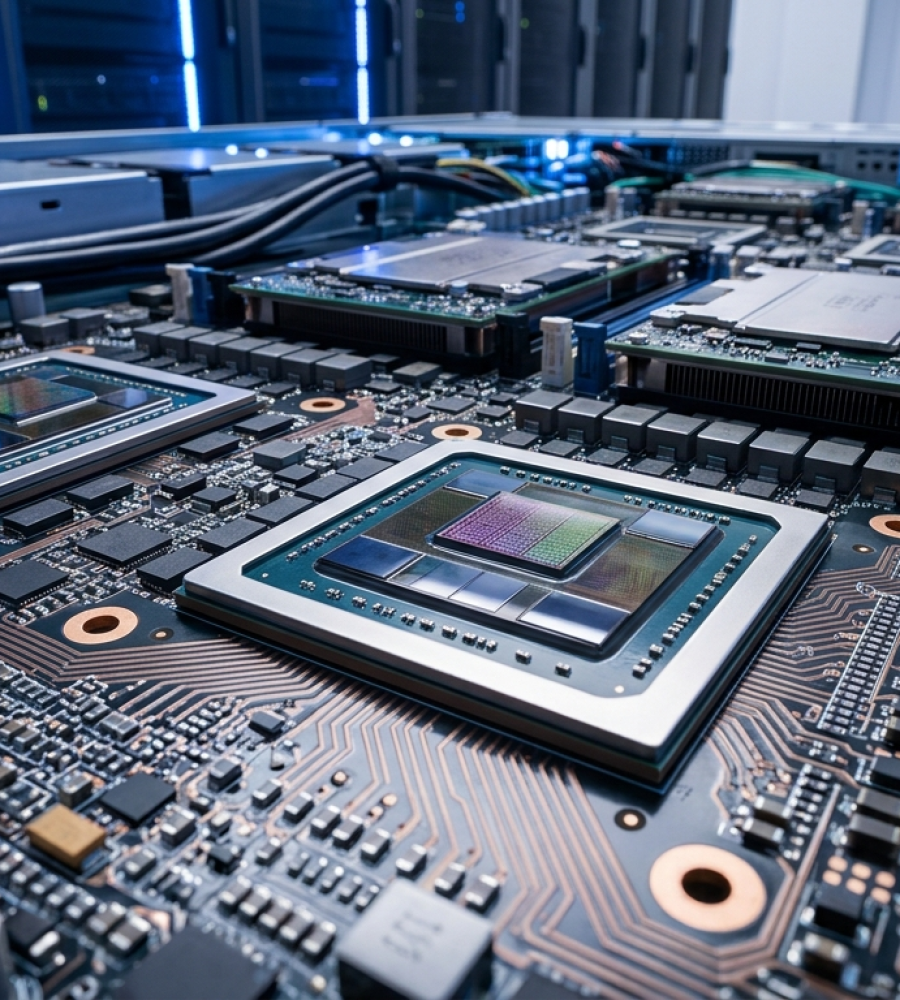

Thermal limits are an unavoidable reality of modern semiconductor design. AI accelerators such as NVIDIA’s H100 and newer B-series devices deliver extraordinary performance per watt, but that performance comes from concentrating hundreds of watts in a very small piece of silicon. The SXM version of the H100 is rated for up to 700 watts, and in dense configurations rack-level heat output can reach 50 to 100 kilowatts or more. Traditional air cooling systems were never designed for this level of thermal density.

To protect the hardware, GPUs rely on thermal throttling. As junction temperatures approach critical thresholds, typically around 90 to 100 degrees Celsius, the GPU automatically reduces clock speeds to limit power draw and prevent physical damage. This behavior is a safety mechanism rooted in physics and long-term chip reliability.

Each GPU has a defined maximum junction temperature (often written Tj max), which represents the highest safe die temperature. As that limit is approached or exceeded, the hardware applies progressively stronger throttling. Clock frequencies drop, power consumption falls, and performance can collapse rapidly, even when the software stack is capable of much higher throughput.
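One way to watch for this behavior is to poll temperature and clock readings. The sketch below assumes the `nvidia-smi` CLI is installed and uses its CSV query mode. The helper names and the 85 degree alert threshold are illustrative assumptions, not vendor-recommended values.

```python
import csv
import io
import subprocess
from dataclasses import dataclass

# Query fields passed to `nvidia-smi --query-gpu=...`.
QUERY_FIELDS = "index,temperature.gpu,clocks.sm,clocks.max.sm,power.draw"


@dataclass
class GpuReading:
    index: int
    temp_c: float
    sm_clock_mhz: float
    max_sm_clock_mhz: float
    power_w: float

    @property
    def clock_ratio(self) -> float:
        """Current SM clock as a fraction of the maximum SM clock."""
        return self.sm_clock_mhz / self.max_sm_clock_mhz


def parse_gpu_csv(text: str) -> list[GpuReading]:
    """Parse `--format=csv,noheader,nounits` output into readings.

    Assumes every field is numeric; boards that report "[N/A]" for a
    field would need extra handling.
    """
    readings = []
    for row in csv.reader(io.StringIO(text)):
        if not row:
            continue
        idx, temp, clk, max_clk, power = (cell.strip() for cell in row)
        readings.append(
            GpuReading(int(idx), float(temp), float(clk), float(max_clk), float(power))
        )
    return readings


def query_gpus() -> list[GpuReading]:
    """Run nvidia-smi once and return one reading per GPU."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_csv(out)


def running_hot(readings: list[GpuReading], temp_limit_c: float = 85.0) -> list[GpuReading]:
    """Return GPUs at or above an (assumed) alert temperature."""
    return [r for r in readings if r.temp_c >= temp_limit_c]
```

A GPU that appears in `running_hot` with a `clock_ratio` well below 1.0 under full load is a reasonable candidate for closer inspection, although reduced clocks can also come from power caps or idle states.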

Why LLMs Are Uniquely Vulnerable

Large language model inference creates a thermal profile that differs sharply from many traditional workloads. LLMs drive sustained, near-maximum utilization of both compute units and memory bandwidth. Because these models are autoregressive, each generated token depends on the tokens before it, so the decoding of a single response cannot be broken into independent chunks with natural idle periods. When throttling occurs during inference, every subsequent token is delayed, and the added latency accumulates across the rest of the response.
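The accumulation effect can be illustrated with simple arithmetic. This sketch assumes per-token time scales inversely with clock speed, which is a simplification (decode is often limited by memory bandwidth rather than core clocks), and all numbers are hypothetical.

```python
def response_latency_s(num_tokens: int, base_token_ms: float,
                       throttle_start: int, clock_ratio: float) -> float:
    """Total decode time when every token from `throttle_start` onward
    runs at `clock_ratio` of full speed (1.0 means no throttling)."""
    total_ms = 0.0
    for i in range(num_tokens):
        ratio = clock_ratio if i >= throttle_start else 1.0
        # Tokens are generated one after another, so delays add up.
        total_ms += base_token_ms / ratio
    return total_ms / 1000.0


healthy = response_latency_s(500, 20.0, throttle_start=500, clock_ratio=0.7)
throttled = response_latency_s(500, 20.0, throttle_start=100, clock_ratio=0.7)
print(f"healthy: {healthy:.1f} s, throttled: {throttled:.1f} s")
```

With 500 tokens at a hypothetical 20 ms each, an unthrottled response takes 10 s. If clocks drop to 70% after token 100, the same response takes about 13.4 s under this model.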

Memory behavior further compounds the problem. LLM inference involves constant movement of large volumes of data between VRAM and compute units. This memory traffic generates substantial heat on its own. In some cases, memory modules or memory controllers reach thermal limits before the compute cores do, triggering throttling even when raw compute capacity remains available.

Batching and distributed execution amplify these effects. To maximize throughput, operators commonly batch multiple inference requests and spread the work across GPUs or nodes. In distributed environments, synchronization operations force all devices to progress at the speed of the slowest participant. If one GPU throttles due to elevated temperature, it becomes a straggler that slows the entire group. What begins as a localized thermal issue quickly turns into a cluster-wide performance bottleneck.
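The straggler effect follows from the fact that a synchronized step finishes only when the slowest device finishes. A minimal sketch, assuming step time scales inversely with clock ratio and using hypothetical numbers:

```python
def synchronized_step_ms(per_gpu_ms: list[float]) -> float:
    """A synchronized step ends when the slowest GPU is done."""
    return max(per_gpu_ms)


def group_slowdown(base_step_ms: float, clock_ratios: list[float]) -> float:
    """Slowdown of the whole group relative to an unthrottled step."""
    per_gpu = [base_step_ms / ratio for ratio in clock_ratios]
    return synchronized_step_ms(per_gpu) / base_step_ms


# Eight GPUs, seven healthy and one throttled to 75% of its clock.
ratios = [1.0] * 7 + [0.75]
print(f"group slowdown: {group_slowdown(30.0, ratios):.2f}x")
```

In this model a single GPU at 75% makes every step about 33% slower for all eight devices, even though seven of them are running at full speed.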

The Economic Impact: A Hidden Tax

Thermal throttling introduces a quiet but persistent cost that rarely shows up in headline benchmarks.

One consequence is the forced tradeoff between throughput and latency. Operators can reduce batch sizes or accept slower response times, but both options carry business consequences. Users notice latency increases, even small ones, and perceived responsiveness directly affects product quality in competitive AI-driven markets.

Hardware longevity also suffers. Frequent thermal cycling, caused by repeated transitions between high load and throttled states, places mechanical stress on GPUs. Solder joints, thermal interface materials, and chip substrates undergo constant expansion and contraction, accelerating wear. Over time, this raises failure rates and shortens the usable life of accelerators that already represent major capital investments.

Energy efficiency declines as well. Throttled GPUs continue to consume baseline power while delivering less compute. Leakage power remains, fans and cooling systems stay active, and energy costs rise without corresponding performance gains. The result is a higher effective cost per generated token.
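The cost-per-token effect can be made concrete with a small calculation. The power, throughput, and electricity price figures below are hypothetical and chosen only to show the shape of the problem: power falls less than throughput does, so energy per token rises.

```python
def joules_per_token(power_w: float, tokens_per_s: float) -> float:
    """Energy spent per generated token (watts are joules per second)."""
    return power_w / tokens_per_s


def usd_per_million_tokens(power_w: float, tokens_per_s: float,
                           usd_per_kwh: float) -> float:
    """Electricity cost of one million tokens at a given energy price."""
    joules = joules_per_token(power_w, tokens_per_s) * 1_000_000
    kwh = joules / 3.6e6  # 1 kWh = 3.6 million joules
    return kwh * usd_per_kwh


healthy = joules_per_token(power_w=700.0, tokens_per_s=1000.0)
throttled = joules_per_token(power_w=550.0, tokens_per_s=700.0)
print(f"healthy: {healthy:.2f} J/token, throttled: {throttled:.2f} J/token")
```

In this example the throttled GPU draws less power in absolute terms but spends roughly 12% more energy on each token. Facility-level cooling overhead, which is not modeled here, would widen the gap further.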

Taken together, these effects turn heat into a recurring operational tax that reduces performance, inflates costs, and erodes hardware value in ways that standard performance metrics often fail to capture.

Modern Mitigation Strategies

As awareness grows, infrastructure teams are adopting multiple approaches to reduce the impact of thermal throttling.

Liquid Cooling and Direct-to-Chip Systems

Air cooling is approaching its practical limits. Once rack power density climbs past roughly 25 to 30 kilowatts, air struggles to remove heat quickly enough to prevent hotspots. Many AI data centers now deploy liquid cooling solutions, including direct-to-chip cold plates and immersion cooling systems that extract heat directly from the source.

Liquids absorb and carry away heat far more effectively than air, allowing GPUs to operate at lower junction temperatures under sustained load. By staying below thermal thresholds, accelerators maintain stable clock speeds and predictable inference latency. Facilities that transition to liquid cooling can see gains in throughput and energy efficiency because cooling no longer constrains performance.
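A rough sense of the gap between air and liquid comes from the heat transport relation Q = ρ · V · c_p · ΔT, where V is volumetric flow. The sketch below uses approximate room-temperature properties for air and water, and the 700 W load and 10 K coolant temperature rise are illustrative assumptions.

```python
# Approximate fluid properties near room temperature.
AIR = {"density_kg_m3": 1.2, "cp_j_kg_k": 1005.0}
WATER = {"density_kg_m3": 997.0, "cp_j_kg_k": 4180.0}


def flow_needed_l_per_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Volumetric flow (liters per second) needed to carry away `heat_w`
    with a coolant temperature rise of `delta_t_k`."""
    m3_per_s = heat_w / (fluid["density_kg_m3"] * fluid["cp_j_kg_k"] * delta_t_k)
    return m3_per_s * 1000.0


air_flow = flow_needed_l_per_s(700.0, 10.0, AIR)
water_flow = flow_needed_l_per_s(700.0, 10.0, WATER)
print(f"air: {air_flow:.1f} L/s, water: {water_flow:.4f} L/s")
```

Under these assumptions, removing 700 W takes roughly 58 liters of air per second but only about one liter of water per minute, a difference of more than three orders of magnitude in volume.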

Thermal-Aware Scheduling

Software-based approaches also play a role. Thermal-aware scheduling systems monitor GPU temperatures in real time and shift workloads toward cooler nodes before temperatures reach critical levels. This spreads thermal load across the fleet, reduces hotspots, and improves overall utilization without sacrificing performance stability.
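The core placement decision can be sketched in a few lines. This is a toy policy, not a description of any particular scheduler: the `Node` type, the 80 degree soft limit, and the tie-breaking rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    temp_c: float      # hottest GPU temperature reported by the node
    active_jobs: int   # jobs currently placed on the node


def pick_node(nodes: list[Node], soft_limit_c: float = 80.0) -> Optional[Node]:
    """Choose the coolest node below the soft limit.

    Ties on temperature are broken by the number of active jobs.
    Returns None when every node is too warm, so the caller can queue
    the request instead of pushing a node toward throttling.
    """
    eligible = [n for n in nodes if n.temp_c < soft_limit_c]
    if not eligible:
        return None
    return min(eligible, key=lambda n: (n.temp_c, n.active_jobs))


fleet = [Node("a", 83.0, 2), Node("b", 71.0, 4), Node("c", 76.0, 1)]
chosen = pick_node(fleet)
print(chosen.name if chosen else "queue request")
```

A production scheduler would also weigh factors such as model placement, memory headroom, and the rate at which temperatures are rising, none of which are modeled here.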

Model and Execution Optimization

Developers can also lower thermal pressure through model optimization. Quantization techniques, such as moving from FP16 to INT8, reduce memory bandwidth demands and peak power draw. Lower data movement translates directly into less heat generation. While accuracy tradeoffs must be evaluated carefully, many production workloads benefit from these optimizations with minimal quality impact.
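The bandwidth saving from quantization can be estimated with a back-of-the-envelope calculation. The sketch assumes each decode step streams all model weights from memory once and shares that read across the batch. It ignores the KV cache and activations, and the 7-billion-parameter model is a hypothetical example.

```python
# Storage size per weight for each numeric format.
BYTES_PER_PARAM = {"fp16": 2, "int8": 1}


def weight_bytes_per_token(num_params: float, dtype: str, batch_size: int = 1) -> float:
    """Approximate weight traffic per generated token, in bytes."""
    return num_params * BYTES_PER_PARAM[dtype] / batch_size


params = 7e9
for dtype in ("fp16", "int8"):
    gb = weight_bytes_per_token(params, dtype) / 1e9
    print(f"{dtype}: {gb:.0f} GB of weight reads per token at batch size 1")
```

For this example, moving from FP16 to INT8 halves weight traffic from about 14 GB to about 7 GB per token at batch size 1. How much of that saving shows up as lower power draw depends on the hardware and the kernels in use.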

Heat as a First-Order Constraint

Thermal throttling is no longer a minor hardware detail. For large language model deployments, it represents a core system constraint that shapes performance, cost structure, and reliability. The interaction between GPU architecture, heat dissipation, and LLM workload patterns makes this issue impossible to ignore.

Managing thermal throttling requires deliberate investment in cooling infrastructure, intelligent scheduling, and execution strategies designed with thermal behavior in mind. Teams that overlook these factors face slower responses, degraded service quality, and higher long-term operating costs.

As AI performance increasingly defines competitive advantage, thermal throttling stands out as the invisible bottleneck that every AI infrastructure strategist must understand and address.