Artificial intelligence development has entered an era defined by large-scale model training and unprecedented computational intensity. Training modern models requires massive compute clusters that run continuously for weeks or months and draw substantial electrical power from regional grids. These clusters perform enormous volumes of floating-point arithmetic as distributed systems optimize models with billions of parameters. Data centers hosting these workloads therefore operate at power densities well above those of traditional enterprise computing environments. Energy demand has become a central planning factor for organizations building AI infrastructure because large clusters can draw tens of megawatts of electricity during training cycles. As AI capabilities grow, infrastructure operators must balance performance objectives against the energy and environmental implications of this rapid expansion.

Rapid growth in AI training workloads has introduced complex sustainability considerations across the compute ecosystem. Infrastructure planners increasingly evaluate the environmental impact of compute clusters alongside performance and scalability metrics. In several regions, electricity consumption associated with AI training has already reached levels that require careful grid integration planning. The largest language model training runs can consume tens of gigawatt-hours of electricity, depending on model size, hardware configuration, training duration, and facility efficiency. Technology companies therefore face pressure to maintain innovation momentum while addressing concerns about power use and carbon emissions. This tension between technological progress and sustainability commitments now shapes infrastructure strategies across the global AI ecosystem.
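The scale of these figures follows from simple arithmetic: energy equals average power multiplied by run time. The sketch below assumes a hypothetical cluster that averages 20 MW over a 60-day run. That power level sits within the tens-of-megawatts range cited earlier. Both numbers are illustrative and are not measurements of any specific model.

```python
def training_energy_gwh(avg_power_mw: float, days: float) -> float:
    """Return energy in gigawatt-hours for a constant average power draw.

    Assumes a flat average draw; real runs fluctuate with utilization.
    """
    hours = days * 24
    energy_mwh = avg_power_mw * hours
    return energy_mwh / 1000  # 1 GWh = 1,000 MWh

# Hypothetical run: 20 MW average for 60 days (1,440 hours).
print(f"{training_energy_gwh(20, 60):.1f} GWh")  # 28.8 GWh
```

The run length matters as much as the cluster size. Halving the duration at the same average power halves the energy.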

Compute Scale Versus Environmental Limits

Modern AI training clusters rely on thousands of high-performance GPUs operating simultaneously within tightly coordinated compute fabrics. Each accelerator executes large volumes of parallel operations and stays highly utilized throughout model optimization. A single data center GPU can draw several hundred watts under full load, and dense rack configurations multiply this demand across entire data halls. Clusters containing tens of thousands of accelerators therefore require power supplies on the order of tens of megawatts once servers, networking, and cooling are included. Engineers design these systems to run continuously during training to maximize hardware utilization and shorten time to convergence. The result is a concentration of compute resources with an extremely high energy consumption profile.
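A rough estimate of cluster power follows a few steps:

1. Multiply the accelerator count by the per-device draw.
2. Add per-GPU server overhead.
3. Scale by the facility's power usage effectiveness (PUE). PUE is the ratio of total facility power to IT power.

The sketch uses hypothetical inputs: 25,000 GPUs at 700 W each, 300 W of additional server, networking, and storage overhead per GPU, and a PUE of 1.2. Real deployments vary widely.

```python
def cluster_power_mw(num_gpus: int, gpu_watts: float,
                     overhead_watts_per_gpu: float, pue: float) -> float:
    """Estimate total facility power in megawatts.

    IT power = GPUs x (GPU draw + per-GPU share of CPUs, memory, NICs, switches).
    Facility power = IT power x PUE (adds cooling and power-conversion losses).
    """
    it_watts = num_gpus * (gpu_watts + overhead_watts_per_gpu)
    facility_watts = it_watts * pue
    return facility_watts / 1e6  # W -> MW

# Hypothetical inputs, chosen only to illustrate the order of magnitude.
print(f"{cluster_power_mw(25_000, 700, 300, 1.2):.1f} MW")  # 30.0 MW
```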

Training frontier-scale models pushes compute infrastructure to levels rarely seen in earlier generations of enterprise computing. Clusters built for large language model development can include tens of thousands of GPUs connected through high-bandwidth networking fabrics, and the latest accelerator systems can push rack densities above 80 kilowatts. Grid operators and utilities therefore face the challenge of supplying stable electricity to facilities whose demand resembles that of industrial power consumers. At the same time, environmental policy frameworks call for reductions in the carbon intensity of energy systems. AI infrastructure expansion thus creates a structural tension between the demand for large-scale computation and the environmental constraints placed on energy generation.
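Rack density translates directly into floor space and power-distribution requirements. As a hypothetical comparison, the sketch below counts the racks needed to host a 25 MW IT load at two densities. The first is 80 kW per rack. The second is an assumed 10 kW per rack, used here only to stand in for a conventional enterprise deployment.

```python
import math

def racks_needed(it_load_kw: float, rack_density_kw: float) -> int:
    """Minimum whole racks required to host an IT load at a given per-rack density."""
    if rack_density_kw <= 0:
        raise ValueError("rack density must be positive")
    return math.ceil(it_load_kw / rack_density_kw)

it_load_kw = 25_000  # hypothetical 25 MW of IT load
for density_kw in (80, 10):
    print(density_kw, "kW/rack ->", racks_needed(it_load_kw, density_kw), "racks")
```

Higher density means far fewer racks, 313 instead of 2,500 here. Each rack must then receive and reject much more power and heat, which concentrates electrical and cooling demand in a smaller footprint.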

The Infrastructure Footprint of Frontier AI Models

The physical infrastructure required to train frontier AI models extends far beyond the compute servers themselves. Large clusters rely on high-capacity networking systems that synchronize thousands of GPUs across distributed training architectures. Data center operators deploy specialized switches, optical interconnects, and storage systems that move training data across the cluster with minimal latency. Each of these components contributes additional energy consumption and manufacturing requirements to the overall infrastructure footprint. Supply chains must therefore produce large quantities of advanced semiconductors, circuit boards, and cooling components. Infrastructure scaling for AI consequently expands environmental impacts across multiple layers of the technology supply chain.

Lifecycle assessments of data center infrastructure show that construction materials and electrical equipment contribute significantly to embodied carbon emissions before facilities begin operating. Hyperscale data centers require large buildings, power distribution equipment, cooling systems, and backup energy infrastructure, built from steel, concrete, copper, and specialized electronic components. Manufacturing and transporting these materials generates emissions before any compute workload runs. The lifecycle footprint of AI infrastructure therefore includes both operational energy consumption and the embodied environmental cost of facility construction and hardware manufacturing. Infrastructure planners increasingly recognize that the environmental impact of AI development extends beyond electricity use during model training.
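One way to see why embodied emissions matter is to add them to operational emissions over a facility's service life. The sketch below uses entirely hypothetical inputs: 50,000 tCO2e embodied, 200,000 MWh of electricity per year, and a 10-year life. It compares two grid carbon intensities. As the grid gets cleaner, operational emissions shrink and the embodied share of the total grows.

```python
def lifecycle_emissions(embodied_t: float, annual_energy_mwh: float,
                        grid_kg_per_mwh: float, years: int) -> dict:
    """Combine embodied and operational emissions (tonnes CO2e) over a service life."""
    operational_t = annual_energy_mwh * grid_kg_per_mwh * years / 1000  # kg -> t
    total_t = embodied_t + operational_t
    return {
        "operational_t": operational_t,
        "total_t": total_t,
        "embodied_share": embodied_t / total_t,
    }

# Hypothetical facility: compare a carbon-heavy grid with a low-carbon grid.
for intensity in (400, 50):  # kg CO2e per MWh
    r = lifecycle_emissions(50_000, 200_000, intensity, 10)
    print(f"{intensity} kg/MWh: total {r['total_t']:,.0f} t, "
          f"embodied {r['embodied_share']:.0%}")
```

With these assumed numbers, the embodied share rises from about 6% on the carbon-heavy grid to about 33% on the low-carbon grid.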

Energy Elasticity in AI Training Workloads

Organizations exploring sustainable AI infrastructure increasingly focus on workload flexibility as a mechanism for managing energy consumption. Training workloads typically run as batch processes over extended periods without direct human interaction, which gives schedulers latitude over when they run. Research on carbon-aware computing indicates that AI training jobs can be scheduled during periods when the grid supplies a higher share of renewable energy. Data center orchestration platforms can therefore shift training runs in time to align with favorable grid conditions or lower-carbon-intensity electricity. When deadlines are flexible, these scheduling strategies can reduce the carbon footprint of training with limited impact on model development timelines. Workload elasticity has become an important operational strategy for managing the growing energy demands of AI infrastructure.
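One simple formulation of temporal carbon-aware scheduling works from an hourly forecast. It picks the start hour that minimizes average carbon intensity over the job's duration. The sketch below assumes a deferrable job of fixed length and a hypothetical forecast in gCO2/kWh. It illustrates the idea and is not the algorithm of any particular orchestration platform.

```python
from typing import Sequence

def best_start_hour(forecast_g_per_kwh: Sequence[float], job_hours: int) -> int:
    """Return the start index of the contiguous window with the lowest
    total (hence lowest mean) forecast carbon intensity."""
    if job_hours <= 0 or job_hours > len(forecast_g_per_kwh):
        raise ValueError("job must fit inside the forecast horizon")
    window = sum(forecast_g_per_kwh[:job_hours])
    best_sum, best_start = window, 0
    # Slide the window one hour at a time: add the new hour, drop the oldest.
    for start in range(1, len(forecast_g_per_kwh) - job_hours + 1):
        window += forecast_g_per_kwh[start + job_hours - 1] - forecast_g_per_kwh[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start

# Hypothetical 10-hour forecast with a midday renewable dip.
forecast = [450, 430, 400, 320, 210, 180, 190, 260, 380, 440]
print(best_start_hour(forecast, job_hours=3))  # 4
```

The sliding window keeps the search linear in the forecast length. A multi-week training run would more plausibly be paused and resumed from checkpoints than shifted as a whole, and this sketch does not model that.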

Geographic distribution of compute infrastructure also enables energy-adaptive training strategies. Companies often deploy clusters across multiple regions that provide access to different energy markets and renewable resources. Researchers and infrastructure operators have proposed carbon-aware scheduling systems that place AI training jobs in whichever data center region has the lowest carbon intensity at a given time, subject to available capacity. Such approaches allow organizations to maintain high GPU utilization while reducing the carbon footprint of AI training operations. Consequently, energy-adaptive compute architectures are emerging as a core feature of sustainable AI infrastructure planning.
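Geographic placement can be sketched as a two-step choice. First, keep only the regions with enough free capacity. Then pick the one with the lowest current carbon intensity. The region names, intensities, and GPU counts below are hypothetical. A production scheduler would also weigh data transfer, access to training data, and price.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

@dataclass
class Region:
    name: str
    carbon_g_per_kwh: float  # current grid carbon intensity
    free_gpus: int           # accelerators available for a new job

def pick_region(regions: Sequence[Region], gpus_needed: int) -> Optional[Region]:
    """Return the lowest-carbon region that can fit the job, or None if none can."""
    eligible = [r for r in regions if r.free_gpus >= gpus_needed]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r.carbon_g_per_kwh)

regions = [
    Region("region-a", 420.0, 8_000),
    Region("region-b", 90.0, 2_000),
    Region("region-c", 150.0, 6_000),
]
choice = pick_region(regions, gpus_needed=4_096)
print(choice.name if choice else "no region has capacity")  # region-c
```

Here the cleanest region (region-b) lacks capacity for a 4,096-GPU job, so the job lands in region-c. In this example, capacity, not carbon intensity alone, decides placement.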

Hardware Efficiency Versus Model Scale

Advances in accelerator hardware have significantly improved the computational efficiency of AI training systems. Modern GPUs deliver substantially higher performance per watt than earlier generations of machine learning hardware. Engineers optimize these processors to execute large matrix operations with high throughput while minimizing idle cycles. Hardware vendors also introduce architectural innovations that reduce memory access bottlenecks and improve energy efficiency. These improvements reduce the electricity required for each unit of computation performed during model training. Efficiency gains therefore represent a critical component of sustainable AI infrastructure development.

Despite these improvements, the total energy consumption of AI infrastructure continues to rise as model scale expands. Researchers frequently increase model size and training dataset size to achieve higher benchmark performance. Larger models require more GPUs, longer training cycles, and greater data movement across compute clusters. Because growth in model scale has often outpaced efficiency gains from hardware improvements, the overall electricity demand of AI training infrastructure continues to increase even as individual accelerators become more efficient. This dynamic, sometimes likened to the Jevons paradox, illustrates how efficiency advances can coexist with rising total energy consumption across the AI ecosystem.
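The paradox reduces to one ratio: total training energy scales with total compute divided by the improvement in operations per joule. The multipliers below are hypothetical and chosen only to show both possible outcomes.

```python
def relative_energy(compute_growth: float, efficiency_gain: float) -> float:
    """Energy use relative to a baseline.

    compute_growth: factor by which total training compute grows.
    efficiency_gain: factor by which operations per joule improve.
    """
    return compute_growth / efficiency_gain

# Compute quadruples while efficiency doubles: energy still doubles.
print(relative_energy(4.0, 2.0))  # 2.0
# Compute grows 1.5x while efficiency doubles: energy falls by a quarter.
print(relative_energy(1.5, 2.0))  # 0.75
```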

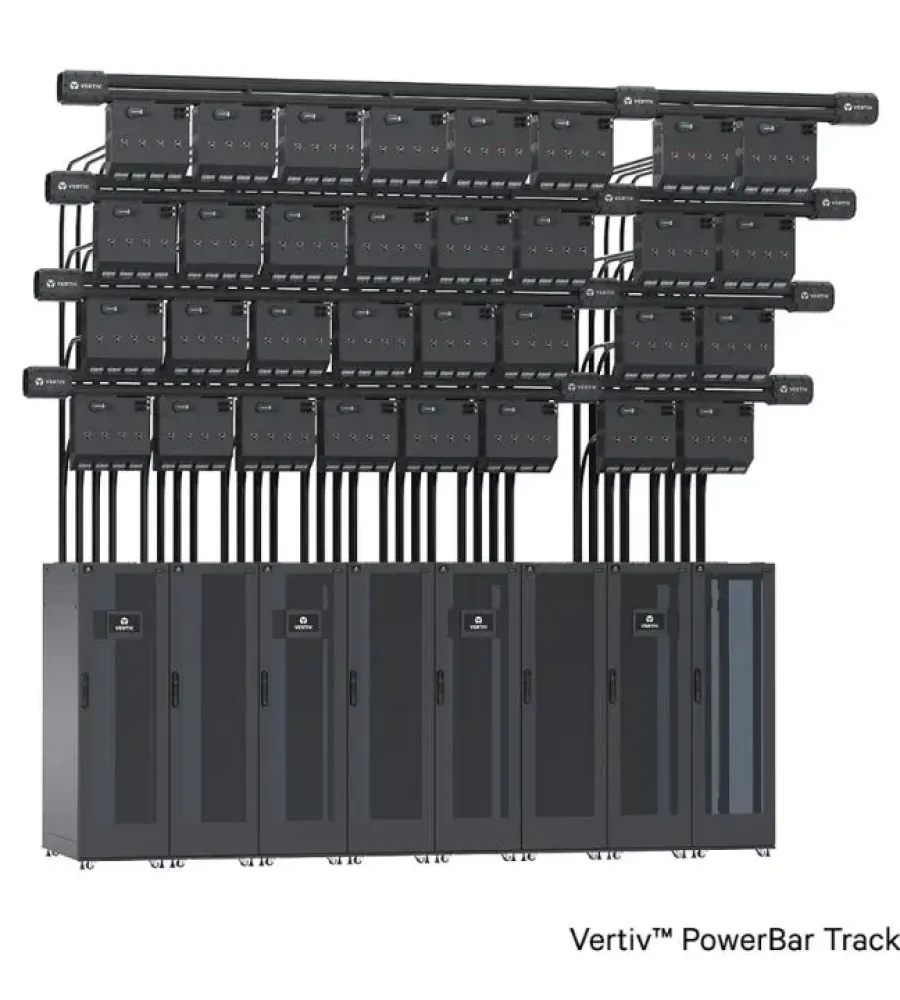

Power Infrastructure as a Constraint on AI Growth

Power availability has become a defining factor in determining where large AI training clusters can operate. Data center developers increasingly evaluate grid capacity and transmission infrastructure before selecting new facility locations. Regions with abundant electricity supply and strong grid connectivity attract large-scale AI infrastructure deployments. Areas with limited transmission capacity or constrained generation resources face challenges supporting high-density compute facilities. Grid planners must therefore coordinate infrastructure upgrades to accommodate the growing electricity demand associated with AI expansion. Energy infrastructure has become a foundational element of digital infrastructure strategy.

Utilities and governments have begun analyzing how AI infrastructure could reshape regional energy systems in the coming decade. Forecasts indicate that data center electricity demand may grow substantially as hyperscale operators deploy larger clusters. Some projections suggest that AI workloads could drive a significant share of data center power consumption by the end of the decade. This growth trajectory places additional pressure on grid planning, generation capacity, and renewable energy deployment strategies. Infrastructure operators therefore consider energy procurement agreements and grid integration strategies during the early stages of data center development. Power infrastructure has evolved from a background requirement into a central constraint shaping the future of AI expansion.

One company that has actively addressed the sustainability challenge in AI infrastructure is NVIDIA. The company has worked on improving accelerator efficiency and enabling flexible power management within GPU clusters used for AI training. Experimental studies have shown that AI data center workloads can participate in demand-response programs by temporarily reducing power consumption during periods of high grid demand. Jensen Huang, NVIDIA's CEO, has emphasized that future AI infrastructure must combine computational scale with energy-efficient system design to sustain long-term industry growth. These developments illustrate how hardware vendors are taking part in the search for sustainable compute architectures that support large-scale AI innovation.
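A demand-response request can be translated into a power target for the cluster. The sketch below is a hypothetical illustration, not NVIDIA's or any utility's method. It spreads a requested reduction evenly across GPUs as a per-device power cap, clamped to an assumed minimum. It counts GPU power only and ignores cooling and other loads. It also ignores the training slowdown a lower cap would cause. Actually applying the cap is out of scope; NVIDIA's `nvidia-smi` utility, for example, exposes a per-GPU power-limit setting.

```python
def demand_response_cap(num_gpus: int, current_w_per_gpu: float,
                        requested_reduction_mw: float,
                        min_cap_w: float, max_cap_w: float) -> tuple[float, float]:
    """Return (per-GPU power cap in W, achieved reduction in MW).

    The requested reduction is spread evenly across GPUs; the cap is clamped
    to [min_cap_w, max_cap_w], so the achieved reduction may fall short.
    """
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    per_gpu_cut_w = requested_reduction_mw * 1e6 / num_gpus
    cap_w = max(min_cap_w, min(max_cap_w, current_w_per_gpu - per_gpu_cut_w))
    achieved_mw = (current_w_per_gpu - cap_w) * num_gpus / 1e6
    return cap_w, achieved_mw

# Hypothetical cluster: 25,000 GPUs drawing 700 W each, 200 W minimum cap.
print(demand_response_cap(25_000, 700.0, 5.0, 200.0, 700.0))   # (500.0, 5.0)
print(demand_response_cap(25_000, 700.0, 15.0, 200.0, 700.0))  # (200.0, 12.5)
```

The second call shows the clamp at work. A 15 MW request would require caps below the assumed floor, so the cluster can only shed 12.5 MW of GPU power.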

Balancing AI Progress With Sustainable Infrastructure

The rapid expansion of AI training infrastructure has created a new set of sustainability challenges across the digital economy. Large compute clusters enable breakthroughs in machine learning capabilities, yet they also introduce substantial energy requirements that influence regional power systems. Infrastructure planners must therefore evaluate compute performance alongside environmental and energy considerations when designing new facilities. Hardware efficiency improvements, flexible workload scheduling, and renewable energy integration represent important tools for managing these demands. However, infrastructure scaling continues to push the boundaries of electricity consumption within the technology sector. Sustainable AI development requires coordinated strategies across hardware design, data center architecture, and energy infrastructure planning.

Long-term progress in artificial intelligence will depend on balancing technological ambition with responsible infrastructure development. Organizations that build AI systems must integrate sustainability considerations into decisions about hardware deployment, facility construction, and workload management. Grid operators, policymakers, and technology companies increasingly collaborate to address the energy implications of large-scale compute infrastructure. These partnerships aim to ensure that future AI innovation occurs within the environmental limits of global energy systems. The evolution of AI infrastructure therefore reflects not only advances in computation but also the broader challenge of aligning digital progress with sustainable resource use.