Immersion cooling has spent the better part of a decade proving itself inside hyperscale data centers, where operators building at gigawatt scale had both the capital and the engineering resources to deploy liquid dielectric systems at significant volume. The technology earned its credibility in those environments, demonstrating measurable improvements in power usage effectiveness, reduced mechanical complexity compared to traditional air cooling infrastructure, and the ability to support rack densities that air-based systems cannot reach. That track record now attracts attention from a different segment of the market, one that operates at a far smaller scale, faces far tighter physical constraints, and until recently had no viable path to liquid cooling at all: the edge.

Edge data centers sit closest to end users and data sources, positioned in locations where latency, data sovereignty, or connectivity requirements make centralized cloud infrastructure unsuitable. As AI inference workloads migrate toward the edge to serve real-time applications in manufacturing, healthcare, retail, and telecommunications, these facilities must now support GPU-dense compute that their existing infrastructure never anticipated. The thermal and power density challenges that drove liquid cooling adoption in hyperscale environments are arriving at the edge faster than the infrastructure market has moved to address them.

Edge AI Deployment Patterns and Their Thermal Implications

Edge AI deployments are driven by latency requirements that centralized infrastructure cannot meet. Applications including real-time quality inspection in manufacturing, patient monitoring in clinical environments, autonomous vehicle coordination, and low-latency inference for financial services all require compute resources positioned within milliseconds of the data they process. These requirements push GPU-equipped servers into environments that engineers designed around conventional networking and storage equipment, with thermal management systems specified for the modest rack densities typical of traditional IT workloads.

Modern AI inference hardware operates at rack densities that overwhelm the cooling capacity of conventional computer room air conditioners and precision air handling units within existing edge facility footprints. Operators often house edge locations in repurposed telecommunications facilities, industrial buildings, or purpose-built micro data centers with limited floor area and ceiling height, which restricts the ability to add supplementary air cooling capacity. Thermal density now stands as the primary infrastructure constraint limiting AI deployment at the edge, and the gap between what air cooling can deliver and what AI inference hardware requires widens with each successive GPU generation.

Air Cooling Constraints in Distributed High-Density Environments

Air cooling systems in edge environments face structural limitations that become more pronounced as rack density increases. Conventional cooling architectures depend on managed airflow paths that direct cold air across server inlet faces and return hot exhaust to cooling units through defined hot and cold aisles. This approach works adequately at lower rack densities, where the thermal load per unit of floor area stays within the range that air handling equipment can manage without creating localized hot spots. Beyond that range, maintaining uniform inlet temperatures across high-density GPU racks requires airflow volumes that create unacceptable noise levels, consume significant fan power, and still fail to prevent thermal stratification in confined spaces.
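To give a rough sense of why airflow volumes become the limiting factor, the sensible-heat relation (airflow = heat load / (air density x specific heat x temperature rise)) can be sketched in Python. The air properties, the 12 K inlet-to-exhaust temperature rise, and the rack power values are illustrative assumptions, not figures from any particular facility. The cube-law fan estimate is the idealized fan affinity relation for the same fans driven faster through the same airflow path; real deployments that add fans or change ducting will behave differently.

```python
# Assumed air properties near typical data center conditions (illustrative only).
AIR_DENSITY_KG_M3 = 1.2     # kg/m^3
AIR_CP_J_KG_K = 1005.0      # J/(kg*K)
M3S_TO_CFM = 2118.88        # cubic feet per minute in 1 m^3/s


def required_airflow_m3s(heat_load_w: float, delta_t_k: float) -> float:
    """Airflow needed to carry heat_load_w at an inlet-to-exhaust rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_k)


def relative_fan_power(flow_m3s: float, baseline_flow_m3s: float) -> float:
    """Idealized fan affinity law: fan power scales with the cube of airflow."""
    return (flow_m3s / baseline_flow_m3s) ** 3


if __name__ == "__main__":
    # Hypothetical rack densities, chosen only to show the trend.
    baseline = required_airflow_m3s(5_000, delta_t_k=12.0)
    for rack_kw in (5, 20, 40, 80):
        flow = required_airflow_m3s(rack_kw * 1000, delta_t_k=12.0)
        print(
            f"{rack_kw:>3} kW rack: {flow:5.2f} m^3/s "
            f"({flow * M3S_TO_CFM:,.0f} CFM), "
            f"fan power x{relative_fan_power(flow, baseline):,.0f} vs 5 kW baseline"
        )
```

Airflow grows linearly with heat load at a fixed temperature rise, while the idealized fan power grows with its cube, which is one way to see why adding air capacity in a confined space yields diminishing returns.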

Edge locations compound these challenges because they rarely offer the spatial flexibility available in purpose-built data center campuses. Ceiling heights that limit raised floor plenum depth, building structural constraints that prevent heavy supplementary cooling equipment installation, and limited outdoor space for condenser units and cooling towers all restrict the options available to operators attempting to retrofit air cooling capacity for AI workloads. A significant proportion of edge facilities deployed before the current AI infrastructure wave lack the structural and electrical characteristics needed to support liquid cooling infrastructure using conventional retrofit approaches, creating a design challenge that requires purpose-built solutions rather than incremental upgrades to existing air systems.

Immersion Cooling Architecture and Its Suitability at Smaller Scale

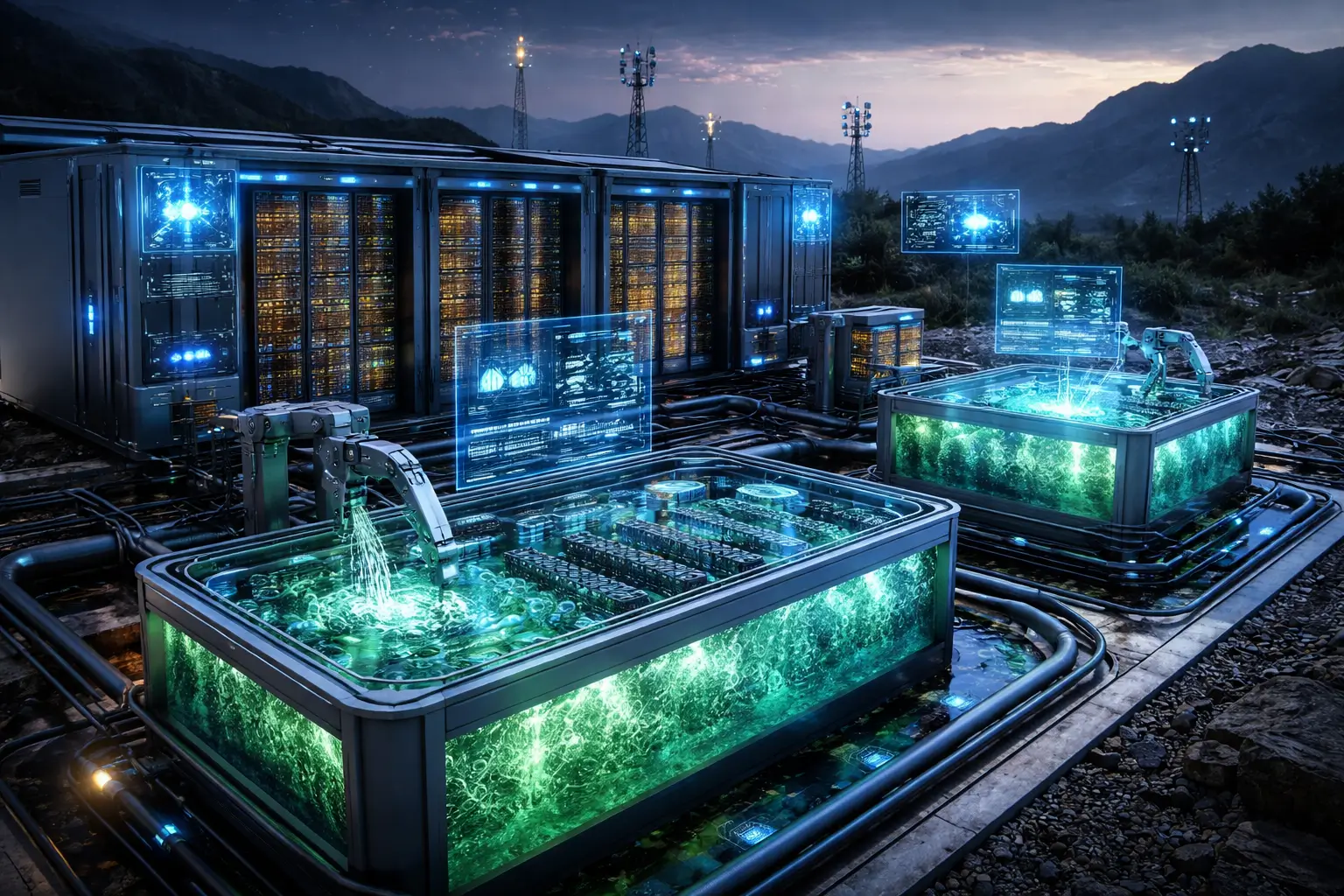

Single-phase immersion cooling systems submerge server hardware directly in dielectric fluid, transferring heat from components to the fluid and then to a facility water circuit through a heat exchanger. Dielectric fluids exceed air in both thermal conductivity and volumetric heat capacity by a substantial margin, allowing heat removal from GPU surfaces at far higher rates without the airflow volumes that conventional cooling requires. The absence of fans within immersed servers eliminates a significant source of mechanical noise and failure, and the sealed nature of immersion tanks provides inherent protection against dust and contaminants that proves particularly valuable in industrial edge environments.
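The advantage of liquid over air can be illustrated by comparing how much heat a unit volume of each coolant absorbs per degree of temperature rise. The sketch below is a minimal comparison under stated assumptions: the `Coolant` class is a hypothetical helper, and the fluid density and specific heat are generic placeholder values for a hydrocarbon-type dielectric fluid, not data for any vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density_kg_m3: float
    cp_j_kg_k: float

    @property
    def volumetric_heat_capacity(self) -> float:
        """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
        return self.density_kg_m3 * self.cp_j_kg_k


def flow_l_per_min(coolant: Coolant, heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric flow needed to carry heat_load_w at a coolant rise of delta_t_k."""
    m3_per_s = heat_load_w / (coolant.volumetric_heat_capacity * delta_t_k)
    return m3_per_s * 1000.0 * 60.0  # m^3/s -> litres per minute


# Assumed, illustrative property values.
AIR = Coolant("air", density_kg_m3=1.2, cp_j_kg_k=1005.0)
FLUID = Coolant("generic dielectric fluid (assumed)", density_kg_m3=800.0, cp_j_kg_k=2100.0)

if __name__ == "__main__":
    heat_load_w = 40_000.0  # hypothetical 40 kW tank or rack
    for coolant in (AIR, FLUID):
        flow = flow_l_per_min(coolant, heat_load_w, delta_t_k=10.0)
        print(f"{coolant.name}: {flow:,.0f} L/min for a 10 K rise")
    ratio = FLUID.volumetric_heat_capacity / AIR.volumetric_heat_capacity
    print(f"Volumetric heat capacity ratio (fluid/air): {ratio:,.0f}")
```

Under these assumed properties the fluid carries on the order of a thousand times more heat per unit volume than air, which is why a modest pumped flow can replace very large airflow volumes.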

Two-phase immersion systems operate on a different principle, using fluids with low boiling points that vaporize at component surfaces and condense on cooled coils within the tank. The phase change transfers heat at very high rates with minimal temperature differential between the fluid and the component surface, which allows two-phase tanks to support rack densities beyond what single-phase systems can manage while requiring no active cooling infrastructure beyond the condenser circuit. Vendors including Submer, ZutaCore, and Asperitas are adapting both single-phase and two-phase systems for edge deployment, with tank designs that prioritize compact form factors, simplified fluid management, and integration with facility water circuits that may operate at higher temperatures than those available in purpose-built hyperscale environments.
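The difference between the two approaches can be sketched by comparing heat carried per kilogram of fluid: two-phase systems rely on latent heat of vaporization, while single-phase systems rely on sensible heating across a temperature rise. The latent heat, specific heat, and temperature rise below are order-of-magnitude placeholder assumptions, not properties of any specific commercial fluid.

```python
# Assumed, order-of-magnitude fluid properties (illustrative only).
LATENT_HEAT_J_KG = 100_000.0   # latent heat of vaporization, two-phase fluid
SINGLE_PHASE_CP_J_KG_K = 2100.0
SINGLE_PHASE_DELTA_T_K = 10.0


def vapor_mass_rate_kg_s(heat_load_w: float, latent_heat_j_kg: float) -> float:
    """Mass of fluid that must boil off per second to absorb heat_load_w."""
    return heat_load_w / latent_heat_j_kg


def sensible_mass_rate_kg_s(heat_load_w: float, cp_j_kg_k: float, delta_t_k: float) -> float:
    """Mass flow needed to absorb heat_load_w by warming the fluid by delta_t_k."""
    return heat_load_w / (cp_j_kg_k * delta_t_k)


if __name__ == "__main__":
    heat_load_w = 50_000.0  # hypothetical 50 kW tank
    boiling = vapor_mass_rate_kg_s(heat_load_w, LATENT_HEAT_J_KG)
    pumped = sensible_mass_rate_kg_s(
        heat_load_w, SINGLE_PHASE_CP_J_KG_K, SINGLE_PHASE_DELTA_T_K
    )
    print(f"Two-phase: {boiling:.2f} kg/s vaporized at near-constant temperature")
    print(f"Single-phase: {pumped:.2f} kg/s circulated with a 10 K rise")
```

The point of the comparison is that boiling absorbs heat without the fluid temperature rising, so component surfaces stay close to the fluid's boiling point even as load increases.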

Economic and Spatial Considerations for Edge Immersion Deployments

The capital cost of immersion cooling systems has historically deterred adoption outside hyperscale environments, where the scale of deployment justifies the upfront investment in tanks, fluid management systems, and facility modifications. At the edge, however, the cost comparison shifts when operators consider the full infrastructure picture. Air cooling systems capable of supporting high-density AI deployments in constrained edge environments require supplementary cooling units, dedicated power circuits, and structural modifications that collectively approach or exceed the installed cost of a purpose-built immersion system sized for equivalent thermal capacity.

Operating cost advantages further strengthen the economic case at the edge. Immersion-cooled systems eliminate fan power consumption within servers, which accounts for a meaningful portion of total server power draw at high utilization. Facility cooling infrastructure for immersion systems consumes substantially less power than equivalent-capacity air systems because heat transfer to facility water circuits operates at higher efficiency than air-to-refrigerant cycles. In edge locations where power supply is constrained or expensive, these efficiency gains translate directly into either reduced operating cost or the ability to support more compute within an existing power envelope.
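The power-envelope argument can be made concrete with a small calculation. This is a simplified sketch: it treats cooling as the only facility overhead, and the site power limit, cooling overhead fractions, and server fan fraction are hypothetical inputs chosen for illustration rather than measured values.

```python
def it_capacity_kw(site_power_kw: float, cooling_overhead_fraction: float) -> float:
    """IT load a site can support when cooling draws a fixed fraction of IT load.

    Simplification: ignores power distribution losses and other overheads.
    """
    return site_power_kw / (1.0 + cooling_overhead_fraction)


def compute_power_kw(it_load_kw: float, server_fan_fraction: float) -> float:
    """Portion of IT load that reaches compute components rather than server fans."""
    return it_load_kw * (1.0 - server_fan_fraction)


if __name__ == "__main__":
    site_power_kw = 150.0  # hypothetical fixed utility feed at an edge site

    # Assumed scenarios: (cooling overhead as fraction of IT load, server fan fraction).
    scenarios = {
        "air cooled (assumed)": (0.50, 0.10),
        "immersion (assumed)": (0.10, 0.00),
    }
    for name, (overhead, fan_fraction) in scenarios.items():
        it_kw = it_capacity_kw(site_power_kw, overhead)
        useful_kw = compute_power_kw(it_kw, fan_fraction)
        print(f"{name}: {it_kw:.1f} kW IT load, {useful_kw:.1f} kW to compute")
```

Operators can substitute their own measured overheads; the structure of the calculation shows how lower cooling overhead and the removal of server fans both increase the compute that fits inside a fixed power feed.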

Early Deployments Validating Immersion Cooling Beyond Hyperscale

Several early deployments demonstrate that immersion cooling can operate successfully in edge and near-edge environments outside the controlled conditions of hyperscale facilities. Telecommunications operators deploying AI inference infrastructure in central office locations have piloted single-phase immersion systems in facilities with limited cooling capacity, reporting stable thermal performance and reduced facility power consumption compared to supplementary air cooling approaches. Industrial AI deployments in manufacturing environments have used immersion cooling to protect GPU hardware from the dust, humidity, and temperature variation that would degrade conventional server hardware and cooling systems in those conditions.

Vendors serving the edge market are responding to demonstrated demand with product designs that address the practical constraints of non-hyperscale deployment. Compact tank formats that fit standard data center floor tiles, integrated fluid management systems that reduce the operational complexity of maintaining dielectric fluid quality, and dry-disconnect coupling systems that simplify server insertion and removal without fluid spillage have all emerged in response to edge operator requirements. The offerings from Submer, ZutaCore, and Asperitas each represent adaptations of core immersion technology for deployment contexts where hyperscale assumptions about facility infrastructure, operational staffing, and maintenance access do not apply.

Infrastructure Readiness and the Path to Edge Immersion Adoption

The primary barriers to broader immersion cooling adoption at the edge are not technical but operational and organizational. Facilities teams at edge locations typically carry deep expertise in air-cooled IT infrastructure and limited familiarity with dielectric fluid management, heat exchanger maintenance, and the modified server handling procedures that immersion systems require. Training requirements, spare parts logistics, and fluid replenishment supply chains add operational overhead that operators must account for when evaluating immersion cooling for edge deployments. These barriers are real but addressable, and they are declining as the vendor ecosystem develops managed service offerings, remote monitoring platforms, and standardized maintenance procedures that reduce the operational burden on local facilities staff.

Facility water circuit compatibility represents a technical readiness issue that affects a portion of edge deployment candidates. Immersion cooling systems transfer heat to facility water circuits, requiring those circuits to operate within temperature and flow rate parameters that support adequate heat rejection. Edge facilities that lack adequate facility water infrastructure face additional capital investment to establish compatible cooling water circuits before immersion systems can be deployed. The growing availability of dry coolers and adiabatic cooling systems that can establish facility water circuits without access to chilled water infrastructure expands the range of edge locations where immersion cooling becomes technically feasible.

Immersion cooling is on a clear growth trajectory at the edge, and the operators who begin building familiarity with the technology now will be better positioned as AI inference density continues to rise across distributed infrastructure environments.