OpenAI has agreed to pay chip startup Cerebras more than $20 billion to use servers powered by the company’s wafer-scale AI chips over the next three years, according to reporting by The Information on April 16, 2026. The deal doubles the size of an earlier arrangement signed in January, under which OpenAI committed to purchasing up to 750 megawatts of computing capacity from Cerebras for more than $10 billion. The rapid doubling of the commitment reflects how quickly OpenAI’s compute requirements are growing as it scales inference workloads across ChatGPT, enterprise APIs, and agentic AI deployments.

As part of the agreement, OpenAI will receive warrants for a minority equity stake in Cerebras tied to its spending levels. That structure aligns the two companies’ interests: OpenAI gains exposure to Cerebras’ upside should it become a public company, and it secures long-term access to an alternative chip platform at a moment when Nvidia supply constraints and geopolitical export restrictions are making compute diversification a strategic priority across the AI industry.

What Cerebras Brings to OpenAI’s Compute Stack

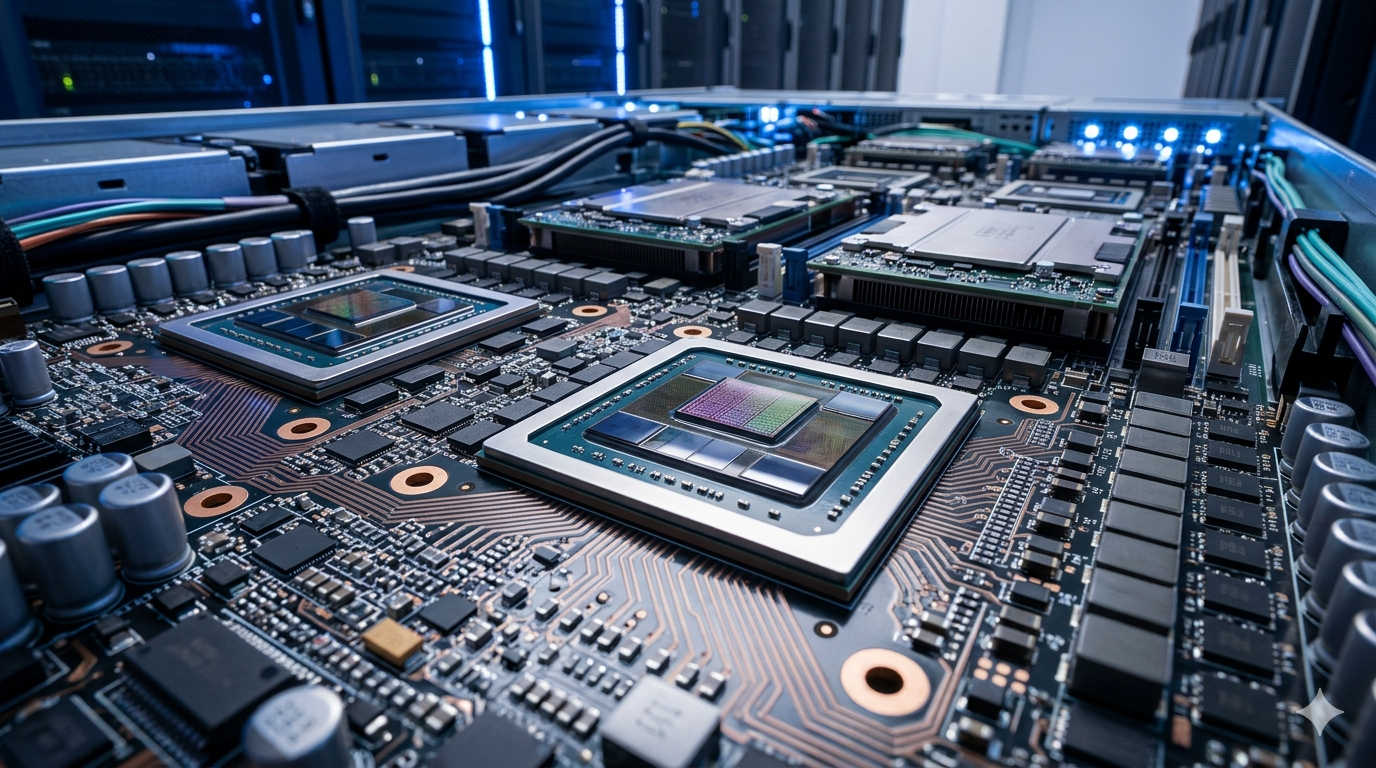

Cerebras is known for its wafer-scale engine, a chip architecture that turns an entire silicon wafer into a single processor rather than dicing it into individual chips linked by external interconnects. That architecture delivers very high memory bandwidth and low latency for specific AI workloads, particularly inference tasks where large models need to generate tokens rapidly for many concurrent users. OpenAI is processing more than 15 billion tokens per minute across its API and consumer products, so the latency and throughput profile of Cerebras hardware addresses real operational requirements; the deal is more than a move away from Nvidia for strategic optics.

Cerebras has been positioning itself as a strong alternative to Nvidia’s dominant GPUs, and the OpenAI deal significantly boosts its market standing ahead of what the company has signalled could be a near-term public offering. The company counts Meta and several sovereign AI programmes among its existing customers, giving it a diversified demand base that supports the scale of infrastructure deployment the OpenAI commitment requires.

What It Signals About the Broader Compute Diversification Race

The OpenAI-Cerebras deal is the latest in a series of moves by frontier AI labs to reduce single-vendor compute dependency. Anthropic committed $50 billion to Fluidstack for custom-built data centres. OpenAI has deepened its relationship with Oracle through the Stargate programme while also contracting with Cerebras for alternative chip infrastructure. That strategy of securing multiple compute pathways in parallel, rather than betting on a single supplier, reflects a maturation in how frontier labs think about infrastructure risk at the scale they now operate.

The deal also signals that the compute market is broadening beyond the Nvidia-hyperscaler axis that has defined AI infrastructure for the past three years. As AI inference workloads grow faster than training workloads, and as the economics of inference favour specialised low-latency hardware over raw training throughput, inference-optimised chip architectures such as Cerebras’ wafer-scale engine are finding commercial demand at a scale that did not exist during the training-dominated era.