The original neocloud proposition was simple. Hyperscalers could not build GPU capacity fast enough to meet the demand that generative AI created in 2023 and 2024. A new category of specialized operator emerged to fill that gap. These operators bought Nvidia GPUs, stood up dense compute clusters, and rented access to AI builders who could not get what they needed from AWS, Azure, or Google Cloud. The model was straightforward. The pricing was spot-based. The customers were mostly AI startups and research teams. The business logic was that the hyperscaler capacity crunch would last long enough for neoclouds to build meaningful scale before the window closed.

That window did not close. It widened. The hyperscaler capacity crunch deepened rather than resolved, and the nature of the neocloud business transformed as a result. The spot rental model that defined the sector’s early years has given way to something structurally different. Multi-year committed offtake agreements with hyperscalers and frontier model labs now anchor neocloud balance sheets. GPU-as-a-service has matured from a commodity rental product into a structured infrastructure offering with long-duration contracts, dedicated capacity pools, and customer relationships that look less like cloud subscriptions and more like industrial supply agreements. The neocloud business model will never return to what it was in 2023.

From Spot Rental to Committed Infrastructure

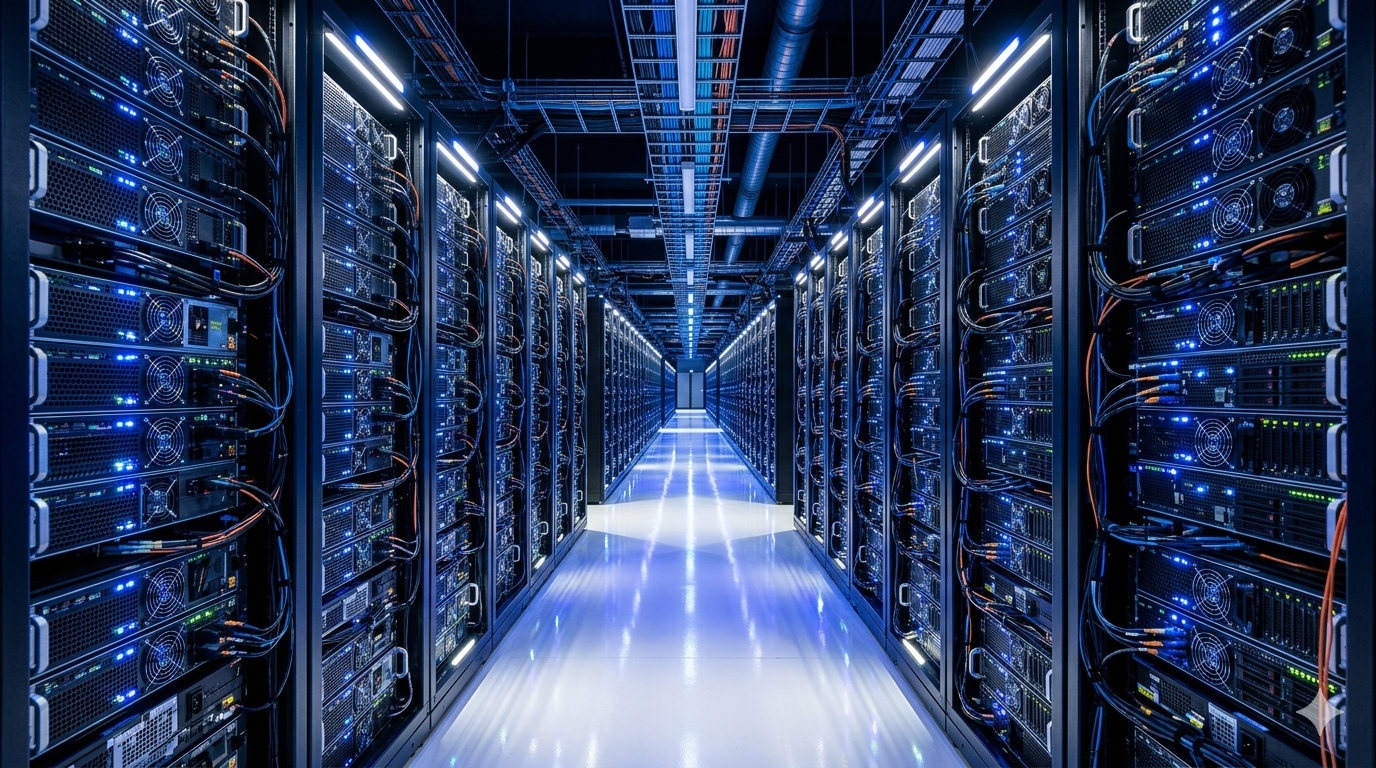

The shift from spot-based GPU rental to committed multi-year contracts did not happen by design. It happened because hyperscalers discovered that neoclouds offered something they genuinely needed. Building their own data center capacity takes three to five years from site selection to operation. A neocloud that already has power, land, and GPU clusters can provide committed capacity on timelines measured in months rather than years. For a hyperscaler whose customer demand for AI compute is growing faster than its own infrastructure can scale, that speed differential has real commercial value, and it is worth a significant premium.

Meta’s expanding commitment to CoreWeave illustrates how far this dynamic has progressed. A deal that began at fourteen billion dollars grew to more than thirty-five billion dollars through 2032. That is not a spot market transaction. It is a long-term infrastructure partnership with a commitment scale that rivals the capital programs of major industrial companies. The deal structure reflects a commercial relationship in which Meta is effectively pre-purchasing GPU capacity years in advance because the alternative, waiting for its own infrastructure to scale, would leave it unable to meet its AI development timelines.

What Committed Offtake Does to Neocloud Economics

The shift to committed offtake agreements changes neocloud economics in ways that cut in both directions simultaneously. On the revenue side, long-term contracts provide visibility that spot pricing never could. A neocloud with a multi-year hyperscaler agreement knows its utilization floor years in advance. It can finance GPU procurement against contracted revenue rather than against projected demand. That financing flexibility allows neoclouds to deploy capital more aggressively than a pure spot-market operator could justify, accelerating the capacity buildout that makes them more valuable to hyperscaler customers.

On the risk side, however, committed contracts introduce concentration risk that the spot model distributed across a wide customer base. When more than half of a neocloud’s revenue comes from one or two hyperscaler customers, the business is structurally exposed to those customers’ capacity decisions in a way that a diversified spot rental operation is not. A hyperscaler that decides to accelerate its own infrastructure buildout and reduce its neocloud dependence can damage a neocloud’s revenue base more than any individual spot customer could. As explored in our analysis of neocloud unit economics, this margin and concentration problem is the defining tension in the neocloud business model today.
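The concentration argument can be made concrete with a small calculation. The sketch below borrows the Herfindahl-Hirschman index (the sum of squared revenue shares) as one common way to score concentration, and compares two revenue books. The customer names and revenue figures are hypothetical and chosen only for illustration; they do not describe any real operator.

```python
def revenue_shares(revenue_by_customer):
    """Return each customer's share of total revenue."""
    total = sum(revenue_by_customer.values())
    return {name: rev / total for name, rev in revenue_by_customer.items()}


def hhi(revenue_by_customer):
    """Herfindahl-Hirschman index on a 0-1 scale: sum of squared revenue shares."""
    return sum(share ** 2 for share in revenue_shares(revenue_by_customer).values())


def revenue_retained(revenue_by_customer, customer, cut_fraction):
    """Fraction of total revenue left if one customer cuts its spend by cut_fraction."""
    total = sum(revenue_by_customer.values())
    lost = revenue_by_customer[customer] * cut_fraction
    return (total - lost) / total


# Hypothetical books (arbitrary revenue units), not real data.
spot_book = {f"startup_{i}": 1.0 for i in range(50)}
committed_book = {"hyperscaler_a": 60.0, "hyperscaler_b": 20.0, "other": 20.0}

for label, book in (("spot", spot_book), ("committed", committed_book)):
    largest = max(book, key=book.get)
    print(
        f"{label}: HHI {hhi(book):.2f}, "
        f"revenue retained if {largest} halves its spend: "
        f"{revenue_retained(book, largest, 0.5):.0%}"
    )
```

In this toy comparison, the largest customer halving its spend barely moves the diversified spot book, while the same decision by the anchor customer removes close to a third of the committed book's revenue.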

The Nvidia Relationship as Structural Moat

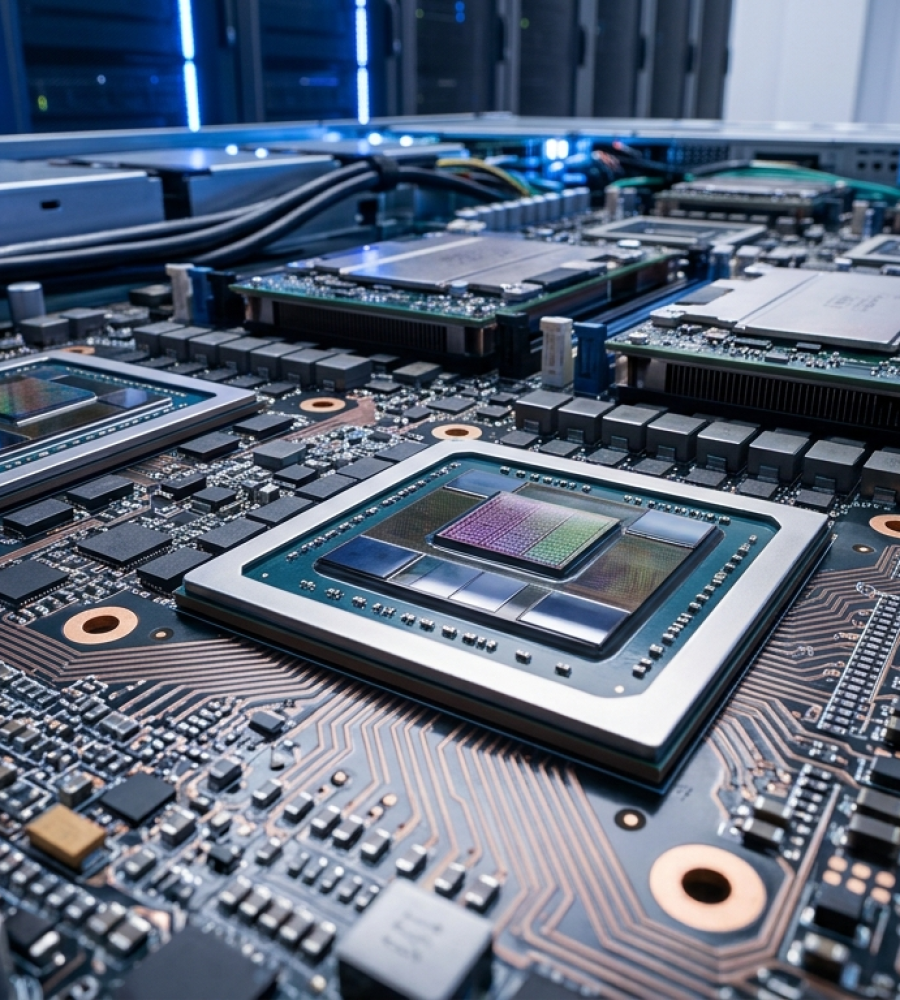

One factor that distinguishes the leading neoclouds from pure commodity operators is their relationship with Nvidia. CoreWeave’s position as an early Nvidia GPU buyer gave it preferential allocations, financing structures, and ultimately an equity relationship that functions as a competitive moat. Operators with Nvidia on their cap table or with committed supply agreements receive the latest GPU generations earlier than competitors. That early access matters enormously in a market where customers making multi-year capacity commitments want assurance that their supplier will have access to the next hardware generation, not just the current one.

The Nvidia relationship also changes how neoclouds finance themselves. GPU procurement at the scale that committed hyperscaler contracts require demands capital that most neoclouds cannot generate from operations. Nvidia’s willingness to provide or facilitate financing for GPU purchases, effectively acting as a quasi-lender to neoclouds that agree to buy its hardware, creates a capital structure that benefits both parties. Nvidia extends its market reach through neoclouds that serve customers it cannot reach directly. Neoclouds access GPU supply and financing that independent capital markets would price at significantly higher cost. The relationship is symbiotic as long as Nvidia’s hardware remains the dominant choice for AI workloads.

What Happens When Custom Silicon Scales

The Nvidia relationship that anchors the leading neoclouds today carries a structural risk that the committed contract model makes harder to manage than the spot model did. A neocloud operating on spot pricing can pivot its hardware mix relatively quickly as customer preferences change. A neocloud with multi-year contracts specifying Nvidia GPU capacity cannot. If hyperscaler custom silicon, such as Google’s TPUs, Amazon’s Trainium, or future generations of in-house AI accelerators, begins to capture meaningful share of the AI compute market, neoclouds built around Nvidia GPU commitments face a hardware obsolescence problem that their contract structures may not allow them to address on commercially viable timelines.

That risk is not immediate. Nvidia’s hardware remains the dominant choice for AI training workloads, and the performance advantages of its latest GPU generations have not been closed by custom silicon alternatives in most training contexts. However, the inference market is evolving differently. Custom silicon increasingly competes effectively with Nvidia GPUs for inference workloads, and inference is the fastest-growing segment of AI compute demand. As explored in our analysis of the rise of inference clouds, neoclouds that do not develop hardware flexibility risk finding their committed capacity pools misaligned with where AI compute demand is actually growing.

The Differentiation Problem at Scale

The original neocloud value proposition was differentiation through specialization. Neoclouds offered GPU-dense infrastructure optimized for AI workloads in ways that general-purpose hyperscaler clouds were not. That differentiation was genuine and commercially valuable in 2023 and 2024, when hyperscalers were building broad-based cloud infrastructure rather than AI-optimized compute environments. The competitive gap has been narrowing ever since.

Hyperscalers have invested heavily in AI-optimized infrastructure over the past two years. Microsoft, Google, and Amazon have all built or are building compute clusters that match or exceed neocloud performance on many AI workloads. The performance differentiation that justified neocloud pricing premiums over hyperscaler alternatives is compressing in most workload categories. Neoclouds that competed primarily on raw GPU performance are discovering that performance alone is no longer sufficient to command the premiums their business models require.

Where Real Differentiation Now Lives

The neoclouds navigating this compression most effectively are those that have shifted their differentiation from hardware performance to service architecture. Bare-metal GPU access, which gives customers direct control over hardware without hypervisor overhead, remains a genuine differentiator for customers running workloads where that control matters. Custom networking configurations optimized for specific AI training patterns, dedicated capacity pools that guarantee availability without the utilization variability of shared clouds, and operational expertise in managing GPU-dense environments at scale all represent areas where leading neoclouds maintain meaningful advantages over hyperscaler alternatives.

According to McKinsey’s analysis of the neocloud sector, the neoclouds most likely to survive the competitive compression are those that use hyperscaler contracts not as their primary business but as the revenue foundation that funds differentiated service development. A neocloud that relies exclusively on hyperscaler offtake for its economics is essentially a contract manufacturer of GPU capacity. That is a viable business, but it is not a defensible one. The operators building durable positions are those using hyperscaler contract revenue to fund the software, service, and operational capabilities that make them valuable to customers the hyperscalers cannot serve as well.

The Capital Structure That Makes It All Possible

The GPU-as-a-service model at scale requires a capital structure that the early neocloud operators were not built to support. Buying tens of thousands of high-end GPUs, standing up the infrastructure to operate them, and committing to multi-year capacity delivery requires billions of dollars of capital deployed years before the revenue that justifies it arrives. Neoclouds have financed this buildout through a combination of GPU-collateralized debt, equity from infrastructure funds and strategic investors, and in some cases direct financing from Nvidia tied to GPU purchase commitments.

The capital structure that results is not like any previous technology company model. It is closer to an industrial infrastructure operator than a software business. GPU-collateralized debt structures function similarly to asset-backed securities in traditional infrastructure finance. The GPUs are the underlying assets, the hyperscaler contracts are the revenue stream, and the debt is serviced against that contracted cash flow. For investors familiar with infrastructure finance, this model is legible. For investors accustomed to evaluating software companies on revenue multiples, it requires a different analytical framework entirely.
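The phrase "serviced against that contracted cash flow" can be illustrated with the debt service coverage ratio (DSCR) that infrastructure lenders commonly track. The sketch below is a simplified model under stated assumptions: a level-payment loan, fixed operating costs, and revenue that scales with utilization. Every figure is hypothetical and does not come from any operator's filings.

```python
def level_annual_payment(principal, annual_rate, years):
    """Fixed yearly payment that fully amortizes a loan (standard annuity formula)."""
    if annual_rate == 0:
        return principal / years
    return principal * annual_rate / (1 - (1 + annual_rate) ** -years)


def dscr(contracted_revenue, utilization, fixed_opex, debt_service):
    """Debt service coverage ratio: cash available for debt service / debt service."""
    cash_available = contracted_revenue * utilization - fixed_opex
    return cash_available / debt_service


# All figures are hypothetical, in millions of dollars.
payment = level_annual_payment(principal=2000, annual_rate=0.10, years=5)

for utilization in (1.0, 0.9, 0.8):
    ratio = dscr(
        contracted_revenue=1000,
        utilization=utilization,
        fixed_opex=400,
        debt_service=payment,
    )
    print(f"utilization {utilization:.0%}: DSCR {ratio:.2f}")
```

With these assumed inputs, coverage sits above 1.0 at full contracted utilization and falls below 1.0 once utilization slips to 90 percent, which is why the contracted revenue stream matters so much to this kind of financing.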

The Leverage Risk That Cannot Be Ignored

The capital intensity of the committed GPUaaS model creates leverage ratios that carry genuine financial risk if the contracted revenue does not materialize as expected. CoreWeave’s balance sheet, with total liabilities significantly exceeding equity, reflects a financing structure that works efficiently when contracted utilization is high and hardware retains its value. It becomes fragile quickly if hyperscaler customers reduce their capacity commitments, if GPU prices decline faster than expected, or if hardware obsolescence accelerates beyond what depreciation assumptions model.

None of these risks is hypothetical. Hyperscaler capacity planning is notoriously variable. GPU prices have historically declined as manufacturing scales. Hardware generations have been accelerating. A neocloud that financed its GPU fleet against contracted revenue at prices and depreciation assumptions calibrated to current market conditions faces real balance sheet risk if those conditions shift meaningfully before the contracts expire. The leverage works until it does not, and the operators who manage that risk most carefully will be the ones who define what the neocloud sector looks like after the current buildout cycle runs its course.
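The sensitivity to depreciation assumptions can be sketched as a loan-to-value calculation. The model below assumes a fleet whose resale value declines by a fixed percentage each year and a loan repaid in equal principal installments. Both assumptions, and all the numbers, are hypothetical simplifications rather than a description of any actual financing.

```python
def collateral_value(purchase_price, annual_decline, year):
    """Hardware value after `year` years of geometric decline."""
    return purchase_price * (1 - annual_decline) ** year


def loan_balance(initial_loan, term_years, year):
    """Outstanding principal under equal (straight-line) principal repayments."""
    remaining_years = max(term_years - year, 0)
    return initial_loan * remaining_years / term_years


def loan_to_value(purchase_price, initial_loan, term_years, annual_decline, year):
    """Outstanding loan divided by the current value of the GPUs securing it."""
    balance = loan_balance(initial_loan, term_years, year)
    value = collateral_value(purchase_price, annual_decline, year)
    return balance / value


# Hypothetical fleet: purchase price 100, financed 80 percent over 5 years.
for annual_decline in (0.25, 0.45):
    path = [
        round(loan_to_value(100, 80, 5, annual_decline, year), 2)
        for year in range(5)
    ]
    print(f"value decline {annual_decline:.0%} per year, LTV by year: {path}")
```

In this toy setup the loan stays below the value of the hardware throughout under the slower decline, but exceeds it from the first year under the faster one, even though the loan is being repaid on schedule.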

What the Next Phase of GPUaaS Looks Like

The GPU-as-a-service model is not finished evolving. The committed contract phase that currently defines the sector will give way to a third phase as the market matures and competition intensifies. The shape of that third phase is visible in the strategies that leading neoclouds are already pursuing. Software layers that add value above the bare hardware, inference-optimized capacity pools that serve the fastest-growing AI workload segment, geographic diversification into markets where hyperscalers have less established infrastructure, and service offerings that address enterprise customers who need AI compute without the complexity of managing their own GPU clusters all represent directions in which differentiated neoclouds are moving.

The neoclouds that survive and thrive in the next phase will be those that used the committed contract windfall of 2025 and 2026 to build capabilities that extend beyond hardware provision. A neocloud that exits this period with a strong balance sheet, diversified customer base, differentiated service architecture, and operational expertise in GPU-dense environments will be positioned to compete in an AI compute market that looks nothing like the one that created the neocloud category in the first place.

The Geographic Dimension of GPUaaS Competition

The committed GPUaaS model is playing out differently across geographies in ways that will shape the competitive landscape of the neocloud sector over the next several years. North America dominates current neocloud capacity because the hyperscaler relationships that anchor committed contract revenue are primarily North American in origin. However, demand for AI compute is growing rapidly in Europe, Asia Pacific, and the Middle East, and the regulatory and sovereignty requirements of those markets create opportunities for neoclouds willing to build capacity outside the North American core.

European data sovereignty regulations require that certain categories of AI workloads process data within European territory. Hyperscalers serve this requirement through their European cloud regions, but their AI compute capacity in Europe lags their North American buildout significantly. A neocloud with European GPU capacity and compliance capabilities can address a real gap that hyperscaler alternatives cannot fully close on near-term timelines. Sovereignty adds a dimension to European neocloud competition that pure performance or price differentiation does not capture.

The Enterprise Market as the Next Growth Vector

The hyperscaler offtake model that currently dominates neocloud revenue is a high-volume, low-margin business at its core. Hyperscalers negotiate hard on price, demand contractual flexibility, and have the scale to move significant capacity between suppliers if pricing or performance advantages shift. Enterprise customers operate differently. They are willing to pay premium prices for dedicated capacity, guaranteed availability, and the operational support that allows them to run AI workloads without building internal GPU infrastructure expertise.

The enterprise GPUaaS market is substantially less developed than the hyperscaler offtake market. Most enterprises that want to run AI workloads at scale today use hyperscaler cloud AI services or manage their own on-premise hardware. The neocloud offering sits between these two options and potentially addresses limitations of both. It provides the performance and control advantages of dedicated hardware without the capital and operational complexity of on-premise ownership. It also avoids the utilization variability and pricing opacity of shared hyperscaler cloud environments. Developing the enterprise market requires different capabilities from those that win hyperscaler contracts, including procurement processes, support organizations, compliance documentation, and service level agreements that hyperscaler relationships do not demand.

The Competitive Endgame for the Neocloud Sector

The structural forces shaping the GPUaaS market point toward a sector consolidation that will leave a smaller number of larger, more differentiated operators defining what neoclouds are in 2028 and beyond. The capital requirements of the committed contract model favor scale. Operators with larger capacity pools can offer hyperscaler customers the volume commitments they want, finance GPU procurement more efficiently, and spread the overhead of building differentiated service layers across a larger revenue base. Scale advantages compound in ways that make the gap between leading neoclouds and smaller operators wider over time rather than narrower.

At the same time, the differentiation pressure is real. A sector that competes purely on GPU rental price will see margins compress toward commodity levels as hyperscaler custom silicon adoption reduces the premium that Nvidia GPU performance commands and as more operators enter the market with similar hardware profiles. The neoclouds that avoid that fate are those that build service, software, and operational differentiation that creates genuine switching costs for their customers. When a customer’s AI training workflows are optimized for a specific neocloud’s networking architecture, when their engineering team has built internal tooling against a specific neocloud’s API surface, and when their operations team has developed institutional knowledge of a specific neocloud’s performance characteristics, switching costs accumulate in ways that protect the neocloud’s competitive position even as hardware commoditizes.

Why the Sector Cannot Afford to Stand Still

The neocloud sector is in a window where the decisions being made today about capacity, differentiation, and capital allocation will determine competitive positions that persist for years. Operators that use the current period of strong hyperscaler demand and available capital to build only GPU capacity are making an implicit bet that the spot and committed rental model will remain commercially viable indefinitely. That bet is unlikely to pay off. The hyperscalers will close the infrastructure gap. Custom silicon will reshape hardware economics. Margins will compress. The operators that emerge from this period with durable competitive positions will be those that used the windfall to build something that outlasts the current demand cycle.

The GPU-as-a-service model has grown up. It is no longer a simple rental product serving overflow demand from capacity-constrained hyperscalers. It is a structured infrastructure offering with committed contracts, complex capital structures, competitive differentiation requirements, and a customer base that extends from frontier model labs to enterprise AI teams. The neoclouds that understand this transformation and build their organizations accordingly will define what the next phase of AI compute infrastructure looks like. Those that remain oriented toward the model that created the category will find themselves competing for a diminishing share of a market that has moved past them.