Modular data centers emerged to accelerate infrastructure deployment by shifting significant portions of construction into controlled manufacturing environments. Prefabricated modules allow operators to integrate power systems, cooling assemblies, and IT racks before delivery to the site, dramatically reducing build timelines. Engineering attention naturally gravitates toward high-impact subsystems such as electrical distribution, thermal performance, and compute density because those components directly influence capacity and operational efficiency.

Factory integration of modular units also shapes cabling discipline across the infrastructure stack. Rack systems often arrive pre-wired to meet basic operational requirements, allowing modules to be commissioned quickly once power and network uplinks are connected. Installation teams working under compressed schedules rarely reconfigure these internal cable arrangements, even when pathway constraints appear after module placement. Preconfigured cable bundles do not always align with the pathway infrastructure available at the deployment site, which can require additional routing adjustments during final module integration.

Rapid expansion cycles in modular environments also reshape how infrastructure teams allocate design resources. New modules often appear as capacity increments that replicate previously deployed units rather than as entirely new facilities. Operators often assume that earlier cabling decisions will scale effectively across additional modules because compute and cooling designs remain consistent. This assumption ignores how cable pathways interact with site topology, module placement orientation, and interconnection density across clusters. Routing strategies that function adequately within one module may become inefficient or physically constrained when additional modules connect through centralized switching zones. Engineers sometimes identify pathway capacity or routing constraints only after installation begins, because early planning tends to focus more heavily on electrical and cooling infrastructure than on detailed telecommunications pathway modeling. Consequently, the physical layer evolves incrementally through field adjustments rather than through structured architectural planning.

Documentation practices within modular deployments further reinforce the tendency to treat cabling as a secondary consideration. Infrastructure drawings typically focus on electrical distribution, cooling loops, and equipment placement because those systems require formal engineering approvals. Cabling diagrams sometimes remain schematic representations rather than precise pathway layouts that specify routes and separation rules. Operations teams later inherit facilities where cable routes lack consistent labeling or traceability across modules and network zones. Troubleshooting must then rely on manual tracing of connections rather than on structured documentation. Limited visibility into physical network paths complicates maintenance planning and increases the likelihood of accidental disruptions during upgrades. Over time, the absence of disciplined documentation erodes the operational clarity that modular infrastructure aims to deliver.
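To make the traceability gap concrete, the sketch below models a cable record as a small Python dataclass and flags records missing the fields a technician would need to trace a connection without manual inspection. The schema (`CableRecord`, `a_end`, `b_end`, `pathway_segments`) is hypothetical and not taken from any particular DCIM product; it only illustrates the kind of completeness check a documentation framework could enforce.

```python
from dataclasses import dataclass, field


@dataclass
class CableRecord:
    # Hypothetical schema: one record per installed cable.
    cable_id: str
    a_end: str = ""  # rack/panel/port at one end, empty if undocumented
    b_end: str = ""  # rack/panel/port at the other end
    pathway_segments: list[str] = field(default_factory=list)  # trays, risers, conduits


def find_untraceable(records: list[CableRecord]) -> dict[str, list[str]]:
    """Return cable_id -> names of missing fields, for incomplete records only."""
    problems: dict[str, list[str]] = {}
    for rec in records:
        missing = []
        if not rec.a_end:
            missing.append("a_end")
        if not rec.b_end:
            missing.append("b_end")
        if not rec.pathway_segments:
            missing.append("pathway_segments")
        if missing:
            problems[rec.cable_id] = missing
    return problems
```

Running a check like this against each module's records before handover would surface cables documented only at one end, or with no recorded route, while the installers are still on site.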

Structured Pathways vs Ad-Hoc Routing: The Reliability Divide

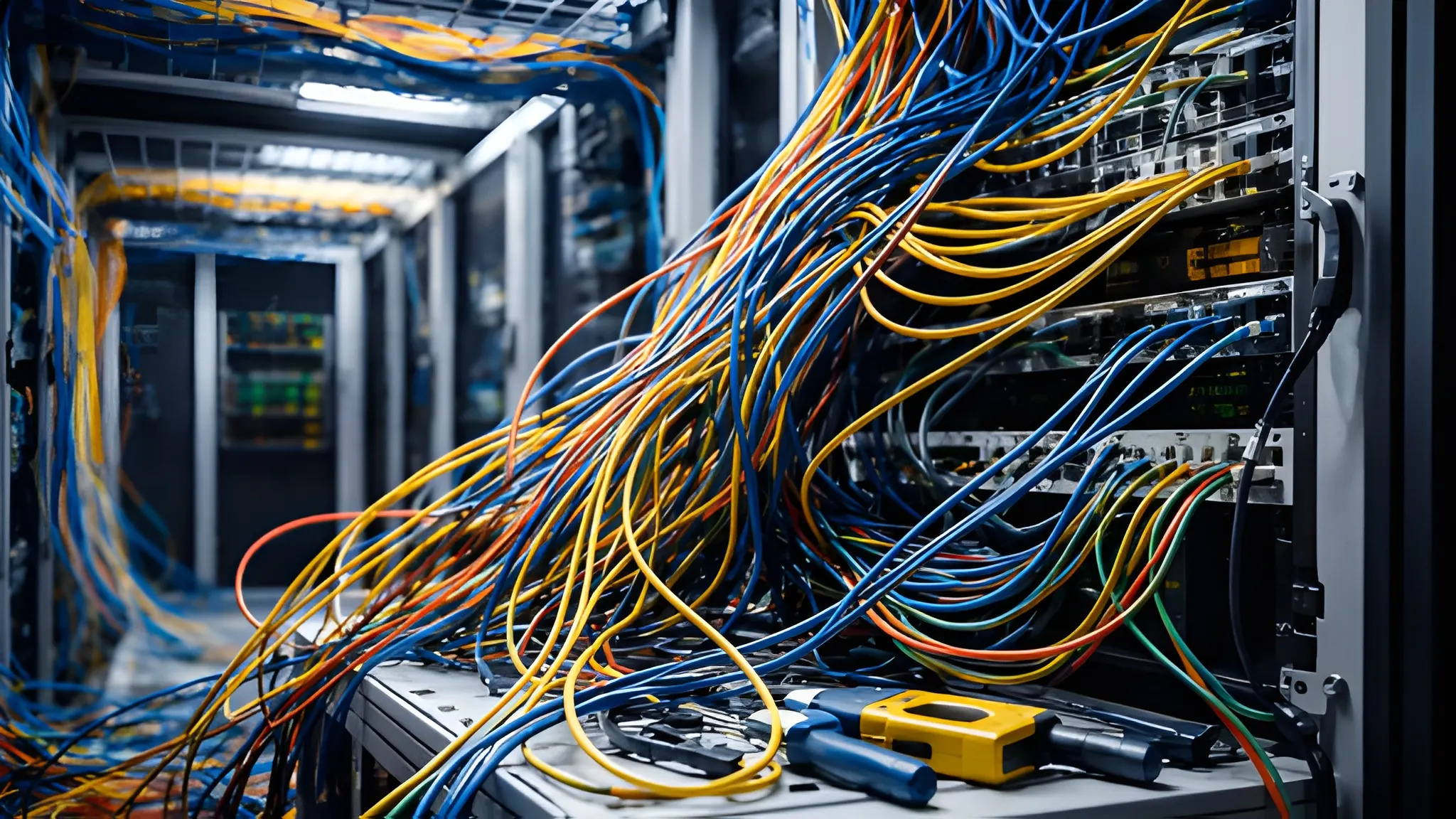

Structured cable pathway systems represent one of the most important elements of reliable data center infrastructure design. Overhead ladder racks, cable trays, and segmented routing corridors provide predictable routes for power and network connectivity across the facility. These systems maintain consistent separation between cable types while ensuring adequate space for airflow and service access around racks. Infrastructure planners use pathway capacity models to determine how many cables each segment can support without creating congestion or mechanical stress. Properly engineered pathways also enforce routing discipline by guiding technicians toward predefined installation routes. Facilities that implement these systems during early design stages often achieve cleaner installations and more predictable maintenance workflows.
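A pathway capacity model can start from simple geometry: the summed cross-sectional area of the cables divided by the tray's usable cross-section. The sketch below assumes round cables and a planning fill limit of 50%; that limit is an assumption for illustration, and the actual value should come from the standard or design basis the facility follows.

```python
import math


def tray_fill_ratio(cable_diameters_mm: list[float], width_mm: float, usable_depth_mm: float) -> float:
    """Fraction of the tray's usable cross-section occupied by round cables."""
    cable_area = sum(math.pi * (d / 2) ** 2 for d in cable_diameters_mm)
    return cable_area / (width_mm * usable_depth_mm)


def remaining_capacity(
    cable_od_mm: float,
    existing_diameters_mm: list[float],
    width_mm: float,
    usable_depth_mm: float,
    max_fill: float = 0.5,  # assumed planning limit, not a quoted standard value
) -> int:
    """How many more cables of one diameter fit before max_fill is exceeded."""
    limit = max_fill * width_mm * usable_depth_mm
    used = sum(math.pi * (d / 2) ** 2 for d in existing_diameters_mm)
    per_cable = math.pi * (cable_od_mm / 2) ** 2
    return max(0, math.floor((limit - used) / per_cable))
```

For a hypothetical 300 mm by 100 mm tray already holding 200 cables of 6 mm diameter, `remaining_capacity(6.0, [6.0] * 200, 300, 100)` returns 330, about 19% fill used against the assumed 50% ceiling. Real models also account for weight loading and cable packing, which this area-only sketch ignores.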

Ad-hoc routing practices appear when structured pathways cannot accommodate installation demands or when planning omissions leave connectivity gaps. Technicians working under time pressure may route cables directly between racks using available structural supports or temporary ties. These improvised connections may not consistently follow structured cabling guidelines such as recommended bend radius limits and pathway spacing defined in telecommunications infrastructure standards. Network bundles can accumulate along rack frames or adjacent infrastructure components when structured pathways lack sufficient capacity or clear routing segmentation. This form of routing complicates airflow management because cable clusters obstruct intake and exhaust paths around high-density equipment. Maintenance personnel also face difficulties navigating dense cable bundles when replacing switches or servers. The operational burden created by these conditions grows steadily as infrastructure density increases.
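Bend radius limits are usually expressed as a multiple of the cable's outside diameter, which makes them easy to check during an audit. The multipliers below (4x for unshielded twisted pair, 10x for fiber, both under no tensile load) are commonly cited rules of thumb used here as assumptions; manufacturer datasheets and the governing standard take precedence, and limits during pulling are typically larger.

```python
# Assumed static (no-load) multipliers of cable outside diameter.
# Replace with values from the cable datasheets and applicable standard.
BEND_MULTIPLIER = {"utp": 4.0, "fiber": 10.0}


def min_bend_radius_mm(cable_type: str, od_mm: float) -> float:
    """Minimum allowed bend radius for a cable of this type and diameter."""
    return BEND_MULTIPLIER[cable_type] * od_mm


def bend_violations(bends: list[tuple[str, str, float, float]]) -> list[str]:
    """bends: (cable_id, cable_type, od_mm, measured_radius_mm) from a field survey.

    Returns the IDs of cables bent tighter than their minimum radius.
    """
    return [
        cable_id
        for cable_id, cable_type, od_mm, radius_mm in bends
        if radius_mm < min_bend_radius_mm(cable_type, od_mm)
    ]
```

Improvised routes around rack frames are where measured radii most often fall below these thresholds, so a survey list from those locations is a reasonable input.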

Structured pathways support predictable airflow behavior throughout modular facilities where cooling efficiency remains tightly coupled with rack layout. Air containment systems depend on unobstructed circulation paths to maintain consistent thermal conditions across server rows. Excess cable bundles suspended across racks or cooling plenums can alter airflow patterns in subtle but measurable ways. Engineers often detect localized temperature variations in zones where cabling obstructs air intake corridors or return ducts. Thermal monitoring tools may identify hotspots that originate not from compute loads but from physical airflow disruption created by poorly routed cables. These conditions force cooling systems to operate at higher output levels to compensate for reduced circulation efficiency. Facilities that maintain disciplined cable routing typically achieve more stable thermal performance under similar workload conditions.

Maintenance accessibility represents another dividing line between structured and improvised cabling approaches. Organized pathway systems allow technicians to identify and isolate specific cable groups without disturbing adjacent connections. Clear routing segmentation also enables systematic labeling practices that map cable identifiers directly to pathway segments and rack ports. When service teams perform hardware replacements, they can access relevant cable bundles quickly without navigating dense or tangled routing paths. Improvised cabling environments rarely provide that level of clarity because cable routes overlap unpredictably across racks and infrastructure components. Troubleshooting activities therefore require more time and careful manipulation of surrounding connections. These operational delays translate directly into longer maintenance windows and increased service risk.
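Labeling only helps if identifiers are machine-checkable and map unambiguously to locations. The sketch below assumes a hypothetical convention of `<module>-<rack>-<panel>:<port>` (for example `M02-R14-PP1:24`); the format itself is illustrative, and the point is that a single parser and generator keep labels consistent across modules.

```python
import re
from typing import NamedTuple

# Hypothetical convention: <module>-<rack>-<panel>:<port>, e.g. "M02-R14-PP1:24".
LABEL_RE = re.compile(r"(M\d{2})-(R\d{2})-(PP\d+):(\d{1,3})")


class PortLabel(NamedTuple):
    module: str
    rack: str
    panel: str
    port: int


def parse_label(label: str) -> PortLabel:
    """Split a label into its location fields, rejecting non-conforming labels."""
    match = LABEL_RE.fullmatch(label)
    if match is None:
        raise ValueError(f"label does not follow convention: {label!r}")
    module, rack, panel, port = match.groups()
    return PortLabel(module, rack, panel, int(port))


def make_label(module: int, rack: int, panel: int, port: int) -> str:
    """Generate a label in the same convention, zero-padding module and rack."""
    return f"M{module:02d}-R{rack:02d}-PP{panel}:{port}"
```

Generating labels with `make_label` and validating field entries with `parse_label` means a typo such as `rack14-port24` is rejected at entry time instead of surfacing during an outage.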

Redundancy at the Physical Layer: When Network Resilience Breaks Down

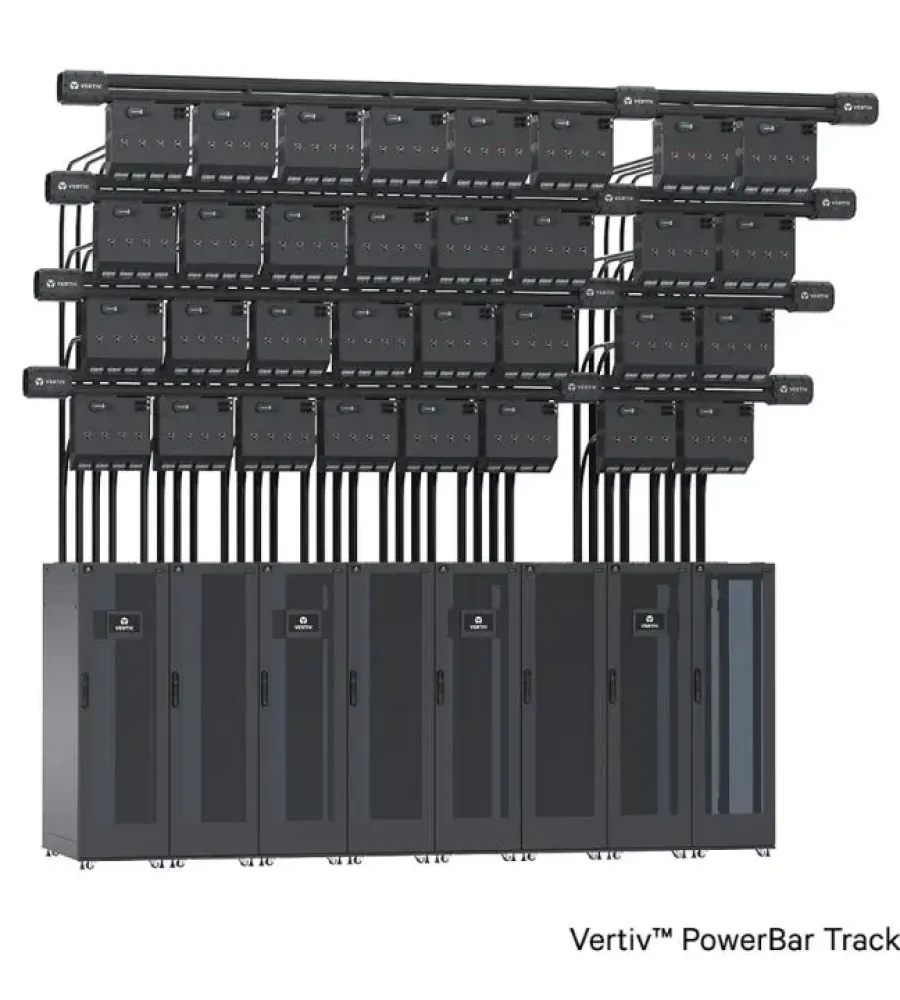

Network architects frequently design redundant connectivity paths to maintain service availability during equipment failures. Logical network redundancy typically involves dual switches, multiple network fabrics, and failover routing protocols that redirect traffic when a link fails. These logical designs assume that physical connections supporting redundant links remain independent across the infrastructure environment. In modular deployments the physical layer sometimes fails to maintain that independence because routing constraints push multiple links through shared cable pathways. A redundant network architecture loses much of its protective value when primary and secondary connections share the same physical route. Damage or disruption along that shared path can simultaneously disable both links and defeat the intended resilience strategy. This disconnect between logical planning and physical implementation represents a persistent reliability risk in high-density infrastructure environments.
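If each link's route is recorded as the list of pathway segments it traverses, the shared-path problem reduces to a set intersection between the two legs of a redundant pair. The segment names below are hypothetical placeholders.

```python
def shared_segments(primary_route: list[str], secondary_route: list[str]) -> set[str]:
    """Pathway segments (trays, risers, conduits) carried by both legs of a pair."""
    return set(primary_route) & set(secondary_route)


def check_pairs(pairs: dict[str, tuple[list[str], list[str]]]) -> dict[str, set[str]]:
    """Return pair name -> shared segments, listing only pairs that overlap."""
    report: dict[str, set[str]] = {}
    for name, (primary, secondary) in pairs.items():
        overlap = shared_segments(primary, secondary)
        if overlap:
            report[name] = overlap
    return report
```

For example, an uplink pair routed as `["M2-T1", "COND-3", "NR-T1"]` and `["M2-T2", "COND-3", "NR-T2"]` would be reported with `{"COND-3"}`: logically redundant, physically exposed to a single conduit.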

Cabling infrastructure must therefore mirror the redundancy philosophy embedded within network topology designs. Separate pathway corridors should carry primary and secondary connections to ensure that a single mechanical event cannot compromise both links. Physical separation can involve distinct overhead trays, independent vertical risers, or physically separated routes within the facility layout. Engineers sometimes refer to this concept as path diversity because it ensures that redundant connections do not share environmental vulnerabilities. Modular facilities can complicate pathway separation because compact module footprints provide less physical routing space than large traditional data halls. Designers sometimes use shared cable trays within modules when pathway capacity must accommodate high cable density within limited structural space. Although these decisions may simplify installation, they weaken the reliability assumptions built into the network architecture.
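Path diversity can be checked against a graph of the pathway infrastructure, with junctions as nodes and tray or riser segments as edges. The sketch below takes an existing primary route and runs a breadth-first search for a secondary route that shares none of its intermediate junctions or segments. This greedy approach is a simplification: fixing the primary first can miss a disjoint pair that does exist, and algorithms such as Suurballe's solve the joint problem properly. Node names are hypothetical.

```python
from collections import deque
from typing import Optional


def diverse_route(graph: dict[str, list[str]], primary: list[str]) -> Optional[list[str]]:
    """BFS for a route between the primary's endpoints that reuses no
    intermediate junction and no segment of the primary route."""
    src, dst = primary[0], primary[-1]
    blocked_nodes = set(primary[1:-1])
    blocked_edges = {frozenset(edge) for edge in zip(primary, primary[1:])}
    prev: dict[str, Optional[str]] = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            # Walk the predecessor chain back to the source.
            path = []
            cur: Optional[str] = node
            while cur is not None:
                path.append(cur)
                cur = prev[cur]
            return path[::-1]
        for nxt in graph.get(node, []):
            if nxt in prev or nxt in blocked_nodes:
                continue
            if frozenset((node, nxt)) in blocked_edges:
                continue
            prev[nxt] = node
            queue.append(nxt)
    return None  # no fully diverse route exists in this graph
```

A `None` result during design review is the useful signal: it means the module's footprint or tray layout cannot physically support the redundancy the network design assumes.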

Risk exposure increases further when modules connect through centralized switching or interconnection zones. High-capacity uplinks from multiple modules often converge into aggregation switches located in dedicated network rooms or meet-me areas. Large bundles of redundant cables may enter these rooms through a limited number of pathway conduits due to structural constraints in the building layout. A localized incident such as accidental cable damage or physical obstruction within that conduit can affect multiple redundancy paths simultaneously. Engineers sometimes overlook these convergence points because logical network diagrams emphasize switching topology rather than physical cable corridors. Operational resilience therefore depends on careful mapping between logical redundancy design and actual routing infrastructure across the facility.
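Convergence points can be found mechanically rather than by inspecting drawings: a pathway segment is a physical single point of failure for a module if removing it alone disconnects the module from the aggregation room. The brute-force sketch below removes each segment in turn and tests connectivity with a breadth-first search. It runs in O(E·(V+E)) time, which is adequate for facility-scale graphs; Tarjan's bridge-finding algorithm does the same in linear time. The topology is hypothetical.

```python
from collections import deque


def connected(graph: dict[str, list[str]], src: str, dst: str, removed: frozenset) -> bool:
    """True if dst is reachable from src without using the removed segment."""
    seen = {src}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return True
        for nxt in graph.get(node, []):
            if frozenset((node, nxt)) == removed or nxt in seen:
                continue
            seen.add(nxt)
            queue.append(nxt)
    return False


def choke_segments(graph: dict[str, list[str]], module: str, agg_room: str) -> list[tuple[str, ...]]:
    """Segments whose loss alone cuts every pathway from module to agg_room."""
    edges = {frozenset((a, b)) for a, nbrs in graph.items() for b in nbrs}
    return sorted(
        tuple(sorted(edge))
        for edge in edges
        if not connected(graph, module, agg_room, edge)
    )
```

In a layout where a module has two diverse trays that merge into a single conduit entering the network room, only that conduit segment is reported, which is exactly the kind of convergence point logical diagrams hide.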

Auditing practices play a crucial role in verifying that physical redundancy matches architectural intentions. Infrastructure teams typically review cable routing during operational audits or change-management reviews to confirm that redundant connections remain physically separated across trays and conduits. Documentation systems should track pathway assignments for every major connectivity link to prevent accidental consolidation during later upgrades. Modular environments often undergo incremental expansions that introduce new modules and additional connectivity requirements over time. Without documented pathway governance and routing guidelines, new cables may be installed alongside existing links within the same pathway, which can reduce the intended physical separation of redundant connections. Routine physical audits help detect such deviations before they undermine network resilience. Facilities that integrate these checks into operational procedures maintain stronger alignment between logical design and physical infrastructure.
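A physical audit is most useful when its findings are compared systematically with the documented pathway assignments. The sketch below diffs a documented route map against routes observed during an audit and sorts discrepancies into three buckets. Both inputs map a cable ID to its ordered list of pathway segments; the names and structure are assumptions for illustration.

```python
def audit_routes(
    documented: dict[str, list[str]], observed: dict[str, list[str]]
) -> dict[str, list[str]]:
    """Compare documented pathway assignments with what a physical audit found."""
    findings: dict[str, list[str]] = {"undocumented": [], "not_found": [], "rerouted": []}
    for cable_id, route in observed.items():
        if cable_id not in documented:
            findings["undocumented"].append(cable_id)  # installed but never recorded
        elif route != documented[cable_id]:
            findings["rerouted"].append(cable_id)  # route differs from the record
    for cable_id in documented:
        if cable_id not in observed:
            findings["not_found"].append(cable_id)  # recorded but not located
    return {bucket: sorted(ids) for bucket, ids in findings.items()}
```

Rerouted cables deserve particular attention, since a link moved into its partner's tray during an expansion is precisely how physical separation erodes without anyone changing the network design.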

Density Growth and the Cable Management Challenge

Modern modular data centers increasingly host compute clusters designed for artificial intelligence training, high-performance computing workloads, and dense virtualization environments. These deployments significantly raise the number of network connections per rack because GPU servers and accelerated compute nodes require multiple high-bandwidth network interfaces. Each additional interface introduces more fiber and copper cables that must travel through limited routing infrastructure within modular enclosures. Cable counts per rack can multiply rapidly when high-speed fabrics such as InfiniBand or Ethernet interconnect distributed compute architectures. Operators must accommodate these connections while maintaining airflow paths, service clearances, and safe bend radii across the infrastructure layout. Cable management therefore evolves from a simple organizational practice into a structural component of high-density infrastructure design.
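The growth in cable count is straightforward arithmetic once the per-server interface mix is known. The sketch below assumes one cable per connected port (breakout cables would change this), and the example mix of eight back-end fabric ports, two front-end ports, and one management port per server is a hypothetical illustration rather than a specific product's configuration.

```python
def cables_per_rack(servers_per_rack: int, ports_per_server: dict[str, int]) -> int:
    """Cables terminating on servers in one rack, assuming one cable per port."""
    return servers_per_rack * sum(ports_per_server.values())


def module_cable_count(racks: int, servers_per_rack: int, ports_per_server: dict[str, int]) -> int:
    """Server-facing cables across all racks in a module."""
    return racks * cables_per_rack(servers_per_rack, ports_per_server)
```

With that assumed mix, four servers per rack yield 44 cables per rack and 440 across a ten-rack module. Whether those cables stay inside the rack or enter overhead pathways depends on switch placement: a top-of-rack leaf keeps server cables local and sends only uplinks out, while end-of-row designs push every one of them into shared trays.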

Large cable volumes place measurable pressure on the physical capacity of pathway systems inside modular environments. Ladder racks and overhead trays that once carried moderate cable volumes must now support thick bundles of fiber trunks and high-count copper assemblies. Pathway capacity planning becomes more complex because cable growth rarely occurs in a single deployment stage. Infrastructure teams must anticipate future network expansions while designing pathway widths and load tolerances that remain safe over long operational cycles. Technicians sometimes discover that earlier installations consumed most of the available tray capacity when additional hardware arrives. Improvised expansions may then introduce secondary trays or hanging cable bundles that reduce structural clarity across the routing environment. These adjustments complicate cable tracing and increase the likelihood of congestion within shared pathways.
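Because growth arrives in phases, a capacity plan can project fill forward and report which planned phase would first exceed the limit. The sketch below assumes uniform round cables per phase and an assumed 50% planning fill limit; both are simplifications chosen for illustration.

```python
import math
from typing import Optional


def first_phase_over_limit(
    tray_area_mm2: float,
    existing_fill_mm2: float,
    phase_cable_counts: list[int],
    cable_od_mm: float,
    max_fill: float = 0.5,  # assumed planning limit
) -> Optional[int]:
    """Return the 1-based phase that pushes tray fill past max_fill, or None."""
    per_cable = math.pi * (cable_od_mm / 2) ** 2
    fill = existing_fill_mm2
    for phase, count in enumerate(phase_cable_counts, start=1):
        fill += count * per_cable
        if fill / tray_area_mm2 > max_fill:
            return phase
    return None
```

For a hypothetical 30,000 mm² tray already holding 6,000 mm² of cable, four phases of 100 cables at 6 mm each cross the assumed limit in phase 4. Knowing that at design time is the difference between specifying a wider tray now and hanging bundles later.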

High cable density also influences airflow management inside modular racks where thermal margins remain narrow. Server platforms designed for accelerated workloads generate concentrated heat loads that rely on uninterrupted air movement across equipment intakes. Dense cable clusters positioned near the rear of racks can interfere with exhaust airflow or obstruct return air channels within containment systems. Even small airflow disruptions may cause temperature variations that affect system reliability or cooling efficiency. Cooling equipment compensates for those variations by increasing fan speeds or chilled air output, which gradually raises operational energy consumption. Careful cable routing therefore contributes directly to maintaining predictable thermal behavior inside dense computing environments. Facilities that preserve clear airflow corridors through disciplined cable management typically experience fewer localized thermal anomalies.

Serviceability challenges emerge when dense cable bundles obscure access to networking equipment and server ports. Maintenance engineers must reach transceiver modules, patch panels, and network switches during routine upgrades or troubleshooting procedures. Thick cable bundles hanging across rack frames can restrict hand access and create physical strain during connector removal or insertion. Equipment replacement tasks may require technicians to temporarily shift surrounding cables in order to reach specific ports. Each manipulation introduces a small risk of disturbing adjacent connections that support active workloads. Operational procedures therefore emphasize organized cable grouping, color coding, and clear routing separation to maintain accessible working spaces around equipment. These practices help maintain service efficiency as infrastructure density increases across modular deployments.

Maintenance, Troubleshooting, and the Cost of Cable Disorder

Operational stability within a data center depends heavily on the ability to identify, trace, and isolate network connections during maintenance events. Structured cable installations support these activities through clear routing paths and standardized labeling systems that link each cable to documented infrastructure maps. Technicians can quickly locate specific network paths because pathway segmentation guides cables along predictable routes between racks and distribution panels. Maintenance teams rely on this clarity when isolating faulty components or performing staged hardware replacements within live environments. Troubleshooting efforts often begin with verifying physical connectivity before analyzing software or configuration issues. Reliable cable organization therefore accelerates root cause analysis across complex infrastructure environments.

Disorganized cabling environments introduce uncertainty into that troubleshooting process because connections overlap without consistent routing logic. Technicians attempting to trace a specific cable may encounter dense clusters of similar connections bundled together without clear pathway separation. Identifying the correct cable often requires careful manual tracing across racks, trays, and patch panels. Maintenance windows extend as engineers verify connections one by one to avoid disconnecting active services. This slower investigative process increases operational risk because prolonged troubleshooting periods leave systems exposed to ongoing service degradation. Clear cable discipline reduces those risks by enabling technicians to locate connections quickly through structured routing visibility.
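When connections are documented as data, tracing a circuit becomes a lookup instead of a manual walk along trays. The sketch below follows a circuit from one port across cables and through patch panels, where each panel port's front face is linked internally to its rear face. The port names and the two-map model (`links` for cables and cords, `passthrough` for panel internals) are hypothetical.

```python
def symmetric(pairs: list[tuple[str, str]]) -> dict[str, str]:
    """Build a lookup in which each end of a connection points at the other."""
    lookup: dict[str, str] = {}
    for a, b in pairs:
        lookup[a] = b
        lookup[b] = a
    return lookup


def trace(start: str, links: dict[str, str], passthrough: dict[str, str]) -> list[str]:
    """Follow a circuit from start across cables and panel pass-throughs."""
    path = [start]
    port = start
    while port in links:
        port = links[port]  # across a cable to the far-end port
        if port in path:
            raise ValueError(f"loop detected at {port}")
        path.append(port)
        if port not in passthrough:
            break  # reached a device port: end of the circuit
        port = passthrough[port]  # through the panel to its other face
        path.append(port)
    return path
```

If a trace ends on a panel face rather than a device port, the record shows an unpatched or undocumented segment, which tells the technician exactly where to look.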

Hardware upgrade cycles further highlight the operational cost of poor cable organization within modular infrastructure environments. Network switches, servers, and storage platforms undergo periodic replacement as compute technologies evolve and capacity demands increase. Upgrade procedures often involve disconnecting large numbers of cables while maintaining connectivity for surrounding systems. Structured cable pathways simplify this work because technicians can isolate cable groups that correspond to specific racks or network segments. Disorganized installations require more careful handling because cables may cross between racks or overlap across equipment zones without clear pathway separation. An accidental disconnection in such environments can interrupt multiple network connections when cable routing lacks clear separation and labeling. Careful cable organization therefore protects operational continuity during routine infrastructure upgrades.

The economic consequences of cable disorder also extend beyond maintenance efficiency. Longer troubleshooting cycles translate into extended service interruptions when network issues occur. Data center operators measure these interruptions in terms of lost application availability, delayed workloads, or degraded service performance. Industry studies frequently report that downtime costs escalate rapidly in facilities supporting digital services or enterprise workloads. Physical infrastructure discipline therefore influences financial risk as much as technical reliability. Organizations that maintain well-structured cabling environments often recover from network incidents faster because technicians can locate and resolve physical connectivity problems with far less manual tracing.

Conclusion: Treating Cabling as Critical Infrastructure, Not Supporting Hardware

Physical connectivity forms the circulatory system of a modern data center, linking compute platforms, storage systems, and network fabrics into a unified infrastructure environment. Modular facilities inherit the same requirement even though their construction model emphasizes rapid deployment and prefabrication efficiency. Infrastructure planners must therefore treat cabling architecture as a design discipline rather than a supporting installation activity. Structured pathways, documented routing plans, and clear separation between connectivity layers establish the foundation for long-term operational clarity. Facilities that embed these principles into early design stages typically achieve more predictable maintenance outcomes and fewer operational surprises. Reliable modular infrastructure depends not only on advanced compute hardware but also on disciplined physical connectivity frameworks.

Standardization plays a central role in maintaining that discipline across expanding modular environments. Cable labeling conventions, pathway segmentation rules, and installation guidelines allow technicians to replicate consistent practices across multiple modules and deployment phases. Infrastructure teams can scale operations confidently when each new module follows the same physical connectivity framework. Documentation systems must record pathway assignments and routing maps so that maintenance teams can trace connections without ambiguity. These records also support future expansions because engineers can evaluate pathway capacity and redundancy alignment before installing additional hardware. Consistent standards therefore transform cable management into a predictable operational process rather than an improvisational task.

Physical layer planning must also align closely with network architecture and infrastructure resilience strategies. Logical redundancy designs require matching pathway diversity to ensure that failover links remain independent across the facility. Engineers must evaluate cable corridors, module layouts, and interconnection zones during design reviews to verify that physical routing supports network resilience goals. Infrastructure audits can confirm that redundant connections remain separated across trays and conduits after installation. These reviews help detect pathway overlaps that could undermine failover protection in real operational scenarios. Strong alignment between logical design and physical infrastructure strengthens overall reliability within modular data center environments.

Growing compute density and accelerated deployment cycles will continue to shape how modular infrastructure evolves over the coming decade. Network connectivity volumes will rise as artificial intelligence platforms, distributed storage systems, and cloud architectures demand increasingly complex interconnection fabrics. Effective cable management strategies will therefore become even more critical to maintaining operational clarity within compact modular facilities. Infrastructure teams that prioritize disciplined routing frameworks, pathway planning, and documentation will manage these changes with greater stability. Physical connectivity may appear mundane compared with advanced computing technologies, yet its influence reaches every layer of digital infrastructure. Modular data center reliability ultimately rests on the quiet discipline of the cables that bind the system together.