Modern hyperscale facilities emerged from architectural principles developed during an earlier phase of enterprise computing growth. Designers optimized these environments for predictable workloads that rarely pushed rack densities beyond modest thresholds compared with present-day AI deployments. Engineers structured these facilities around raised floors, high-volume air circulation, and hot-aisle and cold-aisle containment patterns to distribute cooling evenly across rows of racks. Mechanical airflow systems relied on large plenum spaces beneath the data hall floor that directed conditioned air toward server intakes. Predictable thermal envelopes allowed operators to maintain stable environmental conditions through carefully balanced airflow velocities and temperature gradients. Infrastructure planning therefore focused on uniform cooling coverage rather than highly localized thermal management.

Enterprise IT environments shaped the thermal assumptions that guided early hyperscale design philosophy. Typical enterprise racks historically operated between five and ten kilowatts, creating heat loads that large air-handling systems could remove without complex cooling interventions. Air cooling architectures scaled effectively because heat generation remained evenly distributed across compute rows and server clusters. Operators depended on airflow containment structures to prevent hot exhaust recirculation into server intakes. Mechanical systems relied on computer room air conditioning units that maintained steady airflow pressure throughout the data hall. The design approach prioritized operational simplicity because cooling loads followed relatively stable enterprise computing patterns.

Hyperscale campuses expanded these enterprise cooling principles across enormous footprints as cloud computing demand increased. Large technology operators constructed data halls that extended across thousands of square meters with centralized cooling plants supplying chilled air at scale. Facility layouts encouraged uniform rack densities so that airflow distribution systems could maintain thermal equilibrium across entire halls. Cooling strategies focused on maximizing air circulation efficiency rather than isolating individual high-heat workloads. Engineers expected most servers to operate within narrow thermal envelopes that airflow systems could regulate. This architecture allowed hyperscale providers to achieve reliable cooling while maintaining predictable mechanical energy consumption.

Artificial intelligence infrastructure has introduced thermal characteristics that differ significantly from earlier cloud computing workloads. Accelerator-based servers concentrate substantially higher power draw within individual racks than traditional enterprise compute systems. Accelerator clusters built around GPUs generate concentrated heat output that often exceeds the thermal assumptions embedded within airflow-centric facilities. High-performance AI training nodes require tightly packed hardware configurations that increase rack density far beyond earlier enterprise benchmarks. Cooling infrastructure designed around airflow distribution therefore encounters new operational stresses when confronted with concentrated thermal loads. Rack-level power densities have risen rapidly as organizations deploy advanced processors optimized for large model training and inference workloads. The shift toward AI-driven compute environments now forces hyperscale operators to reassess the architectural assumptions behind traditional airflow cooling systems.

AI computing clusters increasingly push rack densities beyond levels that airflow systems can efficiently support. Data center engineers traditionally regarded densities above twenty kilowatts per rack as challenging for conventional air cooling systems. Many modern AI racks operate between thirty and sixty kilowatts, while some high-performance computing and specialized AI training environments have reported rack designs approaching or exceeding one hundred kilowatts under tightly integrated accelerator configurations. Air’s thermal transfer capability limits how quickly heat can move away from high-power electronic components. Cooling infrastructure must therefore deliver enormous air volumes to maintain acceptable equipment inlet temperatures. High airflow requirements can introduce turbulence that disrupts carefully balanced hot-aisle containment designs.
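The link between rack power and required air volume can be sketched with the sensible heat relation, airflow = power / (density × specific heat × temperature rise). The snippet below is a minimal illustration, not a sizing tool: the air properties are approximate room-condition values, the 12 K inlet-to-exhaust temperature rise is an assumed figure, and the function name is hypothetical.

```python
# Approximate properties of air near room conditions (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(rack_power_w: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry rack_power_w away with a
    temperature rise of delta_t_k between server inlet and exhaust."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return rack_power_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_k)


# Rack densities mentioned in the text, from enterprise to dense AI racks.
for rack_kw in (10, 20, 60, 100):
    flow = required_airflow_m3s(rack_kw * 1000, delta_t_k=12.0)
    print(f"{rack_kw:>3} kW rack: {flow:5.2f} m^3/s ({flow * M3S_TO_CFM:6.0f} CFM)")
```

Because the relation is linear in power, a rack drawing ten times the power needs ten times the air volume at the same temperature rise, which is why airflow demand grows so quickly at AI densities.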

Air cooling depends on the physical properties of air as a heat transfer medium, which imposes practical constraints on thermal management efficiency. Air has a much lower volumetric heat capacity than liquids, so it requires significantly larger flow rates to remove the same amount of thermal energy. Engineers must therefore increase fan speeds or enlarge airflow pathways to compensate for higher rack densities. Mechanical systems consume more electricity as fans run at higher speeds to maintain airflow velocity. Energy consumption associated with airflow movement can represent a substantial portion of total facility cooling demand. Elevated airflow speeds may also create uneven temperature distribution across densely packed equipment rows.
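The rise in fan electricity can be illustrated with the fan affinity laws, a standard idealization in which flow scales with fan speed and shaft power scales with the cube of speed. The sketch below assumes a fixed duct system and ignores motor and drive losses, so real fans will deviate from it. The function name and the 5 kW baseline are hypothetical.

```python
def fan_power_kw(baseline_power_kw: float, flow_ratio: float) -> float:
    """Idealized affinity-law estimate: fan power grows with the cube of
    the flow (speed) ratio relative to the baseline operating point."""
    if flow_ratio <= 0:
        raise ValueError("flow_ratio must be positive")
    return baseline_power_kw * flow_ratio ** 3


baseline_kw = 5.0  # assumed fan power at the original design airflow
for ratio in (1.0, 1.25, 1.5, 2.0):
    print(f"{ratio:.2f}x airflow -> {fan_power_kw(baseline_kw, ratio):5.1f} kW fan power")
```

Under this idealization, doubling airflow multiplies fan power by eight, which suggests why moving more air becomes a poor strategy well before the fans reach their mechanical limits.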

Hot exhaust air emerging from dense AI racks creates localized thermal zones that challenge airflow containment strategies. Conventional aisle containment structures assume that airflow will move predictably through defined pathways without excessive turbulence. High-density clusters release heat at rates that can overwhelm the designed airflow balance within a data hall. Thermal stratification may develop when hot air accumulates faster than cooling systems can remove it. Equipment placed near these zones may experience elevated inlet temperatures that reduce hardware reliability margins. Operators must continuously monitor airflow behavior to prevent localized overheating conditions within AI deployment areas.

Mechanical fan systems also face scaling challenges when airflow demand rises alongside rack density. Servers equipped with high-performance accelerators often include powerful internal fans that compete with facility airflow patterns. High fan speeds generate pressure differentials that can alter the designed airflow movement within containment structures. Cooling engineers must account for the interaction between server-level airflow and facility-level ventilation systems. Increased airflow turbulence can reduce cooling efficiency even when total airflow volume appears sufficient. Facilities originally designed for moderate density workloads therefore struggle to maintain stable cooling conditions under extreme AI compute concentrations.

Some operators have begun introducing thermal zoning strategies to manage the uneven heat distribution created by high-density AI clusters within existing hyperscale data halls. Instead of maintaining uniform rack densities across entire data halls, facilities now allocate dedicated zones for high-density accelerator infrastructure. Thermal zoning can isolate AI compute clusters from conventional cloud servers in certain facilities, allowing operators to manage localized heat concentrations without affecting adjacent equipment environments. Engineers adjust airflow pathways, containment structures, and cooling distribution to accommodate these specialized zones. Data hall layouts evolve into segmented thermal environments that support different cooling requirements simultaneously. Facility planners treat AI infrastructure as a separate thermal category within existing hyperscale buildings.

Thermal zoning often requires careful planning of rack placement, airflow containment, and mechanical cooling capacity. Engineers position high-density racks near cooling distribution points that can deliver greater airflow volumes. Raised floor tiles with higher perforation ratios may direct increased airflow toward accelerator clusters. Containment barriers help prevent hot exhaust from migrating into neighboring compute zones that operate at lower densities. Monitoring systems track temperature variations across zones to detect emerging thermal imbalances. Operators can maintain stable facility-wide conditions even when localized compute clusters generate significant heat loads.

Data hall segmentation also influences power distribution and mechanical infrastructure planning. AI clusters often require higher electrical capacity alongside enhanced cooling delivery systems. Engineers design dedicated electrical and cooling pathways to support these zones without overloading shared infrastructure. Thermal zoning can allow operators to expand AI capacity within specific sections of a data hall while preserving stable operating conditions in areas supporting conventional compute workloads. Facilities can accommodate evolving workload profiles without complete structural redesign. This approach provides operational flexibility as hyperscale campuses transition toward increasingly heterogeneous computing environments.
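One way to picture zone-level planning is a simple headroom check: a rack fits in a zone only if both the dedicated electrical capacity and the dedicated cooling capacity can absorb it. The sketch below is illustrative only. The class, field names, and capacity figures are hypothetical, and it assumes that essentially all electrical power drawn by a rack ends up as heat the zone must remove.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ThermalZone:
    """A data hall zone with its own electrical and cooling budgets."""
    name: str
    power_capacity_kw: float
    cooling_capacity_kw: float
    rack_loads_kw: List[float] = field(default_factory=list)

    @property
    def load_kw(self) -> float:
        return sum(self.rack_loads_kw)

    def headroom_kw(self) -> float:
        # The tighter of the two budgets limits further expansion.
        return min(self.power_capacity_kw, self.cooling_capacity_kw) - self.load_kw

    def try_add_rack(self, rack_kw: float) -> bool:
        """Add the rack only if neither budget would be exceeded."""
        if rack_kw <= self.headroom_kw():
            self.rack_loads_kw.append(rack_kw)
            return True
        return False


zone = ThermalZone("ai-zone-1", power_capacity_kw=600.0, cooling_capacity_kw=550.0,
                   rack_loads_kw=[40.0] * 12)
print(zone.headroom_kw())       # remaining capacity before adding racks
print(zone.try_add_rack(40.0))  # fits within both budgets
print(zone.try_add_rack(40.0))  # rejected: cooling budget would be exceeded
```

Keeping separate budgets per zone mirrors the idea in the text: AI capacity can grow inside its own envelope without drawing down the margins of zones that serve conventional workloads.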

Monitoring technology plays a central role in managing these newly segmented thermal environments. Advanced sensors track temperature gradients, airflow velocity, and equipment inlet conditions across multiple zones. Data analytics platforms interpret sensor data to identify emerging thermal risks before they affect hardware performance. Cooling systems can respond dynamically to changing compute loads within specific zones. Facilities maintain thermal stability even when AI training workloads push localized infrastructure toward higher power consumption. Thermal zoning therefore represents an early operational adaptation to AI-driven infrastructure demands.
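A minimal sketch of zone-level monitoring might group inlet temperature readings by zone and flag two conditions: a sensor above an alert limit, and a wide spread between the coolest and hottest inlets, which can indicate uneven airflow. The thresholds, zone names, and readings below are assumed for illustration and are not drawn from any particular facility or monitoring product.

```python
from statistics import mean

INLET_LIMIT_C = 27.0   # assumed alert threshold for equipment inlet temperature
SPREAD_LIMIT_C = 5.0   # assumed maximum acceptable spread within one zone


def assess_zones(readings, inlet_limit=INLET_LIMIT_C, spread_limit=SPREAD_LIMIT_C):
    """Return a per-zone summary with a status of 'ok', 'imbalanced',
    or 'over_limit' based on inlet temperature readings in Celsius."""
    report = {}
    for zone, temps in readings.items():
        hottest = max(temps)
        spread = hottest - min(temps)
        if hottest > inlet_limit:
            status = "over_limit"
        elif spread > spread_limit:
            status = "imbalanced"
        else:
            status = "ok"
        report[zone] = {"mean_c": round(mean(temps), 1), "max_c": hottest, "status": status}
    return report


sample = {
    "cloud-row-a": [21.5, 22.0, 22.4, 21.8],
    "ai-zone-1": [24.0, 26.5, 28.2, 25.1],
    "ai-zone-2": [20.0, 23.0, 26.0, 22.0],
}
for zone, summary in assess_zones(sample).items():
    print(zone, summary)
```

A production system would also look at trends over time and airflow velocity, but even this simple per-zone view separates a zone that is already too hot from one that is merely drifting out of balance.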

Legacy hyperscale cooling infrastructure was engineered to manage large but evenly distributed heat loads generated by cloud computing platforms. Computer room air conditioning systems circulate chilled air through underfloor plenums and direct it toward server racks to absorb thermal output from IT equipment. Chillers, cooling towers, and heat rejection systems then remove this accumulated heat from the facility environment. AI deployments alter the thermal profile within these environments by concentrating power consumption within smaller physical footprints, which increases localized heat output in sections of the data hall. Cooling systems designed for uniform airflow distribution must now absorb sudden spikes of localized heat generated by accelerator clusters. Mechanical infrastructure therefore experiences operating conditions that approach the limits of its original engineering design assumptions.

Cooling plants supporting hyperscale facilities often rely on centralized chilled water systems that distribute cooling capacity across multiple halls. These plants were sized according to expected compute density and airflow demand during initial facility planning stages. High-density AI deployments introduce thermal loads that can exceed those projections within specific areas of the campus. Chiller capacity, pump flow rates, and heat exchanger efficiency must therefore accommodate increasingly variable thermal demand patterns. Mechanical systems may struggle to maintain optimal supply air temperatures when localized rack densities rise dramatically. Infrastructure upgrades become necessary as operators attempt to support growing AI clusters within facilities originally built for lower thermal intensity workloads.

Cooling distribution networks also experience operational strain as airflow demand increases inside high-density compute environments. Air handlers must deliver significantly larger volumes of conditioned air to racks that dissipate large quantities of heat energy. Fan motors, duct systems, and air delivery pathways operate under greater pressure as they attempt to sustain sufficient airflow rates. Mechanical efficiency can decline when cooling systems operate near their maximum performance thresholds. Continuous operation of fans and pumps at elevated capacity places greater demand on cooling equipment, so data center engineers must monitor and maintain these systems carefully to prevent mechanical fatigue or cooling interruptions.

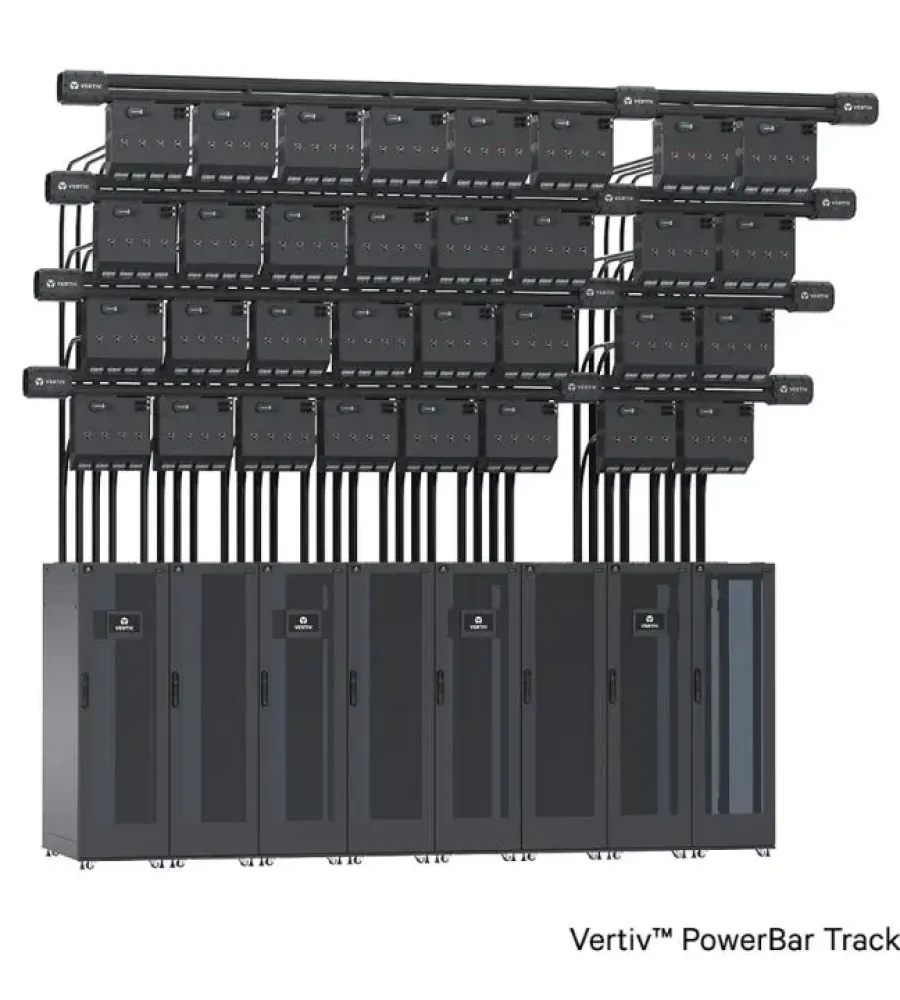

The interaction between cooling infrastructure and electrical power delivery systems further complicates mechanical design. High-density AI clusters consume substantial electrical power while simultaneously generating intense heat loads that cooling systems must remove. Electrical distribution networks must supply this power while maintaining stable voltage conditions for sensitive computing hardware. Cooling plants must also draw additional electrical power to operate fans, pumps, and chillers at higher capacity levels. Facility operators therefore face a dual infrastructure challenge involving both thermal management and energy distribution. Mechanical infrastructure planning increasingly integrates cooling performance with electrical system resilience.

Mechanical engineers now evaluate alternative cooling architectures to relieve pressure on traditional airflow infrastructure. Designers study how heat flows through densely packed accelerator hardware and identify points where airflow cooling becomes inefficient. Liquid-based heat transfer technologies offer higher thermal capacity compared with air and therefore attract growing interest from hyperscale operators. Hybrid cooling solutions attempt to preserve portions of existing airflow infrastructure while introducing targeted liquid cooling for the most demanding hardware zones. Infrastructure teams conduct detailed thermal modeling to determine where airflow systems can continue to operate effectively. These analyses guide long-term facility upgrades that support the next generation of high-performance computing deployments.

Many hyperscale operators have begun deploying hybrid cooling architectures to address the thermal demands of AI infrastructure. These designs integrate liquid cooling technologies alongside traditional airflow systems within the same facility environment. Rear-door heat exchangers represent one early adaptation that captures heat directly at the rack exhaust before it enters the broader data hall airflow. Chilled liquid circulating through these exchangers absorbs thermal energy more efficiently than air alone. This approach allows operators to maintain familiar airflow infrastructure while adding localized heat removal capability. Hybrid architectures therefore create a transitional pathway between legacy cooling systems and fully liquid-cooled environments.

Direct-to-chip liquid cooling has also gained attention as accelerator densities continue to rise across AI infrastructure deployments. Cooling plates mounted directly onto processors circulate coolant through microchannels that absorb heat from critical electronic components. This design removes thermal energy at the source before it spreads into the surrounding air environment. Data centers using direct-to-chip systems can support rack densities that exceed the practical limits of airflow cooling. Operators often retain airflow systems to cool auxiliary components such as memory modules, networking equipment, and power supplies. Hybrid architectures therefore combine liquid cooling for high-power processors with airflow systems for supporting hardware.
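The division of labor in a hybrid rack can be sketched by splitting the heat load between the liquid loop and the remaining airflow. The snippet below assumes a water-like coolant (real coolants such as glycol mixtures have different properties), an assumed 75% of rack heat captured by the cold plates, and assumed temperature rises for each medium. The function name and all figures are hypothetical.

```python
# Approximate fluid properties near room temperature (assumed values).
WATER_DENSITY_KG_M3 = 997.0
WATER_CP_J_KG_K = 4186.0
AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_KG_K = 1005.0


def hybrid_split(rack_power_w, liquid_fraction, coolant_dt_k=10.0, air_dt_k=12.0):
    """Split rack heat between a liquid loop and residual airflow, and
    estimate the flow each medium needs for the given temperature rise."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_w = rack_power_w * liquid_fraction
    air_w = rack_power_w - liquid_w
    coolant_m3s = liquid_w / (WATER_DENSITY_KG_M3 * WATER_CP_J_KG_K * coolant_dt_k)
    air_m3s = air_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * air_dt_k)
    return {
        "liquid_w": liquid_w,
        "air_w": air_w,
        "coolant_l_min": coolant_m3s * 60_000,  # m^3/s to litres per minute
        "air_m3s": air_m3s,
    }


result = hybrid_split(rack_power_w=80_000, liquid_fraction=0.75)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

In this sketch the liquid loop carries three quarters of the heat with a flow measured in litres per minute, while the air side is left with a load closer to what legacy airflow systems were designed to handle.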

Some hyperscale campuses have experimented with immersion cooling systems for specialized AI workloads. Immersion cooling submerges servers within dielectric fluid that absorbs heat generated by electronic components during operation. Heat exchangers then transfer thermal energy from the liquid to external cooling systems. Immersion technology offers extremely efficient heat transfer capabilities because liquids possess far greater thermal conductivity than air. However, large-scale deployment requires changes to hardware design, maintenance procedures, and operational workflows. Facilities therefore evaluate immersion cooling selectively for workloads that generate exceptionally high thermal densities.

Infrastructure integration presents a significant challenge when deploying hybrid cooling architectures within existing hyperscale facilities. Operators must retrofit liquid distribution systems into buildings originally designed for airflow-based cooling networks. Engineers install coolant supply lines, pumps, and heat exchangers that integrate with existing mechanical infrastructure. Careful design ensures that liquid cooling equipment does not interfere with airflow pathways serving conventional compute racks. Monitoring systems track both airflow and liquid cooling performance to maintain stable environmental conditions across the facility. Hybrid architectures allow hyperscale operators to extend the lifespan of existing facilities while adapting to evolving AI workload requirements.

Furthermore, hybrid cooling environments require new operational expertise from infrastructure teams responsible for managing hyperscale campuses. Engineers must understand both airflow dynamics and liquid cooling thermodynamics when maintaining these integrated systems. Training programs increasingly cover topics such as coolant chemistry, leak detection systems, and thermal interface design. Facilities also introduce additional safety measures to ensure that liquid cooling systems operate reliably alongside electrical infrastructure. Monitoring platforms collect data from both cooling architectures to optimize system performance under varying compute loads. Hybrid infrastructure therefore reflects a transitional phase in the evolution of data center cooling technology.

Artificial intelligence infrastructure has introduced thermal challenges that extend beyond the assumptions embedded within traditional hyperscale facility design. Airflow cooling systems enabled the expansion of cloud computing by supporting large volumes of relatively moderate-density compute hardware. AI workloads concentrate significant computational power within smaller physical footprints, which increases localized heat output inside data halls. Mechanical cooling infrastructure originally optimized for uniform airflow distribution must now adapt to uneven thermal patterns created by accelerator clusters. Engineers have responded by introducing new cooling strategies that supplement or partially replace traditional airflow systems. The architectural evolution of hyperscale facilities reflects the growing influence of AI workloads on infrastructure design.

Infrastructure planning now emphasizes flexibility as operators prepare for continued growth in AI computing demand. Facility designers recognize that rack density and thermal output will likely continue increasing as semiconductor technology advances. Hyperscale campuses therefore incorporate modular cooling systems that allow operators to expand thermal capacity gradually. Hybrid cooling architectures demonstrate how legacy airflow infrastructure can coexist with emerging liquid cooling technologies. Mechanical and electrical infrastructure planning increasingly considers the combined effects of power consumption and heat removal requirements. Many hyperscale design strategies now treat cooling capacity as a central factor in long-term infrastructure development.

Future hyperscale facilities may adopt cooling architectures that integrate liquid-based thermal management from the earliest design stages. Purpose-built AI data centers already explore layouts optimized for high-density compute clusters rather than uniform airflow distribution. Engineers analyze how coolant distribution networks, heat exchangers, and thermal monitoring systems can support next-generation accelerator hardware. Cooling infrastructure evolves alongside processor technology as computational performance continues to scale. Facility operators also examine sustainability implications associated with higher cooling energy consumption. Thermal design therefore becomes a defining component of hyperscale infrastructure planning for AI-driven computing environments.

Cooling technology development continues to accelerate as infrastructure providers respond to the demands of advanced computing systems. Research initiatives explore improved coolant formulations, advanced heat exchangers, and new thermal interface materials that enhance heat transfer efficiency. Data center designers collaborate with hardware manufacturers to align server architecture with emerging cooling technologies. Infrastructure innovation aims to maintain reliability while supporting increasingly powerful computational workloads. Hyperscale operators evaluate how new cooling methods integrate with existing campus infrastructure and operational processes. The evolving thermal landscape of data centers reflects the broader transformation of digital infrastructure driven by artificial intelligence.

The transition toward new cooling paradigms does not represent an abrupt replacement of airflow infrastructure across hyperscale campuses. Operators will likely continue operating airflow-based systems for many conventional computing workloads that remain thermally manageable. Hybrid approaches allow facilities to adapt gradually while preserving operational continuity across large computing environments. Infrastructure teams balance innovation with reliability as they integrate new cooling technologies into existing operational frameworks. Hyperscale campuses will therefore evolve through incremental architectural adaptation rather than immediate structural transformation. The increasing thermal intensity of AI computing nonetheless signals a long-term redesign phase for large-scale digital infrastructure.