We are building artificial intelligence systems that demand exponential growth in processing power, yet we rely on silicon-based hardware that is approaching its thermal and energetic limits. Global data centers consume a growing share of the world’s electricity, with some projections approaching 1,000 terawatt-hours annually within this decade. This growth is driving environmental and economic strain. In response, a fundamental shift is underway in how we move and process information. The transition from electrons to photons, known as photonic computing, is emerging as a path to reduce costs, lower the cloud’s carbon footprint, and transform global data infrastructure.

The Silicon Ceiling and the Energy Crisis of AI

For decades, Moore’s Law guided semiconductor progress. Transistor density doubled roughly every two years, and under the related principle of Dennard scaling, computing power rose while energy use stayed under control. That era has ended. Transistors now approach atomic scale, and at this size they leak current and produce significant heat.

Modern data centers face a dual energy burden. They require large amounts of electricity for computation. They also need substantial power for cooling systems to prevent overheating. This combined demand raises operational costs and increases emissions.

The rise of Large Language Models has intensified this challenge. A single training run for a model such as GPT-4 is estimated to consume more electricity than hundreds of U.S. households use in a year. Inference, which powers daily user interactions, adds to this: each query costs little, but the energy accumulates over a model’s deployed lifetime. Research published in Communications Physics in 2025 suggests that AI inference can generate up to 25 times more carbon emissions than training within one year of deployment. If the industry continues to depend solely on CMOS electronics, data center emissions may rival those of global aviation.

Enter the Photon: Computing at the Speed of Light

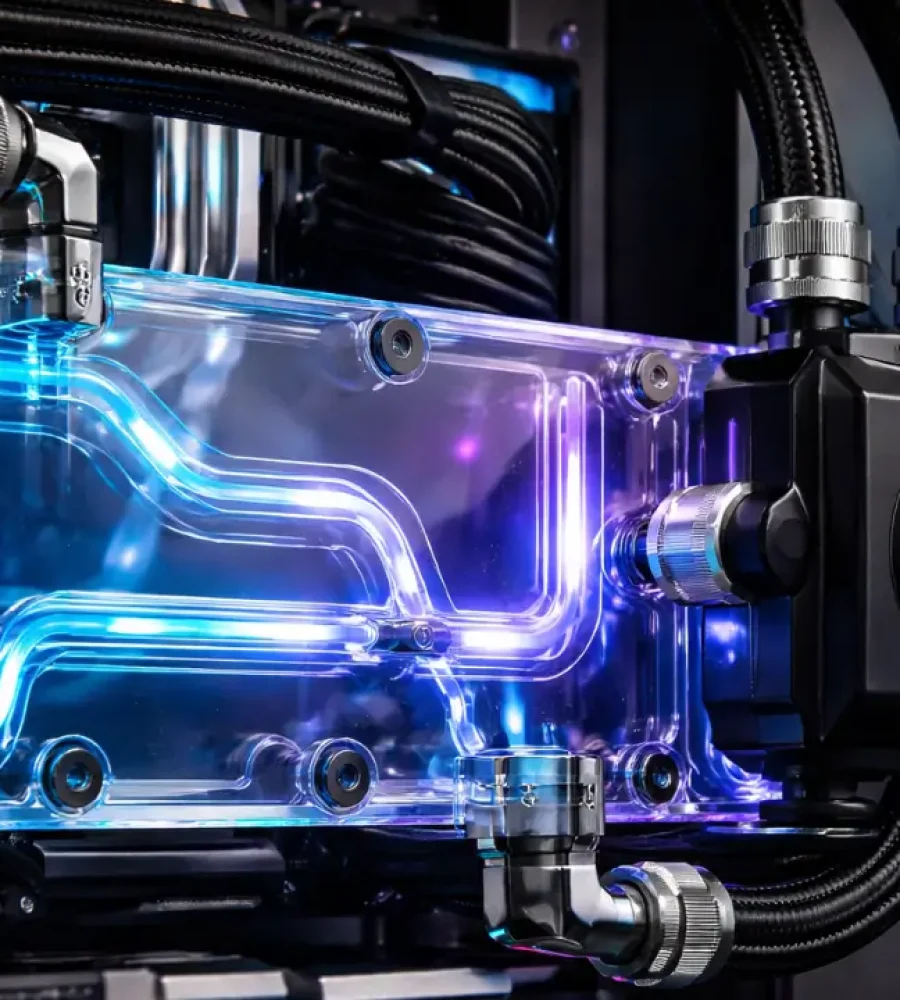

Photonic computing changes this trajectory. Instead of pushing electrons through copper wires, photonic systems use light to transmit and process information. Light propagates through waveguides and optical fiber without the resistive losses that heat copper interconnects. Photons have no rest mass and no electric charge, so they avoid many of the thermal limits that constrain electronics.

Photonics excels at matrix-vector multiplication, which forms the foundation of artificial intelligence and deep learning. Photonic processors encode data into the intensity or phase of light. When that light passes through optical interference structures, the interference itself carries out the multiplications and additions as the light propagates. This is not a small improvement. It is a structural change in how the computation is done. In reported demonstrations, photonic accelerators have reached speeds three to fourteen times higher than top-tier GPUs while using far less energy.
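To make the idea of "computing by interference" concrete, here is a minimal sketch of a single Mach-Zehnder interferometer, a common building block of optical interference structures, modeled as a 2x2 complex matrix. It is an idealized, lossless textbook model, not a description of any specific accelerator; the function names (`mzi`, `output_powers`) and the choice of a 50:50 beam splitter convention are assumptions made for illustration.

```python
import cmath
import math

# Idealized 50:50 beam splitter (lossless; one common sign convention).
_S = 1 / math.sqrt(2)
BEAM_SPLITTER = [[_S, 1j * _S], [1j * _S, _S]]


def phase_shifter(angle):
    """Phase delay applied to the upper waveguide only."""
    return [[cmath.exp(1j * angle), 0], [0, 1]]


def matmul(a, b):
    """Product of two 2x2 complex matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def apply(m, v):
    """Apply a 2x2 matrix to a 2-element vector of field amplitudes."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


def mzi(theta, phi=0.0):
    """Transfer matrix: input phase phi, splitter, internal phase theta, splitter."""
    m = phase_shifter(phi)
    m = matmul(BEAM_SPLITTER, m)
    m = matmul(phase_shifter(theta), m)
    return matmul(BEAM_SPLITTER, m)


def output_powers(theta, field_in=(1.0, 0.0)):
    """Optical power at the two output ports for a given internal phase."""
    return [abs(x) ** 2 for x in apply(mzi(theta), list(field_in))]


if __name__ == "__main__":
    for theta in (0.0, math.pi / 2, math.pi):
        print(theta, output_powers(theta))
```

With light entering only the upper port, the power at the two outputs follows sin²(θ/2) and cos²(θ/2), so the phase setting acts as a tunable weight and the device multiplies the input amplitudes by a 2x2 matrix simply by letting the light pass through. Larger matrices can be composed from meshes of such elements.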

Reducing the Carbon Footprint Beyond Operations

Sustainability discussions often focus on operational energy, meaning the electricity used during system operation. However, embodied carbon also plays a major role. Embodied carbon includes emissions from mining, refining, and manufacturing hardware.

Photonic infrastructure can reduce this hidden cost. Advanced electronic chips, such as GPUs built on 3nm and 5nm process nodes, require complex fabrication processes with many precision layers. Photonic integrated circuits often require fewer metal layers and simpler manufacturing steps. Their components are also larger and more tolerant of microscopic defects, which improves production yield.

Research in Communications Physics shows that the energy required to manufacture a photonic chip per unit area can be about 4.1 times lower than that of a standard 28nm electronic chip. By shifting toward optical systems, technology companies can reduce hardware-related emissions before deployment begins.
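Because the 4.1x figure is stated per unit area, and photonic components are physically larger, the net saving depends on how much more chip area a photonic design needs. The short sketch below works through that arithmetic. Only the 4.1 factor comes from the text; the area ratios passed in are hypothetical inputs, and the function name is illustrative.

```python
# Per-unit-area manufacturing energy advantage quoted in the text
# (photonic chip vs. a standard 28nm electronic chip).
PHOTONIC_ADVANTAGE_PER_AREA = 4.1


def embodied_energy_ratio(area_ratio, advantage=PHOTONIC_ADVANTAGE_PER_AREA):
    """Photonic / electronic manufacturing energy for a whole chip.

    area_ratio is photonic chip area divided by electronic chip area
    (a hypothetical input; the text gives no value for it).
    A result below 1.0 means the photonic chip costs less energy to make.
    """
    if area_ratio <= 0:
        raise ValueError("area_ratio must be positive")
    return area_ratio / advantage


if __name__ == "__main__":
    for area_ratio in (1.0, 2.0, 4.1, 6.0):
        print(area_ratio, round(embodied_energy_ratio(area_ratio), 3))
```

Under this simple model the photonic chip keeps its manufacturing-energy advantage as long as it needs less than 4.1 times the area of the electronic chip it replaces. Note also that the quoted baseline is a 28nm process, not the 3nm and 5nm nodes used for leading GPUs, so the comparison with those parts may differ.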

Reshaping Global Data Infrastructure

Photonic computing will change how engineers design data centers. Today, facilities are often sited in cold climates or near large water sources to manage heat from electronic servers. Photonic systems generate less heat, which reduces dependence on such geographical constraints.

Photonics also addresses the interconnect bottleneck. In traditional data centers, moving data between chips consumes significant power and introduces latency. Optical interconnects enable disaggregated architectures. In this model, memory, storage, and processing units can operate in separate physical areas while remaining connected through high-speed light channels. The entire facility functions as a unified system.

This architectural shift lowers total cost of ownership. Organizations can reduce cooling requirements and decrease the volume of hardware needed for equivalent performance. As a result, both capital expenditure and operational expenditure decline.

The Economic Imperative

For enterprises, photonics represents more than a sustainability strategy. It offers a competitive advantage. AI training and maintenance currently account for major infrastructure costs. As demand for intelligence-as-a-service grows, companies that deliver higher performance with lower energy use will lead the market.

Photonic computing enables growth without proportional increases in electricity consumption. Historically, greater data workloads required more power. Optical systems allow organizations to scale digital services without linear energy growth. This makes green AI a practical business model rather than a symbolic initiative.

Overcoming Transition Challenges

Despite its promise, photonic adoption faces technical barriers. Most data today is stored and moved in electronic form. Systems must convert this data into light for processing and then convert the results back into electrical signals for storage. Each conversion costs energy, and that overhead can erode the savings of the optical computation itself.
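A rough way to reason about this overhead is to amortize the conversion cost over the work done in the optical domain. The sketch below assumes a simplified model that is not taken from the source: an n-by-n matrix-vector multiply needs n electrical-to-optical conversions on the way in and n optical-to-electrical conversions on the way out, while performing n² multiply-accumulate operations. All energy values are hypothetical placeholders in picojoules.

```python
def energy_per_mac(n, e_in_pj, e_out_pj, e_optical_pj):
    """Average energy per multiply-accumulate for an n x n optical matvec.

    Simplified model (an assumption, not a measured result):
      - n input values are converted from electrical to optical form,
      - n output values are converted back to electrical form,
      - n * n multiply-accumulates happen in the optical domain.
    All energies are per-operation values in picojoules.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    conversion_energy = n * (e_in_pj + e_out_pj)
    mac_count = n * n
    return conversion_energy / mac_count + e_optical_pj


if __name__ == "__main__":
    # Hypothetical values: 1 pJ per conversion, 0.01 pJ per optical MAC.
    for n in (8, 64, 512):
        print(n, energy_per_mac(n, 1.0, 1.0, 0.01))
```

In this model the conversion cost per operation falls as 1/n, so larger optical matrix operations dilute the overhead, while small ones are dominated by it. This is one reason the placement and number of conversion points matters so much in practice.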

The industry addresses this challenge with hybrid electro-photonic architectures. Engineers integrate optical and electronic components on the same package. Co-packaged optics reduces the distance data travels in electronic form. This design improves efficiency and maximizes the benefits of light-based computation.

The Dawn of the Optical Era

The electronic age powered the twentieth century, but its physical limits are now visible. Continued progress requires a new technological foundation. Photonic computing provides that foundation.

By adopting photonics, we can build faster systems while lowering energy consumption. We can design data infrastructure that is cooler, lighter, and more efficient. This transition will reduce the cloud’s carbon footprint and support sustainable artificial intelligence.

The move toward photonics enables a sustainable digital civilization. As data volumes grow and intelligence becomes central to the global economy, the future of the cloud will rely on light.