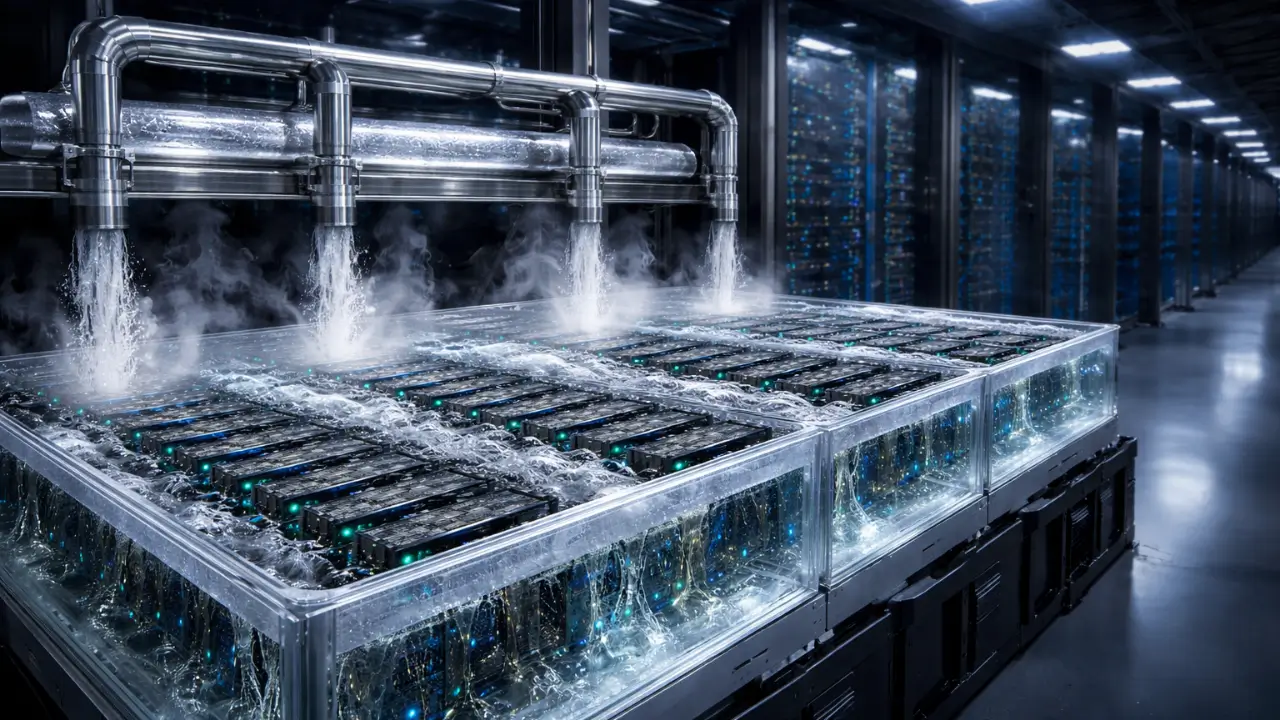

The modern data hall no longer whispers beneath raised floors and humming fans; instead, it pulses with concentrated energy as artificial intelligence workloads drive silicon beyond conventional thermal boundaries. Engineers once treated heat as a byproduct to manage, but today they confront it as a structural variable that shapes compute architecture itself. As accelerator clusters intensify and rack densities climb, thermal strategy has shifted from incremental airflow optimization toward fundamentally different thermodynamic models. Immersion cooling entered this landscape as a practical alternative, yet its evolution did not stop at submerging servers in dielectric liquids. Over time, designers recognized that phase behavior, not just fluid presence, holds the key to unlocking new efficiency regimes. Consequently, two-phase immersion systems now stand at the center of a broader architectural rethink that links thermodynamics directly with intelligence scaling.

From Static Fluids to Dynamic Phase Transitions

Single-phase immersion systems introduced a bold shift by replacing air with liquid as the primary heat transfer medium, allowing components to dissipate energy through direct contact with thermally stable fluids. In that configuration, the fluid absorbs heat while remaining liquid, and external heat exchangers remove the accumulated thermal energy. Although this approach improved temperature uniformity and eliminated airflow bottlenecks, it still relied on sensible heat: the fluid can absorb energy only by getting warmer. Engineers soon recognized that this imposes limits when workloads sustain high thermal output over long cycles, because carrying more heat demands either higher flow rates or a larger temperature rise across the bath. Therefore, attention turned toward systems where the fluid does not merely warm but deliberately changes state under controlled conditions. This conceptual leap reframed immersion cooling from passive containment toward dynamic thermodynamic choreography.

Two-phase systems rely on phase transition as the central mechanism of heat transfer, and that shift introduces a fundamentally different philosophy. When a dielectric fluid reaches its boiling point at component surfaces, it absorbs a large amount of latent heat during vaporization while its temperature stays close to the saturation point. Such behavior allows hardware to operate within tight thermal envelopes even under persistent computational load. Instead of circulating progressively warmer liquid, the system converts localized heat into vapor, which carries that energy upward in a controlled cycle. Designers treat this vapor formation not as turbulence but as a structured pathway of energy migration. As a result, the fluid evolves from a passive sink into an active participant in the compute environment.
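To put rough numbers on the difference, the sketch below compares sensible heating (single-phase) with vaporization (two-phase) for the same hypothetical fluid. The property values `H_FG_KJ_PER_KG` and `CP_KJ_PER_KG_K` are illustrative assumptions, not data for any commercial fluid, and the helper names are hypothetical.

```python
# Illustrative property values: assumptions for this sketch, not vendor data.
H_FG_KJ_PER_KG = 100.0   # latent heat of vaporization (assumed)
CP_KJ_PER_KG_K = 1.1     # specific heat of the liquid (assumed)


def single_phase_flow_kg_s(heat_kw: float, cp: float, delta_t_k: float) -> float:
    """Liquid mass flow needed to carry heat_kw when the fluid may warm by delta_t_k.

    Sensible heat: Q = m_dot * c_p * dT, so m_dot = Q / (c_p * dT).
    """
    return heat_kw / (cp * delta_t_k)


def two_phase_boil_off_kg_s(heat_kw: float, h_fg: float) -> float:
    """Fluid mass that must vaporize per second to absorb heat_kw at saturation.

    Latent heat: Q = m_dot * h_fg, with no temperature rise in the ideal case.
    """
    return heat_kw / h_fg


if __name__ == "__main__":
    load_kw = 50.0  # hypothetical tank heat load
    for dt in (5.0, 10.0, 20.0):
        flow = single_phase_flow_kg_s(load_kw, CP_KJ_PER_KG_K, dt)
        print(f"single-phase, dT={dt:.0f} K: {flow:.2f} kg/s")
    boil = two_phase_boil_off_kg_s(load_kw, H_FG_KJ_PER_KG)
    print(f"two-phase boil-off: {boil:.2f} kg/s")
```

With these assumed values, roughly 4.5 kg/s of liquid must circulate to hold a 10 K rise, while only 0.5 kg/s needs to boil. The boiled-off vapor still has to be condensed elsewhere; the practical gain is that the component-side temperature stays pinned near saturation instead of climbing with the load.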

This thermodynamic reorientation redefines how engineers think about the internal environment of a rack. Fluid no longer acts simply as an electrical insulator or a thermal mass; rather, it becomes a responsive medium that orchestrates continuous transformation between liquid and vapor. Consequently, designers must treat nucleation sites, condensation surfaces, and pressure dynamics as integral elements of system planning. Each boiling event becomes part of a predictable cycle instead of an anomaly to suppress. By embracing dynamic phase transitions, architects move away from static thermal management toward an adaptive, self-regulating ecosystem. Through this evolution, immersion cooling aligns more closely with the fluid physics that govern industrial power systems than with legacy air-cooled server rooms.
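Pressure matters because it sets the temperature at which the fluid boils. A standard textbook approximation, the integrated Clausius–Clapeyron relation (assuming constant latent heat and ideal-gas vapor), links saturation pressure and temperature; it is offered here as an idealized guide rather than a model of any particular tank:

$$
\ln\frac{P_2}{P_1} = -\frac{h_{fg} M}{R}\left(\frac{1}{T_2} - \frac{1}{T_1}\right)
\quad\Longrightarrow\quad
T_2 = \left(\frac{1}{T_1} - \frac{R}{h_{fg} M}\ln\frac{P_2}{P_1}\right)^{-1}
$$

Here $h_{fg}$ is the latent heat per unit mass, $M$ the molar mass, $R$ the universal gas constant, and temperatures are absolute. Because $P_2 > P_1$ gives $T_2 > T_1$, any uncondensed vapor that raises enclosure pressure also raises the boiling point, and with it the temperature at component surfaces.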

Vaporization as an Engineering Asset

In traditional electronics cooling, boiling signaled failure or instability, and engineers built safeguards to prevent it at all costs. Two-phase immersion systems invert that assumption by making vaporization an intentional and controlled process. When fluid contacts surfaces hotter than its boiling point, small vapor bubbles nucleate and detach, carrying latent heat away from components efficiently. Designers select fluids whose boiling points sit comfortably below component temperature limits, so that vaporization begins within safe operating margins. Through careful calibration, the boiling process stabilizes component temperatures rather than destabilizing them. Consequently, vapor becomes a predictable engineering asset rather than a threat to reliability.

Latent heat absorption provides the core advantage that distinguishes two-phase systems from their single-phase predecessors. During vaporization, fluids absorb substantial energy without large temperature swings, which maintains thermal uniformity across densely packed hardware. Such stability proves essential for AI accelerators that operate at sustained utilization levels for extended training cycles. Because vapor formation occurs directly at heat-generating surfaces, the system minimizes thermal resistance pathways. Engineers design condenser structures above the bath to capture vapor and return it as liquid in a continuous loop. Therefore, boiling becomes not a byproduct but a performance lever embedded in the infrastructure blueprint.

Controlled vapor formation requires precise mechanical and chemical orchestration within sealed enclosures. System architects incorporate condensation coils or cooled plates that turn vapor back into liquid before it returns to the bath. Fluid selection accounts for boiling point, dielectric strength, environmental impact, and material compatibility across long operational lifespans. Rack structures accommodate vapor flow patterns to prevent localized pressure fluctuations and to ensure that vapor reaches the condensing surfaces evenly. Designers treat every phase transition as part of a repeating thermal cycle that sustains equilibrium. In doing so, they construct infrastructure that treats thermodynamic transformation as a strategic capability rather than a secondary feature.
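The selection criteria above can be sketched as a simple screening filter. Everything here is hypothetical: the `CandidateFluid` fields, the thresholds, and the fluid names are placeholders chosen for illustration, and a real qualification process would rest on measured data and standardized tests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateFluid:
    """Hypothetical record of the properties this sketch screens on."""
    name: str
    boiling_point_c: float          # at the enclosure's nominal operating pressure
    dielectric_strength_kv: float   # breakdown voltage from a consistent test method
    gwp_100yr: float                # global warming potential, 100-year horizon
    materials_compatible: bool      # result of soak tests against seals, polymers, coatings


def screen_fluids(candidates, *, condenser_coolant_c, component_limit_c,
                  condense_margin_k, component_margin_k,
                  min_dielectric_kv, max_gwp):
    """Return names of candidates that pass every (hypothetical) criterion."""
    accepted = []
    for fluid in candidates:
        # Vapor must condense on surfaces fed with condenser_coolant_c,
        # so the boiling point has to sit above that temperature.
        condenses = fluid.boiling_point_c >= condenser_coolant_c + condense_margin_k
        # Boiling should start well before components approach their limit.
        protects = fluid.boiling_point_c <= component_limit_c - component_margin_k
        if (condenses and protects
                and fluid.dielectric_strength_kv >= min_dielectric_kv
                and fluid.gwp_100yr <= max_gwp
                and fluid.materials_compatible):
            accepted.append(fluid.name)
    return accepted


# Placeholder candidates: invented values, not real products.
EXAMPLE_FLUIDS = [
    CandidateFluid("fluid-A", 50.0, 40.0, 1.0, True),
    CandidateFluid("fluid-B", 34.0, 38.0, 1.0, True),     # boils too close to coolant temp
    CandidateFluid("fluid-C", 61.0, 45.0, 9000.0, True),  # fails the GWP limit
    CandidateFluid("fluid-D", 56.0, 25.0, 1.0, True),     # fails the dielectric minimum
    CandidateFluid("fluid-E", 49.0, 42.0, 1.0, False),    # fails material compatibility
    CandidateFluid("fluid-F", 60.0, 35.0, 10.0, True),
]

if __name__ == "__main__":
    print(screen_fluids(EXAMPLE_FLUIDS, condenser_coolant_c=30.0,
                        component_limit_c=85.0, condense_margin_k=10.0,
                        component_margin_k=20.0, min_dielectric_kv=30.0,
                        max_gwp=100.0))
```

With the thresholds in the `__main__` block, only `fluid-A` and `fluid-F` pass. Expressing each criterion as a separate boolean keeps the reason for a rejection easy to trace.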

Designing Closed-Loop Thermal Ecosystems

Two-phase immersion systems operate within sealed environments that enable controlled vaporization and condensation cycles with minimal fluid loss. Unlike open cooling towers or extensive air handling networks, these systems confine the thermal process within a defined physical boundary. Vapor rises naturally from heated components, encounters a cooler surface, condenses, and returns to the bath under gravity. This closed-loop sequence repeats continuously, driven primarily by buoyancy and gravity, with mechanical pumping largely confined to the coolant loop that serves the condenser. Because the cycle occurs internally, the infrastructure reduces dependency on expansive air management architectures. As a result, the compute environment transforms into a self-contained thermal ecosystem.
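The closed loop can be sketched as a lumped mass balance: each time step, the heat load boils off liquid and the condenser returns as much vapor as its capacity allows. This is a simplified illustration under stated assumptions (one well-mixed tank, constant latent heat, no vapor leakage, no pressure or temperature dynamics); the parameter values and the `TankState`, `step`, and `simulate` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TankState:
    liquid_kg: float  # dielectric fluid in the bath
    vapor_kg: float   # uncondensed vapor in the headspace


def step(state: TankState, *, heat_load_kw: float, condenser_kw: float,
         h_fg_kj_per_kg: float, dt_s: float) -> TankState:
    """Advance the lumped closed-loop model by one time step."""
    # Heat absorbed at component surfaces boils off liquid (kJ / (kJ/kg) = kg).
    boiled = min(state.liquid_kg, heat_load_kw * dt_s / h_fg_kj_per_kg)
    vapor = state.vapor_kg + boiled
    # The condenser can return at most its capacity's worth of vapor per step.
    condensed = min(vapor, condenser_kw * dt_s / h_fg_kj_per_kg)
    # Condensate drains back to the bath; in this idealized loop nothing escapes.
    return TankState(liquid_kg=state.liquid_kg - boiled + condensed,
                     vapor_kg=vapor - condensed)


def simulate(state: TankState, steps: int, **params: float) -> list[TankState]:
    """Run `steps` iterations and return every state, including the initial one."""
    history = [state]
    for _ in range(steps):
        state = step(state, **params)
        history.append(state)
    return history


if __name__ == "__main__":
    start = TankState(liquid_kg=500.0, vapor_kg=0.0)
    for condenser in (60.0, 40.0):
        final = simulate(start, 10, heat_load_kw=50.0, condenser_kw=condenser,
                         h_fg_kj_per_kg=100.0, dt_s=1.0)[-1]
        print(f"condenser {condenser:.0f} kW -> vapor {final.vapor_kg:.2f} kg")
```

With a 60 kW condenser on a 50 kW load, all vapor is returned each step and total fluid mass stays constant. With an undersized 40 kW condenser, uncondensed vapor accumulates by about 0.1 kg per second; in a real sealed enclosure that would appear as rising pressure and, in turn, a higher boiling point.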

Significantly reducing reliance on large-scale air circulation changes facility design at both rack and building levels, although supplemental air and mechanical systems may still serve broader facility requirements. Data halls no longer require raised floors for airflow distribution or elaborate containment corridors to separate hot and cold streams. Instead, designers focus on structural support, fluid integrity, and heat rejection interfaces that connect immersion tanks to external chillers or other facility cooling loops. Mechanical systems shift from managing air pressure gradients to maintaining fluid purity and condensation efficiency. Consequently, facility architecture aligns more closely with industrial process engineering than with conventional server room planning. This reconfiguration reduces spatial inefficiencies while preserving thermal predictability.

Fluid containment, recovery, and reintegration define the operational discipline of closed-loop immersion environments. Engineers design seals, gaskets, and material interfaces to withstand prolonged chemical exposure without degradation. Monitoring systems track fluid condition to ensure consistent boiling characteristics and dielectric performance. Maintenance protocols focus on preserving loop integrity rather than cleaning filters or adjusting airflow dampers. Therefore, operations teams shift from air management routines to fluid lifecycle management practices. Such architectural implications underscore how self-contained thermal systems reshape both engineering workflows and facility culture.
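A fluid-condition monitor of this kind might compare periodic samples against baseline values. The sketch below is hypothetical: the field names, the nominal boiling point, and the drift and dielectric limits are placeholders, not recommended values or any vendor's specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FluidSample:
    boiling_point_c: float          # measured at the same reference pressure as the baseline
    dielectric_breakdown_kv: float  # measured with the same test method as the baseline


@dataclass(frozen=True)
class FluidLimits:
    nominal_boiling_point_c: float
    max_boiling_drift_k: float
    min_dielectric_kv: float


def check_fluid(sample: FluidSample, limits: FluidLimits) -> list[str]:
    """Return human-readable alerts; an empty list means the sample is within limits."""
    alerts = []
    # A shifted boiling point may indicate contamination or degradation that
    # would change where and how vigorously the fluid boils.
    drift = abs(sample.boiling_point_c - limits.nominal_boiling_point_c)
    if drift > limits.max_boiling_drift_k:
        alerts.append(f"boiling point drifted {drift:.1f} K from nominal")
    # Falling breakdown voltage erodes the electrical isolation the bath provides.
    if sample.dielectric_breakdown_kv < limits.min_dielectric_kv:
        alerts.append(
            f"dielectric breakdown {sample.dielectric_breakdown_kv:.1f} kV "
            f"below {limits.min_dielectric_kv:.1f} kV minimum")
    return alerts


if __name__ == "__main__":
    limits = FluidLimits(nominal_boiling_point_c=50.0, max_boiling_drift_k=1.0,
                         min_dielectric_kv=30.0)
    print(check_fluid(FluidSample(50.4, 35.0), limits))
    print(check_fluid(FluidSample(51.5, 28.0), limits))
```

Returning a list of alerts rather than a single pass/fail flag lets an operations dashboard show every out-of-limit property at once.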

Aligning Phase-Change Cooling with AI Density

Extreme-density AI clusters introduce thermal profiles that differ sharply from traditional enterprise workloads. High-performance accelerators sustain near-constant utilization during model training, generating continuous and concentrated heat. Air-based systems struggle to extract this energy efficiently without escalating fan speeds and power consumption. Two-phase immersion systems address this challenge by positioning the phase transition directly at the heat source. Vaporization absorbs intense thermal output without requiring dramatic increases in coolant flow. Consequently, thermal stability persists even under prolonged computational stress.
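A back-of-envelope calculation shows why air struggles at these densities. The sketch uses approximate textbook properties for air near room temperature and the fan affinity laws (flow scales with fan speed, power with its cube, for a fixed fan and duct system); the rack loads and allowed temperature rise are hypothetical inputs.

```python
# Approximate properties of air near 20 °C at sea level.
AIR_DENSITY_KG_M3 = 1.2
AIR_CP_KJ_PER_KG_K = 1.005
M3_PER_S_TO_CFM = 2118.88  # cubic metres per second -> cubic feet per minute


def required_airflow_m3_s(heat_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow that carries heat_kw with an inlet-to-outlet rise of delta_t_k.

    Q = rho * V_dot * c_p * dT  =>  V_dot = Q / (rho * c_p * dT)
    """
    return heat_kw / (AIR_DENSITY_KG_M3 * AIR_CP_KJ_PER_KG_K * delta_t_k)


def relative_fan_power(flow_ratio: float) -> float:
    """Fan affinity law: power scales with the cube of flow for the same fan and ducting."""
    return flow_ratio ** 3


if __name__ == "__main__":
    for rack_kw in (20.0, 40.0, 80.0):  # hypothetical rack loads
        flow = required_airflow_m3_s(rack_kw, delta_t_k=15.0)
        print(f"{rack_kw:.0f} kW rack: {flow:.2f} m^3/s (~{flow * M3_PER_S_TO_CFM:,.0f} CFM)")
    # Doubling the load at a fixed temperature rise doubles the required flow.
    print(f"fan power multiplier for 2x flow: {relative_fan_power(2.0):.0f}x")
```

The cube law is an idealization; real deployments may add fans or rework airflow paths instead of simply spinning existing fans faster. Even so, it illustrates why fan energy climbs steeply as rack power grows.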

Sustained accelerator workloads demand uniform temperature distribution to prevent throttling and ensure predictable performance. Because latent heat absorption holds the bath near a single saturation temperature, two-phase systems support consistent chip behavior across clusters. Servers can also be packed more tightly, since immersion removes the need for the air gaps and airflow clearances that air-cooled racks require. Designers exploit this compactness to raise compute density per unit of floor space. Moreover, the sealed environment shields components from dust and other airborne contaminants that can degrade high-density air-cooled systems. Through this alignment, phase-change cooling becomes a structural enabler of next-generation AI infrastructure.

Thermal resilience under persistent load also influences infrastructure planning at the strategic level. Operators increasingly evaluate immersion deployments not merely for energy savings but for their potential to sustain continuous model training cycles. Cost, integration complexity, and the effort of retrofitting existing facilities nonetheless continue to shape broader adoption timelines. Developers benefit from predictable thermal envelopes that reduce unexpected performance variability during experimentation. Investors, meanwhile, assess how compact, immersion-native designs may extend the economic lifespan of facilities facing escalating density requirements. Therefore, phase-change cooling intersects with business strategy as well as engineering execution. In this context, thermodynamics evolves into a competitive dimension of intelligence scaling.

Infrastructure Co-Design: Chips, Racks, and Fluids

As immersion systems mature, collaboration between silicon architects and cooling engineers intensifies. Chip designers consider heat flux distribution during layout planning to optimize boiling efficiency at the surface level. Packaging strategies evolve to accommodate direct fluid contact without compromising reliability. Rack manufacturers redesign frames to support immersion tanks and integrate condensation structures seamlessly. Consequently, cooling ceases to function as an external accessory and becomes embedded in the hardware blueprint. This co-design philosophy accelerates innovation across the compute stack.
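Heat flux distribution matters because pool boiling has a ceiling: above the critical heat flux (CHF), vapor blankets the surface and heat transfer deteriorates sharply. A minimal sketch, assuming a chip's power map is divided into equal-area tiles and that a single CHF value applies across the whole surface; the tile powers, CHF, safety factor, and the `tiles_over_chf` helper are hypothetical.

```python
def tiles_over_chf(tile_power_w: list[list[float]], tile_area_cm2: float,
                   chf_w_per_cm2: float, safety_factor: float) -> list[tuple[int, int]]:
    """Return (row, col) of tiles whose heat flux exceeds CHF / safety_factor."""
    allowed = chf_w_per_cm2 / safety_factor
    flagged = []
    for r, row in enumerate(tile_power_w):
        for c, power in enumerate(row):
            flux = power / tile_area_cm2  # W/cm^2 leaving this tile into the fluid
            if flux > allowed:
                flagged.append((r, c))
    return flagged


if __name__ == "__main__":
    # Hypothetical 3x3 power map (W per tile); the centre tile is a hot spot.
    power_map = [
        [8.0, 12.0, 8.0],
        [12.0, 30.0, 12.0],
        [8.0, 12.0, 8.0],
    ]
    print(tiles_over_chf(power_map, tile_area_cm2=1.0,
                         chf_w_per_cm2=40.0, safety_factor=1.5))
```

The average flux of this map is about 12 W/cm², comfortably under the allowed limit, yet the centre tile is flagged. That is why layout and packaging choices that spread heat matter as much as total power.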

Fluid-aware component layouts influence material selection, connector placement, and service accessibility. Engineers must ensure compatibility between dielectric fluids and polymers, seals, and coatings across extended operating periods. Cable routing adapts to submerged conditions while preserving signal integrity and maintenance convenience. Manufacturers test assemblies within immersion environments to validate durability under repeated phase cycles. Such integration demands cross-disciplinary collaboration that spans thermodynamics, materials science, and electrical engineering. Therefore, two-phase immersion systems reshape not only facility design but also product development processes.

Over time, immersion-native architectures may redefine how the industry conceptualizes compute infrastructure. Instead of retrofitting racks with specialized cooling modules, designers could build systems where fluid dynamics inform every structural decision. Standardization efforts may emerge to harmonize tank dimensions, fluid specifications, and condensation interfaces. Supply chains would then adapt to support immersion-compatible components at scale. As this ecosystem matures, the boundary between compute hardware and thermal infrastructure will likely blur. Ultimately, two-phase immersion may influence the trajectory of extreme compute more profoundly than incremental silicon gains alone.

Cooling once occupied the periphery of data center strategy, yet phase-change immersion systems now position it at the structural core of infrastructure design. Engineers no longer treat thermodynamics as a constraint to mitigate; instead, they integrate it as a foundational element of compute architecture. By leveraging controlled vaporization and closed-loop cycles, two-phase ecosystems transform heat into a managed and predictable force. This reframing aligns closely with the demands of increasingly dense AI clusters that operate without pause. As thermodynamic behavior shapes rack layouts, chip packaging, and facility blueprints, cooling becomes inseparable from computational ambition. In that convergence of physics and intelligence scaling, the architecture of the future may increasingly reflect advances in both thermodynamic design and silicon innovation, although the relative influence of each remains an active industry debate.