AI infrastructure is entering a phase in which responsiveness, rather than raw training scale, is becoming the primary architectural priority. The result is inference cloud infrastructure designed specifically for real-time AI workloads. Large model training clusters shaped the early era of modern AI infrastructure, but production AI systems now depend more heavily on generating outputs quickly and consistently. Applications such as conversational systems, financial analytics engines, robotics controllers, and real-time recommendation platforms demand rapid responses rather than periodic batch computation.

Infrastructure designed solely for massive training jobs struggles to meet these responsiveness requirements because its architecture prioritizes throughput rather than latency. As deployment of AI services accelerates across consumer and industrial environments, infrastructure must increasingly deliver deterministic response times while supporting continuous query loads. This shift is driving the emergence of inference-focused computing environments that prioritize proximity, networking efficiency, and request handling over model creation scale.

Inference workloads operate during the stage where trained models generate predictions or outputs from new inputs, making them the operational backbone of deployed AI services. Unlike training systems that run long computational cycles on enormous datasets, inference systems execute short decision tasks repeatedly under unpredictable workloads. This distinction fundamentally alters infrastructure design priorities because the system must minimize delays while maintaining high availability under fluctuating demand. AI infrastructure planners therefore increasingly separate environments used for model training from environments dedicated to production inference. These inference-centric systems emphasize responsiveness, distributed compute placement, and dynamic workload routing rather than extreme parallel computation density. The emerging architectural pattern represents an intermediate infrastructure layer positioned between hyperscale training clusters and device-level edge processing.

The growing reliance on real-time AI services has created a technological landscape where model serving speed becomes a defining competitive factor for cloud platforms. Infrastructure operators increasingly deploy distributed clusters designed specifically to handle millions of inference queries across diverse applications. These clusters integrate specialized accelerators, optimized networking, and model serving software that collectively reduce the delay between input submission and response generation. In this environment, the cloud evolves beyond a monolithic compute utility into a distributed inference platform capable of orchestrating decision workloads across geographically dispersed systems. The resulting infrastructure layer functions as an inference cloud, optimized for continuous AI interaction rather than episodic training cycles. Understanding how these environments differ from conventional cloud architectures reveals why they may become a defining component of next-generation neocloud ecosystems.

From Training Factories to Response Engines

Training infrastructure historically evolved as a computational factory designed to process enormous datasets through iterative model optimization cycles. Massive GPU clusters perform parallel matrix computations while exchanging gradients across high-speed interconnects to update model parameters during each training iteration. These environments prioritize throughput, synchronization efficiency, and massive data ingestion capacity because training workloads run for extended durations and require sustained computational intensity. The infrastructure often consolidates thousands of accelerators within centralized facilities because communication between GPUs plays a critical role in maintaining training efficiency. Such systems operate like industrial pipelines where model updates flow continuously through tightly coupled hardware resources.

Inference infrastructure introduces a completely different operational pattern because the system must deliver predictions or generated outputs immediately after receiving user inputs. Instead of long computation cycles, inference workloads consist of short, independent requests that arrive unpredictably from applications distributed across networks. Each request triggers model execution and response generation within strict latency boundaries that determine user experience or operational reliability. This workload structure reduces the need for extremely large synchronized clusters while increasing the importance of request routing, caching mechanisms, and service orchestration. Infrastructure must remain responsive even when query volumes fluctuate dramatically throughout the day. The resulting architecture resembles a distributed service platform rather than a centralized training factory.

Infrastructure priorities shift significantly when systems move from training models to serving them in production environments. Training platforms benefit from extremely dense GPU deployments because the computational objective revolves around maximizing parallel processing capability. Inference environments instead prioritize rapid access to models, predictable execution times, and efficient handling of simultaneous requests across many users. The system must also minimize cold start delays when loading models into accelerator memory to avoid response disruptions. Engineers therefore focus on memory access speed, model caching strategies, and request routing logic rather than purely maximizing GPU count. These design priorities reflect the operational nature of inference workloads where responsiveness becomes the defining performance metric.

Training clusters also emphasize long-running stability and batch throughput, while inference systems emphasize elasticity and distribution. Production AI applications must remain available continuously because they often serve interactive workloads such as conversational interfaces or automated decision systems. Infrastructure therefore distributes inference services across multiple nodes and geographic locations to maintain consistent responsiveness even during traffic surges. Model replicas deployed across clusters allow systems to handle concurrent requests without creating processing bottlenecks. The architecture gradually evolves toward microservice-style deployments where each inference service operates independently yet cooperates through orchestration frameworks. This transition illustrates how inference clouds transform AI infrastructure from computation-centric environments into distributed response engines.

Why Inference Latency Is the New Cloud Performance Metric

Cloud performance evaluation traditionally revolved around metrics such as throughput capacity, storage bandwidth, and compute scalability. These metrics remain relevant for training workloads, but real-time AI applications introduce a different performance requirement centered on response latency. When users interact with conversational assistants, fraud detection engines, or automated logistics systems, delays directly affect system usability and reliability. Even small increases in response time can disrupt application workflows or degrade user experience in interactive systems. Infrastructure must therefore deliver consistent response times across thousands of simultaneous inference requests. This operational requirement transforms latency from a secondary metric into a defining benchmark for AI infrastructure competitiveness.
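
Consistency of response time is usually judged by tail percentiles rather than by the average, because a handful of slow requests can hide behind a healthy mean. The sketch below shows one way to summarize latency samples using the nearest-rank percentile method. The function names and the sample values are illustrative assumptions, not measurements from any real platform.

```python
import math

def percentile(samples, q):
    """Nearest-rank percentile: smallest sample with at least q% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < q <= 100:
        raise ValueError("q must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(q * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

def latency_summary(latencies_ms):
    """Summarize request latencies with median and tail percentiles."""
    return {
        "p50": percentile(latencies_ms, 50),
        "p95": percentile(latencies_ms, 95),
        "p99": percentile(latencies_ms, 99),
        "max": max(latencies_ms),
    }

# Hypothetical measurements: nine fast requests and one slow outlier.
latencies_ms = [12, 14, 13, 15, 11, 13, 14, 250, 12, 13]
summary = latency_summary(latencies_ms)
print(summary)
```

In this made-up sample the median stays low while the tail percentiles are dominated by the single slow request, which is the kind of gap that a latency-focused benchmark is meant to expose.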

Real-time inference workloads also introduce new forms of performance sensitivity because many AI systems operate within dynamic decision environments. Autonomous machines, financial analytics systems, and recommendation platforms rely on rapid interpretation of incoming data streams to produce actionable outputs. If infrastructure delays responses, the decisions generated by AI models may arrive too late to remain relevant within the operational context. Infrastructure designers therefore emphasize network efficiency, model loading speed, and runtime scheduling to ensure that inference responses occur within extremely short timeframes. These architectural adjustments create specialized cloud environments optimized specifically for real-time AI interactions. The resulting infrastructure ecosystem supports applications that require continuous computational responsiveness rather than episodic data processing.

Latency competition among cloud platforms

As AI applications become more interactive, cloud providers increasingly compete on the speed at which models can generate outputs for end users. Faster inference systems improve application responsiveness, allowing platforms to support a broader range of real-time services. Developers selecting infrastructure platforms evaluate not only cost and scalability but also the responsiveness of model serving pipelines under realistic workloads. This competitive landscape encourages providers to design distributed inference architectures that minimize network delays and optimize resource allocation. Platforms experiment with accelerator placement strategies, edge-adjacent clusters, and specialized hardware to achieve consistent performance advantages. The infrastructure race gradually shifts toward building clouds that behave more like global inference engines.

Low latency infrastructure also enables entirely new categories of AI-driven applications that rely on immediate decision feedback. Systems that analyze sensor streams, automate operational controls, or provide interactive digital assistance require responses that appear instantaneous to users. Achieving such responsiveness requires coordinated optimization across networking, compute hardware, and software orchestration layers. Engineers must ensure that model loading, request processing, and output generation occur within tightly controlled timeframes across distributed infrastructure. These requirements reinforce the importance of specialized inference clouds that prioritize latency management as a core design principle. As these environments mature, they may redefine how cloud infrastructure is evaluated in AI-driven ecosystems.

The Architectural Shift Toward Stateless AI Serving

AI inference workloads increasingly favor stateless service architectures because they simplify scaling across distributed computing environments. Stateless systems process each request independently and retain no contextual information between interactions; any context a request needs, such as conversation history, arrives with the request or is fetched from an external store. This architectural property allows infrastructure to replicate model serving instances across multiple nodes without complex synchronization requirements. When demand increases, orchestration systems simply deploy additional instances that process requests in parallel. The platform therefore scales horizontally rather than relying on increasingly large centralized machines. Such designs align well with modern cloud environments where elasticity and rapid deployment determine operational efficiency.
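
Because stateless replicas are interchangeable, capacity planning reduces to a simple estimate. The sketch below applies Little's Law (average requests in flight equals arrival rate times average latency) to size a replica pool. The function name, the notion of "slots per replica", and the utilization headroom are assumptions introduced for illustration.

```python
import math

def replicas_needed(requests_per_s, mean_latency_s, slots_per_replica,
                    target_utilization=0.7):
    """Estimate how many identical stateless replicas a request rate requires.

    Little's Law gives the average number of requests in flight; each replica
    is assumed to serve `slots_per_replica` requests concurrently and should
    run at no more than `target_utilization` of that capacity.
    """
    if requests_per_s < 0 or mean_latency_s <= 0:
        raise ValueError("rate must be >= 0 and latency must be > 0")
    if slots_per_replica <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("invalid replica capacity or utilization target")
    in_flight = requests_per_s * mean_latency_s
    usable_slots = slots_per_replica * target_utilization
    return max(1, math.ceil(in_flight / usable_slots))

# Hypothetical workload: 400 requests/s at 250 ms each, 8 slots per replica,
# keeping replicas at 50% of capacity to absorb bursts.
print(replicas_needed(400, 0.25, 8, target_utilization=0.5))
```

Doubling the request rate doubles the estimate, which is the horizontal scaling behavior described above: more traffic means more instances, not bigger machines.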

Stateless inference services also simplify failure recovery because any node can replace another without restoring complex session data. If a server handling inference requests fails, orchestration frameworks automatically redirect requests to other active nodes. This capability ensures that model serving pipelines maintain consistent responsiveness even when infrastructure components experience disruption. Engineers therefore treat inference nodes as interchangeable units that operate within a distributed pool of compute resources. The architecture supports continuous service availability while maintaining consistent response behavior across large deployments. Stateless design therefore becomes a core principle of inference cloud environments.
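
The interchangeability of nodes can be illustrated with a small dispatcher. This is a minimal sketch, assuming a round-robin policy and an externally supplied health signal; the class `StatelessPool` and its methods are hypothetical and do not correspond to any particular orchestration framework.

```python
class StatelessPool:
    """Round-robin dispatcher over interchangeable inference nodes."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.healthy = set(self.nodes)
        self._next = 0

    def mark_down(self, node):
        # Called when a health check fails; no session data needs migrating.
        self.healthy.discard(node)

    def mark_up(self, node):
        if node in self.nodes:
            self.healthy.add(node)

    def pick(self):
        """Return the next healthy node, skipping any that are marked down."""
        for _ in range(len(self.nodes)):
            node = self.nodes[self._next % len(self.nodes)]
            self._next += 1
            if node in self.healthy:
                return node
        raise RuntimeError("no healthy nodes available")

pool = StatelessPool(["node-a", "node-b", "node-c"])
print([pool.pick() for _ in range(3)])  # each node takes one request
pool.mark_down("node-b")
print([pool.pick() for _ in range(4)])  # traffic now alternates between a and c
```

Because no request depends on which node served the previous one, removing a node only changes where the next request lands.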

Containerized model serving accelerates deployment

Containerization technologies reinforce stateless inference architectures by allowing models and serving frameworks to run within portable execution environments. Containers encapsulate model binaries, runtime libraries, and configuration settings, enabling identical deployments across different infrastructure nodes. Infrastructure platforms therefore replicate inference services quickly without extensive manual configuration or dependency management. Engineers deploy containerized inference pipelines through orchestration systems that manage scaling, networking, and health monitoring automatically. This approach enables rapid rollout of updated models without interrupting ongoing inference workloads. The resulting operational flexibility supports continuous improvement cycles for AI applications.

Model serving frameworks such as TensorFlow Serving, Triton Inference Server, and similar systems integrate naturally with container orchestration platforms. These frameworks expose standardized interfaces that allow applications to send inference requests while infrastructure manages resource allocation and runtime execution. Containerized deployments ensure that each inference instance behaves consistently regardless of the underlying hardware environment. Engineers can therefore deploy models across geographically distributed clusters without rewriting application logic. This consistency accelerates the development of globally distributed inference platforms capable of handling large volumes of real-time requests. Stateless containerized architectures thus play a central role in the design of emerging inference clouds.

Micro-Clusters Instead of Mega-Clusters

Traditional AI infrastructure concentrated thousands of GPUs within massive training clusters located in hyperscale facilities. Such designs prioritized high-bandwidth communication between accelerators to support gradient synchronization during model training. Inference workloads impose different requirements because each request executes independently rather than participating in coordinated training loops. Infrastructure therefore benefits less from massive centralized clusters and more from distributed compute deployments located near application demand. Smaller GPU clusters can process inference queries effectively without requiring complex synchronization across thousands of devices. This architectural shift encourages the development of micro-clusters that serve localized workloads while remaining connected through orchestration networks.

Micro-cluster deployments improve responsiveness because inference requests travel shorter network paths before reaching compute resources. When clusters reside closer to users or application servers, the infrastructure reduces communication delays that would otherwise affect response time. Distributed deployments also allow infrastructure operators to scale capacity incrementally by adding clusters in regions experiencing increased demand. This approach contrasts with centralized hyperscale facilities where expansion requires extensive planning and infrastructure investment. Micro-clusters therefore provide operational flexibility while maintaining sufficient compute capacity for real-time inference workloads. The model reflects a broader shift toward distributed computing architectures within AI infrastructure ecosystems.

Infrastructure proximity improves user interaction

Interactive AI systems depend heavily on the perceived responsiveness of their underlying infrastructure. Applications such as conversational assistants or recommendation engines require outputs that appear almost instantaneous to users. When inference clusters reside geographically distant from the applications generating requests, network propagation delays accumulate and degrade performance. Deploying smaller clusters closer to demand sources significantly reduces these delays. The infrastructure effectively shortens the path between data input and AI response generation. This strategy aligns with distributed computing principles that prioritize workload proximity to improve overall system responsiveness.
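
The effect of distance can be estimated with simple arithmetic. The sketch below assumes that light in optical fiber travels at roughly two thirds of its vacuum speed, about 200 km per millisecond, and it ignores routing detours, queuing, and processing time, so the result is a lower bound rather than a prediction. The distances are hypothetical.

```python
# Assumption: light in optical fiber covers roughly 200 km per millisecond.
FIBER_KM_PER_MS = 200.0

def min_round_trip_ms(distance_km):
    """Lower bound on network round-trip time over a fiber path of this length."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2 * distance_km / FIBER_KM_PER_MS

# Hypothetical placements: a distant region versus a nearby micro-cluster.
for label, km in [("distant region", 4000), ("nearby cluster", 100)]:
    print(f"{label}: at least {min_round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions a 4,000 km path costs at least 40 ms per round trip before any computation starts, while a 100 km path costs about 1 ms.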

Micro-clusters also support redundancy by distributing inference capacity across multiple geographic regions. If one cluster becomes unavailable, orchestration systems redirect traffic to other clusters capable of processing the same models. This redundancy enhances service reliability while maintaining consistent response performance across different user locations. Distributed clusters therefore provide both operational resilience and latency improvements. The architecture gradually evolves toward a network of interconnected inference nodes that collectively form a global inference cloud. Each cluster operates independently yet contributes to a unified AI serving platform.

The Hidden Infrastructure of Model Serving Pipelines

AI inference appears simple from an application perspective because a request enters the system and a prediction emerges as output. Behind this interaction lies a multi-layered pipeline responsible for preparing models, routing requests, executing computations, and returning responses. The pipeline begins with model loading, where trained models move from storage into accelerator memory to enable rapid execution. Caching mechanisms store frequently used models within memory to prevent repeated loading delays during high demand periods. Request routers distribute incoming queries across available inference instances to maintain balanced workloads. These components collectively form the infrastructure foundation of modern AI inference platforms.

Inference pipelines must also manage token streaming and incremental response generation for large language models. Rather than producing a complete output in a single step, many generative models emit a sequence of tokens one at a time. Infrastructure must stream these tokens back to the requesting application while computation continues on subsequent tokens. This process requires tightly coordinated runtime environments capable of managing asynchronous communication between compute nodes and application interfaces. Efficient token streaming ensures that users receive partial responses quickly rather than waiting for the entire output to complete. These architectural details significantly influence perceived system responsiveness in conversational AI environments. (https://huggingface.co/docs/transformers/en/streaming)
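
The difference between time to first token and total generation time can be shown with a plain Python generator. This is a toy sketch: `fake_model` is a hypothetical stand-in that simulates per-token compute with a sleep, and it does not use any real serving framework or the streaming API referenced above.

```python
import time

def fake_model(prompt, delay_s=0.01):
    """Hypothetical stand-in for a generative model: yields one token at a time."""
    for token in prompt.split():
        time.sleep(delay_s)  # simulated per-token compute
        yield token

def consume_stream(token_iter):
    """Consume a token stream, recording time to first token and total time."""
    start = time.perf_counter()
    first_token_s = None
    tokens = []
    for token in token_iter:
        if first_token_s is None:
            first_token_s = time.perf_counter() - start
        tokens.append(token)  # a real client would render the token here
    total_s = time.perf_counter() - start
    return tokens, first_token_s, total_s

tokens, first_token_s, total_s = consume_stream(fake_model("low latency matters here"))
print(tokens)
print(f"first token after {first_token_s * 1000:.0f} ms, complete after {total_s * 1000:.0f} ms")
```

With streaming, the user-visible wait is the time to the first token rather than the time to the last one, which is why perceived responsiveness can improve even when total generation time is unchanged.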

Caching and routing maintain system efficiency

Efficient model serving pipelines rely heavily on caching strategies that reduce computational overhead during repeated inference requests. When a model remains loaded within accelerator memory, the system avoids the latency associated with reloading large model weights from storage. Infrastructure platforms therefore maintain memory-resident caches for frequently accessed models or model fragments. Request routing systems then direct queries toward nodes that already host the required model in memory. This strategy ensures that inference requests experience minimal delay during execution. Caching thus plays a crucial role in maintaining consistent performance across distributed inference environments.
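
A memory-resident model cache can be sketched as a least-recently-used (LRU) map. LRU is one possible eviction policy, chosen here only for illustration; the `ModelCache` class and the `loader` callable are hypothetical, and capacity is counted in models rather than bytes to keep the example short.

```python
from collections import OrderedDict

class ModelCache:
    """Keeps up to `capacity` models resident, evicting the least recently used."""

    def __init__(self, capacity, loader):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.loader = loader          # callable that loads a model by name (slow path)
        self._models = OrderedDict()  # name -> loaded model, oldest first
        self.loads = 0                # number of slow loads performed

    def get(self, name):
        if name in self._models:
            self._models.move_to_end(name)  # mark as most recently used
            return self._models[name]
        model = self.loader(name)           # cache miss: pay the load cost
        self.loads += 1
        self._models[name] = model
        if len(self._models) > self.capacity:
            self._models.popitem(last=False)  # evict least recently used
        return model

    def resident(self):
        return list(self._models)

cache = ModelCache(capacity=2, loader=lambda name: f"weights:{name}")
for name in ["chat", "ranker", "chat", "vision"]:
    cache.get(name)
print(cache.resident(), cache.loads)
```

In this run the second request for `chat` is served from memory, and loading `vision` evicts `ranker`, the model that was used least recently.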

Request routing mechanisms also monitor system load and dynamically allocate queries to the most suitable compute resources. Routing frameworks evaluate factors such as node availability, processing queue length, and network latency before assigning tasks. This intelligent distribution prevents individual nodes from becoming overloaded while other resources remain idle. Infrastructure platforms therefore maintain balanced workloads that support stable response performance under varying demand levels. Routing logic effectively transforms distributed clusters into cohesive inference platforms capable of serving global AI applications. Such orchestration capabilities form a critical layer of the emerging inference cloud architecture.
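
The routing factors named above can be combined into a single estimated cost per node. The following sketch assumes a simple additive cost model; the `Node` fields, the two penalty constants, and the node names are all invented for illustration, and a production router would calibrate them from measurements.

```python
from dataclasses import dataclass, field

# Assumed constants for this sketch, not measured values.
COLD_LOAD_PENALTY_MS = 500.0   # cost of loading a model that is not resident
PER_QUEUED_REQUEST_MS = 20.0   # expected wait added by each queued request

@dataclass
class Node:
    name: str
    healthy: bool = True
    queue_length: int = 0
    network_ms: float = 0.0
    loaded_models: set = field(default_factory=set)

def estimated_cost_ms(node, model):
    """Estimate delay for serving `model` on `node` under the assumed cost model."""
    cost = node.network_ms + node.queue_length * PER_QUEUED_REQUEST_MS
    if model not in node.loaded_models:
        cost += COLD_LOAD_PENALTY_MS
    return cost

def choose_node(nodes, model):
    """Pick the healthy node with the lowest estimated cost."""
    candidates = [n for n in nodes if n.healthy]
    if not candidates:
        raise RuntimeError("no healthy nodes available")
    return min(candidates, key=lambda n: estimated_cost_ms(n, model))

nodes = [
    Node("near-cold", network_ms=5),
    Node("far-warm", network_ms=40, loaded_models={"chat"}),
    Node("busy-warm", network_ms=5, queue_length=10, loaded_models={"chat"}),
    Node("down", healthy=False, loaded_models={"chat"}),
]
print(choose_node(nodes, "chat").name)
```

With these assumed constants the router prefers a more distant node that already holds the model over a nearby node that would need a cold load, which reflects the interplay between caching and routing.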

Networking for Millisecond AI Responses

Networking infrastructure plays a decisive role in determining the responsiveness of distributed inference systems. AI requests must travel across networks before reaching compute resources capable of executing model predictions. If the network introduces delays or congestion, even highly optimized compute infrastructure cannot deliver rapid responses. Engineers therefore design networking architectures that prioritize low latency and predictable packet delivery. High-performance switching fabrics, optimized routing protocols, and traffic prioritization mechanisms support efficient movement of inference requests across clusters. Networking thus becomes an integral component of AI infrastructure design rather than a background utility.

Distributed inference environments also require networking systems capable of handling extremely high request volumes generated by interactive applications. Service mesh architectures manage communication between microservices while enforcing policies related to routing, security, and load balancing. These frameworks ensure that inference requests reach the correct compute nodes without introducing unnecessary communication overhead. Infrastructure engineers tune networking configurations carefully to prevent packet loss or latency spikes that could disrupt real-time AI interactions. Reliable networking therefore forms the connective tissue that enables inference clouds to operate as coherent distributed systems.

Congestion resilience supports consistent performance

Inference platforms must maintain consistent responsiveness even during sudden surges in request traffic. Networking congestion can emerge when large volumes of requests compete for limited bandwidth within shared infrastructure. Engineers implement congestion management mechanisms that prioritize inference traffic and distribute requests across alternative network paths when necessary. Load balancing technologies play a key role by ensuring that network links and compute nodes receive balanced workloads. These mechanisms prevent bottlenecks that could otherwise delay AI responses. Infrastructure reliability therefore depends on careful coordination between networking and compute layers.

Predictable network performance also becomes essential when inference systems support industrial automation or machine control environments. Robotics platforms and autonomous systems rely on timely decision outputs to maintain safe operation. Network jitter or unpredictable latency can disrupt these systems by delaying critical instructions generated by AI models. Infrastructure designers therefore prioritize deterministic networking environments that guarantee consistent communication timing. Such environments enable inference clouds to support mission-critical AI applications that extend far beyond conventional consumer services. Networking reliability thus becomes a foundational requirement for the future of distributed AI infrastructure.
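
Jitter can be quantified in several ways. The sketch below uses one simple definition, the mean absolute difference between consecutive latency samples, together with a deadline miss rate. Both function names and the sample values are illustrative assumptions; other definitions of jitter, such as smoothed estimators, are also in use.

```python
def mean_jitter_ms(latencies_ms):
    """Mean absolute difference between consecutive latency samples."""
    if len(latencies_ms) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return sum(diffs) / len(diffs)

def deadline_miss_rate(latencies_ms, deadline_ms):
    """Fraction of responses that arrived later than the deadline."""
    if not latencies_ms:
        raise ValueError("latencies_ms must not be empty")
    missed = sum(1 for value in latencies_ms if value > deadline_ms)
    return missed / len(latencies_ms)

# Two hypothetical links with the same mean latency (10 ms).
steady = [10, 10, 10, 10]
bursty = [4, 16, 4, 16]
for label, samples in [("steady", steady), ("bursty", bursty)]:
    print(label, mean_jitter_ms(samples), deadline_miss_rate(samples, deadline_ms=12))
```

Both links average 10 ms, yet only the bursty one misses a 12 ms control deadline, which is why deterministic timing matters more than average speed for machine control.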

Memory Bandwidth Becomes the Bottleneck

AI inference workloads often encounter performance constraints not because of insufficient compute capability but because of memory access limitations. Large neural network models contain billions of parameters that must be retrieved from memory during each inference operation. When models run on accelerators such as GPUs or specialized AI chips, the system repeatedly transfers weights and intermediate activations between memory and compute units. If memory bandwidth cannot keep pace with these transfers, the processor waits for data instead of performing computations. Infrastructure designers therefore increasingly focus on optimizing memory architecture rather than simply increasing accelerator counts. Efficient memory systems become critical for maintaining fast inference response times.
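
A rough upper bound makes the memory constraint concrete. The sketch below assumes single-request autoregressive decoding in which every generated token requires streaming all model weights from accelerator memory once, and it ignores activations, caches, and compute time. The parameter count and bandwidth figure are hypothetical.

```python
def model_bytes(num_params, bytes_per_param):
    """Size of the model weights in bytes."""
    return num_params * bytes_per_param

def max_tokens_per_s(num_params, bytes_per_param, bandwidth_bytes_per_s):
    """Upper bound on decode speed when every token must read all weights once."""
    if num_params <= 0 or bytes_per_param <= 0 or bandwidth_bytes_per_s <= 0:
        raise ValueError("all arguments must be positive")
    return bandwidth_bytes_per_s / model_bytes(num_params, bytes_per_param)

# Hypothetical accelerator with 1 TB/s of memory bandwidth and a 7B-parameter model.
BANDWIDTH = 1e12
for label, width in [("16-bit weights", 2), ("8-bit weights", 1)]:
    bound = max_tokens_per_s(7e9, width, BANDWIDTH)
    print(f"{label}: at most about {bound:.0f} tokens/s per request")
```

Under these assumptions the bound depends only on bytes moved, not on arithmetic throughput, so halving the bytes per parameter doubles the ceiling without any change to the compute units.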

Model loading also influences memory performance because large models must move from storage into accelerator memory before execution begins. If this loading process occurs frequently, it can introduce delays that reduce inference responsiveness. Infrastructure engineers therefore deploy strategies such as memory pinning and persistent model caching to keep frequently accessed models available in high-speed memory. These techniques minimize repeated transfers from slower storage layers and ensure that inference nodes remain prepared to respond quickly. Memory hierarchy design thus becomes a major factor in determining how effectively inference clouds support continuous workloads. High-bandwidth memory technologies increasingly appear in accelerator hardware to address these demands.

Model optimization reduces memory pressure

Software optimization techniques help alleviate memory bandwidth limitations by reducing the size and complexity of deployed models. Quantization stores model parameters in lower-precision representations, for example 8-bit integers instead of 16-bit or 32-bit floating-point values, usually with only a small effect on prediction quality. Pruning methods remove redundant connections within neural networks to decrease the amount of data required during inference execution. These approaches allow inference systems to process models more efficiently while consuming fewer memory resources. Engineers frequently combine such optimizations with specialized inference runtimes that streamline data movement during computation. These adjustments collectively improve the responsiveness of inference services operating within distributed cloud environments.
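
As a concrete illustration of quantization, the sketch below applies symmetric per-tensor 8-bit quantization to a small list of weights in pure Python. This is one simple scheme among many, chosen for clarity; production runtimes typically use more refined variants, and the weight values here are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    if not weights:
        raise ValueError("weights must not be empty")
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from their int8 codes."""
    return [q * scale for q in quantized]

weights = [0.5, -1.0, 0.25, 0.0, 0.9]  # hypothetical weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes)
print(f"scale={scale:.6f}, worst reconstruction error={worst_error:.6f}")
```

Each weight is stored as a single byte plus one shared scale factor, a fourfold reduction relative to 32-bit floats, and the reconstruction error per weight is bounded by half the scale.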

Inference clouds therefore evolve toward architectures that balance compute performance with memory efficiency. High-performance accelerators remain essential for executing neural network operations, but memory systems increasingly determine real-world performance outcomes. Infrastructure designers must coordinate hardware and software strategies to ensure that models execute efficiently under real-time workloads. This balance shapes the next generation of inference infrastructure platforms capable of supporting large-scale AI services. As models continue to grow in complexity, memory bandwidth considerations will remain central to infrastructure design decisions.

Edge-Adjacent Infrastructure and the Expansion of AI Geography

AI infrastructure historically concentrated within large hyperscale data centers located in a limited number of global regions. This model worked effectively for training workloads because those processes required extensive compute resources but tolerated longer execution times. Inference workloads introduce a new requirement because they must respond quickly to user interactions or device signals. Deploying inference systems exclusively within distant hyperscale regions can introduce network delays that degrade application responsiveness. Infrastructure providers therefore increasingly deploy inference clusters closer to where data originates. This shift expands the geographic footprint of AI infrastructure across many distributed locations.

Edge-adjacent infrastructure does not fully replicate the capabilities of hyperscale training clusters. Instead, these environments host smaller compute deployments capable of executing inference tasks rapidly for nearby applications. Industrial sensors, robotics platforms, and consumer applications benefit from this proximity because inference responses arrive faster. The infrastructure effectively reduces the distance between the source of data and the compute resources processing it. As AI adoption spreads across industries, demand for such localized inference capacity continues to increase. The distributed placement of infrastructure therefore becomes a defining feature of emerging inference cloud platforms.

Hybrid architectures link edge systems and centralized clouds

Distributed inference infrastructure often operates as part of a hybrid architecture connecting edge environments with centralized cloud platforms. Training and large-scale model development still occur within powerful centralized clusters capable of supporting extensive computational workloads. After training completes, models move toward edge-adjacent inference clusters that serve applications requiring rapid responses. Infrastructure platforms coordinate these layers through orchestration frameworks that manage deployment and version control across distributed systems. This architecture enables continuous improvement of models while maintaining fast inference performance near end users. Hybrid AI infrastructure therefore combines the strengths of centralized training and distributed inference environments.

Such distributed AI geography also supports resilience because applications can access multiple inference clusters across different regions. If one cluster experiences disruption, requests can shift automatically to other available locations. This redundancy helps maintain service continuity for applications that rely on real-time AI responses. Distributed architectures therefore enhance both responsiveness and reliability across global infrastructure networks. As inference workloads grow, these geographically distributed clusters collectively form the backbone of emerging inference cloud ecosystems. Infrastructure evolution increasingly reflects the need to support AI workloads wherever they operate.

Cost Models for Inference-Dominated Cloud Platforms

Cloud pricing historically revolved around compute time and infrastructure usage measured through virtual machine or GPU allocation periods. Training workloads align naturally with this model because they occupy hardware resources for extended durations during model optimization processes. Inference workloads introduce a different usage pattern where requests arrive continuously but execute quickly. Charging infrastructure users based solely on reserved compute time does not accurately reflect how inference services operate. Cloud platforms therefore experiment with pricing structures that align more closely with request volume and processing activity. These models emphasize the cost of delivering AI responses rather than maintaining idle infrastructure capacity.

Request-based pricing reflects the operational characteristics of inference workloads more effectively than traditional compute billing methods. Platforms measure the number of inference calls processed by infrastructure rather than the duration for which compute resources remain allocated. This approach allows developers to scale applications dynamically without maintaining continuously reserved hardware capacity. Infrastructure platforms adjust resource allocation automatically in response to request traffic patterns. The pricing structure therefore aligns economic incentives with system efficiency and responsiveness. Inference cloud ecosystems increasingly adopt these flexible models to accommodate diverse AI application workloads.
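
The trade-off between the two billing models can be expressed as a break-even calculation. The prices below are hypothetical placeholders chosen for easy arithmetic and do not reflect any provider's actual rates; the function names are likewise illustrative.

```python
def reserved_cost(hours, price_per_hour):
    """Cost of keeping one accelerator reserved for the whole period."""
    return hours * price_per_hour

def per_request_cost(requests, price_per_1k_requests):
    """Cost under request-based billing."""
    return requests / 1000 * price_per_1k_requests

def break_even_requests(hours, price_per_hour, price_per_1k_requests):
    """Request volume at which both billing models cost the same."""
    return reserved_cost(hours, price_per_hour) / price_per_1k_requests * 1000

# Hypothetical prices: 2.00 per reserved accelerator-hour, 0.50 per 1,000 requests.
HOURS_PER_MONTH = 720
threshold = break_even_requests(HOURS_PER_MONTH, 2.0, 0.5)
print(f"reserved: {reserved_cost(HOURS_PER_MONTH, 2.0):.2f} per month")
print(f"break-even volume: {threshold:,.0f} requests per month")
```

Below the break-even volume, request-based billing is cheaper under these assumptions; above it, reserved capacity wins, provided a single accelerator can actually sustain that volume.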

Resource efficiency influences infrastructure economics

Infrastructure operators must also consider resource efficiency when designing inference cloud platforms. Always-available inference services require hardware resources that remain ready to respond instantly to incoming requests. If infrastructure sits idle during periods of low demand, operational costs increase without generating corresponding service value. Platforms therefore implement dynamic scaling mechanisms that activate additional compute nodes only when traffic increases. Efficient scheduling systems distribute workloads across available resources while minimizing unnecessary energy consumption. These strategies help maintain sustainable operational economics for large-scale inference infrastructure deployments.
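
A dynamic scaling decision can be sketched as a proportional rule: scale the replica count by the ratio of observed to target utilization, then clamp it to configured bounds. The rule has the same shape as the one documented for the Kubernetes Horizontal Pod Autoscaler, but the function below is a standalone illustration with assumed parameter names and limits, not that system's implementation.

```python
import math

def desired_replicas(current, observed_util_pct, target_util_pct,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling: replicas grow or shrink with observed/target utilization."""
    if current < 1 or target_util_pct <= 0 or observed_util_pct < 0:
        raise ValueError("invalid scaling inputs")
    raw = math.ceil(current * observed_util_pct / target_util_pct)
    return max(min_replicas, min(max_replicas, raw))

# Hypothetical readings against a 60% utilization target.
print(desired_replicas(4, 90, 60))   # traffic surge: scale out
print(desired_replicas(4, 15, 60))   # quiet period: scale in
print(desired_replicas(10, 100, 25)) # clamped by max_replicas
```

The lower bound keeps at least one replica warm so that the first request after a quiet period does not pay a cold start, while the upper bound caps spending during extreme surges.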

Cost efficiency also depends on selecting appropriate hardware for specific inference workloads. Some models perform effectively on specialized inference accelerators that consume less energy than general-purpose GPUs. Others require GPU acceleration to deliver acceptable performance for complex neural network architectures. Infrastructure designers therefore integrate diverse hardware options that balance cost and performance across different AI workloads. The resulting environment enables platforms to match each inference task with the most suitable compute resource. Such flexibility supports economically sustainable inference cloud operations.

Energy Efficiency as a Design Constraint for Continuous Inference

AI inference infrastructure operates continuously because deployed applications send requests throughout the day. Unlike training clusters that may run specific computational jobs before entering idle states, inference systems must remain active to process incoming queries at any moment. This continuous operation increases the overall energy demand of AI infrastructure environments. Power consumption therefore becomes an important consideration when designing inference cloud architectures. Engineers seek hardware and software solutions that maximize performance while minimizing unnecessary energy usage. Energy efficiency thus becomes a defining constraint in the evolution of inference infrastructure.

Accelerators optimized specifically for inference workloads help improve energy efficiency within these environments. Such hardware designs focus on executing neural network operations using reduced-precision arithmetic or specialized tensor processing units. These optimizations allow accelerators to perform inference computations using less energy than general-purpose processors. Infrastructure platforms combine these devices with power management techniques that adjust resource utilization dynamically. The system therefore consumes energy in proportion to the workload rather than operating at maximum capacity continuously. These strategies contribute to more sustainable AI infrastructure ecosystems.

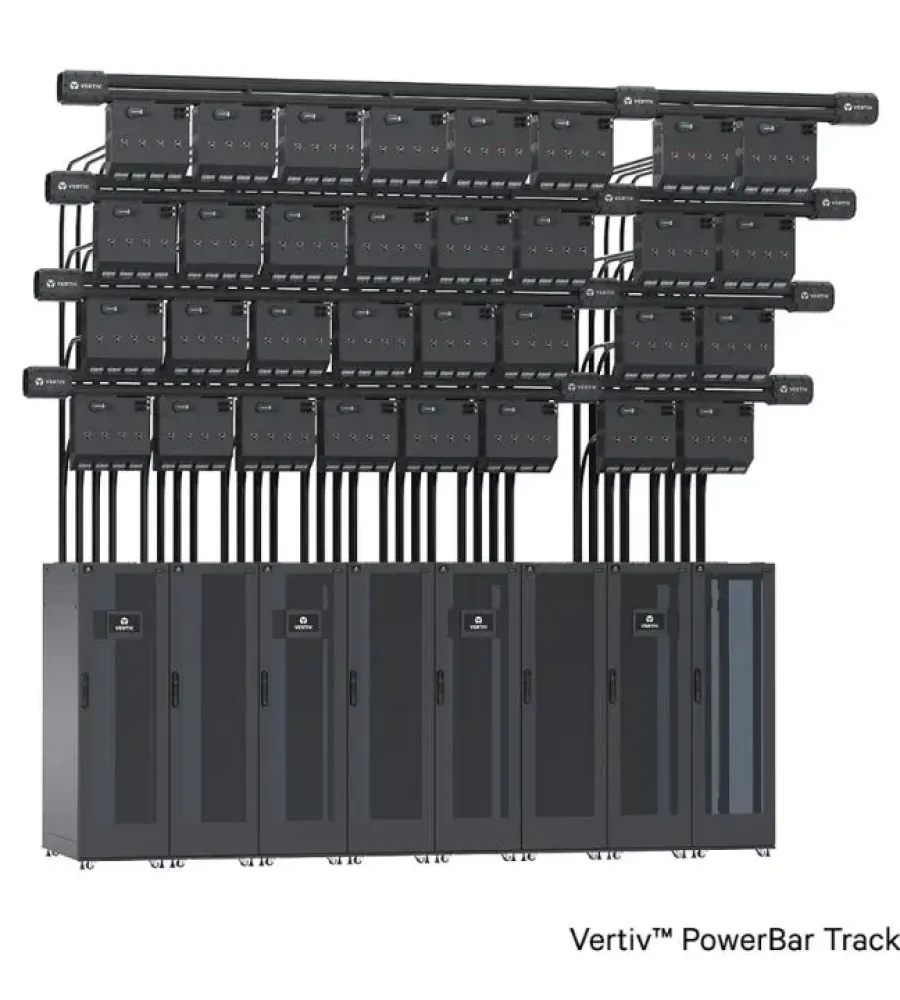

Cooling and infrastructure design influence energy consumption

Energy efficiency in inference clouds also depends on the physical design of the facilities hosting compute infrastructure. Data center cooling systems must dissipate heat generated by accelerators operating under continuous workloads. Engineers design airflow management systems and liquid cooling technologies to maintain safe operating temperatures while minimizing energy consumption. Efficient cooling infrastructure reduces the amount of additional power required to sustain hardware performance. Facility design therefore contributes directly to the sustainability of AI infrastructure deployments. Energy-aware infrastructure strategies support the long-term viability of distributed inference clouds.

As inference workloads expand globally, energy considerations will increasingly influence infrastructure planning decisions. Operators must balance performance demands with environmental responsibility and operational costs. Efficient hardware utilization and facility design help mitigate the energy footprint associated with continuous AI inference services. These considerations encourage innovation across hardware, software, and facility engineering disciplines. The resulting infrastructure ecosystem aims to deliver reliable AI services while maintaining sustainable resource consumption patterns. Energy-efficient design thus becomes an essential aspect of inference cloud architecture.

Workload Routing and Intelligent Request Placement

Inference cloud environments consist of multiple distributed clusters capable of serving AI requests. Efficient operation requires orchestration systems that determine where each request should execute. Routing frameworks evaluate several factors before assigning workloads to specific compute nodes. These factors include network latency, system load, and model availability within each cluster. Intelligent routing ensures that requests reach the most suitable infrastructure resources without unnecessary delays. The orchestration layer therefore acts as the coordination mechanism connecting distributed inference clusters into a unified platform.

Dynamic routing also improves infrastructure utilization by distributing workloads evenly across available compute resources. When one cluster approaches its processing capacity, the routing system can redirect requests toward other clusters with available capacity. This mechanism prevents individual nodes from becoming overloaded while others remain underutilized. Infrastructure therefore maintains balanced workloads that support consistent response performance across the entire platform. Intelligent routing strategies play a central role in enabling inference clouds to scale effectively across global infrastructure networks.
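The routing factors and overflow behavior described above can be sketched as a simple scoring function. This is an illustration under stated assumptions, not a description of any particular orchestration product: the `Cluster` fields, the 90 percent load cutoff, and the weight that converts load into a latency penalty are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Cluster:
    name: str
    latency_ms: float        # measured network latency from the caller
    load: float              # fraction of capacity in use, 0.0 to 1.0
    models: frozenset        # names of models currently available


def choose_cluster(clusters: Iterable[Cluster],
                   model: str,
                   max_load: float = 0.9,
                   load_penalty_ms: float = 100.0) -> Optional[Cluster]:
    """Pick the cluster with the lowest combined latency and load score.

    Clusters that lack the model, or that are at or above max_load, are
    skipped, which redirects traffic away from nearly saturated clusters.
    Returns None when no cluster qualifies.
    """
    candidates = [
        c for c in clusters
        if model in c.models and c.load < max_load
    ]
    if not candidates:
        return None
    # Lower score is better: network latency plus a penalty for busy clusters.
    return min(candidates, key=lambda c: c.latency_ms + load_penalty_ms * c.load)
```

In this sketch a nearby cluster that is close to capacity loses to a slightly more distant cluster with spare capacity, which is the balancing effect the routing layer aims for.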

Geographic placement improves latency performance

Routing decisions often incorporate geographic considerations because physical distance between users and infrastructure affects network latency. When a request originates from a particular region, the routing system typically selects a nearby cluster capable of executing the required model. This proximity reduces the time required for data transmission between application and infrastructure. Geographic request placement therefore contributes significantly to maintaining fast response times. Infrastructure platforms continuously monitor network conditions to ensure that routing decisions remain optimal. These mechanisms enable distributed inference clouds to deliver consistent performance across global user bases.
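As a first approximation, geographic placement can be sketched by choosing the region with the shortest great-circle distance to the user. The region names and approximate coordinates below are hypothetical examples, and distance is only a proxy: as noted above, a real platform would also use measured network conditions.

```python
import math
from typing import Dict, Optional, Set, Tuple

EARTH_RADIUS_KM = 6371.0
LatLon = Tuple[float, float]  # (latitude, longitude) in degrees

# Hypothetical regions with approximate coordinates.
REGIONS: Dict[str, LatLon] = {
    "frankfurt": (50.11, 8.68),
    "virginia": (39.04, -77.49),
    "singapore": (1.35, 103.82),
}


def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two points using the haversine formula."""
    lat1, lon1 = math.radians(a[0]), math.radians(a[1])
    lat2, lon2 = math.radians(b[0]), math.radians(b[1])
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    # Guard against rounding pushing h marginally above 1.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


def nearest_region(user: LatLon,
                   regions: Dict[str, LatLon],
                   serving: Optional[Set[str]] = None) -> Optional[str]:
    """Return the closest region, optionally limited to regions in `serving`
    (for example, those that currently host the requested model)."""
    candidates = [name for name in regions
                  if serving is None or name in serving]
    if not candidates:
        return None
    return min(candidates, key=lambda name: haversine_km(user, regions[name]))
```

For a user near Paris, `nearest_region((48.86, 2.35), REGIONS)` selects `"frankfurt"`. If only the other two regions host the model, the same call with `serving={"virginia", "singapore"}` falls back to `"virginia"`.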

Such routing strategies also improve system resilience by allowing requests to bypass infrastructure experiencing temporary disruptions. If a cluster encounters operational issues, routing frameworks redirect requests toward alternative clusters capable of processing them. This redundancy ensures that AI services remain available even when individual infrastructure components fail. Intelligent request placement therefore supports both performance and reliability within distributed inference cloud architectures. As AI services expand globally, these routing mechanisms become increasingly critical to maintaining stable infrastructure operations.
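The redirect behavior can be sketched as an ordered failover loop: try the preferred cluster first and move to the next one when it reports that it cannot serve the request. The exception type, the handler signature, and the function name are assumptions made for this example.

```python
from typing import Callable, List, Sequence, Tuple


class ClusterUnavailable(Exception):
    """Raised by a cluster handler that cannot serve the request."""


Handler = Callable[[str], str]  # takes a request payload, returns a response


def route_with_failover(request: str,
                        clusters: Sequence[Tuple[str, Handler]]) -> Tuple[str, str]:
    """Try clusters in preference order and return (cluster_name, response).

    Raises ClusterUnavailable only if every cluster in the list fails.
    """
    failures: List[str] = []
    for name, handler in clusters:
        try:
            return name, handler(request)
        except ClusterUnavailable as exc:
            # Record the failure and fall through to the next cluster.
            failures.append(f"{name}: {exc}")
    raise ClusterUnavailable("all clusters failed: " + "; ".join(failures))
```

Only the dedicated exception triggers failover here, so programming errors inside a handler still surface instead of being silently retried elsewhere.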

Hardware Diversity in the Inference Cloud Era

Inference clouds integrate diverse hardware architectures because different AI workloads exhibit varying computational characteristics. Graphics processing units remain widely used due to their ability to execute large neural network operations efficiently. Specialized inference accelerators also appear increasingly within infrastructure deployments because they optimize performance for specific model architectures. Central processing units continue to handle orchestration tasks and lightweight inference workloads that do not require specialized acceleration. Infrastructure platforms therefore combine several hardware categories within a unified environment. This diversity allows systems to match each workload with the most appropriate compute resource.

Different accelerator architectures emphasize distinct performance characteristics such as throughput, memory efficiency, or energy consumption. Some hardware designs prioritize high parallel computation capacity suitable for complex generative models. Others focus on efficient execution of smaller models used in real-time analytics or recommendation systems. Infrastructure designers must therefore evaluate workload requirements carefully when selecting hardware components for inference clusters. The coexistence of multiple hardware architectures enables platforms to optimize infrastructure efficiency across diverse AI applications. Hardware diversity thus becomes a defining feature of emerging inference cloud environments.

Software abstraction layers manage heterogeneous hardware

Operating heterogeneous hardware environments requires software frameworks capable of managing different accelerator architectures seamlessly. Abstraction layers allow applications to submit inference requests without needing to know the specific hardware executing the computation. The infrastructure platform translates these requests into operations suitable for available accelerators. This abstraction simplifies application development while enabling infrastructure providers to upgrade hardware without disrupting existing services. Developers therefore interact with inference clouds through standardized interfaces that hide hardware complexity. Such frameworks support continuous innovation within the underlying infrastructure environment.
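A minimal sketch of such an abstraction layer follows. The interface, the two backend classes, and the toy "model" that doubles its inputs are all hypothetical stand-ins; the point is only that the caller uses one interface while the platform swaps the hardware behind it.

```python
from typing import List, Protocol, Sequence


class InferenceBackend(Protocol):
    """Interface every hardware backend is assumed to implement."""

    def infer(self, inputs: Sequence[float]) -> List[float]: ...


class CpuBackend:
    """Stand-in for a CPU execution path (toy model: doubles each input)."""

    def infer(self, inputs: Sequence[float]) -> List[float]:
        return [2.0 * x for x in inputs]


class AcceleratorBackend:
    """Stand-in for an accelerator path that produces the same results."""

    def infer(self, inputs: Sequence[float]) -> List[float]:
        return list(map(lambda x: 2.0 * x, inputs))


class InferenceService:
    """Standardized entry point; callers never see which backend runs."""

    def __init__(self, backend: InferenceBackend) -> None:
        self._backend = backend

    def swap_backend(self, backend: InferenceBackend) -> None:
        # The platform can upgrade hardware without changing caller code.
        self._backend = backend

    def predict(self, inputs: Sequence[float]) -> List[float]:
        return self._backend.infer(inputs)
```

An application that calls `service.predict(...)` keeps working unchanged after `service.swap_backend(AcceleratorBackend())`, which mirrors how a provider can replace hardware without disrupting existing services.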

Resource scheduling systems further enhance hardware utilization by assigning workloads to accelerators capable of executing them most efficiently. If a request involves a lightweight model, the system may schedule it on a CPU or specialized inference chip rather than occupying a GPU. More complex models requiring extensive tensor operations may execute on high-performance GPU clusters. Intelligent scheduling ensures that infrastructure resources operate efficiently while maintaining consistent response performance. Hardware abstraction and scheduling therefore form essential components of scalable inference cloud architectures.
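The scheduling decision can be sketched as picking the cheapest device that satisfies a workload's memory and latency needs. Every number and field name below is a hypothetical assumption, and the linear latency estimate is a deliberate simplification of real hardware behavior.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Accelerator:
    name: str
    memory_gb: float
    ms_per_gflop: float      # assumed time to execute one GFLOP of work
    cost_per_hour: float


@dataclass(frozen=True)
class Workload:
    model: str
    memory_gb: float          # memory needed to hold the model
    gflops_per_request: float
    latency_budget_ms: float


def pick_accelerator(workload: Workload,
                     pool: Sequence[Accelerator]) -> Optional[Accelerator]:
    """Return the cheapest device that fits the model and meets its budget."""
    feasible = [
        a for a in pool
        if a.memory_gb >= workload.memory_gb
        and a.ms_per_gflop * workload.gflops_per_request <= workload.latency_budget_ms
    ]
    return min(feasible, key=lambda a: a.cost_per_hour, default=None)


# Hypothetical pool mixing the three hardware categories discussed above.
POOL = [
    Accelerator("cpu", memory_gb=64, ms_per_gflop=2.0, cost_per_hour=0.2),
    Accelerator("inference-chip", memory_gb=16, ms_per_gflop=0.2, cost_per_hour=0.8),
    Accelerator("gpu", memory_gb=80, ms_per_gflop=0.05, cost_per_hour=3.0),
]
```

With this pool, a lightweight model (1 GB, 5 GFLOPs per request, 50 ms budget) lands on the CPU because it is the cheapest device that meets the budget, while a large model (40 GB, 500 GFLOPs per request) fits only on the GPU.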

Reliability Challenges in Real-Time AI Infrastructure

Inference clouds support applications that rely on constant access to AI decision systems. Service interruptions can disrupt automated processes, delay operational decisions, or degrade user experiences across many digital platforms. Infrastructure must therefore maintain extremely high availability to support continuous AI interactions. Distributed cluster architectures help achieve this objective by spreading workloads across multiple nodes and geographic locations. If one node fails, other nodes continue processing requests without noticeable disruption. Reliability engineering thus becomes a central discipline in the design of inference infrastructure environments.

Maintaining reliability also requires monitoring systems that track infrastructure health in real time. Telemetry platforms collect information about system performance, network conditions, and hardware status across distributed clusters. When monitoring systems detect anomalies, automated remediation processes attempt to resolve issues before they affect inference workloads. Engineers analyze these telemetry signals to identify potential reliability risks and improve infrastructure resilience. Continuous monitoring therefore forms an essential component of maintaining stable AI inference services. Operational awareness enables platforms to sustain consistent performance across complex distributed environments.
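One simple way to detect anomalies in a telemetry stream is to compare each new sample with a rolling window of recent samples. The sketch below applies a z-score test to latency measurements. The class name, window size, and threshold of three standard deviations are assumptions for illustration, and production systems typically use more robust detectors.

```python
from collections import deque
from statistics import mean, pstdev


class LatencyMonitor:
    """Flags latency samples that deviate sharply from the recent window."""

    def __init__(self, window: int = 50, threshold: float = 3.0,
                 min_samples: int = 10) -> None:
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, latency_ms: float) -> bool:
        """Record a sample and return True if it looks anomalous."""
        anomalous = False
        if len(self.samples) >= self.min_samples:
            mu = mean(self.samples)
            sigma = pstdev(self.samples)
            # With zero variance there is no scale to judge against, so skip.
            anomalous = sigma > 0 and abs(latency_ms - mu) / sigma > self.threshold
        # Every sample joins the window, so a sustained shift becomes the
        # new baseline after enough observations.
        self.samples.append(latency_ms)
        return anomalous
```

A `True` result would be the signal that hands off to an automated remediation step, such as draining the affected node or alerting an engineer.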

Deterministic performance supports critical AI systems

Certain AI applications operate within environments where predictable system behavior becomes essential. Industrial automation systems, robotics platforms, and financial trading algorithms rely on rapid and reliable AI decision outputs. Variability in response time could disrupt operations or introduce unintended consequences within these environments. Infrastructure designers therefore strive to minimize latency fluctuations, particularly among the slowest responses, so that performance stays as close to deterministic as possible across inference systems. Network reliability, workload scheduling, and hardware consistency all contribute to maintaining predictable system behavior. Deterministic infrastructure performance allows inference clouds to support a broader range of mission-critical AI applications.
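Latency variability is usually quantified with percentiles rather than averages, because a small share of slow responses can hide behind a healthy mean. The sketch below uses the nearest-rank percentile definition. The function names and the choice of the 50th and 99th percentiles are assumptions for this example.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_summary(samples: Sequence[float]) -> Dict[str, float]:
    """Median, tail latency, and the spread between them."""
    p50 = percentile(samples, 50)
    p99 = percentile(samples, 99)
    return {"p50": p50, "p99": p99, "spread": p99 - p50}
```

A system whose median is 10 ms but whose 99th percentile is 200 ms has a fast typical response and an unpredictable tail, which is the kind of variability that matters for robotics or trading workloads.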

Ensuring deterministic performance often involves implementing redundancy and failover mechanisms throughout infrastructure architecture. Multiple inference instances run simultaneously so that workloads can shift immediately when failures occur. This redundancy allows systems to maintain consistent response behavior even during hardware disruptions or network disturbances. Reliability strategies therefore extend across every layer of the infrastructure stack. Distributed design, continuous monitoring, and intelligent orchestration collectively support stable AI inference operations. These capabilities ensure that inference clouds remain dependable foundations for real-time AI services.

The Emergence of a New Layer in Cloud Infrastructure

Inference clouds represent an architectural evolution driven by the operational realities of deployed artificial intelligence systems. While training infrastructure enabled the development of powerful models, production environments demand systems capable of delivering rapid responses to continuous streams of requests. Infrastructure therefore shifts from centralized training factories toward distributed response platforms optimized for real-time inference workloads. These platforms incorporate stateless architectures, distributed micro-clusters, optimized networking, and sophisticated request routing mechanisms. Hardware diversity and energy efficiency considerations further shape the design of these environments. The resulting infrastructure layer forms a distinct tier within the broader AI computing ecosystem.

This emerging infrastructure tier sits between hyperscale training clusters and device-level edge computing systems. Training environments continue to develop increasingly sophisticated AI models using massive compute resources. Edge devices handle localized inference tasks where immediate processing is essential. Inference clouds bridge these layers by delivering scalable model serving capabilities across distributed infrastructure networks. This intermediate layer allows applications to access powerful AI models while maintaining rapid response performance. The architecture therefore enables AI services to operate effectively across diverse digital environments.

As artificial intelligence continues to integrate into everyday technologies, the importance of inference infrastructure will grow steadily. Applications across industries increasingly rely on AI models to generate predictions, automate decisions, and interpret complex data streams. Infrastructure capable of delivering these responses reliably and efficiently becomes a fundamental component of modern computing ecosystems. The rise of inference clouds therefore represents more than an incremental infrastructure change. It signals the emergence of a new operational paradigm where responsiveness, distribution, and efficiency define the architecture of AI infrastructure. These systems will likely shape the next phase of cloud computing evolution.