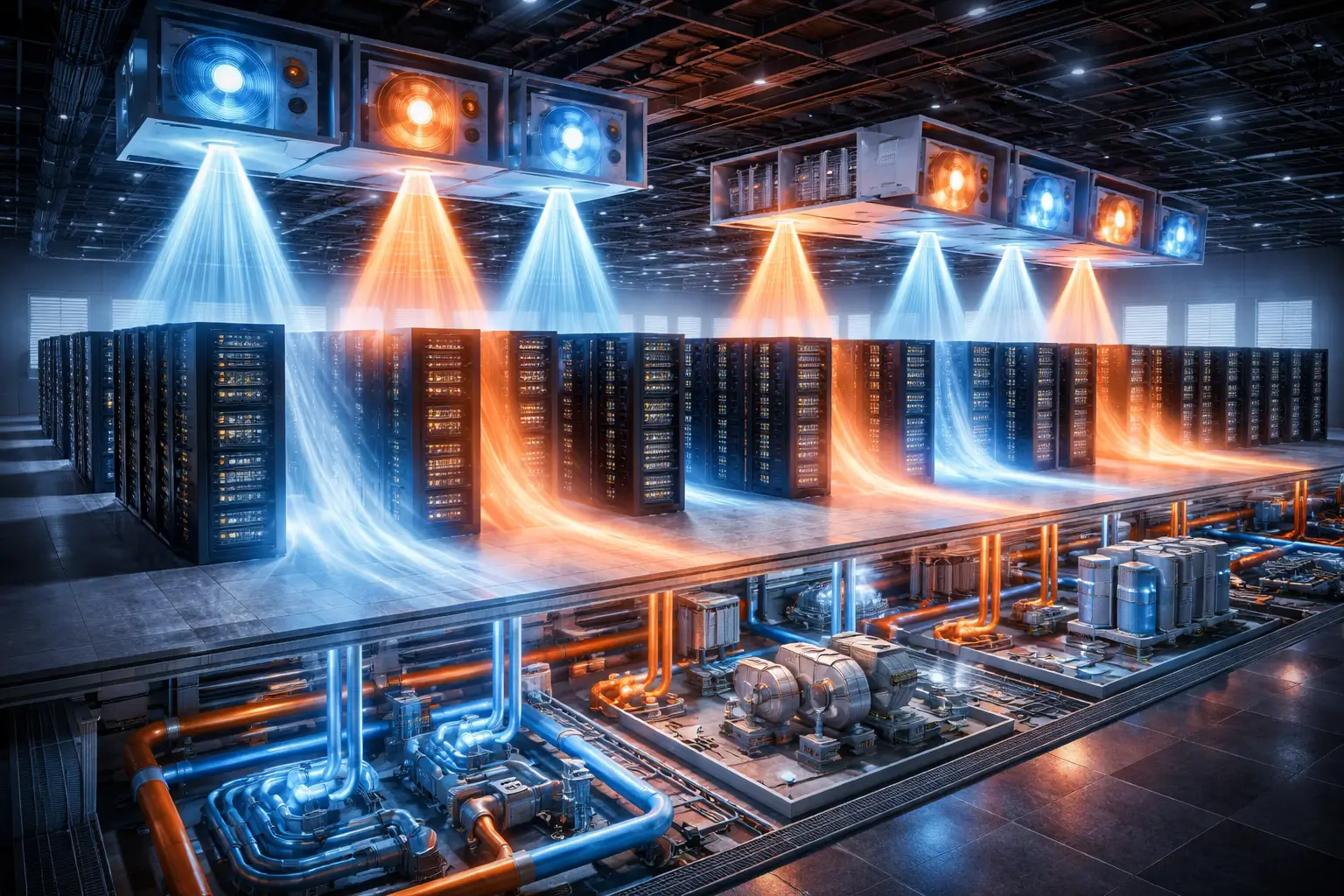

Thermal management in data centers has never been a static discipline. Each generation of compute hardware has introduced new heat density challenges that required corresponding advances in cooling architecture, and each advance has expanded the boundaries of what is considered technically feasible for facility design. The transition from mainframe computing to distributed server architectures, the shift from single-core to multi-core processors, and the emergence of high-performance storage and networking all reshaped thermal design assumptions in ways that required the industry to develop new approaches, new equipment, and new engineering methodologies. Each of these transitions was disruptive in its time, but none of them fundamentally altered the physical principles on which data center cooling was based. AI infrastructure is doing something categorically different, and the thermal management discipline is being rebuilt around a new set of requirements that conventional approaches cannot meet.

The power density requirements of modern GPU clusters for AI training and inference are generating heat loads at rack and row levels that exceed the thermal management capacity of conventional data center cooling architectures by factors that cannot be bridged through incremental improvement. A single rack supporting a dense configuration of current-generation AI accelerators can generate heat at rates that would have been considered implausible for an entire row of servers a decade ago. The engineering response to this density shift requires rethinking thermal management not as a facility service that removes heat from the compute environment, but as an integrated infrastructure layer that is architecturally coupled with compute hardware, power distribution systems, and facility structural design from the earliest stages of development planning. That reconceptualization is underway across the industry, and its implications extend to every aspect of how next-generation AI data centers are designed, built, and operated.

The most visible manifestation of this reconceptualization is the accelerating adoption of liquid cooling technologies across AI data center deployments. Liquid cooling is not new to the data center industry, but its role is changing from a specialized solution for exceptional density situations to a baseline design assumption for any facility that intends to support current or future AI workloads at scale. This shift is being driven by physics rather than preference: the thermal conductivity of water and dielectric fluids relative to air, combined with the ability of liquid cooling systems to remove heat at the source rather than managing air temperatures across a facility, makes liquid cooling the only technically viable path to supporting the rack densities that AI hardware is delivering and that future hardware generations will amplify further. Understanding the architectural dimensions of this shift, and the engineering trade-offs it introduces, is becoming a core competency for anyone involved in planning, developing, or operating AI data center infrastructure.

Heat Generation Characteristics of AI Hardware

Graphics processing units and AI-specific accelerators operate at sustained power draws that approach their rated thermal design power for extended periods during training runs, generating continuous high-density heat loads that cooling systems must handle without interruption for hours or days at a time. Conventional server hardware typically operates at a fraction of its rated thermal design power under mixed workloads, with peak loads occurring intermittently and for shorter durations. The difference between intermittent peak loads and sustained near-peak loads is significant for thermal system sizing, because a cooling system designed for intermittent peaks can ride through them on thermal mass and short-term capacity reserves, and neither is available when heat generation is continuous. AI accelerator hardware also concentrates heat generation within very small physical volumes relative to the power levels involved, creating localized heat flux densities at component surfaces that challenge the fundamental heat transfer mechanisms on which air cooling relies. Air cooling removes heat by convecting it away from component surfaces into an airstream, and the efficiency of that process depends on maintaining adequate airflow velocity across the component surface and a sufficient temperature differential between the component and the cooling air. As heat flux density increases, keeping component temperatures within limits through air cooling requires increasing airflow velocity, reducing supply air temperature, or both, and each option has practical limits and diminishing returns at the density levels that AI hardware is generating.

AI workloads also introduce thermal transients during workload transitions that create dynamic challenges for cooling system control. The shift from idle state to full training load can occur within seconds, generating a rapid increase in heat output that cooling systems must accommodate without allowing component temperatures to exceed safe operating thresholds. The reverse transition, from full load to idle, can occur equally rapidly, creating a sudden reduction in heat output that cooling systems must respond to without over-cooling components in ways that introduce thermal stress. Cooling systems designed for steady-state operation struggle with these transitions because their control loops are calibrated for gradual load changes rather than rapid step changes in heat generation. Next-generation thermal management architectures need to incorporate control systems that are specifically designed for the rapid-response requirements of AI workload transitions rather than adapted from systems designed for conventional IT load profiles. The combination of sustained high-density heat generation, localized heat flux concentration, and rapid load transients creates a thermal management requirement that is qualitatively different from anything the data center industry has previously needed to address at commercial scale.
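The difference between a control loop that only reacts to temperature and one that also uses load information can be illustrated with a toy simulation. The sketch below is a minimal lumped-mass model, not a representation of any real cooling plant: the thermal mass, actuator lag, controller gains, setpoint, and load levels are all hypothetical values chosen for illustration. It compares the peak temperature after an idle-to-training load step with and without a feedforward term driven by power telemetry.

```python
def peak_temperature_c(use_feedforward: bool, steps: int = 600, dt_s: float = 1.0) -> float:
    """Simulate a lumped thermal mass through an idle-to-training load step.

    Returns the highest temperature reached. Every constant is a hypothetical
    illustration value, not data from a real system.
    """
    heat_capacity_j_per_k = 50_000.0   # lumped thermal mass of the cooled loop
    actuator_lag_s = 5.0               # first-order lag of pumps and valves
    kp_w_per_k = 2_000.0               # proportional gain on temperature error
    ki_w_per_k_s = 100.0               # integral gain on temperature error
    setpoint_c = 45.0
    idle_w, training_w = 10_000.0, 100_000.0
    step_time_s = 60.0                 # moment the training job starts

    temp_c = setpoint_c
    cooling_w = idle_w                 # start in equilibrium at idle load
    error_integral = 0.0
    peak_c = temp_c

    for i in range(steps):
        load_w = idle_w if i * dt_s < step_time_s else training_w

        # Feedback path: PI control on the measured temperature error.
        error_k = temp_c - setpoint_c
        error_integral += error_k * dt_s
        feedback_w = kp_w_per_k * error_k + ki_w_per_k_s * error_integral

        # Feedforward path: use load telemetry as the baseline command.
        # Without it, the controller only has a fixed idle-load bias.
        baseline_w = load_w if use_feedforward else idle_w
        command_w = max(0.0, baseline_w + feedback_w)

        # Cooling output follows the command with a first-order lag.
        cooling_w += (command_w - cooling_w) * dt_s / actuator_lag_s

        # Energy balance on the lumped thermal mass.
        temp_c += (load_w - cooling_w) * dt_s / heat_capacity_j_per_k
        peak_c = max(peak_c, temp_c)

    return peak_c


if __name__ == "__main__":
    print(f"feedback only:        peak {peak_temperature_c(False):.1f} C")
    print(f"feedback+feedforward: peak {peak_temperature_c(True):.1f} C")
```

In this model the feedforward variant overshoots less because the cooling command moves as soon as the load changes, rather than waiting for a temperature error to build. How large the benefit is in practice depends on the real plant dynamics, which this sketch does not attempt to capture.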

The thermal profile of AI infrastructure also varies across different workload types in ways that complicate facility design. Training workloads generate sustained near-peak heat loads for extended periods, while inference workloads generate more variable heat profiles that shift in response to request volumes and model complexity. Facilities designed to support both training and inference workloads face the challenge of accommodating two distinct thermal load profiles within the same infrastructure, which requires cooling architectures that can handle both the sustained peaks of training and the variable demand patterns of inference without overprovisioning cooling capacity to the point that it becomes economically inefficient. Mixed-use AI facilities are therefore pushing the development of more adaptive cooling control systems that can modulate capacity in response to real-time workload conditions rather than operating at fixed setpoints calibrated for peak load scenarios.

The Limits of Air Cooling in High-Density AI Environments

Air cooling has served as the primary thermal management approach for data centers since the earliest commercial installations, and it has kept pace with successive generations of increasing compute density through improvements in airflow management, cooling unit efficiency, and containment strategies. Computer room air conditioners, precision air handling units, hot aisle and cold aisle containment systems, and in-row cooling units represent successive adaptations of the fundamental air cooling principle to progressively higher density environments. Each adaptation extended the viable density range of air cooling, but each also approached physical limits that constrained how far the approach could be pushed without fundamentally changing the heat transfer mechanism. Removing heat from a high-density AI rack through air cooling requires moving large volumes of air across server inlet faces at velocities sufficient to hold the temperature rise across the server chassis within acceptable limits. As heat density increases, the required airflow volume increases proportionally for a fixed temperature rise, and the fan power required to move that airflow through the same flow path increases roughly as the cube of velocity. The practical result is that air cooling high-density AI racks requires fan systems that consume a significant fraction of the total power delivered to the rack, reducing the power available for compute and pushing facility power usage effectiveness beyond levels that are economically or environmentally acceptable for large-scale deployments.
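The cubic relationship described above is the fan affinity law, and a few lines of arithmetic show how quickly it compounds. The sketch below assumes a fixed fan, a fixed flow path, and a fixed air temperature rise, so that airflow scales linearly with heat load; the function names and the 10 kW baseline are hypothetical and chosen only for illustration.

```python
def fan_power_ratio(flow_ratio: float) -> float:
    """Fan affinity law: power scales with the cube of flow
    for a fixed fan and flow path."""
    if flow_ratio < 0:
        raise ValueError("flow_ratio must be non-negative")
    return flow_ratio ** 3


def fan_power_ratio_for_heat(baseline_heat_kw: float, new_heat_kw: float) -> float:
    """At a fixed air temperature rise, required airflow is proportional
    to heat load, so the flow ratio equals the heat ratio."""
    if baseline_heat_kw <= 0:
        raise ValueError("baseline_heat_kw must be positive")
    return fan_power_ratio(new_heat_kw / baseline_heat_kw)


if __name__ == "__main__":
    baseline_kw = 10.0  # hypothetical baseline rack heat load
    for heat_kw in (20.0, 40.0):
        ratio = fan_power_ratio_for_heat(baseline_kw, heat_kw)
        print(f"{baseline_kw:.0f} kW -> {heat_kw:.0f} kW: fan power x{ratio:.0f}")
```

Under these assumptions, doubling the rack heat load multiplies fan power by eight, and quadrupling it multiplies fan power by sixty-four. Real designs soften this by adding fans or enlarging the flow area rather than driving the same fans harder, so the figures are best read as an upper-bound illustration of the trend.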

Maintaining component temperatures within safe operating ranges in high-density air-cooled environments also requires supply air temperatures that are low enough to provide adequate temperature differential across the server thermal stack. As rack densities increase, the required supply air temperature decreases, which requires cooling infrastructure capable of delivering chilled air at progressively lower temperatures. This requirement imposes capital and energy costs on facility cooling infrastructure that scale adversely with density, and it creates interactions with outdoor temperature conditions that limit the ability to use economizer cooling in warm climates. Facilities designed around free cooling and economization strategies, which have become standard practice for reducing operational energy consumption in moderate climates, find their economization potential progressively reduced as the supply air temperature requirements of high-density AI racks pull cooling system setpoints below the temperatures achievable through heat exchange with outdoor air during significant portions of the year.

Beyond the efficiency penalty, air cooling high-density AI infrastructure imposes structural constraints on facility design that become increasingly difficult to manage as density increases. Ceiling heights, floor loading capacities, and aisle geometries that were adequate for conventional IT density become constraints on the airflow management strategies available to designers, and the structural modifications required to accommodate supplementary cooling equipment for high-density deployments can approach the cost of purpose-built liquid cooling infrastructure in retrofit applications.

The interaction between high-density air cooling and facility infrastructure systems creates cascading design challenges that extend beyond the cooling system itself. Electrical distribution systems designed for conventional IT loads must be upsized to accommodate the higher power densities and different load factor characteristics of GPU-dense racks. Raised floor systems that provided both structural support and underfloor air distribution for conventional deployments may require reinforcement or replacement to support the weight and cooling requirements of high-density AI hardware. Fire suppression systems may require reconfiguration to provide adequate protection for high-value AI accelerator hardware in configurations that differ from the server layouts assumed during original facility design. Each of these secondary systems imposes its own cost and complexity when adapting conventional air-cooled facilities for AI workloads, and the cumulative impact frequently exceeds the direct cost of cooling system modifications in retrofit projects.

Liquid Cooling Architecture Fundamentals

Liquid cooling addresses the thermal density challenge of AI hardware by changing the heat transfer medium from air to liquid, exploiting the substantially higher thermal conductivity and heat capacity of water and dielectric fluids to remove heat at rates that air cannot approach within practical engineering constraints. The fundamental advantage of liquid cooling is not technological sophistication but physical: the thermal properties of liquids allow heat to be transferred from component surfaces to the cooling medium at the rates required by high-density AI hardware, whereas the thermal properties of air make those transfer rates physically unreachable regardless of how well the air delivery system is engineered. Direct liquid cooling systems route a cooling liquid through cold plates that are mounted in direct thermal contact with heat-generating components, removing heat at the source before it can propagate into the air within the server chassis or the facility airspace. Cold plate technology has evolved significantly in response to AI hardware requirements, with designs that accommodate the geometric constraints of GPU package configurations, the non-uniform heat flux distributions across accelerator die surfaces, and the flow rate and pressure drop requirements that are compatible with facility cooling water systems. Modern cold plate designs for AI accelerators incorporate internal flow features that distribute cooling liquid across the die surface in patterns that minimize thermal gradients and maximize heat transfer uniformity, enabling component temperatures to be maintained within tight bounds even under sustained full-load operation.
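The physical advantage can be made concrete with the sensible heat relation Q = ρ · V̇ · c_p · ΔT, which gives the volumetric flow needed to carry a given heat load at a given coolant temperature rise. The sketch below uses approximate room-temperature property values for air and water; the 100 kW rack load and 10 K temperature rise are hypothetical figures picked for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density_kg_m3: float          # approximate, near room temperature
    specific_heat_j_kg_k: float   # approximate, near room temperature

    @property
    def volumetric_heat_capacity_j_m3_k(self) -> float:
        """Heat carried per cubic metre of coolant per kelvin of temperature rise."""
        return self.density_kg_m3 * self.specific_heat_j_kg_k


AIR = Coolant("air", density_kg_m3=1.2, specific_heat_j_kg_k=1005.0)
WATER = Coolant("water", density_kg_m3=997.0, specific_heat_j_kg_k=4180.0)


def volumetric_flow_m3_s(heat_w: float, delta_t_k: float, coolant: Coolant) -> float:
    """Flow required to remove heat_w with a coolant temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (coolant.volumetric_heat_capacity_j_m3_k * delta_t_k)


if __name__ == "__main__":
    rack_heat_w = 100_000.0  # hypothetical dense AI rack
    delta_t_k = 10.0         # hypothetical coolant temperature rise
    air_flow = volumetric_flow_m3_s(rack_heat_w, delta_t_k, AIR)
    water_flow = volumetric_flow_m3_s(rack_heat_w, delta_t_k, WATER)
    print(f"air:   {air_flow:.2f} m3/s")
    print(f"water: {water_flow * 1000:.2f} L/s")
    print(f"ratio: {air_flow / water_flow:.0f}x")
```

With these property values, water carries roughly 3,500 times more heat per unit volume than air for the same temperature rise, which is why a flow of a few litres per second can do the work of several cubic metres of air per second. The calculation covers bulk heat transport only; it says nothing about the surface heat transfer at the cold plate or heat sink.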

Rear door heat exchangers represent a transitional approach that preserves compatibility with existing air-cooled server hardware while capturing a portion of the efficiency advantage of liquid cooling. These systems mount a liquid-cooled heat exchanger at the rear of server racks, where hot exhaust air from the servers passes through the heat exchanger before entering the facility airspace. The heat exchanger captures a significant fraction of the rack heat load and transfers it to the facility cooling water system, reducing the amount of heat that the facility air handling system must manage. Rear door heat exchangers do not eliminate the fan power penalty associated with air cooling within the server, and they do not support the highest density configurations that full liquid cooling enables, but they offer a path to managing moderate density increases in existing air-cooled facilities without the facility modifications required for direct liquid cooling or immersion systems. Their value is primarily as a bridge technology for operators who need to increase density in existing facilities on a timeline that does not accommodate the facility modifications required for more comprehensive liquid cooling deployment.

The facility infrastructure required to support direct liquid cooling differs from air-cooled infrastructure in ways that must be addressed during facility design rather than after construction. Facility cooling water distribution systems must be sized to deliver the flow rates required by cold plate and manifold circuits across all racks in the liquid-cooled zone, with appropriate redundancy to maintain cooling continuity during component failures or maintenance operations. Supply water temperatures must be managed within ranges that ensure adequate component cooling while avoiding condensation on cold surfaces in the facility environment. Leak detection systems must be integrated into the cooling water distribution infrastructure to provide early warning of fluid loss before it can cause equipment damage. These infrastructure requirements are well understood by experienced liquid cooling system integrators, but they represent a significant departure from the facility systems that air-cooled data center operators and facilities teams are accustomed to managing.
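The condensation constraint on supply water temperature can be checked against the dew point of the room air. The sketch below estimates dew point with the Magnus approximation, using one commonly cited coefficient set, and adds a safety margin to arrive at a minimum supply temperature. The 2 K margin and the example room conditions are hypothetical; a real design would follow the applicable facility guidelines.

```python
import math

# Magnus approximation coefficients (one commonly cited set, valid over
# typical indoor temperature ranges).
MAGNUS_B = 17.62
MAGNUS_C = 243.12  # degrees Celsius


def dew_point_c(air_temp_c: float, relative_humidity_pct: float) -> float:
    """Approximate dew point of air at the given temperature and humidity."""
    if not 0 < relative_humidity_pct <= 100:
        raise ValueError("relative_humidity_pct must be in (0, 100]")
    gamma = (math.log(relative_humidity_pct / 100.0)
             + MAGNUS_B * air_temp_c / (MAGNUS_C + air_temp_c))
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


def min_supply_water_temp_c(air_temp_c: float, relative_humidity_pct: float,
                            margin_k: float = 2.0) -> float:
    """Lowest supply water temperature that keeps pipe and cold plate
    surfaces above the dew point by margin_k (a hypothetical margin)."""
    return dew_point_c(air_temp_c, relative_humidity_pct) + margin_k


if __name__ == "__main__":
    room_temp_c, room_rh_pct = 25.0, 50.0  # hypothetical room conditions
    print(f"dew point:          {dew_point_c(room_temp_c, room_rh_pct):.1f} C")
    print(f"min supply (2 K):   {min_supply_water_temp_c(room_temp_c, room_rh_pct):.1f} C")
```

For a room at 25 °C and 50% relative humidity, the approximation gives a dew point a little under 14 °C, so supply water colder than that risks condensation on uninsulated surfaces.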

Immersion Cooling Architecture and Its Design Implications

Immersion cooling systems represent the most complete implementation of the liquid cooling principle, submerging server hardware entirely in dielectric fluid and eliminating the role of air as a heat transfer medium within the server environment. The thermal performance advantages of immersion cooling are substantial, enabling rack densities that exceed the practical limits of direct liquid cooling while also providing protection against dust, humidity, and other environmental contaminants that can degrade hardware reliability in conventional deployments. Single-phase immersion systems maintain the dielectric fluid in liquid state throughout the heat transfer process, circulating fluid through tanks where it absorbs heat from submerged hardware and then through heat exchangers where it transfers that heat to the facility cooling water circuit. Two-phase systems use fluids with low boiling points that vaporize at component surfaces during heat absorption, transferring heat through the phase change process before the vapor condenses on cooled coils within the tank and returns to liquid state. Two-phase systems achieve higher heat transfer rates at lower temperature differentials than single-phase systems, enabling them to support higher rack densities and maintain tighter component temperature control, but they introduce additional complexity in fluid management and containment that increases both capital cost and operational requirements relative to single-phase alternatives. The selection between single-phase and two-phase immersion depends on the specific density requirements of the planned workloads, the facility infrastructure available to support fluid management, and the operational maturity of the team responsible for maintaining the systems.

Deploying immersion cooling systems requires facility infrastructure that differs substantially from the requirements of air-cooled or direct liquid-cooled environments. Immersion tanks require structural floor loading capacity that exceeds the requirements of conventional server racks, as the combined weight of the tank, dielectric fluid, and submerged hardware is substantially greater than the weight of equivalent rack-mounted equipment. Facility water systems must be designed to provide the flow rates and supply temperatures required by the tank heat exchangers, with appropriate redundancy and isolation capabilities to ensure cooling continuity during maintenance operations. Fluid management infrastructure must be provided for monitoring fluid quality, replenishing fluid lost through evaporation or maintenance activities, and safely handling fluid during server insertion and removal operations. These requirements are manageable within purpose-built AI data center designs but can present significant challenges in retrofit applications where existing facility infrastructure was not designed to accommodate them. Server hardware procurement strategies also need to adapt for immersion deployment, as not all server configurations are certified for immersion use by their manufacturers, and the selection of compatible hardware can constrain the choice of AI accelerator platforms available to facility operators.
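A first-pass floor loading check for an immersion tank is simple arithmetic: add the empty tank, the fluid, and the hardware, then divide by the footprint. The sketch below assumes a uniformly distributed load and uses entirely hypothetical masses, fluid density, footprint, and floor ratings. It is a screening calculation only; an actual assessment would consider point loads and would be carried out by a structural engineer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImmersionTank:
    empty_tank_kg: float
    fluid_volume_m3: float
    fluid_density_kg_m3: float   # varies widely between dielectric fluids
    hardware_kg: float
    footprint_m2: float

    @property
    def total_mass_kg(self) -> float:
        fluid_kg = self.fluid_volume_m3 * self.fluid_density_kg_m3
        return self.empty_tank_kg + fluid_kg + self.hardware_kg

    @property
    def floor_load_kg_m2(self) -> float:
        """Average distributed load over the tank footprint."""
        return self.total_mass_kg / self.footprint_m2


def within_floor_rating(tank: ImmersionTank, rated_kg_m2: float) -> bool:
    """True if the tank's average distributed load is within the floor rating."""
    return tank.floor_load_kg_m2 <= rated_kg_m2


if __name__ == "__main__":
    # All values below are hypothetical illustration figures.
    tank = ImmersionTank(
        empty_tank_kg=400.0,
        fluid_volume_m3=1.0,
        fluid_density_kg_m3=850.0,
        hardware_kg=600.0,
        footprint_m2=2.0,
    )
    print(f"total mass: {tank.total_mass_kg:.0f} kg")
    print(f"floor load: {tank.floor_load_kg_m2:.0f} kg/m2")
    print(f"ok on a 1200 kg/m2 floor: {within_floor_rating(tank, 1200.0)}")
```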

The operational procedures required for immersion-cooled systems differ significantly from those applicable to air-cooled or direct liquid-cooled environments, and building operational capability for immersion cooling requires deliberate investment in training and knowledge development. Server insertion and removal from immersion tanks requires procedures that prevent fluid contamination, ensure adequate draining before handling, and maintain fluid cleanliness standards that protect both hardware reliability and fluid system longevity. Fluid quality monitoring requires regular sampling and analysis to detect contamination, degradation products, and changes in dielectric properties that could affect cooling performance or hardware safety. Maintenance activities that are routine in air-cooled environments require modified procedures in immersion environments that account for the fluid environment and the specialized tooling required for working with immersed hardware. Organizations that are building immersion cooling capabilities for the first time frequently underestimate the operational learning investment required, and the early deployments that have been most successful have been those where operators invested in training and procedure development before bringing systems into production.

Integrated Thermal and Power Architecture

The most significant architectural shift in next-generation AI data centers is the integration of thermal management and power distribution into a unified infrastructure layer rather than treating them as separate facility systems. This integration is driven by the recognition that thermal and power conditions within a high-density AI data center are tightly coupled: the heat generated by compute hardware is a direct function of the power delivered to it, and the efficiency of cooling systems affects both the total power consumed by the facility and the thermal environment within which compute hardware operates. Designing these systems independently and then integrating them during construction produces suboptimal outcomes that are increasingly apparent as density levels push against the limits of what conventional facility designs can accommodate. Engineers who approach thermal management and power distribution as an integrated design problem from the earliest stages of project planning can identify optimization opportunities that are not available when the two systems are designed and procured independently. Power distribution architectures that minimize conversion losses and deliver power to compute hardware at higher voltages reduce the heat generated per unit of useful compute work, which directly reduces the cooling load that thermal management systems must handle. Cooling systems that recover heat at temperatures suitable for productive reuse can offset facility energy consumption in ways that improve overall power usage effectiveness beyond what is achievable through cooling efficiency improvements alone.
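The coupling between power distribution and cooling can be sketched with a simple energy balance. The model below assumes that all electrical input, including conversion losses, ends up as heat inside the cooled space, and that cooling power equals heat removed divided by a coefficient of performance. It ignores lighting and other overheads, and the efficiency and COP figures are hypothetical values for illustration.

```python
def facility_pue(it_load_kw: float, distribution_efficiency: float,
                 cooling_cop: float) -> float:
    """Power usage effectiveness under a simplified energy balance.

    Assumes conversion losses are dissipated inside the cooled space and
    that no other facility overheads exist.
    """
    if it_load_kw <= 0:
        raise ValueError("it_load_kw must be positive")
    if not 0 < distribution_efficiency <= 1:
        raise ValueError("distribution_efficiency must be in (0, 1]")
    if cooling_cop <= 0:
        raise ValueError("cooling_cop must be positive")

    # Power drawn upstream of the distribution chain to deliver the IT load.
    electrical_input_kw = it_load_kw / distribution_efficiency
    # IT power and conversion losses both become heat that must be removed.
    heat_to_remove_kw = electrical_input_kw
    cooling_power_kw = heat_to_remove_kw / cooling_cop
    return (electrical_input_kw + cooling_power_kw) / it_load_kw


if __name__ == "__main__":
    it_kw = 1000.0  # hypothetical IT load
    for efficiency in (0.92, 0.96):  # hypothetical distribution efficiencies
        pue = facility_pue(it_kw, efficiency, cooling_cop=5.0)
        print(f"distribution efficiency {efficiency:.2f}: PUE {pue:.3f}")
```

In this simplified model, improving distribution efficiency pays twice: it cuts the conversion loss itself, and it cuts the cooling energy that would have been spent removing that loss as heat. That compounding is the reason for treating the two systems as one design problem.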

The heat that liquid cooling systems capture from high-density AI racks, at temperatures high enough to be useful, represents an energy resource that is beginning to attract serious attention from both facility operators and external energy users. Liquid cooling systems that operate at elevated supply temperatures can deliver waste heat at temperatures suitable for use in district heating systems, industrial process applications, and other heat consumers that can be co-located with or connected to data center facilities. Several deployments in Northern Europe have demonstrated the operational viability of data center heat recovery at scale, with facility operators receiving revenue or cost offsets from heat utility customers that partially defray cooling infrastructure capital costs. This model is not universally applicable, as it requires proximity to heat consumers and regulatory frameworks that support heat utility arrangements, but it represents a direction in which thermal management strategy is evolving from pure cost management toward value creation.

The control architecture for cooling systems in next-generation AI data centers must also accommodate the dynamic load characteristics of AI workloads in ways that conventional building management system approaches cannot adequately address. AI training workloads generate rapid changes in heat output that require cooling system response times measured in seconds rather than the minutes that conventional cooling control loops are designed around. Advanced control architectures that integrate real-time telemetry from compute hardware with predictive models of workload behavior are being developed and deployed in leading AI data center facilities, enabling proactive cooling adjustments that anticipate load changes rather than reacting to them after thermal thresholds have been crossed.

The Path Forward for Thermal Management Architecture

The evolution of thermal management architecture in AI data centers is not approaching a stable endpoint. GPU and AI accelerator hardware roadmaps indicate continued increases in per-chip power consumption and heat flux density over the next several hardware generations, which will require cooling architectures to continue advancing beyond the current state of the art. The transition from air cooling to direct liquid cooling to full immersion that is underway in leading AI data center deployments represents the early stages of a longer architectural evolution that will ultimately produce facility designs that are fundamentally different from the air-cooled data centers that dominated the industry for the first three decades of commercial internet infrastructure. Operators and developers who invest in understanding and implementing advanced thermal management architectures now are building expertise and operational knowledge that will compound in value as hardware densities continue to increase and the gap between air-cooled and liquid-cooled facility capabilities widens further.

The organizational capability required to design, deploy, and operate liquid-cooled AI data center infrastructure is substantially different from the capability required for conventional air-cooled facilities. Fluid dynamics expertise, materials compatibility knowledge, and operational experience with liquid handling systems are not widely distributed across the existing data center workforce, and building that capability requires investment in training, hiring, and knowledge development that takes time to produce results. Facilities teams at operators who have not begun that investment are accumulating a capability gap relative to organizations that have been building liquid cooling expertise through early deployments, and that gap will become increasingly consequential as the proportion of new AI data center capacity deployed with liquid cooling continues to grow. The vendors and system integrators who serve the AI data center market are responding to this capability gap by developing managed service offerings, remote monitoring platforms, and standardized maintenance procedures that reduce the operational burden on operator facilities teams, but these services supplement rather than replace the need for internal capability development.

The supply chain for liquid cooling system components is also evolving in ways that affect how operators plan and execute liquid cooling deployments. Cold plate manufacturers, dielectric fluid suppliers, heat exchanger fabricators, and fluid distribution system integrators are all scaling production capacity in response to growing demand, but lead times for specialized components remain longer than those for conventional air cooling equipment. Operators who are planning liquid cooling deployments need to incorporate component procurement timelines into their project schedules and establish supplier relationships early in the development process to secure capacity commitments that protect their delivery timelines. The supply chain maturity for liquid cooling is improving rapidly as volumes grow and manufacturers invest in capacity expansion, but it has not yet reached the reliability levels that operators expect from mature air cooling equipment supply chains, and project teams that do not account for this difference are frequently surprised by procurement delays that affect their construction and commissioning schedules.

Thermal management has always been a core data center engineering discipline, but it has rarely been a source of competitive differentiation between operators. In the AI era, it is becoming exactly that. The facilities that can support the density levels required by current and future AI hardware generations, delivered reliably and at operational efficiency levels that make them economically viable for hyperscaler and enterprise customers, will capture a disproportionate share of AI infrastructure demand. The facilities that cannot support those density levels will be limited to workloads that conventional air-cooled infrastructure can accommodate, a market segment that will grow more slowly and command lower returns than AI-optimized infrastructure. Thermal management architecture is no longer a technical detail to be resolved during detailed design. It is a strategic decision that determines what markets a facility can serve and what competitive position its operator can sustain in the AI infrastructure market over the long term.

The operators who begin building liquid cooling expertise, supply chain relationships, and integrated thermal-power design capabilities now will find that those investments produce compounding returns as the AI infrastructure market continues to scale. The facilities they build will be capable of supporting hardware generations that have not yet been released, giving them a longevity advantage over facilities that are already approaching their thermal management limits with current hardware. The organizations that defer this investment until liquid cooling becomes an unavoidable requirement will find that catching up requires more time and capital than getting ahead of the transition would have. Thermal management architecture is the infrastructure decision that determines everything else about an AI data center’s competitive position, and treating it as a strategic priority rather than a technical afterthought is the defining characteristic of operators who are building for the long term.