Meta Platforms Inc. has deepened its dependence on external compute infrastructure, committing an additional $21 billion to AI cloud capacity from CoreWeave. The agreement, disclosed Thursday, extends from 2027 through 2032 and builds on an earlier $14.2 billion contract signed in September, which runs until 2031. The combined value of Meta’s engagement with CoreWeave now totals $35.2 billion, placing it among the largest compute procurement commitments of the AI era. The move underscores a structural shift in how hyperscalers approach infrastructure: balancing owned assets with outsourced, high-density compute.

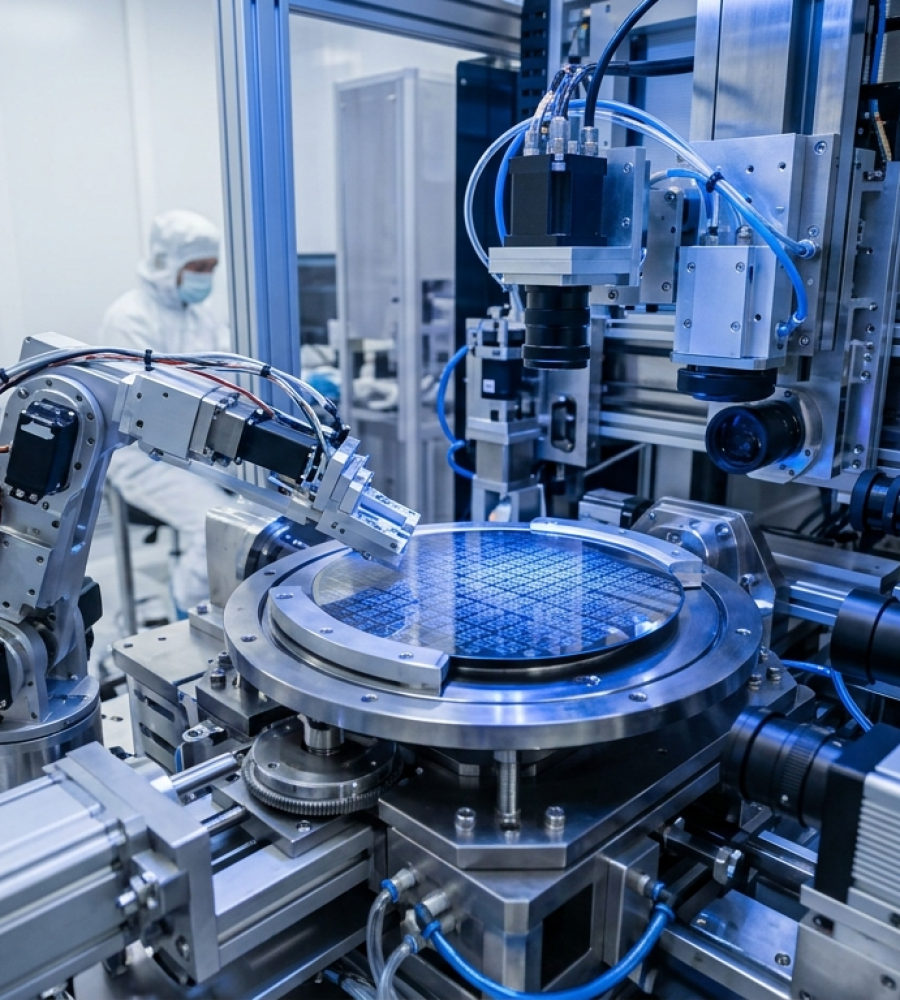

CoreWeave operates a rapidly expanding network of data centers densely packed with GPUs supplied by Nvidia. These processors serve as the backbone of modern AI workloads, from training frontier models to deploying inference systems at scale.

The company has positioned itself as a specialized provider of ready-to-deploy AI compute, offering an alternative to traditional cloud vendors. Its infrastructure has become particularly valuable for companies facing immediate capacity constraints in a market where GPU supply remains tight and deployment timelines stretch into years.

Meta continues to invest heavily in its own infrastructure footprint. The company recently unveiled plans for a $10 billion data center project in Texas, reflecting its long-term ambition to control critical compute assets.

However, internal buildouts cannot match the pace required to stay competitive in the current AI cycle. The velocity of model development and deployment has forced companies to secure near-term capacity through external providers. This dual-track approach, owning infrastructure while leasing high-performance capacity, signals a broader industry pattern. Companies are no longer choosing between build and buy; they are executing both simultaneously.

CoreWeave’s Edge: Performance and Developer Preference

CoreWeave’s leadership attributes its growing client base to performance differentiation. CEO Mike Intrator emphasized the company’s value proposition in a recent interview.

“Sure, they can buy compute,” Intrator told CNBC, referring to Meta and its peers. “Yet, for some reason, all these people who can buy compute also feel the need to buy it from us, because of the quality of the product that we deliver.”

The company’s infrastructure has also resonated with AI engineers. Intrator pointed to talent migration trends as a reinforcing factor: “They hired from across the space, people who have used infrastructure from all different folks, and they came back to us,” he said. This dynamic highlights a critical but often overlooked factor in infrastructure decisions: developer familiarity and workflow efficiency.

Meta’s Portfolio Strategy for AI Infrastructure

Meta has framed the agreement as part of a diversified infrastructure strategy rather than a single-provider dependency. A company spokesperson described the deal as part of its “portfolio-based approach to infrastructure, as we invest in capacity for our AI ambitions.”

This approach reflects a deliberate effort to mitigate risk while optimizing performance across workloads. By distributing compute across multiple providers and architectures, Meta gains flexibility in managing both training and inference demands.

Multi-Partner Ecosystem Expands Beyond CoreWeave

The CoreWeave agreement represents only one layer of Meta’s broader compute strategy. The company has also aligned with Advanced Micro Devices for AI chip supply, diversifying beyond Nvidia’s ecosystem. In parallel, reports indicate that Meta has secured a separate $10 billion arrangement with Google to access Tensor Processing Units (TPUs) for inference workloads.

These parallel partnerships illustrate how leading AI players are constructing multi-vendor stacks to hedge against supply bottlenecks and optimize performance across different stages of the AI lifecycle.

Meta’s aggressive spending reflects intensifying competition with rivals such as OpenAI and Google. The race to build more capable models has shifted the battleground from algorithms alone to the infrastructure that powers them.

Access to compute has emerged as a primary constraint in that race. Companies that secure reliable, scalable GPU capacity gain a decisive advantage in training larger models faster and deploying them more efficiently. Meta’s expanded deal signals that infrastructure is no longer a background function; it is a frontline competitive asset.

The New Economics of AI Compute

The scale of Meta’s commitment highlights a broader economic transformation in the AI sector. Capital allocation is increasingly directed toward compute rather than traditional software development. High-performance infrastructure now dictates the ceiling of innovation. As a result, companies are willing to lock in long-term, multi-billion-dollar agreements to secure capacity years in advance.

The Meta-CoreWeave partnership therefore reflects more than a procurement decision; it marks a structural shift in how AI leaders compete, invest, and scale in an era defined by compute scarcity.