Artificial intelligence is no longer just software. It is infrastructure. And at the heart of that infrastructure lies silicon.

For decades, computing power expanded quietly in the background, enabling the internet, smartphones, and cloud services. Today, the equation has shifted. Artificial intelligence has transformed computing capacity from a supporting utility into the defining resource of the digital economy.

The surge in demand for AI processing hardware signals something deeper than technological progress. It marks the beginning of a geopolitical and economic contest over the world’s most critical digital resource: advanced chips. Just as oil fueled the industrial age, silicon is rapidly becoming the foundation of the AI age.

The New Resource Economy

Every technological era revolves around a critical resource. Coal powered the steam revolution. Oil powered the automotive age. Data powered the internet. Artificial intelligence, however, runs on something more tangible: computing power. Training modern AI systems requires enormous computational capability. Large-scale models ingest vast datasets and process them through billions or even trillions of parameters. This level of complexity demands hardware specifically designed for AI workloads—processors optimized to perform massive parallel computations.
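To give a sense of scale, the short sketch below runs a back-of-envelope estimate of training cost. It assumes a commonly cited rule of thumb for dense transformer models, roughly 6 floating-point operations per parameter per training token. Every number in it (model size, token count, chip count, per-chip throughput, utilization) is hypothetical and chosen only for illustration, not taken from any real system.

```python
SECONDS_PER_DAY = 86_400


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training compute.

    Assumption: about 6 FLOPs per parameter per token, a rule of thumb
    for dense transformer models, not an exact figure.
    """
    return 6.0 * params * tokens


def training_days(params: float, tokens: float, num_chips: int,
                  peak_flops_per_chip: float, utilization: float = 0.4) -> float:
    """Approximate wall-clock days to train on a cluster.

    `utilization` is the assumed fraction of peak throughput that the
    cluster actually sustains during training.
    """
    sustained_flops_per_second = num_chips * peak_flops_per_chip * utilization
    seconds = training_flops(params, tokens) / sustained_flops_per_second
    return seconds / SECONDS_PER_DAY


# Hypothetical scenario: 70 billion parameters, 1.4 trillion tokens,
# 1,000 accelerators at 3e14 FLOP/s peak each, 40% sustained utilization.
days = training_days(70e9, 1.4e12, 1_000, 3e14, 0.4)
print(f"Total compute: {training_flops(70e9, 1.4e12):.2e} FLOPs")
print(f"Estimated training time: {days:.1f} days")
```

Under these assumed figures, a thousand accelerators would stay busy for close to two months on a single training run, which suggests why access to hardware, rather than ideas alone, so often sets the pace.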

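Traditional CPUs, designed for fast sequential execution on a relatively small number of cores, cannot handle these workloads efficiently. Instead, specialized processors such as GPUs and AI accelerators, which carry out thousands of arithmetic operations in parallel, have emerged as the backbone of modern machine learning infrastructure.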

This shift has elevated semiconductor manufacturing from an industrial sector to a strategic pillar of the global economy. The world is now witnessing a resource race, not for fossil fuels, but for silicon capable of powering artificial intelligence.

Why AI Models Are Changing the Hardware Game

Artificial intelligence systems have evolved dramatically over the past decade. Earlier machine learning models could be trained on relatively modest infrastructure. Today’s AI architectures are far larger and more sophisticated. Training them requires enormous clusters of high-performance processors working simultaneously to process massive datasets.

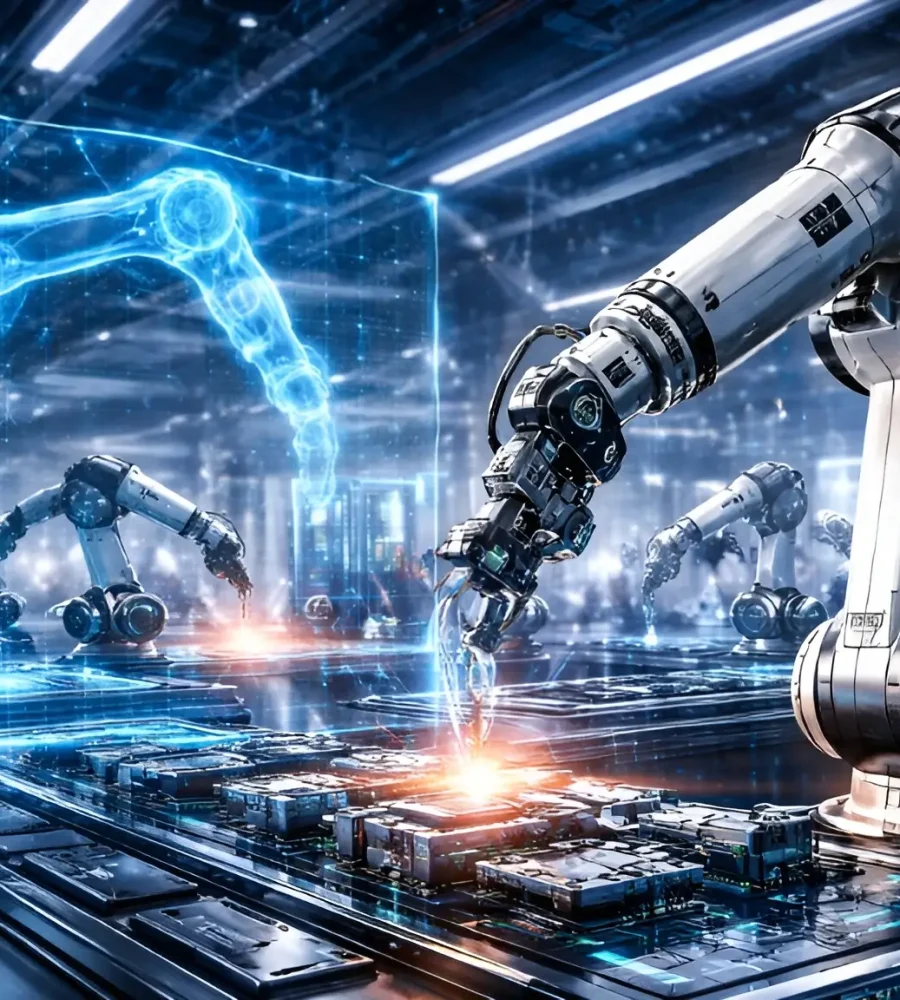

These systems operate within specialized computing environments where thousands of processors collaborate across high-speed networks. Such infrastructures form the backbone of modern AI research, enterprise analytics, and automation technologies. The implication is clear: innovation in artificial intelligence increasingly depends on access to powerful computing hardware.

Organizations that lack sufficient processing resources face a growing disadvantage in developing advanced AI systems. This dynamic is reshaping how companies and governments think about technological competitiveness. In the AI economy, computing power is becoming the ultimate strategic asset.

Silicon Valley Meets Industrial Policy

The surge in AI chip demand is not happening in isolation. It is unfolding alongside a new wave of industrial policy across the world. Governments are investing heavily in artificial intelligence infrastructure as part of broader efforts to strengthen technological sovereignty. Advanced computing facilities are being developed to support research, data processing, and next-generation AI applications.

These investments are transforming semiconductor manufacturing into a focal point of national strategy. Access to advanced chips increasingly influences economic security, innovation capacity, and geopolitical influence. Countries that can produce or secure high-performance AI processors hold a structural advantage in developing emerging technologies such as robotics, automation, and autonomous systems.

In other words, the competition for AI chips is also a competition for future technological leadership. The implications extend far beyond the technology sector.

The Infrastructure Behind the AI Boom

Artificial intelligence breakthroughs often capture headlines, but the real story lies in the infrastructure supporting them. Behind every major AI system lies a massive network of processors, servers, cooling systems, and data pipelines operating within specialized data centers.

These facilities function as the factories of the digital age. Instead of manufacturing physical goods, they manufacture intelligence, processing vast datasets to train models capable of performing complex tasks. The demand for such infrastructure has expanded rapidly as industries adopt AI-driven tools.

Finance uses machine learning to detect fraud and optimize trading strategies. Healthcare applies AI to analyze medical imaging and accelerate drug discovery. Logistics companies deploy algorithms to streamline supply chains and improve forecasting. All of these applications depend on reliable access to high-performance computing resources. As AI adoption spreads, so does the demand for the silicon powering it.

Democratizing Access to Compute

Yet the AI chip boom raises an important question: who gets access to this computing power?

Large technology companies still dominate the global AI infrastructure landscape because they can build massive data centers and deploy thousands of specialized processors. For startups, researchers, and smaller organizations, building similar infrastructure is often financially impossible.

This gap has led to the rise of cloud-based AI infrastructure platforms, which provide on-demand access to powerful computing resources without requiring organizations to own physical hardware. Major cloud providers such as Amazon Web Services, Microsoft Azure, and Google Cloud, which collectively dominate global cloud infrastructure, now offer GPU-accelerated environments that allow organizations of all sizes to train and deploy machine-learning models at scale through remote infrastructure.

These cloud platforms deliver high-performance GPUs and specialized accelerators as virtualized resources, enabling users to run compute-intensive workloads such as deep learning training, scientific simulations, and large-scale data analysis without maintaining their own infrastructure.

In effect, cloud AI infrastructure has become the gateway through which much of the world accesses advanced computing power. As AI models grow larger and more demanding, the role of cloud-based compute platforms in expanding participation in the AI ecosystem is likely to become even more important.

Despite the excitement surrounding artificial intelligence, the industry’s true constraint is not talent or data. It is compute. Training cutting-edge models consumes enormous amounts of energy and processing capacity. As models grow larger and more complex, their computational requirements continue to expand.

This creates a reinforcing cycle. More powerful chips enable more sophisticated AI systems. Those systems, in turn, drive demand for even more powerful chips. The result is a feedback loop accelerating the growth of the semiconductor and computing infrastructure sectors. Industry analysts widely expect the AI chip market to remain one of the fastest-growing segments of the global technology industry in the coming years. But growth alone does not capture the scale of transformation underway. Artificial intelligence is shifting computing from a commodity into a strategic resource.

Why Silicon Is the New Oil

Comparisons between silicon and oil are increasingly common, and not without reason. Oil powered the industrial economy because it enabled mobility, manufacturing, and energy production. Silicon powers the digital economy because it enables intelligence.

Every AI model, cloud service, or automated system ultimately depends on semiconductor hardware performing billions of calculations per second. Control over that hardware carries profound implications. It determines which countries lead technological development. It shapes which companies dominate digital markets. And it influences how quickly artificial intelligence evolves. The AI chip gold rush therefore represents more than a technology trend. It signals a structural shift in the global economy.

The Next Phase of the AI Race

As artificial intelligence continues advancing, demand for computing power will only intensify. Machine learning systems are expanding into robotics, autonomous systems, and complex decision-making environments. These applications require ever-greater processing capabilities and specialized hardware architectures. The global AI technology race is accelerating accordingly. Companies and governments alike are investing billions in advanced computing infrastructure to secure their position in this emerging landscape.

In the coming decade, access to powerful processing resources may prove just as important as access to capital, talent, or data. The winners of the AI era may ultimately be those who control the silicon beneath it. And in that sense, the analogy holds: in the age of artificial intelligence, silicon is not merely a material.

It is the fuel of the future.