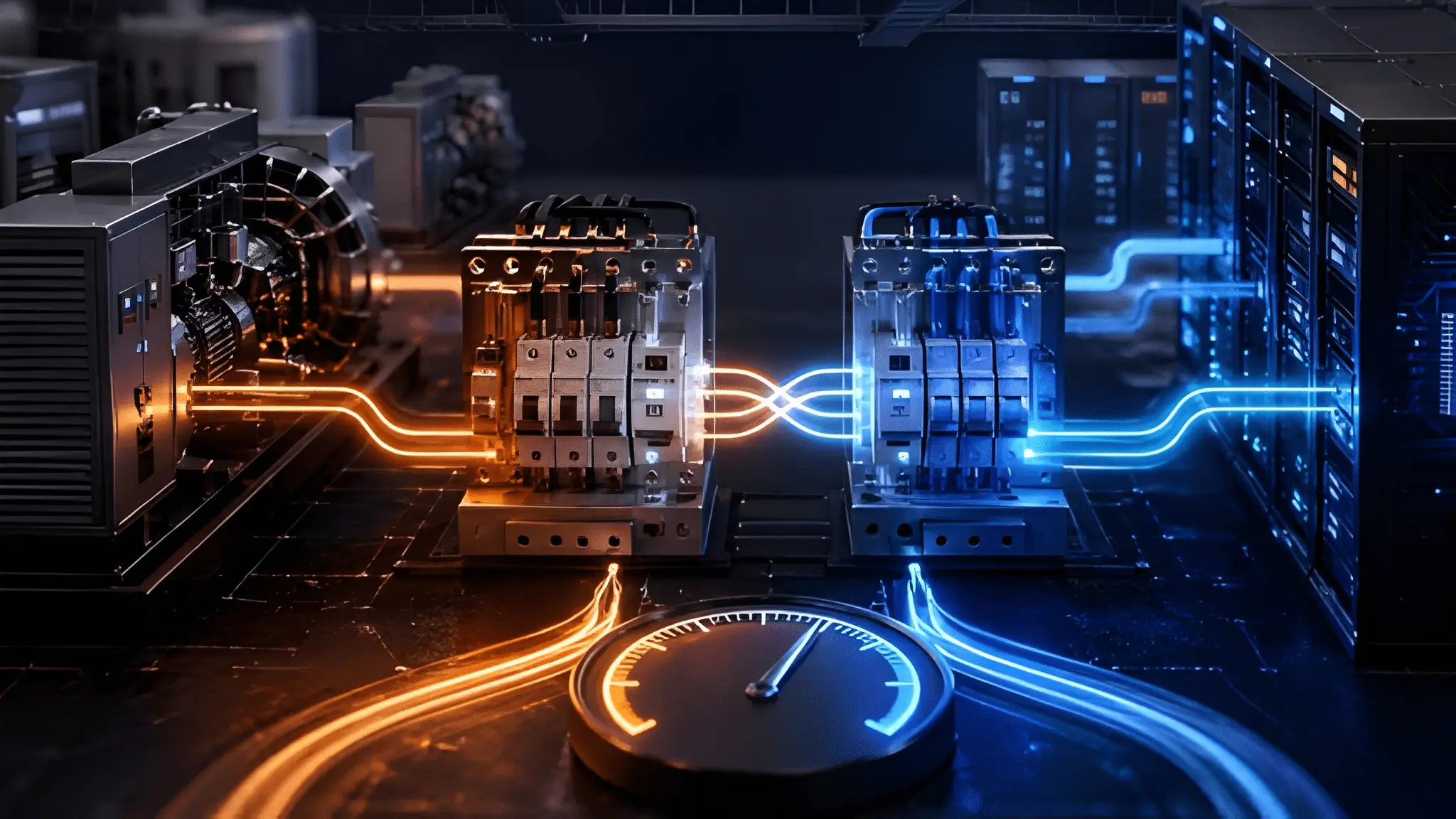

Infrastructure has evolved toward higher density and tighter operational tolerances without equivalent evolution in switching expectations. Legacy assumptions treated short interruptions as acceptable because older equipment tolerated brief instability. Modern compute systems behave differently under the same conditions due to tighter voltage and frequency tolerances. Transition delays that once seemed negligible now propagate into cascading system responses. The challenge lies not in recognizing outages, but in handling the transition between power sources with precision. That distinction frames the difference between Automatic Transfer Switches and Static Transfer Switches.

Power continuity is now a dynamic condition rather than a binary on/off state. Systems must maintain stable waveform characteristics during transitions, not just restore supply after loss. Switching technology determines how gracefully or abruptly that transition occurs under load. Engineers who overlook this dimension often assume that backup power automatically guarantees continuity. Real-world operation shows that this assumption breaks down under transient conditions. Understanding the gap between ATS and STS switching times clarifies where the hidden risks reside.

The 5–10 Second Problem Nobody Designs For

A typical Automatic Transfer Switch initiates transfer only after detecting a sustained loss of utility power. Detection circuits incorporate an intentional delay to prevent nuisance switching during transient disturbances. Once the ATS confirms a genuine outage, it signals the standby generator to start. Generator startup involves cranking the engine, stabilizing fuel delivery and speed, and building up voltage before the set reaches usable output. Each of these steps introduces unavoidable latency, and together they accumulate into several seconds of delay. During this interval, the load remains disconnected from a stable source unless a UPS or other bridging system supports it.

Mechanical switching further extends this delay once the generator stabilizes. The ATS physically disconnects the utility source before connecting the generator supply to prevent backfeed. Contact movement requires time for actuation, separation, and re-engagement under controlled conditions. Arc suppression mechanisms ensure safe switching but also impose speed limits on the process. These physical constraints prevent instantaneous transfer even after the generator becomes ready. The resulting delay reflects both electrical safety requirements and mechanical realities.
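In an open-transition ATS with no bridging source, these stages add up in series. The sketch below makes that arithmetic explicit; the `AtsStage` type and every duration in it are hypothetical illustration values, not vendor data or code-mandated limits.

```python
from dataclasses import dataclass


@dataclass
class AtsStage:
    name: str
    seconds: float  # hypothetical duration of this stage


def total_interruption_s(stages: list[AtsStage]) -> float:
    """Open transition: with no bridging system, the load is unpowered for
    the sum of every stage between utility loss and generator connection."""
    return sum(stage.seconds for stage in stages)


# Illustrative, assumed durations; real values come from the ATS time-delay
# settings and the generator's tested start performance.
stages = [
    AtsStage("outage confirmation delay", 1.0),
    AtsStage("generator crank and start", 4.0),
    AtsStage("voltage/frequency stabilization", 3.0),
    AtsStage("contact transfer (break-before-make)", 0.1),
]

if __name__ == "__main__":
    for stage in stages:
        print(f"{stage.name:<40} {stage.seconds:6.2f} s")
    print(f"{'total load interruption':<40} {total_interruption_s(stages):6.2f} s")
```

Under these assumptions the contact transfer is a small share of the total; generator start and stabilization dominate, which is why faster contacts alone cannot close the gap.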

How exposed the load is during this delay depends on whether an upstream bridging system, such as a UPS, can carry it through the interruption. This matters most in generator-backed, open-transition ATS configurations, where no source is connected to the load while the transfer is in progress. Without a bridging system, equipment experiences a complete loss of power until the transfer completes. Voltage collapses almost immediately after the utility source drops, leading to system shutdown. Restart sequences often take longer than the interruption itself, amplifying operational disruption. Systems designed without accounting for this delay implicitly accept the risk of downtime, yet this assumption rarely appears explicitly in infrastructure planning documents.

Why ATS delays exceed IT tolerance thresholds

Information technology equipment operates within tightly regulated power envelopes. Servers, storage systems, and network devices rely on stable voltage and frequency to maintain internal state. Even brief interruptions can trigger protective shutdown mechanisms to prevent hardware damage. These systems respond not to outage duration alone but to whether the waveform stays continuous. A multi-second interruption far exceeds the ride-through window of nearly all modern digital infrastructure, which is typically on the order of tens of milliseconds. That mismatch exposes systems to avoidable risk during ATS transitions.

Thermal and electrical effects further complicate system behavior during interruptions. Sudden power loss disrupts cooling systems, causing localized temperature fluctuations. Restart sequences place additional electrical stress on components as inrush currents spike. Repeated exposure to such cycles accelerates hardware wear and shortens component lifespan. Engineers often underestimate these cumulative effects when evaluating switching performance, because the focus on backup availability overshadows the importance of transition continuity.

Application-level impacts extend beyond hardware. Transactional systems depend on continuous processing to maintain data integrity, and interruptions can leave operations in incomplete states that require recovery procedures. Distributed systems may interpret the outage as a node failure and trigger failover mechanisms. These responses introduce additional complexity and recovery overhead, and the operational cost of such events often exceeds the perceived impact of the initial outage.
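To put the mismatch in numbers, the sketch below compares an interruption against an assumed ride-through window and expresses durations in electrical cycles. The 20 ms default is an assumption, roughly matching the zero-voltage region commonly cited from the ITIC (CBEMA) curve; individual power supplies vary, and the function names are hypothetical.

```python
ASSUMED_RIDE_THROUGH_S = 0.020  # assumption: ~20 ms zero-voltage ride-through


def to_cycles(seconds: float, freq_hz: float) -> float:
    """Express a duration as a number of electrical cycles."""
    return seconds * freq_hz


def rides_through(interruption_s: float,
                  ride_through_s: float = ASSUMED_RIDE_THROUGH_S) -> bool:
    """True if the load should survive a complete loss of voltage this long."""
    return interruption_s <= ride_through_s


if __name__ == "__main__":
    # Hypothetical gaps: a sub-cycle STS-class transfer and an 8 s ATS transfer.
    for label, gap_s in [("STS-class transfer", 0.004), ("ATS transfer", 8.0)]:
        print(f"{label}: {gap_s * 1000:.0f} ms "
              f"= {to_cycles(gap_s, 60.0):.2f} cycles at 60 Hz, "
              f"rides through: {rides_through(gap_s)}")
```

A half cycle lasts about 8.3 ms at 60 Hz and 10 ms at 50 Hz, so a sub-cycle transfer stays inside such a window, while a multi-second transfer exceeds it by more than two orders of magnitude.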

Inside the 10 Millisecond Lifeline

Static Transfer Switches operate on a fundamentally different principle from mechanical systems. Instead of moving contacts, they use solid-state devices to control power flow between sources. These devices switch within a fraction of an electrical cycle. Detection and transfer occur almost simultaneously, minimizing interruption duration. When both sources remain within acceptable voltage, frequency, and phase alignment limits, the load experiences little to no perceptible disruption during the transition. This capability defines the core advantage of STS technology.

The switching process relies on continuous monitoring of both primary and alternate sources. Control systems evaluate voltage, frequency, and phase alignment in real time. When the primary source deviates beyond acceptable limits, the STS initiates transfer within milliseconds. Semiconductor devices perform the transition without physical movement, eliminating mechanical delays. This approach enables switching within a timeframe that aligns with IT equipment tolerance, producing effective continuity rather than delayed restoration.

Zero-cross detection plays a critical role in minimizing electrical disturbance during switching. The STS coordinates transfer with the zero crossings of the waveform; in thyristor (SCR) based designs, the outgoing devices stop conducting only when their current falls to zero, so the controller must account for that point before firing the incoming source. Switching at this instant reduces transient spikes and minimizes stress on connected equipment. This precision requires the sources to be synchronized before transfer occurs, and control logic verifies that they remain within acceptable phase difference limits. Such coordination enables seamless transitions under dynamic conditions.
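The monitoring logic described above can be sketched as a transfer permissive: transfer only when the primary is out of limits and the alternate is healthy and close enough in phase. This is a minimal sketch; the `SourceReading` type, the limit values, and the single-phase simplification are all assumptions for illustration, not settings from any particular STS.

```python
from dataclasses import dataclass


@dataclass
class SourceReading:
    voltage_pu: float  # RMS voltage in per-unit of nominal
    freq_hz: float
    phase_deg: float   # angle relative to a common reference


# Hypothetical limits, chosen only for illustration.
V_MIN_PU, V_MAX_PU = 0.90, 1.10
F_NOMINAL_HZ, F_TOL_HZ = 60.0, 0.5
MAX_PHASE_DIFF_DEG = 15.0


def phase_difference_deg(a_deg: float, b_deg: float) -> float:
    """Smallest angle between two phasors, in the range [0, 180]."""
    d = abs(a_deg - b_deg) % 360.0
    return min(d, 360.0 - d)


def within_limits(s: SourceReading) -> bool:
    return (V_MIN_PU <= s.voltage_pu <= V_MAX_PU
            and abs(s.freq_hz - F_NOMINAL_HZ) <= F_TOL_HZ)


def transfer_permitted(primary: SourceReading, alternate: SourceReading) -> bool:
    """Allow transfer only if the primary has left its limits and the
    alternate is within limits and inside the phase-difference window.
    A real controller would use the primary's last valid phase measurement,
    since a collapsing source has no meaningful angle."""
    if within_limits(primary):
        return False
    return (within_limits(alternate)
            and phase_difference_deg(primary.phase_deg, alternate.phase_deg)
            <= MAX_PHASE_DIFF_DEG)


if __name__ == "__main__":
    sagging_primary = SourceReading(voltage_pu=0.60, freq_hz=60.0, phase_deg=0.0)
    healthy_alternate = SourceReading(voltage_pu=1.00, freq_hz=60.0, phase_deg=5.0)
    print(transfer_permitted(sagging_primary, healthy_alternate))  # True
```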

Maintaining load continuity under dynamic conditions

Load continuity depends not only on speed but also on waveform quality during transfer. STS systems keep any voltage disturbance during the switching event brief enough to stay within typical equipment ride-through limits. The absence of mechanical delay prevents complete voltage collapse at the load, so equipment continues operating as if no disturbance occurred. This behavior supports applications that cannot tolerate even brief interruptions. The system effectively masks upstream disturbances from downstream loads.

Control algorithms determine the effectiveness of this process under real-world conditions. The STS must differentiate between transient disturbances and sustained faults. Incorrect decisions can lead to unnecessary transfers or failure to transfer when needed. Advanced systems incorporate adaptive logic to improve decision accuracy. Continuous monitoring ensures that the alternate source remains ready for immediate use. This readiness defines the reliability of the switching process.

Environmental factors also influence switching performance. Variations in source quality, load characteristics, and system configuration affect transfer dynamics. STS systems account for these variables through real-time adjustments. Engineers must consider these factors during system design to ensure optimal performance. The goal extends beyond fast switching to consistent switching under all conditions. Achieving this balance requires both robust hardware and precise control logic.
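One common way to separate transient disturbances from sustained faults is a persistence (debounce) filter: declare a fault only after several consecutive out-of-limit samples. The sketch below assumes per-unit RMS voltage samples at a fixed rate; the class name, limits, and sample count are hypothetical, and production controllers may combine this with faster instantaneous-waveform checks, so treat it as an illustration of the trade-off rather than a complete detector.

```python
class PersistenceFaultDetector:
    """Declares a fault only after `confirm_samples` consecutive readings
    fall outside [low_pu, high_pu]; any in-limit reading resets the count."""

    def __init__(self, low_pu: float, high_pu: float, confirm_samples: int):
        if confirm_samples < 1:
            raise ValueError("confirm_samples must be at least 1")
        self.low_pu = low_pu
        self.high_pu = high_pu
        self.confirm_samples = confirm_samples
        self._consecutive = 0

    def update(self, voltage_pu: float) -> bool:
        if self.low_pu <= voltage_pu <= self.high_pu:
            self._consecutive = 0
        else:
            self._consecutive += 1
        return self._consecutive >= self.confirm_samples


if __name__ == "__main__":
    detector = PersistenceFaultDetector(0.90, 1.10, confirm_samples=3)
    # A one-sample dip is ignored; three consecutive low samples trip the detector.
    trace = [1.00, 0.70, 1.00, 0.70, 0.70, 0.70]
    print([detector.update(v) for v in trace])
    # [False, False, False, False, False, True]
```

Raising `confirm_samples` suppresses nuisance transfers but lengthens exposure to a real fault, which is the decision-accuracy trade-off described above.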

What Actually Happens During Those Missing Seconds

When utility power disappears, voltage at the load does not fade gradually under most conditions. Electrical systems experience a rapid collapse as the supply source disconnects or fails to sustain output. Capacitive and inductive elements within the system release stored energy briefly, but that reserve dissipates almost instantly. The waveform loses its structure as amplitude drops and frequency becomes undefined. Equipment connected to this unstable supply enters an unpredictable operational state. This period represents the most critical vulnerability during ATS transfer delays.
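How briefly that stored energy lasts can be estimated from the energy in a capacitor, E = ½CV². If a power supply's bulk capacitor feeds a constant-power load P while its voltage falls from V1 to the lowest voltage the converter can operate from, V2, the hold-up time is t = C(V1² − V2²) / (2P). The sketch below evaluates that formula; the capacitor size, voltages, and load are hypothetical example values.

```python
def holdup_time_s(capacitance_f: float, v_start: float, v_min: float,
                  load_w: float) -> float:
    """Time a capacitor can supply a constant-power load while its voltage
    falls from v_start to v_min: t = C * (v_start**2 - v_min**2) / (2 * P)."""
    if not v_start > v_min >= 0.0:
        raise ValueError("require v_start > v_min >= 0")
    if load_w <= 0.0:
        raise ValueError("load_w must be positive")
    return capacitance_f * (v_start ** 2 - v_min ** 2) / (2.0 * load_w)


if __name__ == "__main__":
    # Hypothetical supply: 470 uF bulk capacitor at 390 V DC, converter
    # drops out below 300 V, 500 W load.
    t = holdup_time_s(470e-6, 390.0, 300.0, 500.0)
    print(f"hold-up time: {t * 1000:.1f} ms")  # hold-up time: 29.2 ms
```

Tens of milliseconds of hold-up can cover a sub-cycle transfer but is only a small fraction of a multi-second ATS gap.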

Frequency instability can compound the problem during this interval, particularly in grid or rotating-source disturbances where upstream generation decouples or slows down before supply is lost completely. Sensitive electronics rely on stable frequency to keep internal clocks and processing cycles synchronized. Deviation from the expected frequency introduces timing errors that can corrupt operations. Systems designed with narrow tolerance thresholds respond quickly by shutting down to prevent damage. The absence of a stable waveform effectively renders the supply unusable even before voltage reaches zero.

Transient conditions dominate the electrical environment during these seconds. Sudden changes in load and supply conditions create spikes, dips, and oscillations that propagate through the distribution network and interact with connected equipment. Protective circuits attempt to respond, but their actions may introduce additional switching events. Stability cannot be guaranteed until a new source takes over, and this chaotic interval defines the operational risk associated with delayed transfer.

System exposure and cascading effects

IT systems respond to power anomalies based on predefined protection thresholds. When voltage drops below acceptable limits, power supplies disengage to avoid malfunction. This response occurs quickly, often before complete loss of supply. Devices shut down in an uncoordinated manner, depending on their individual tolerance levels. The lack of coordination leads to partial system outages rather than a clean shutdown. Recovery becomes more complex as systems restart asynchronously.
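The uncoordinated pattern follows from each device having its own ride-through limit. A minimal sketch, using hypothetical device names and ride-through values, shows how a single interruption can drop some devices while others survive, producing the partial outage described above.

```python
# Hypothetical ride-through limits in milliseconds, for illustration only.
RIDE_THROUGH_MS = {
    "core switch": 10.0,
    "GPU server": 12.0,
    "storage array": 25.0,
    "management node": 40.0,
}


def devices_dropped(interruption_ms: float,
                    ride_through_ms: dict[str, float] = RIDE_THROUGH_MS) -> list[str]:
    """Names of devices whose ride-through is shorter than the interruption."""
    return sorted(name for name, limit in ride_through_ms.items()
                  if interruption_ms > limit)


if __name__ == "__main__":
    for gap_ms in (4.0, 15.0, 8000.0):
        print(f"{gap_ms:>7.0f} ms gap -> dropped: {devices_dropped(gap_ms)}")
```

Each device that drops then restarts on its own schedule, which is why recovery becomes asynchronous.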

Network infrastructure faces additional challenges during such events. Switches and routers rely on continuous operation to maintain connectivity. Interruption causes loss of routing information and session states. Re-establishing these states requires time and coordination across multiple devices. The resulting delay can extend beyond the initial power interruption depending on network design, redundancy mechanisms, and recovery protocols. This chain reaction amplifies the impact of the initial outage.

Storage systems encounter risks related to data integrity during abrupt power loss. Write operations in progress may remain incomplete, leading to corrupted data blocks. File systems attempt recovery upon restart, but not all inconsistencies resolve automatically. Redundant storage configurations mitigate some risks, yet they do not eliminate them entirely. Rebuilding data structures consumes time and system resources. The interruption creates a ripple effect that extends into application performance.

The Physics of Delay: Why ATS Can’t Be Faster

Automatic Transfer Switches rely on electromechanical components to perform switching operations. Contacts must physically separate from one source before engaging another to ensure safe isolation. This movement requires time due to inertia and the need for controlled acceleration and deceleration. Arc suppression mechanisms protect the contacts during separation but introduce additional delay. Engineers design these systems to prioritize safety and durability over speed. These constraints define the minimum achievable switching time for ATS systems.

Contact wear and material limitations further influence switching behavior. Repeated operations gradually degrade contact surfaces. Designers incorporate features to minimize wear, such as controlled contact pressure and arc quenching, but these features require precise timing and cannot operate instantaneously. Faster switching would increase stress on components and reduce lifespan, so the balance between speed and reliability shapes the design of ATS mechanisms.

In closed-transition or other synchronized transfer configurations, synchronization requirements further limit how quickly transfer can occur. The generator must match the utility's voltage, frequency, and phase angle within acceptable limits before the contacts close, because connecting an unsynchronized source risks severe electrical damage. Control systems enforce these conditions before allowing contact closure, and this verification process adds to the overall delay. The system must ensure safe operation under all conditions, not just speed.
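The synchronization wait can be estimated when the generator runs at a slightly different frequency from the utility. The phase angle between them then drifts at 360 × slip degrees per second, so the controller may have to wait for the angle to rotate into its acceptance window. This hypothetical sketch covers only that arithmetic; the function names, window, and slip values are assumptions, and a real sync-check would also verify voltage magnitude and slip limits.

```python
import math


def wrap_deg(angle: float) -> float:
    """Wrap an angle into [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def seconds_until_in_phase(phase_diff_deg: float, slip_hz: float,
                           window_deg: float) -> float:
    """Time until the generator-minus-utility angle enters +/- window_deg.

    phase_diff_deg: current generator angle relative to the utility.
    slip_hz: generator frequency minus utility frequency; a positive slip
    makes the angle increase over time.
    """
    angle = wrap_deg(phase_diff_deg)
    if abs(angle) <= window_deg:
        return 0.0
    if slip_hz == 0.0:
        return math.inf  # the angle never moves, so it never enters the window
    if slip_hz > 0:
        distance = (-window_deg - angle) % 360.0  # rotate upward to -window
    else:
        distance = (angle - window_deg) % 360.0   # rotate downward to +window
    return distance / (360.0 * abs(slip_hz))


if __name__ == "__main__":
    # Hypothetical: 0.1 Hz slip and a +/-10 degree acceptance window.
    for start in (5.0, -30.0, 30.0):
        t = seconds_until_in_phase(start, 0.1, 10.0)
        print(f"start {start:+.0f} deg -> wait {t:.2f} s")
```

With a 0.1 Hz slip, one full slip cycle takes 10 s, so in the worst case the wait alone approaches that figure, which illustrates why synchronized transfer adds delay.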

Control delays and safety interlocks

Control logic within ATS systems includes intentional delays to prevent unnecessary switching. Short disturbances in utility power should not trigger full transfer sequences. Time delays filter out these transient events and reduce wear on equipment. These delays, while beneficial, contribute to overall response time. Designers must balance responsiveness with operational stability. This trade-off results in switching times that extend into seconds.

Safety interlocks prevent simultaneous connection of utility and generator sources. These mechanisms ensure that one source disconnects completely before another connects. The process requires confirmation signals and mechanical positioning. Each step introduces incremental delay into the sequence. The system prioritizes safety over speed to avoid catastrophic faults. This design philosophy defines the operational characteristics of ATS systems.
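The break-before-make interlock can be illustrated with a small state model. The `OpenTransitionAts` class below is hypothetical and omits timing, auxiliary-contact feedback, and mechanical detail; it only encodes the rule that one source may close only after the other is open.

```python
from enum import Enum


class Contact(Enum):
    OPEN = "open"
    CLOSED = "closed"


class OpenTransitionAts:
    """Hypothetical open-transition ATS model with a source interlock."""

    def __init__(self) -> None:
        self.contacts = {"utility": Contact.CLOSED, "generator": Contact.OPEN}
        self.log: list[str] = []

    def _other(self, source: str) -> str:
        return "generator" if source == "utility" else "utility"

    def open(self, source: str) -> None:
        self.contacts[source] = Contact.OPEN
        self.log.append(f"open {source}")

    def close(self, source: str) -> None:
        # Interlock: refuse to parallel the two sources.
        if self.contacts[self._other(source)] is Contact.CLOSED:
            raise RuntimeError(f"interlock: {self._other(source)} is still closed")
        self.contacts[source] = Contact.CLOSED
        self.log.append(f"close {source}")

    def transfer(self, target: str) -> None:
        """Break before make: open the active source, then close the target.
        A real ATS would wait here for position confirmation and any
        programmed neutral-position delay."""
        self.open(self._other(target))
        self.close(target)


if __name__ == "__main__":
    ats = OpenTransitionAts()
    ats.transfer("generator")
    print(ats.log)  # ['open utility', 'close generator']
```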

Environmental conditions also affect mechanical performance. Temperature variations influence material properties and actuator response. Dust and humidity can impact contact movement and electrical insulation. Designers account for these factors by incorporating tolerances and safeguards. These adjustments further limit how quickly the system can operate reliably. The result is a system optimized for robustness rather than rapid switching.

How STS Nearly Eliminates the ‘Power Gap’

Static Transfer Switches eliminate mechanical movement by using semiconductor devices that conduct or block current based on control signals. Switching occurs electronically, without physical displacement of parts, which enables response times within a fraction of an electrical cycle. The load remains connected to a valid source throughout the transition. Under suitable source conditions, this shrinks the traditional power gap associated with ATS systems to a sub-cycle interruption.

The absence of moving parts reduces both delay and maintenance requirements. Semiconductor devices perform consistently over repeated cycles and do not suffer mechanical wear the way contact-based systems do. Control systems manage switching with high precision and repeatability, which enhances reliability under dynamic conditions. The system maintains continuity even during frequent disturbances.

Switching logic determines the optimal moment to transfer between sources. The system evaluates voltage, frequency, and phase alignment continuously, and when conditions meet predefined criteria, the STS executes the transfer immediately. The transfer completes within the ride-through capability of most loads, so equipment continues operating without disruption. The system achieves effective continuity rather than delayed recovery.

Zero-cross coordination and disturbance avoidance

Zero-cross detection ensures that switching occurs at the optimal point in the waveform. At this instant the waveform passes through zero, minimizing electrical stress and reducing the likelihood of transient spikes. The system coordinates both sources to align at this moment, which requires continuous monitoring and rapid decision-making. The result is a smooth transition with minimal disturbance.

Phase synchronization plays a critical role in successful transfer. The STS must confirm that both sources remain within acceptable phase difference limits, because excessive phase mismatch can cause disturbances during switching. Control systems block transfer until conditions meet the required thresholds. This approach balances speed with stability and maintains high-quality power delivery throughout the process.
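A controller that coordinates switching with the waveform first needs to locate zero crossings in sampled data. The sketch below does this with sign changes and linear interpolation; the sampling rate and test waveform are assumptions, and the function works equally on voltage or current samples. In thyristor-based designs the current zero matters most, since that is when the outgoing devices stop conducting.

```python
import math


def zero_crossings(samples: list[float], sample_rate_hz: float) -> list[float]:
    """Estimated times (s) at which a sampled waveform changes sign.

    A crossing is recorded between two consecutive samples of opposite sign,
    located by linear interpolation; a sample that is exactly zero (other
    than the last one) is reported at its own timestamp.
    """
    times = []
    for i in range(1, len(samples)):
        a, b = samples[i - 1], samples[i]
        if a == 0.0:
            times.append((i - 1) / sample_rate_hz)
        elif a * b < 0.0:
            frac = a / (a - b)  # fraction of the sample interval to the crossing
            times.append((i - 1 + frac) / sample_rate_hz)
    return times


if __name__ == "__main__":
    fs = 7680.0      # assumed: 128 samples per cycle at 60 Hz
    n_samples = 256  # two cycles
    wave = [math.sin(2 * math.pi * 60.0 * n / fs + 0.3) for n in range(n_samples)]
    crossings_ms = [round(t * 1000, 3) for t in zero_crossings(wave, fs)]
    print(crossings_ms)  # successive crossings are about 8.333 ms apart
```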

Load characteristics influence how the system responds during transfer, because different types of equipment react differently to minor disturbances. The STS accounts for these variations through adaptive control strategies, and engineers design systems to handle a wide range of load conditions. This flexibility supports consistent performance across applications and a wide range of operating environments.

From Utility Loss to Load Impact: A Timeline Breakdown

The process begins with detection of utility power deviation beyond acceptable limits. Control systems introduce a delay to confirm that the disturbance persists. Once confirmed, the ATS initiates the generator start sequence. The generator accelerates and stabilizes before producing usable output. Only after stabilization does the ATS proceed with mechanical transfer. This sequence defines the multi-step nature of ATS operation.

During this sequence, the load remains without a stable power source unless supported by auxiliary systems. Voltage drops quickly after utility loss, leading to equipment shutdown. The delay between detection and restoration determines the extent of disruption: systems without adequate buffering experience a complete interruption, and recovery begins only after the generator assumes the load. This highlights the inherent delay in ATS-based designs.

Re-transfer to utility power follows a similar sequence in reverse. The ATS verifies that the utility source is stable before initiating the transfer back. In an open-transition design, the contacts move again, introducing a second interruption. This repeats each time the system transitions between sources, and the cumulative effect increases equipment wear and the risk of disruption. The design prioritizes safe operation over seamless continuity.

Sequence of events in STS-based systems

STS systems continuously monitor both primary and alternate sources. Detection of a deviation triggers immediate evaluation of transfer conditions. If the alternate source meets the required parameters, the system initiates transfer at once, and the switching process completes within a fraction of a cycle. The load remains connected to a valid source throughout the event. This sequence avoids the multi-second interruption seen in ATS systems.

The system maintains readiness by continuously verifying that both sources remain synchronized within acceptable limits and that the alternate source remains available. Transfer proceeds only when conditions support a stable transition. This approach prevents unnecessary switching while maintaining responsiveness, balancing speed with reliability through precise control logic. The result is consistent performance under varying conditions.

Re-transfer occurs seamlessly once the primary source stabilizes. The system evaluates conditions and executes the transfer without interruption, which allows frequent transitions without affecting load continuity. Equipment operates as if connected to a single uninterrupted source. The system can effectively mask upstream disturbances when transfer conditions are met and the alternate source maintains stable electrical characteristics. This behavior defines the advantage of STS technology in modern infrastructure.
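Re-transfer logic commonly waits for the preferred source to stay healthy for a set period before returning to it, so a source that is still recovering does not trigger repeated transfers. A minimal sketch, assuming a hypothetical `RetransferTimer` fed with timestamped health flags and an illustrative hold time, looks like this; the same idea applies to the utility-stability check an ATS performs before re-transfer.

```python
from typing import Optional


class RetransferTimer:
    """Permits re-transfer only after the preferred source has been
    continuously healthy for at least `hold_s` seconds."""

    def __init__(self, hold_s: float) -> None:
        self.hold_s = hold_s
        self._healthy_since: Optional[float] = None

    def update(self, t_s: float, preferred_ok: bool) -> bool:
        if not preferred_ok:
            self._healthy_since = None  # any relapse restarts the clock
            return False
        if self._healthy_since is None:
            self._healthy_since = t_s
        return t_s - self._healthy_since >= self.hold_s


if __name__ == "__main__":
    timer = RetransferTimer(hold_s=2.0)
    # The relapse at t = 1.5 s restarts the hold period.
    readings = [(0.0, True), (1.0, True), (1.5, False), (2.0, True), (3.0, True), (4.0, True)]
    print([timer.update(t, ok) for t, ok in readings])
    # [False, False, False, False, False, True]
```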

The Moment UPS Starts Running the Show

A UPS responds immediately when input power falls outside acceptable limits; it does not wait for the confirmation delays of upstream switching devices. In a double-conversion (online) UPS, the inverter is already carrying the load and simply continues, now drawing on stored energy from batteries or other storage media; in standby and line-interactive designs, the UPS switches the load onto its inverter after a brief internal transfer. This response creates a temporary buffer that bridges the gap between utility failure and generator availability, and the load continues to receive stable voltage and frequency during this interval. This bridging function defines the critical role of UPS systems in ATS-based architectures.

The UPS must sustain output for the entire duration of the ATS transfer sequence. Its capacity and autonomy determine how effectively it can cover this period. Designers must align UPS runtime with worst-case generator startup and transfer delays. Any mismatch between these parameters introduces risk to load continuity. The system depends on precise coordination between independent components. This dependency creates a layer of complexity that requires careful engineering.

Battery behavior influences the reliability of this bridging function. Energy storage systems must deliver consistent output under varying load conditions. Temperature, aging, and charge state affect performance during discharge. A degraded battery reduces the effective buffer time available. This reduction may not be apparent until an actual outage occurs. The UPS becomes a single point of dependency during the transfer window.
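Aligning UPS autonomy with the transfer sequence is a budgeting exercise. The sketch below derates a nameplate runtime by multiplicative factors for conditions such as temperature and aging, then compares it with the worst-case bridge time multiplied by a design factor. Treating derating as simple multipliers is a simplification (battery runtime is not strictly linear in capacity), and every name and number here is a hypothetical example.

```python
from dataclasses import dataclass
from math import prod


@dataclass
class BridgeCheck:
    available_s: float
    required_s: float

    @property
    def margin_s(self) -> float:
        return self.available_s - self.required_s

    @property
    def ok(self) -> bool:
        return self.margin_s >= 0.0


def check_ups_bridge(rated_runtime_s: float, derating_factors: list[float],
                     detection_s: float, gen_start_s: float, transfer_s: float,
                     design_factor: float = 2.0) -> BridgeCheck:
    """Compare derated stored-energy runtime with the worst-case time the
    UPS must carry the load before the generator is connected."""
    available = rated_runtime_s * prod(derating_factors)
    required = design_factor * (detection_s + gen_start_s + transfer_s)
    return BridgeCheck(available, required)


if __name__ == "__main__":
    # Hypothetical short-autonomy storage: 30 s nameplate at this load,
    # derated 0.9 for temperature and 0.8 for aging.
    result = check_ups_bridge(30.0, [0.9, 0.8], detection_s=1.0,
                              gen_start_s=8.0, transfer_s=0.1)
    print(f"available {result.available_s:.1f} s, required {result.required_s:.1f} s, "
          f"margin {result.margin_s:+.1f} s, ok={result.ok}")
```

Rerunning the check with a lower aging factor shows how storage that looks adequate on paper can fall short once a battery degrades.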

What happens when the buffer is stressed

When the UPS operates near its capacity limits, system stability becomes more fragile. High load conditions increase discharge rates and reduce available runtime. The inverter must maintain waveform quality despite these stresses. Any deviation can propagate to connected equipment. Systems designed with minimal margin face higher risk during extended outages. The buffer becomes less reliable as operating conditions approach limits.

Extended transfer delays or generator startup issues can exhaust UPS reserves. Once the stored energy depletes, the load loses power abruptly. This scenario represents a failure of the entire backup chain rather than a single component. Recovery requires both restoration of supply and system restart procedures. The impact often exceeds that of a short interruption. This risk underscores the importance of aligning system components with realistic operating conditions.

Coordination between UPS and ATS systems determines overall resilience. Control systems must ensure smooth transition from battery to generator supply. Any mismatch in timing or synchronization can introduce disturbances. Engineers must validate these interactions under real-world scenarios. Testing under load conditions reveals potential weaknesses in the design. Reliable operation depends on integration rather than individual component performance.

Milliseconds vs Seconds in AI Workloads

Modern compute environments operate with tightly coupled processing units and high data throughput. GPUs and accelerators rely on continuous power to maintain processing pipelines, and even brief interruptions can disrupt computational state and force recalculation. Gaps longer than a sub-cycle switching window typically trigger interruption or recovery mechanisms in these systems. The difference between milliseconds and seconds therefore becomes critical to operational continuity, and this sensitivity defines the requirements for power infrastructure supporting advanced workloads.

Inference systems process data streams in real time, requiring uninterrupted execution. Interruptions introduce latency that affects downstream applications and user experience. Systems designed for continuous operation cannot accommodate multi-second delays. Recovery processes may involve reloading models and reinitializing pipelines. These steps extend beyond the duration of the initial interruption. The operational impact compounds quickly in such environments.

Clustered architectures amplify the effect of power disturbances. Nodes depend on synchronized operation to maintain performance and consistency. Loss of a single node can trigger redistribution of workloads. This process introduces additional overhead and potential instability. Systems must maintain continuity across all nodes to function effectively. The infrastructure must support this requirement through rapid switching capabilities.

Why ATS delays create operational risk

ATS-based systems introduce delays that exceed the tolerance of high-performance workloads. During transfer, the UPS must carry the entire load without interruption. Any weakness in this chain exposes the system to risk. The longer the delay, the greater the dependence on stored energy. This dependency increases the likelihood of failure under stress conditions. The architecture becomes sensitive to variations in component performance.

Transient disturbances during transfer can affect sensitive electronics even if power is restored. Voltage fluctuations and waveform distortions may propagate through the system. Equipment may not fail immediately but can experience degraded performance. Repeated exposure to such conditions affects reliability over time. Engineers must consider these effects when designing power systems for advanced workloads. The focus shifts from recovery to prevention of disruption.

Static Transfer Switches address these challenges by minimizing interruption duration. Their rapid response aligns with the tolerance of modern compute systems. The load remains connected to a stable source throughout the transition. This capability reduces reliance on UPS buffering. The system achieves higher resilience through elimination of delay rather than compensation. This approach reflects the evolving requirements of modern infrastructure.

Transient Events: The Damage You Don’t See

Electrical systems experience subtle disturbances during switching operations that often go unnoticed. These include voltage spikes, dips, and harmonic distortions. Even when equipment continues operating, these events can affect internal components. Power supplies must absorb these variations to maintain output stability. Repeated exposure places stress on capacitors and other sensitive elements. Over time, this stress contributes to gradual degradation.

Switching events can introduce high-frequency noise into the power system. This noise propagates through distribution networks and interacts with connected devices. Sensitive electronics may experience interference that affects performance. Filtering mechanisms reduce these effects but cannot fully eliminate them, particularly under rapidly changing or high-frequency switching conditions. The quality of switching determines the extent of disturbance introduced. Systems with faster and more precise switching reduce these impacts.

Harmonic distortion further complicates system behavior during transitions. Non-linear loads interact with distorted waveforms to create additional harmonics. These harmonics increase losses and reduce efficiency in electrical systems. Equipment designed for clean power may experience reduced performance under such conditions. Engineers must account for these effects when evaluating switching technologies. The goal extends beyond continuity to maintaining power quality.
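Harmonic distortion is usually quantified as total harmonic distortion (THD): the RMS sum of the harmonic components divided by the fundamental. A minimal sketch, assuming the harmonic magnitudes have already been measured (for example, from an FFT of the waveform) and using a hypothetical spectrum, is:

```python
import math


def total_harmonic_distortion(harmonic_rms: dict[int, float]) -> float:
    """THD = sqrt(sum of V_h**2 for h >= 2) / V_1.

    harmonic_rms maps harmonic order (1 = fundamental) to RMS magnitude.
    """
    fundamental = harmonic_rms[1]
    if fundamental <= 0.0:
        raise ValueError("fundamental magnitude must be positive")
    distortion = math.sqrt(sum(v * v for h, v in harmonic_rms.items() if h >= 2))
    return distortion / fundamental


if __name__ == "__main__":
    # Hypothetical voltage spectrum in volts RMS.
    spectrum = {1: 230.0, 3: 9.2, 5: 6.9, 7: 4.6}
    print(f"THD = {total_harmonic_distortion(spectrum):.1%}")  # THD = 5.4%
```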

Long-term impact on equipment reliability

Repeated exposure to transient events affects the lifespan of electrical components. Capacitors, transformers, and semiconductors experience cumulative stress. This stress does not always result in immediate failure but reduces reliability over time. Maintenance cycles may not detect these gradual changes. Failures may occur unexpectedly under normal operating conditions. The root cause often traces back to repeated power disturbances.

Thermal effects accompany electrical disturbances during switching events. Fluctuations in power delivery lead to variations in heat generation. Components expand and contract with temperature changes, affecting mechanical integrity. Over time, this process weakens connections and materials. Systems exposed to frequent disturbances show higher failure rates. Engineers must consider these factors when designing resilient infrastructure.

Preventive design strategies focus on minimizing exposure to such disturbances. Faster switching technologies reduce the duration and intensity of transient events. Improved control logic ensures smoother transitions between sources. Engineers must integrate these considerations into system architecture. The objective shifts from reacting to disturbances to avoiding them. This approach enhances long-term reliability and performance.

Control Logic vs Speed: Where Most Designs Fail

Switching hardware alone does not determine how a system behaves during a disturbance. Control logic governs when and how a transfer occurs under varying electrical conditions. Poorly tuned logic can delay switching even when hardware supports faster operation. Systems may wait too long to confirm a fault, extending exposure to unstable power. Overly sensitive logic can trigger unnecessary transfers, introducing avoidable disturbances. The balance between responsiveness and stability defines real-world switching performance.

Detection thresholds play a central role in this decision-making process. Voltage and frequency limits determine when the system recognizes a fault. If thresholds are set too wide, the system tolerates instability longer than it should; if they are set too narrow, the system reacts to minor fluctuations unnecessarily. Engineers must calibrate these parameters based on load sensitivity and source characteristics. This calibration directly affects the effectiveness of both ATS and STS systems.

Timing coordination between multiple systems adds another layer of complexity. UPS, ATS, and STS units each operate with their own control logic, and misalignment between them can create gaps or overlaps during transfer. Such mismatches may introduce disturbances even in otherwise robust designs. Engineers must ensure synchronized operation across all components; reliable performance depends on integration rather than isolated optimization.

Failure modes driven by logic misalignment

Control systems can fail in subtle ways that are difficult to detect during normal operation. Incorrect parameter settings may not reveal themselves until a real disturbance occurs. During such events, delayed or inappropriate responses can amplify system instability. Equipment may experience unnecessary switching cycles or delayed recovery. These behaviors reduce overall system reliability. Engineers must validate control logic under realistic scenarios to uncover such issues.

Communication delays between monitoring systems and switching devices can also affect performance. Data must travel between sensors, controllers, and actuators within tight timeframes. Any delay in this communication chain introduces latency into the decision process. Even small delays can become significant when dealing with millisecond-level switching requirements. Systems must minimize these delays to maintain responsiveness. Efficient communication architecture supports effective control logic.

Adaptive control strategies improve system resilience under dynamic conditions. These systems adjust thresholds and response times based on real-time data. Such adaptability allows better handling of varying load and source conditions. Engineers increasingly rely on these approaches to enhance performance. Static configurations struggle to meet the demands of modern infrastructure. Control logic evolves into a critical component of system design.
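The communication and decision chain can be checked against a timing budget. The sketch below sums hypothetical per-stage latencies and compares the total with a half-cycle budget; the stage names and values are assumptions for illustration, not measurements from any product.

```python
def half_cycle_ms(freq_hz: float) -> float:
    """Duration of half an electrical cycle in milliseconds."""
    return 1000.0 / (2.0 * freq_hz)


def check_latency_budget(stages_ms: dict[str, float],
                         budget_ms: float) -> tuple[float, bool]:
    """Return the total chain latency and whether it fits within the budget."""
    total = sum(stages_ms.values())
    return total, total <= budget_ms


# Hypothetical latencies (ms) from sensing to the incoming source conducting.
chain_ms = {
    "sensing / sampling window": 1.0,
    "detection logic": 2.0,
    "controller-to-gate-driver link": 0.5,
    "outgoing device turn-off": 4.0,
    "incoming device firing": 0.5,
}

if __name__ == "__main__":
    budget = half_cycle_ms(60.0)
    total, ok = check_latency_budget(chain_ms, budget)
    print(f"total {total:.1f} ms vs budget {budget:.2f} ms -> ok={ok}")
    # One extra millisecond of communication delay is enough to break the budget.
    slower = {**chain_ms, "controller-to-gate-driver link": 1.5}
    print(check_latency_budget(slower, budget))  # (9.0, False)
```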

The Cost of a Delayed Transfer

A delayed transfer affects more than the immediate availability of power. Systems must undergo restart procedures that consume time and resources. Applications may require reinitialization before resuming normal operation. This process introduces additional downtime beyond the initial interruption. Users experience delays that extend well past the moment of power restoration. The true impact of a delay lies in its ripple effects across operations.

Data integrity risks emerge during abrupt interruptions. Transactions in progress may not complete successfully. Systems must reconcile inconsistencies during recovery. This process can involve complex validation and correction steps. The effort required depends on the nature of the workload and system architecture. Even small interruptions can create significant recovery overhead.

Human intervention often becomes necessary after such events. Operators must verify system status and address any issues that arise. This involvement adds to the overall recovery time. Automated systems reduce some of this burden but cannot eliminate it entirely. The complexity of modern infrastructure requires careful validation after disruptions. The cost of delay includes both technical and operational factors.

Long-term implications for system reliability

Repeated interruptions affect system reliability over time. Components subjected to frequent power cycles experience increased wear. This wear may not be immediately visible but accumulates gradually. Systems may fail unexpectedly due to this hidden degradation. Maintenance schedules may not account for these effects. Engineers must consider the long-term impact of switching delays.

Software systems also experience cumulative effects from repeated disruptions. Frequent restarts can lead to configuration drift or data inconsistencies. Systems designed for continuous operation may not handle repeated interruptions gracefully. Over time, these issues degrade overall performance. Engineers must design systems to minimize such disruptions. Prevention proves more effective than repeated recovery.

Reputation and service quality depend on consistent system availability. Users expect uninterrupted access to services and applications. Even brief disruptions can affect user experience. Organizations must maintain high standards of reliability to meet expectations. The cost of delayed transfer extends beyond technical metrics. It influences overall system perception and trust.

Why Dual Power Paths Still Need Fast Switching

Dual power paths provide multiple sources of supply to critical loads. Each path operates independently to reduce the risk of complete failure. However, redundancy alone does not guarantee seamless operation. Switching between paths must occur quickly to maintain continuity. Slow transfer introduces gaps even in redundant systems. This limitation highlights the importance of switching speed.

Systems with dual inputs often rely on ATS or STS devices to manage source selection. The effectiveness of redundancy depends on how these devices operate. If switching occurs too slowly, the load experiences interruption despite multiple sources. Engineers must ensure that switching performance matches load requirements. Redundancy must align with operational tolerance thresholds. The design must address both availability and continuity.

Load balancing across multiple paths introduces additional complexity. Systems must distribute power without creating instability. Switching events must not disrupt this balance. Engineers must design control systems to manage these interactions effectively. Poor coordination can negate the benefits of redundancy. The system must operate as an integrated whole.
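Matching switching performance to load requirements comes down to comparing each path's transfer time with how long the load can ride through a gap on its own. The sketch below uses hypothetical values: 8 s for a generator-backed ATS path (inside the range discussed earlier), an assumed 4 ms for a static transfer between live sources, and an assumed 16 ms power-supply hold-up time. None of these figures is a specification.

```python
# Hypothetical transfer times in seconds for two ways of reaching a second source
TRANSFER_TIME_S = {
    "ATS via generator start": 8.0,     # inside the 5 to 10 s range discussed earlier
    "STS between live sources": 0.004,  # assumed sub-cycle solid-state transfer
}

# Hypothetical ride-through (hold-up) time of the load's power supplies
LOAD_RIDE_THROUGH_S = 0.016


def ride_through_margin_s(transfer_time_s: float, ride_through_s: float) -> float:
    """Positive margin means the load can bridge the transfer gap on its own."""
    return ride_through_s - transfer_time_s


def paths_without_interruption(transfer_times: dict[str, float],
                               ride_through_s: float) -> list[str]:
    """Names of the paths whose transfer the load can ride through."""
    return sorted(
        name for name, t in transfer_times.items()
        if ride_through_margin_s(t, ride_through_s) >= 0
    )


for name, t in TRANSFER_TIME_S.items():
    margin_ms = ride_through_margin_s(t, LOAD_RIDE_THROUGH_S) * 1000
    print(f"{name}: margin {margin_ms:.1f} ms")

print(paths_without_interruption(TRANSFER_TIME_S, LOAD_RIDE_THROUGH_S))
# ['STS between live sources']
```

Both paths are redundant in the sense of reaching a second source, but only one of them keeps the load running through the transfer under these assumptions.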

The role of STS in dual-feed architectures

Static Transfer Switches enable rapid switching between dual power sources. Because they switch with solid-state devices rather than moving contacts, they can complete a transfer within a fraction of a power cycle, typically a few milliseconds. That gap is short enough for most electronic loads to ride through, so the load effectively remains connected to a valid source. This capability enhances the effectiveness of redundant architectures. The load experiences continuous operation despite upstream changes. This behavior defines the advantage of STS in dual-feed designs.

STS systems also support load-level redundancy. Each device can select the best available source based on real-time conditions. This approach allows granular control over power distribution. Engineers can design systems that respond dynamically to changing conditions. The result is improved resilience and flexibility. The system adapts to disturbances without affecting load continuity.

Integration with upstream systems ensures coordinated operation. STS devices must work alongside UPS and ATS units within the architecture. Proper integration prevents conflicts and ensures smooth transitions. Engineers must validate these interactions during design and testing. Reliable operation depends on seamless coordination between components. The system achieves resilience through integration rather than redundancy alone.
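Selecting "the best available source based on real-time conditions" can be sketched as a small decision function: prefer the preferred source when it is within tolerance, fall back to the alternate otherwise, and avoid switching between two healthy sources that are far apart in phase. The tolerance windows, the 15 degree phase limit, and the names `SourceReading` and `select_source` are hypothetical; actual STS selection and synchronization logic is vendor- and site-specific.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceReading:
    name: str
    voltage_pu: float    # RMS voltage, per-unit of nominal
    frequency_hz: float
    phase_deg: float     # phase angle against a common reference


# Hypothetical acceptance limits; real STS settings are vendor- and site-specific
VOLTAGE_WINDOW_PU = (0.90, 1.10)
NOMINAL_HZ = 60.0
FREQ_TOL_HZ = 0.5
MAX_PHASE_DIFF_DEG = 15.0


def healthy(s: SourceReading) -> bool:
    lo, hi = VOLTAGE_WINDOW_PU
    return lo <= s.voltage_pu <= hi and abs(s.frequency_hz - NOMINAL_HZ) <= FREQ_TOL_HZ


def phase_diff_deg(a: SourceReading, b: SourceReading) -> float:
    """Smallest angle between the two sources, in degrees (0 to 180)."""
    d = abs(a.phase_deg - b.phase_deg) % 360.0
    return min(d, 360.0 - d)


def select_source(active_name: str, preferred: SourceReading,
                  alternate: SourceReading) -> str:
    """Return the name of the source the load should be connected to next."""
    readings = {preferred.name: preferred, alternate.name: alternate}
    active = readings[active_name]
    if healthy(preferred):
        target = preferred
    elif healthy(alternate):
        target = alternate
    else:
        return active_name  # no acceptable source to move to
    if target.name == active_name:
        return active_name
    # When the active source is still healthy (e.g. a retransfer back to the
    # preferred source), wait until the two sources are close in phase
    if healthy(active) and phase_diff_deg(active, target) > MAX_PHASE_DIFF_DEG:
        return active_name
    return target.name


a_ok = SourceReading("A", 1.00, 60.0, 0.0)
a_lost = SourceReading("A", 0.20, 60.0, 0.0)
b_ok = SourceReading("B", 0.98, 60.0, 5.0)
print(select_source("A", a_lost, b_ok))  # B: preferred source failed
print(select_source("B", a_ok, b_ok))    # A: preferred restored and in phase
```

The phase check only applies when there is no urgency; if the active source has already failed, the sketch transfers immediately, favoring continuity over a clean in-phase transition.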

Designing Around the Gap Instead of Ignoring It

Modern power architectures combine multiple technologies to achieve resilience. UPS systems provide immediate response to power disturbances. ATS units manage long-duration backup through generator integration. STS devices ensure rapid switching between available sources. Each component addresses a specific aspect of power continuity. Together, they create a layered defense against disruption.

Designers must consider how these layers interact under real-world conditions. Each component operates with its own response time and control logic. Misalignment between layers can introduce gaps or conflicts. Engineers must ensure that transitions occur smoothly across all systems. Testing under load conditions reveals potential weaknesses. Integration becomes the key to effective design.

Scalability also plays a role in system design. As infrastructure grows, power systems must adapt without compromising performance. Designers must ensure that switching performance remains consistent at larger scales. This requirement influences component selection and system architecture. Engineers must plan for future expansion during initial design. The system must maintain resilience as it evolves.
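Layer alignment can be checked with a simple timeline: the UPS must carry the load for the whole generator-path sequence (detection delay, generator start, stabilization, mechanical transfer) with some margin. The timings and the 2x safety factor below are hypothetical values chosen for illustration, not recommendations.

```python
def generator_path_gap_s(detection_delay_s: float, generator_start_s: float,
                         generator_stabilize_s: float, ats_transfer_s: float) -> float:
    """Time the load would be unsupported on the ATS/generator path alone."""
    return detection_delay_s + generator_start_s + generator_stabilize_s + ats_transfer_s


def ups_bridges_gap(ups_runtime_s: float, gap_s: float, margin_factor: float = 2.0) -> bool:
    """True if UPS runtime covers the gap with the chosen safety factor."""
    return ups_runtime_s >= margin_factor * gap_s


# Hypothetical timings in seconds, for illustration only
gap = generator_path_gap_s(
    detection_delay_s=1.0,
    generator_start_s=5.0,
    generator_stabilize_s=3.0,
    ats_transfer_s=0.5,
)
print(gap)                          # 9.5
print(ups_bridges_gap(300.0, gap))  # True: 5 minutes of battery covers it easily
print(ups_bridges_gap(15.0, gap))   # False: 15 s is less than twice the gap
```

One reason to keep a margin rather than sizing to the nominal gap is that a generator may need more than one start attempt, which stretches the sequence well beyond its typical duration.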

Engineering strategies for eliminating delay impact

Design strategies focus on minimizing the impact of unavoidable delays. Engineers place UPS systems close to critical loads to reduce exposure. STS devices provide rapid switching at the load level. ATS units handle bulk transfer without affecting sensitive equipment. This approach isolates delays from critical systems. The design prioritizes continuity where it matters most.

Monitoring and diagnostics enhance system reliability. Real-time data allows engineers to identify potential issues before they escalate. Predictive maintenance reduces the risk of component failure. Systems can adjust operation based on current conditions. This adaptability improves overall performance. Engineers must incorporate these capabilities into system design.

Training and operational procedures also influence system effectiveness. Personnel must understand how systems behave during disturbances. Proper response reduces recovery time and prevents further issues. Engineers must document system behavior and response strategies. Regular testing ensures readiness under real conditions. The system achieves resilience through both design and operation.
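As one small example of identifying issues before they escalate, transfer times recorded during regular testing can be compared against their own history. The sketch below applies a simple control-chart style rule (flag a result above the historical mean plus k standard deviations). The test log, the choice of k = 3, and the function name are hypothetical; a real predictive-maintenance program would use richer data and vendor guidance.

```python
from statistics import mean, stdev


def transfer_time_drifted(history_ms: list[float], latest_ms: float, k: float = 3.0) -> bool:
    """Flag a test result that exceeds the historical mean by more than k standard deviations."""
    if len(history_ms) < 2:
        return False  # not enough history to estimate spread
    return latest_ms > mean(history_ms) + k * stdev(history_ms)


# Hypothetical transfer times (ms) logged during periodic STS tests
history = [4.0, 4.1, 3.9, 4.0, 4.0]
print(transfer_time_drifted(history, 4.1))  # False: within normal spread
print(transfer_time_drifted(history, 5.0))  # True: worth investigating
```

A slowly lengthening transfer time can point to degrading components long before a transfer actually fails during a real event.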

Uptime Is Measured in Milliseconds Now

Power continuity now demands precision that aligns with modern system requirements. Seconds-long interruptions generally do not fit within acceptable operational thresholds for most modern digital and real-time compute environments. Systems must maintain stable power through transitions, not just restore it afterward. This shift redefines how engineers approach power system design. The focus moves from backup availability to seamless continuity. The difference between ATS and STS highlights this evolution clearly.
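To put the seconds-versus-milliseconds contrast in electrical terms, the sketch below converts gap durations into AC line cycles at 50 Hz and 60 Hz. The 5 to 10 second ATS range comes from the discussion earlier in this article; the 4 ms STS figure is an assumed typical value, not a specification.

```python
LINE_FREQUENCIES_HZ = (50.0, 60.0)


def duration_in_cycles(duration_s: float, line_hz: float) -> float:
    """Number of AC cycles that elapse during a gap of the given duration."""
    return duration_s * line_hz


for hz in LINE_FREQUENCIES_HZ:
    ats_low = duration_in_cycles(5.0, hz)
    ats_high = duration_in_cycles(10.0, hz)
    sts = duration_in_cycles(0.004, hz)  # assumed 4 ms static transfer
    print(f"{hz:.0f} Hz: ATS gap {ats_low:.0f}-{ats_high:.0f} cycles, STS gap {sts:.2f} cycles")

# 50 Hz: ATS gap 250-500 cycles, STS gap 0.20 cycles
# 60 Hz: ATS gap 300-600 cycles, STS gap 0.24 cycles
```

An ATS gap spans hundreds of cycles, while a static transfer completes in well under one cycle, which is why the two technologies sit on opposite sides of most loads' ride-through capability.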

Technological advancements continue to push the boundaries of system performance. Faster processing and tighter integration increase sensitivity to power disturbances. Infrastructure must evolve to support these demands. Engineers must adopt technologies that match system requirements. Static switching represents a step toward meeting these expectations. The industry continues to refine approaches to achieve higher resilience.

Design philosophy must adapt to these changing conditions. Systems must eliminate gaps rather than compensate for them. Engineers must consider switching speed as a fundamental parameter. This approach leads to more robust and reliable infrastructure. The goal becomes uninterrupted operation under all conditions. The definition of uptime evolves accordingly.

The future of switching and resilience

Emerging technologies promise further improvements in switching performance. Advances in semiconductor devices enable faster and more efficient operation. Control systems continue to evolve with improved intelligence and adaptability. These developments enhance the ability to maintain continuity under complex conditions. Engineers must stay informed about these advancements. The future of power systems depends on continuous innovation.

Integration across systems will define the next stage of resilience. Power, cooling, and compute systems must operate as a cohesive unit. Coordination between these elements ensures consistent performance. Engineers must design systems with this integration in mind. The result is infrastructure that responds dynamically to changing conditions. This approach supports the demands of modern workloads.

Uptime now reflects the ability to avoid disruption entirely. Systems must operate without perceptible interruption under all conditions. Millisecond-level switching defines this capability. Engineers must prioritize technologies that achieve this level of performance. The gap between seconds and milliseconds determines success. Modern infrastructure depends on closing that gap completely.