The supply chain problem behind the GPU shortage runs deeper than the quarterly earnings narrative suggests. Every quarter, TSMC reports record revenue. Every quarter, analysts celebrate the numbers as proof that the AI infrastructure buildout is real and accelerating. And every quarter, the same conclusion follows: more demand, more chips, more capacity coming. The narrative is clean, confident, and almost entirely wrong about where the actual constraint lives.

TSMC’s Q1 2026 results were genuinely impressive. A 58 percent profit surge, revenue of $35.9 billion, full-year guidance raised above 30 percent growth. The numbers confirm that AI chip demand is not slowing. What they do not confirm, and what almost nobody in the mainstream coverage is saying clearly, is that TSMC’s wafer output is not the binding constraint on how many AI GPUs the world can actually deploy. That constraint sits both upstream and downstream of the fab, and it is largely invisible to anyone not deep in the semiconductor supply chain.

The Real Bottleneck Is Not Silicon. It Is What Goes Around It.

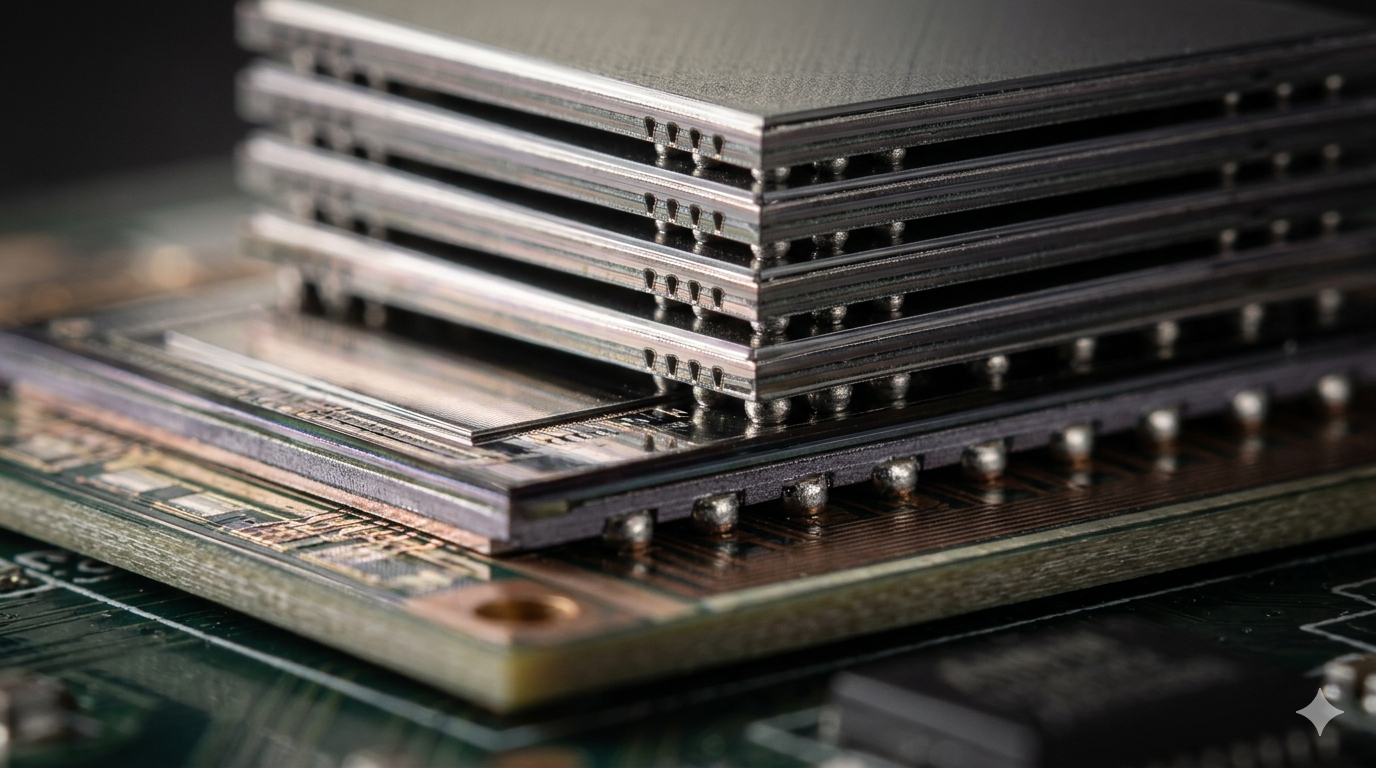

Building a modern AI GPU requires more than a finished wafer. It requires High Bandwidth Memory, the stacked DRAM architecture that gives GPU dies the memory bandwidth that AI training and inference workloads demand. Three companies manufacture HBM: SK Hynix, Samsung, and Micron. SK Hynix alone supplies the majority of HBM for Nvidia’s highest-demand products. That concentration means the effective capacity ceiling for those products is set less by TSMC’s fab output than by SK Hynix’s HBM production lines.

Beyond HBM, every high-end AI GPU requires advanced packaging. CoWoS, TSMC’s Chip-on-Wafer-on-Substrate process, integrates the GPU die and HBM stacks into a single package with the interconnect density that modern AI chip architectures require. CoWoS capacity sits entirely separate from wafer capacity, constrained by specialised substrate materials, precision bonding equipment, and yield management at tolerances that standard packaging cannot achieve. TSMC has been aggressively expanding CoWoS capacity, but the expansion timeline runs in years, not quarters. Consequently, every announcement of record GPU demand is simultaneously an announcement of record pressure on a packaging process that has a fixed expansion rate.

TSMC’s move of 3nm production to Japan is partly a geographic diversification story, but it is also a story about the limits of concentrating every critical process at a single facility. The real question is not whether TSMC can produce enough leading-edge wafers. It is whether the entire supply chain around those wafers, including the HBM suppliers, the CoWoS capacity, the substrate manufacturers, and the advanced assembly and test operations, can scale at the same rate. The honest answer is that they cannot, and the gap between wafer output growth and system-level GPU availability reflects that directly.
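The scaling argument above reduces to a simple bottleneck calculation: finished GPU output is capped by whichever stage supports the fewest units. The Python sketch below illustrates that logic only. Every figure in it is a made-up placeholder, not real capacity data for TSMC, SK Hynix, or any other supplier, and the `Stage` class and function names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Stage:
    """One supply chain stage, measured in its own output units."""
    name: str
    units_per_month: float  # hypothetical monthly output of this stage
    units_per_gpu: float    # units of that output consumed by one finished GPU

    def gpus_supported(self) -> float:
        """Finished GPUs per month this stage could support on its own."""
        return self.units_per_month / self.units_per_gpu


def binding_stage(stages):
    """Return the stage that supports the fewest finished GPUs."""
    return min(stages, key=lambda s: s.gpus_supported())


def deliverable_gpus(stages):
    """System-level output is capped by the binding stage."""
    return binding_stage(stages).gpus_supported()


# Placeholder numbers chosen only to illustrate the logic (assumptions).
stages = [
    Stage("GPU dies from wafers", 600_000, 1),
    Stage("HBM stacks", 3_200_000, 8),       # assumes 8 stacks per GPU
    Stage("CoWoS packaging slots", 350_000, 1),
]

baseline = deliverable_gpus(stages)

# Raise die output by 30 percent; memory and packaging stay unchanged.
more_wafers = [replace(stages[0], units_per_month=780_000)] + stages[1:]
after_wafer_ramp = deliverable_gpus(more_wafers)

print(binding_stage(stages).name, baseline, after_wafer_ramp)
```

Under these assumed inputs, adding wafer capacity leaves deliverable output unchanged because packaging remains the binding stage. The real figures would change which stage binds, but not the shape of the calculation.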

Why the GPU Shortage Supply Chain Keeps Getting Misdiagnosed

The GPU shortage narrative persists because wafer starts are a number that gets reported. Analyst models are built around it. Investor presentations reference it. TSMC’s earnings calls address it directly. Wafer capacity is legible and discussable in ways that CoWoS yield rates and HBM allocation agreements are not.

The less legible constraints, however, do not fit neatly into earnings call talking points. SK Hynix does not break out HBM allocation by customer in its quarterly results. TSMC does not report CoWoS capacity utilisation as a standalone metric. The substrate suppliers, many of which are Taiwanese and Japanese companies operating well below the radar of Western financial media, do not hold investor days where they announce their expansion constraints. The result is a public narrative about AI chip supply that focuses on the most visible number while the actual constraints operate in the spaces between the headlines.

When the leading chipmaker commits, the AI demand debate ends. That framing, however, assumes the chipmaker’s output is the relevant commitment. The commitment that actually matters is the entire supply chain’s collective ability to deliver finished, packaged, tested AI computing systems at the rate the market demands. TSMC’s capex commitment of up to $56 billion in 2026 is enormous and necessary. It is not sufficient if the rest of the supply chain cannot keep pace.

What This Means for Anyone Planning AI Infrastructure

Practically speaking, the GPU supply constraint is not going to resolve on the timeline that TSMC’s production ramp suggests. Operators modelling infrastructure deployment plans around the assumption that hardware availability will normalise in 2026 or early 2027 are working from a supply chain model that does not account for the packaging and memory constraints limiting actual system deliveries.

These constraints affect investment decisions in ways the surface narrative obscures. A neocloud that has secured GPU allocation from Nvidia has not secured a delivery timeline. It has secured a position in a queue that moves at the rate of the slowest constraint in the supply chain. Similarly, an enterprise planning an AI training cluster for deployment in the second half of 2026 needs to model against CoWoS capacity expansion rates, not TSMC wafer starts, to get an honest picture of when its hardware will actually arrive.
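One rough way to see why the choice of model matters is to estimate how long a queue position takes to clear when throughput follows the slowest stage rather than wafer output. The Python sketch below does that under loud assumptions: the starting capacities, growth rates, and queue position are invented for illustration, and `GrowingStage` and `months_until_delivery` are hypothetical names, not part of any real planning tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GrowingStage:
    """A stage whose capacity compounds month over month."""
    name: str
    gpus_per_month: float  # hypothetical starting capacity, in finished-GPU equivalents
    monthly_growth: float  # hypothetical compound growth rate per month

    def capacity_in(self, month: int) -> float:
        return self.gpus_per_month * (1 + self.monthly_growth) ** month


def months_until_delivery(queue_position, stages, max_months=120):
    """Return the month in which cumulative deliveries reach queue_position.

    Each month's deliveries equal the capacity of the slowest stage.
    Returns None if the position is not reached within max_months.
    """
    delivered = 0.0
    for month in range(max_months):
        delivered += min(s.capacity_in(month) for s in stages)
        if delivered >= queue_position:
            return month + 1
    return None


# Placeholder inputs (assumptions, not real supplier data).
wafers = GrowingStage("wafer output", 100_000, 0.03)
packaging = GrowingStage("CoWoS packaging", 60_000, 0.01)
queue_position = 900_000  # units ahead of and including this order

wafer_only_estimate = months_until_delivery(queue_position, [wafers])
full_chain_estimate = months_until_delivery(queue_position, [wafers, packaging])

print("wafer-only model:", wafer_only_estimate, "months")
print("slowest-stage model:", full_chain_estimate, "months")
```

With these assumed inputs the slowest-stage estimate comes out later than the wafer-only one, which is the gap a plan built on wafer starts would miss. An actual deployment model would need real allocation and capacity data in place of the placeholders.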

Why the Operators Who Know This Are Already Acting Differently

The operators who understand these constraints are quietly extending their lead. They locked in hardware commitments earlier, built flexibility into their deployment plans, and are not relying on the public narrative about supply normalisation to validate their timelines. Meanwhile, operators still planning against wafer production numbers are going to find that the gap between announced capacity and delivered systems is larger and more persistent than the headline figures suggest.

TSMC’s results deserve the attention they receive. A 58 percent profit surge at the company that manufactures the chips powering the AI economy is genuinely significant. But the conclusion that follows from those results is not that the GPU supply problem is resolving. It is that demand is so strong that even a supply chain operating at its packaging and memory limits is generating record revenue. The shortage is not ending. It is just becoming more profitable for the people at the top of the stack.