Engineers exploring physics-inspired computing for AI study nanoscale materials whose internal states are reshaped by electrical currents in ways that resemble learning. Unlike conventional processors that follow stepwise instructions, these experimental systems allow physical dynamics to participate directly in solving structured problems. Researchers are not attempting to eliminate digital logic, but they are questioning whether certain computational tasks can unfold more naturally through material behavior. This inquiry emerges from practical constraints as much as intellectual curiosity, particularly as artificial intelligence models demand greater energy efficiency and memory bandwidth. As model complexity grows, the physical substrate itself becomes part of the computational conversation rather than merely a passive executor of code. The result is a growing field of exploration where computation extends beyond abstract symbols and into the measurable evolution of physical systems.

From Binary Certainty to Physical Participation

For decades, artificial intelligence has relied on digital circuits that represent information as binary states, zero and one. That abstraction provided reliability, programmability, and scalability, which allowed neural networks to scale across powerful accelerators. However, as model sizes increased, engineers confronted bottlenecks related to data movement, energy dissipation, and thermal density. These pressures encouraged researchers to revisit an older question: must every calculation pass through strictly digital logic gates? Instead of treating physics as a limitation to be controlled, new research treats it as a resource that can assist with optimization and inference. Importantly, this direction complements rather than replaces digital systems, because large-scale orchestration, training pipelines, and software ecosystems still depend on deterministic computation.

Thermodynamic Systems as Computational Substrates

Thermodynamic computing explores whether energy minimization processes can approximate solutions to structured mathematical problems. In controlled laboratory settings, certain physical systems naturally evolve toward low-energy configurations that correspond to optimized states. Researchers encode computational variables into properties such as spin alignment, electrical resistance, or phase configuration. As the system relaxes toward equilibrium, it effectively performs a form of parallel exploration across possible solutions. Current demonstrations remain at proof-of-concept scale, often addressing sampling tasks or constrained optimization problems rather than full deep learning workloads. Therefore, thermodynamic approaches represent an active research frontier whose practical deployment depends on further advances in fabrication stability and integration with digital control layers.
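
The relaxation described above can be imitated in software to make the idea concrete. The sketch below is a minimal illustration, not a model of any particular device: it assumes a tiny Ising-style system in which each variable is a spin of +1 or -1, and it uses Metropolis-style simulated annealing as a digital stand-in for physical cooling. The names `energy`, `anneal`, and `ring`, the coupling values, and the cooling schedule are all hypothetical choices made for this example.

```python
import math
import random


def energy(spins, couplings):
    """Ising energy E = -sum over pairs of J_ab * s_a * s_b, spins in {-1, +1}."""
    return -sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())


def anneal(n, couplings, steps=5000, t_start=2.0, t_end=0.05, seed=0):
    """Metropolis annealing: a software stand-in for physical relaxation."""
    rng = random.Random(seed)
    spins = [rng.choice((-1, 1)) for _ in range(n)]
    current = energy(spins, couplings)
    best, best_spins = current, spins[:]
    for step in range(steps):
        # Geometric cooling schedule from t_start down to t_end.
        temp = t_start * (t_end / t_start) ** (step / max(steps - 1, 1))
        i = rng.randrange(n)
        spins[i] = -spins[i]  # propose a single spin flip
        proposed = energy(spins, couplings)
        delta = proposed - current
        # Always accept downhill moves; accept uphill moves with
        # probability exp(-delta / temp), which shrinks as temp falls.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current = proposed
            if current < best:
                best, best_spins = current, spins[:]
        else:
            spins[i] = -spins[i]  # reject: undo the flip
    return best_spins, best


# Hypothetical 4-spin ring with antiferromagnetic couplings (J = -1):
# neighbouring spins lower the energy by pointing in opposite directions.
ring = {(0, 1): -1.0, (1, 2): -1.0, (2, 3): -1.0, (0, 3): -1.0}
state, e = anneal(4, ring)
print(state, e)
```

In this toy problem the lowest-energy configurations are the two alternating patterns, so the "answer" is read off from the state the system settles into rather than computed step by step. A physical implementation would replace the random-flip loop with the material's own dynamics, which is where the potential parallelism comes from.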

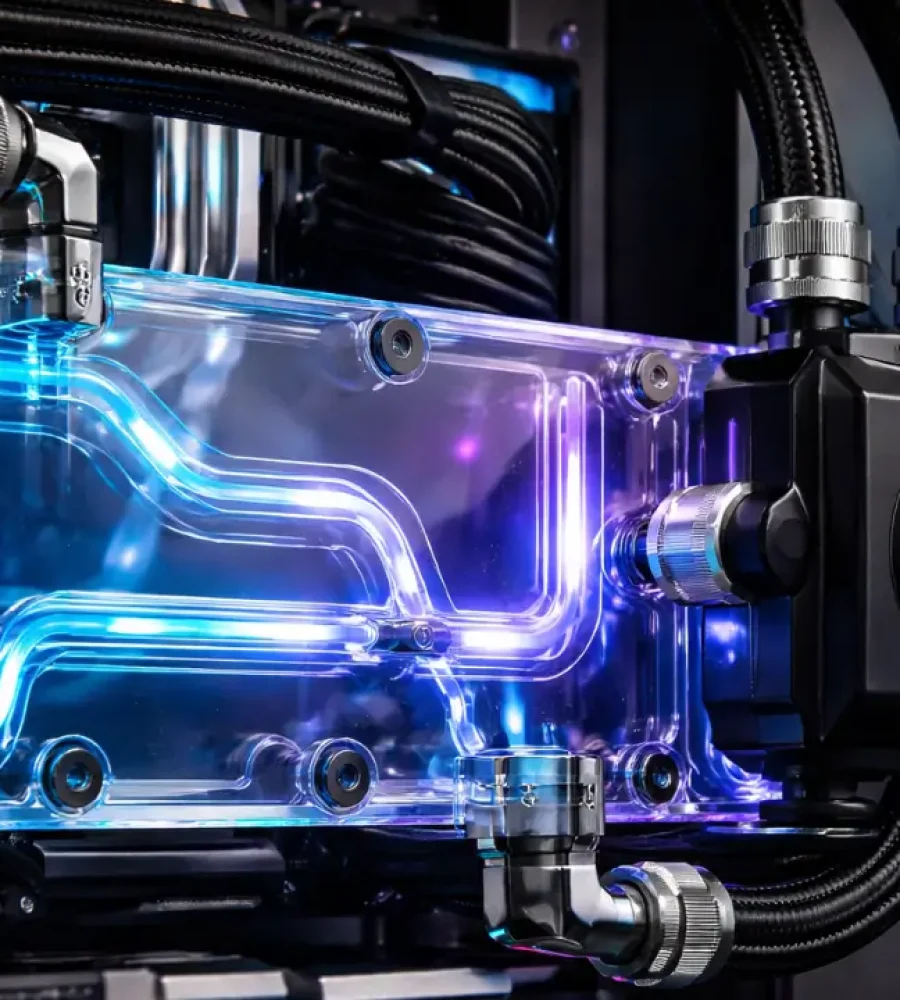

Analog computing dominated early scientific instrumentation before digital systems became standard because of their noise resilience and reproducibility. Today, advances in nanofabrication and materials engineering enable a more controlled revival of analog principles. Devices such as memristive elements adjust conductivity based on applied voltage histories, creating hardware-level mechanisms that resemble adaptive weighting. Optical platforms perform matrix operations by exploiting interference patterns that occur naturally when light propagates through structured media. These systems often execute specific mathematical operations with lower data movement overhead than purely digital pipelines. Nonetheless, they operate most effectively as accelerators coordinated by conventional processors rather than as standalone replacements for general-purpose computation.
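
To see why a memristive array can act as a matrix engine, it helps to write out the idealized circuit arithmetic. The sketch below assumes a noise-free crossbar in which each cell has a conductance `G[i][j]`, a voltage `V[i]` is applied to each row, and each column wire sums the resulting currents (Ohm's law per cell, Kirchhoff's current law per column). The function names, the linear update rule, and the numeric values are illustrative assumptions, not a description of a specific device.

```python
def crossbar_mvm(conductances, voltages):
    """Column currents of an idealized crossbar: I[j] = sum_i G[i][j] * V[i]."""
    rows, cols = len(conductances), len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("need one input voltage per crossbar row")
    return [
        sum(conductances[i][j] * voltages[i] for i in range(rows))
        for j in range(cols)
    ]


def update_conductance(g, voltage, rate=0.1, g_min=0.0, g_max=1.0):
    """Toy history-dependent cell: each pulse nudges g, clipped to the device range."""
    return min(g_max, max(g_min, g + rate * voltage))


# Hypothetical 2x2 array: conductances play the role of weights.
G = [[1.0, 2.0],
     [3.0, 4.0]]
V = [0.5, 1.0]
currents = crossbar_mvm(G, V)
print(currents)  # [3.5, 5.0]

# Repeated positive pulses raise the conductance until it saturates.
g = 0.5
for _ in range(3):
    g = update_conductance(g, voltage=1.0)
print(g)
```

The multiply-accumulate happens in the wiring itself, so the weights never leave the array. That is the sense in which such hardware reduces data movement, while the digital host still has to supply inputs and read out the column currents.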

Material Dynamics and Learning Behavior

Embedding learning mechanisms into device physics alters how engineers conceptualize intelligence within machines. Instead of updating numerical weights solely in memory arrays managed by software, some prototypes allow conductance states or phase transitions to encode adaptive behavior. This design reduces the need for constant shuttling of information between separated memory and processing units. At the same time, researchers carefully evaluate variability, drift, and reproducibility, because material systems exhibit behaviors that differ from idealized mathematical abstractions. Ensuring stability across thousands or millions of such elements remains a significant engineering challenge. Consequently, intelligence emerges from a coordinated interaction between physical substrates and algorithmic frameworks rather than from hardware alone.
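
The concern about variability and drift can be made tangible with a small numerical experiment. The sketch below is a rough illustration under stated assumptions: device-to-device variability is modelled as multiplicative Gaussian noise on each stored weight, and drift is modelled as a power-law decay of conductance over time, a form often used for phase-change cells. The parameters `sigma` and `nu`, the weights, and the inputs are hypothetical values chosen only to show the effect.

```python
import random


def perturb(weights, sigma, rng):
    """Apply multiplicative Gaussian variability to each programmed weight."""
    return [w * (1.0 + rng.gauss(0.0, sigma)) for w in weights]


def drift(weights, t, t0=1.0, nu=0.05):
    """Power-law conductance decay: G(t) = G0 * (t / t0) ** (-nu), for t >= t0."""
    factor = (t / t0) ** (-nu)
    return [w * factor for w in weights]


def dot(a, b):
    """Plain digital dot product, used as the reference result."""
    return sum(x * y for x, y in zip(a, b))


rng = random.Random(42)
ideal = [0.2, 0.5, 0.8, 0.3]    # weights the software intends to store
inputs = [1.0, 0.5, 0.25, 1.0]

reference = dot(ideal, inputs)
noisy = perturb(ideal, sigma=0.05, rng=rng)  # just after programming
aged = drift(noisy, t=1000.0)                # same cells, much later

for label, stored in (("programmed", noisy), ("aged", aged)):
    result = dot(stored, inputs)
    rel_err = abs(result - reference) / reference
    print(f"{label}: result={result:.4f} relative error={rel_err:.2%}")
```

Under these assumptions the drift term shrinks every weight by the same factor, so in principle a digital controller could compensate with a single rescaling, whereas the random per-cell variability cannot be undone that simply. Real devices may not drift uniformly, which is part of why stability across large arrays remains difficult.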

Speculation about replacing digital processors often oversimplifies the technical landscape. In practice, hybrid architectures appear far more plausible, where physical computing elements accelerate specific operations under digital supervision. Conventional CPUs and GPUs continue to manage training loops, model updates, and distributed synchronization. Physics-inspired components may assist with energy-intensive primitives such as matrix multiplication, probabilistic sampling, or optimization routines. This division of labor aligns with historical patterns in computing, where specialized accelerators coexist alongside general-purpose processors. As a result, innovation focuses on integration strategies rather than on wholesale displacement of existing infrastructure.

Infrastructure Implications Without Overstatement

The energy footprint of artificial intelligence has become a growing concern for operators and policymakers. Physics-based accelerators may eventually reduce power density for certain workloads if engineers achieve reliable scaling. However, most current prototypes operate in research environments rather than production data centers. Commercial viability requires reproducible fabrication, standardized programming interfaces, and compatibility with established semiconductor supply chains. Infrastructure operators therefore continue optimizing digital accelerators while monitoring developments in emerging hardware paradigms. Any measurable impact on large-scale energy consumption will depend on successful translation from laboratory experiments to manufacturable systems.

The philosophical dimension of this shift deserves careful framing grounded in evidence rather than exaggeration. When materials participate directly in computation, intelligence gains a tangible physical expression, yet it remains architected by human design. Algorithms still define objectives, loss functions, and validation metrics that guide system behavior. Physical processes may assist with executing certain computations more efficiently, but they do not independently determine goals or contextual meaning. Intelligence therefore remains a layered construct emerging from both software structure and material execution. This perspective expands the definition of machine intelligence without dissolving it into purely physical phenomena.

Scaling physics-based systems introduces challenges that extend beyond theoretical feasibility. Fabrication variability, long-term drift, and environmental sensitivity complicate reproducibility across devices. Engineers must develop abstraction layers that allow programmers to harness physical accelerators without managing low-level material behavior. Benchmarking also requires refinement because equilibrium-based computation does not always map neatly onto conventional performance metrics. Interdisciplinary collaboration between physicists, computer scientists, and semiconductor engineers remains essential for progress. Each incremental breakthrough clarifies not only what these systems can achieve, but also where their practical boundaries lie.

A Narrative That Moves Beyond Software Alone

Stories about artificial intelligence often emphasize algorithms, architectures, and training data. Yet beneath those abstractions lies a physical substrate that increasingly shapes computational possibilities. As researchers integrate thermodynamic relaxation, analog adaptation, and optical dynamics into hardware, they broaden the conceptual boundaries of computation. Digital logic remains central to large-scale deployment, but it now shares the stage with controlled material processes. The evolution reflects expansion rather than replacement, adding new layers to how machines perform reasoning tasks. In that sense, intelligence in machines begins to resemble a collaboration between mathematics and matter, where nature itself contributes to how problems are solved.