Why Rack-Level Cooling Is No Longer Enough for AI Processors

Modern artificial intelligence accelerators operate at power densities that challenge the limits of conventional thermal management architectures in data centre environments. Advanced GPUs, tensor processors, and custom AI ASICs integrate billions of transistors within tightly packed silicon footprints, producing localized heat flux that exceeds the capabilities of traditional airflow systems. Thermal design power for leading accelerators already reaches several hundred watts per device, and next-generation systems continue to push that figure higher. Engineering teams now explore deeper integration of cooling infrastructure to address the growing mismatch between processor heat density and facility-level heat removal mechanisms. This thermal challenge has intensified research into silicon-embedded microchannel cooling as a potential architecture for managing extreme processor heat densities.

High-performance computing clusters built for machine learning training introduce another layer of complexity in thermal management design. Dense GPU racks can exceed 80 kilowatts of power consumption per rack, which significantly increases localized thermal gradients within server enclosures. Airflow distribution becomes uneven under such conditions because airflow resistance increases through tightly packed accelerator boards and high-speed networking equipment. Cold-plate liquid cooling improves heat transfer efficiency compared to air, yet thermal resistance remains constrained by the interface between the silicon die and the cooling plate. Semiconductor scaling trends also increase the power dissipated per square millimeter of silicon, particularly in advanced nodes where transistor density continues to rise. Conventional system-level cooling strategies therefore encounter diminishing returns as chip architectures evolve toward higher integration and greater computational throughput. Designers increasingly recognize that removing heat at the source offers a more scalable pathway for sustaining performance growth in AI computing infrastructure.
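The interface constraint on cold plates can be made concrete with a series thermal resistance model. The sketch below is a minimal illustration under assumed numbers: the per-layer resistances, the 700 W device power, and the function name `junction_temperature` are hypothetical and do not describe any specific product.

```python
def junction_temperature(power_w, coolant_temp_c, resistances_k_per_w):
    """Steady-state junction temperature for heat flowing through layers in series."""
    total_resistance = sum(resistances_k_per_w.values())
    return coolant_temp_c + power_w * total_resistance


# Hypothetical per-layer thermal resistances in K/W (assumed for illustration only).
cold_plate_path = {
    "die_conduction": 0.010,
    "thermal_interface": 0.020,
    "cold_plate_convection": 0.030,
}

power_w = 700.0          # assumed accelerator power
coolant_temp_c = 30.0    # assumed coolant inlet temperature

t_j = junction_temperature(power_w, coolant_temp_c, cold_plate_path)
interface_share = cold_plate_path["thermal_interface"] / sum(cold_plate_path.values())

print(f"Junction temperature: {t_j:.1f} C")
print(f"Share of temperature rise from the interface layer: {interface_share:.0%}")
```

In this model the interface layer sets a floor on the temperature rise that no increase in coolant flow can remove, which is the motivation for moving the coolant closer to the die.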

The architecture of modern AI processors amplifies thermal challenges due to the introduction of heterogeneous compute structures. High-bandwidth memory stacks sit adjacent to compute dies, and advanced packaging technologies connect multiple chiplets within a single processor module. Heat distribution across these complex packages becomes highly uneven because compute blocks generate more heat than memory or I/O circuitry. Thermal hotspots can therefore emerge within extremely small areas of silicon, creating localized temperature spikes that degrade performance and reliability. Traditional heat spreaders distribute heat across a larger surface area before liquid or air removes it from the package. Surface-level cooling strategies cannot effectively manage extreme localized heat generation inside multilayer semiconductor packages. Researchers increasingly investigate chip-integrated cooling approaches that bring coolant directly to the regions where thermal loads originate.

Another challenge arises from the growing adoption of vertical integration in semiconductor packaging. Three-dimensional chip stacking allows engineers to increase computational density without expanding the physical footprint of the processor package. Vertical stacking shortens electrical interconnects and improves performance efficiency for workloads such as neural network training and inference. Heat removal becomes significantly more complex because stacked layers create additional thermal resistance between the heat-generating components and the external cooling system. Thermal accumulation within stacked layers can reduce clock speeds and introduce reliability risks such as electromigration or material degradation. Cooling technologies designed around external surfaces struggle to maintain uniform temperatures across these multilayer architectures. Thermal engineers therefore seek solutions that integrate fluid flow pathways directly into the semiconductor structure itself.

Power delivery infrastructure inside AI servers also contributes to the growing heat management challenge. Voltage regulation modules, high-speed interconnects, and memory subsystems produce additional heat alongside the primary compute accelerators. Rack-level cooling must therefore remove heat generated by multiple subsystems operating simultaneously under heavy workloads. Increasing coolant flow rates at the rack or facility level raises the energy consumed by pumps and cooling infrastructure. Energy efficiency considerations encourage the development of cooling systems that minimize thermal resistance along the heat removal path. Direct contact between coolant and heat sources offers the potential to improve heat transfer coefficients significantly. Micro-scale cooling channels integrated within silicon represent one approach that aims to shorten the thermal path between the transistor and the cooling medium.
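The pumping cost of higher flow rates follows from a simple energy balance. The sketch below is a rough estimate under stated assumptions: water properties are approximate room-temperature values, the 100 kPa loop pressure drop and 50 percent pump efficiency are hypothetical, and pressure drop is assumed to scale linearly with flow (a laminar idealization; turbulent loops scale more steeply).

```python
WATER_DENSITY = 997.0          # kg/m^3, approximate value near room temperature
WATER_SPECIFIC_HEAT = 4180.0   # J/(kg K), approximate


def required_flow(heat_w, delta_t_k,
                  density=WATER_DENSITY, specific_heat=WATER_SPECIFIC_HEAT):
    """Volumetric flow (m^3/s) needed to absorb heat_w with a coolant rise of delta_t_k."""
    return heat_w / (density * specific_heat * delta_t_k)


def pump_power(pressure_drop_pa, flow_m3_s, efficiency=0.5):
    """Pump input power (W): hydraulic power divided by an assumed efficiency."""
    return pressure_drop_pa * flow_m3_s / efficiency


rack_heat_w = 80_000.0            # rack power figure taken from the text
base_pressure_pa = 100_000.0      # hypothetical loop pressure drop at the base flow

base_flow = required_flow(rack_heat_w, delta_t_k=10.0)
base_power = pump_power(base_pressure_pa, base_flow)

# Halving the allowed coolant temperature rise doubles the required flow.
double_flow = required_flow(rack_heat_w, delta_t_k=5.0)
# Assumption: pressure drop grows in proportion to flow (laminar idealization).
double_pressure_pa = base_pressure_pa * (double_flow / base_flow)
double_power = pump_power(double_pressure_pa, double_flow)

print(f"Base flow: {base_flow * 60_000:.0f} L/min, pump power: {base_power:.0f} W")
print(f"Doubled flow: {double_flow * 60_000:.0f} L/min, pump power: {double_power:.0f} W")
```

Under these assumptions, doubling the flow quadruples the pump power, which is why lowering thermal resistance is usually preferable to simply pushing more coolant.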

Thermal limitations increasingly influence processor design decisions in modern computing systems. Semiconductor manufacturers must consider power density constraints when planning transistor placement, clock frequency, and architectural complexity. Excessive heat generation can force performance throttling even when computational resources remain available. Cooling innovations therefore play a direct role in enabling future performance scaling for artificial intelligence workloads. Research groups across academia and industry now investigate methods that merge chip fabrication with micro-scale fluid engineering. Engineers recognize that cooling cannot remain an external afterthought if computational densities continue increasing at current rates. The exploration of embedded microchannel cooling technologies reflects this shift toward deeper integration between semiconductor design and thermal management strategies.

Inside the Silicon: How Microfluidic Cooling Channels Work

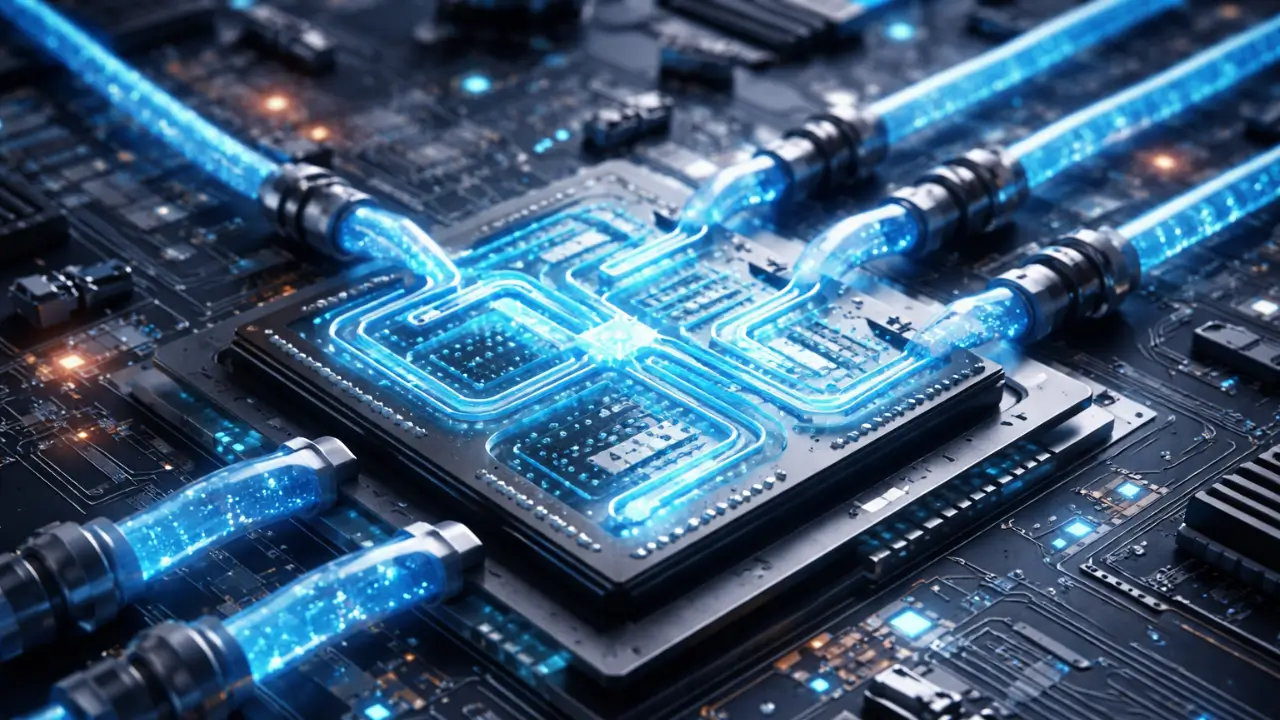

Engineers exploring chip-integrated thermal management have turned to micro-scale fluid mechanics as a promising pathway for efficient heat extraction. Microfluidic cooling architectures incorporate extremely small coolant channels etched directly into silicon substrates or intermediate packaging layers. Semiconductor fabrication techniques allow engineers to create these channels with dimensions measured in micrometers while maintaining precise structural tolerances. Coolant flows through these microchannels and absorbs heat directly from nearby transistor regions. Heat transfer efficiency improves significantly because the coolant operates in close proximity to the heat generation source. Researchers have demonstrated that microchannel cooling systems can achieve heat removal rates far beyond those possible with conventional surface-based cooling approaches.

Microchannel fabrication typically involves advanced lithography and etching processes similar to those used in semiconductor manufacturing. Engineers design fluid pathways that maximize surface area contact between the coolant and the surrounding silicon material. Channel geometry plays a critical role in determining fluid velocity, pressure distribution, and heat transfer performance. Narrow channels increase surface contact but also raise hydraulic resistance within the fluid circuit. Engineers must therefore balance thermal efficiency with fluid pumping requirements during the design process. Computational fluid dynamics models assist researchers in optimizing channel shapes, spacing, and orientation within the silicon substrate. These simulations allow engineers to evaluate different cooling architectures before committing to fabrication experiments.
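The trade-off between surface contact and hydraulic resistance can be sketched with textbook laminar-flow relations. The example below is a simplified estimate, not a substitute for computational fluid dynamics: it assumes fully developed laminar flow, a constant Nusselt number of 4.0, a circular-tube friction constant of 64, and approximate water properties. The channel dimensions are hypothetical.

```python
def hydraulic_diameter(width_m, height_m):
    """Hydraulic diameter of a rectangular channel: 4 * area / wetted perimeter."""
    return 2.0 * width_m * height_m / (width_m + height_m)


def heat_transfer_coefficient(d_h, conductivity=0.6, nusselt=4.0):
    """Convective coefficient h = Nu * k / D_h in W/(m^2 K); Nu is an assumed constant."""
    return nusselt * conductivity / d_h


def laminar_pressure_drop(d_h, length_m, velocity, viscosity=1.0e-3,
                          friction_constant=64.0):
    """Pressure drop (Pa) from the Darcy friction factor f = C / Re in laminar flow."""
    return friction_constant * viscosity * length_m * velocity / (2.0 * d_h ** 2)


channel_height_m = 300e-6   # hypothetical etch depth
channel_length_m = 0.01     # hypothetical 10 mm channel
velocity = 1.0              # m/s, assumed mean coolant velocity

for width_um in (200, 100, 50):
    d_h = hydraulic_diameter(width_um * 1e-6, channel_height_m)
    h = heat_transfer_coefficient(d_h)
    dp = laminar_pressure_drop(d_h, channel_length_m, velocity)
    print(f"width {width_um:>3} um: D_h = {d_h * 1e6:6.1f} um, "
          f"h = {h:8.0f} W/m^2K, pressure drop = {dp / 1000:6.1f} kPa")
```

Because the heat transfer coefficient scales with 1/D_h while pressure drop scales with 1/D_h squared, narrowing the channels costs pumping pressure faster than it gains heat transfer in this model.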

Fluid circulation through embedded channels relies on miniature manifolds that distribute coolant across the processor package. External pumps drive coolant through inlet channels, while outlet channels remove heated fluid from the silicon structure. Uniform distribution across all microchannels remains essential because uneven flow can create temperature gradients within the chip. Designers often implement parallel channel configurations to ensure balanced fluid flow across the entire cooling network. The microfluidic architecture must also integrate with existing semiconductor packaging methods without disrupting electrical connectivity. Packaging engineers therefore collaborate closely with thermal specialists when designing these systems. This integration ensures that cooling structures coexist with electrical interconnects and power delivery networks inside the processor package.
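The sensitivity of parallel channels to uneven flow can be illustrated with a hydraulic analogue of a parallel resistor network. The sketch below assumes that all channels share the same inlet-to-outlet pressure drop, so each channel's flow is inversely proportional to its hydraulic resistance. The resistance values and the function name `split_flow` are hypothetical.

```python
def split_flow(total_flow, resistances):
    """Divide total_flow among parallel channels that share one pressure drop."""
    conductances = [1.0 / r for r in resistances]
    total_conductance = sum(conductances)
    return [total_flow * g / total_conductance for g in conductances]


# Hypothetical relative hydraulic resistances; the third channel is partly constricted.
resistances = [1.0, 1.0, 1.5, 1.0]
flows = split_flow(4.0, resistances)

for index, flow in enumerate(flows, start=1):
    print(f"channel {index}: {flow:.2f} flow units")
```

The constricted channel receives less coolant than its neighbours, so the silicon above it runs hotter even though the total flow is unchanged.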

Coolant selection plays a central role in determining the effectiveness of microchannel heat removal. Engineers typically consider fluids with high thermal conductivity and favorable dielectric properties when designing embedded cooling systems. Water-based fluids offer strong thermal performance but require careful electrical isolation to prevent short circuits within electronic components. Dielectric liquids provide electrical safety but generally offer lower heat capacity and thermal conductivity than water. Researchers therefore investigate hybrid cooling fluids and additives that improve thermal performance without compromising electrical reliability. Fluid compatibility with silicon materials and microfabricated structures must also be evaluated to prevent long-term corrosion or material degradation. These considerations influence the long-term viability of chip-integrated cooling technologies.
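The heat capacity gap between coolant families can be compared with a simple energy balance. In the sketch below, the water properties are approximate room-temperature values, while the dielectric entry uses assumed, representative figures for a generic fluorinated liquid rather than data for any named product. The `Coolant` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density: float         # kg/m^3
    specific_heat: float   # J/(kg K)

    def temperature_rise(self, heat_w, flow_m3_s):
        """Bulk coolant temperature rise (K) when absorbing heat_w at a given flow."""
        return heat_w / (self.density * self.specific_heat * flow_m3_s)


water = Coolant("water", density=997.0, specific_heat=4180.0)
# Assumed representative values for a generic fluorinated dielectric liquid.
dielectric = Coolant("generic dielectric", density=1700.0, specific_heat=1100.0)

heat_w = 500.0        # hypothetical chip power
flow_m3_s = 1.0e-5    # 0.6 L/min, hypothetical flow

water_rise = water.temperature_rise(heat_w, flow_m3_s)
dielectric_rise = dielectric.temperature_rise(heat_w, flow_m3_s)

print(f"{water.name}: {water_rise:.1f} K rise")
print(f"{dielectric.name}: {dielectric_rise:.1f} K rise")
```

With these assumed properties the dielectric liquid warms roughly twice as much as water at the same flow, so it needs more flow or a larger temperature budget to carry the same heat.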

Microfluidic cooling provides another advantage through highly uniform temperature distribution across the processor die. Traditional cooling methods remove heat primarily through the top surface of the package, which can leave internal hotspots within the silicon structure. Embedded channels allow coolant to travel across multiple regions of the chip simultaneously. Heat therefore dissipates more evenly throughout the silicon substrate, reducing thermal gradients that can stress semiconductor materials. Uniform temperatures improve reliability because large temperature differences often accelerate material fatigue and device degradation. This property becomes particularly valuable for high-performance processors that operate continuously under heavy computational loads. Thermal uniformity therefore represents a key engineering motivation behind chip-integrated cooling research.

Microfluidic cooling research has progressed rapidly due to advances in microfabrication technology and semiconductor packaging techniques. Universities, semiconductor companies, and government laboratories actively investigate new channel geometries and fluid delivery mechanisms. Experimental prototypes have dissipated heat fluxes above one kilowatt per square centimeter under controlled laboratory conditions. Such performance levels far exceed the capabilities of traditional air-based cooling infrastructure. These demonstrations illustrate the potential of chip-integrated fluid systems for managing future processor heat loads. Continued research now focuses on scaling these laboratory prototypes into manufacturable technologies suitable for commercial semiconductor production.

Co-Designing Chips and Cooling Systems for Maximum Efficiency

The increasing thermal density of artificial intelligence processors has introduced a design philosophy where cooling infrastructure becomes an integral part of semiconductor architecture. Processor designers traditionally optimized transistor layouts and packaging structures independently from thermal management solutions implemented at the system level. Emerging chip-integrated cooling approaches require a different methodology because fluid channels occupy physical space within the semiconductor substrate. Semiconductor engineers must therefore account for cooling pathways during the earliest stages of chip architecture planning. Collaborative design frameworks now bring together electrical engineers, materials scientists, and thermal specialists to align computational performance with heat removal strategies. This integrated development model seeks to ensure that computational throughput, energy efficiency, and thermal stability evolve together rather than as isolated engineering considerations.

Advanced semiconductor packaging techniques provide the physical foundation required for integrated cooling architectures. Modern processors increasingly rely on chiplet-based designs where multiple functional dies connect through high-density interconnect fabrics. Interposers and advanced packaging substrates create opportunities for integrating fluid distribution networks beneath or around compute dies. Engineers can embed micro-scale channels within these packaging layers while maintaining electrical routing paths for power delivery and data transmission. Thermal engineers analyze fluid distribution patterns to ensure coolant reaches areas with the highest heat flux. Electrical designers simultaneously optimize signal pathways to prevent interference with cooling structures embedded within the same physical layers. These collaborative design processes transform processor packaging into a combined electrical and thermal infrastructure platform.

Three-dimensional stacking technologies further strengthen the case for integrating cooling systems directly into processor design workflows. Vertical integration places multiple compute layers on top of each other, dramatically increasing transistor density within a small footprint. Electrical engineers benefit from reduced interconnect distances that improve latency and bandwidth performance between stacked dies. Thermal challenges increase simultaneously because inner layers of stacked chips remain farther from conventional heat removal interfaces. Engineers address this issue by embedding cooling channels inside intermediate layers of the stack. Fluid flow across stacked layers can extract heat from multiple die surfaces rather than relying solely on the outer package interface. This structural integration ensures that cooling performance scales alongside computational density in vertically integrated architectures.
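The penalty that stacking imposes on inner layers can be seen in a two-die resistance model. The sketch below is an idealized comparison with hypothetical powers and resistances: in the top-cooled case, heat from the bottom die must cross an interlayer and then share the top die's path to the coolant, while in the interlayer-cooled case each die is assumed to reject heat to its own adjacent channel layer.

```python
def top_cooled_stack(p_top_w, p_bottom_w, r_sink, r_interlayer, coolant_c):
    """Two-die stack cooled only through the top surface."""
    # All heat from both dies leaves through the top die's path to the coolant.
    t_top = coolant_c + (p_top_w + p_bottom_w) * r_sink
    # The bottom die sits one interlayer resistance further from the coolant.
    t_bottom = t_top + p_bottom_w * r_interlayer
    return t_top, t_bottom


def interlayer_cooled_stack(p_top_w, p_bottom_w, r_channel, coolant_c):
    """Idealized stack where each die has its own embedded channel layer."""
    return coolant_c + p_top_w * r_channel, coolant_c + p_bottom_w * r_channel


# Hypothetical values: 150 W per die, resistances in K/W, 30 C coolant.
top_only = top_cooled_stack(150.0, 150.0, r_sink=0.1, r_interlayer=0.2, coolant_c=30.0)
embedded = interlayer_cooled_stack(150.0, 150.0, r_channel=0.1, coolant_c=30.0)

print(f"Top-cooled:        top {top_only[0]:.0f} C, bottom {top_only[1]:.0f} C")
print(f"Interlayer-cooled: top {embedded[0]:.0f} C, bottom {embedded[1]:.0f} C")
```

The model ignores lateral spreading and coolant heating along the channels, but it shows why the buried die becomes the limiting component when heat can only leave through the top surface.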

Thermal-electrical co-design also extends into power management strategies within advanced processors. Power delivery networks must distribute energy across multiple compute blocks while maintaining voltage stability under dynamic workloads. Cooling infrastructure influences power distribution because temperature affects electrical resistance and semiconductor switching behavior. Designers analyze temperature maps across the chip to identify regions where cooling channels should intersect with high-power compute units. Engineers refine these layouts iteratively using simulation tools that combine thermal, electrical, and fluid dynamic models. These models allow researchers to predict how cooling structures will interact with power delivery circuits during real computational workloads. The integration of such multidisciplinary simulation tools represents an essential step in designing reliable chip-integrated cooling architectures.
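The coupling between temperature and power can be sketched as a fixed-point iteration, which is one simple way a combined thermal and electrical model might be solved. Everything in the example is an assumption for illustration: leakage power is modeled as an exponential function of temperature with a hypothetical sensitivity, the chip is lumped into a single thermal resistance, and the function names are invented.

```python
import math


def leakage_power(temp_c, leak_ref_w=50.0, ref_temp_c=25.0, sensitivity_per_k=0.02):
    """Assumed leakage model: grows exponentially with temperature."""
    return leak_ref_w * math.exp(sensitivity_per_k * (temp_c - ref_temp_c))


def solve_operating_point(dynamic_w, r_th, coolant_c, tol=1e-6, max_iter=200):
    """Iterate power -> temperature -> power until the temperature stops changing."""
    temp = coolant_c
    for _ in range(max_iter):
        power = dynamic_w + leakage_power(temp)
        new_temp = coolant_c + r_th * power
        if abs(new_temp - temp) < tol:
            return new_temp, power
        temp = new_temp
    raise RuntimeError("no convergence: the model may be in thermal runaway")


# Hypothetical thermal resistances (K/W) for a surface-cooled and an embedded design.
t_surface, p_surface = solve_operating_point(500.0, r_th=0.06, coolant_c=30.0)
t_embedded, p_embedded = solve_operating_point(500.0, r_th=0.02, coolant_c=30.0)

print(f"Surface-cooled: {t_surface:.1f} C at {p_surface:.0f} W")
print(f"Embedded:       {t_embedded:.1f} C at {p_embedded:.0f} W")
```

In this toy model the lower thermal resistance reduces not only temperature but also total power, because the cooler chip leaks less. Production co-simulation tools resolve the same feedback spatially rather than with a single lumped node.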

Manufacturing considerations also shape the development of integrated cooling solutions for semiconductor devices. Fabrication processes must support both electronic circuitry and micro-scale fluid structures within the same wafer manufacturing environment. Engineers evaluate etching techniques, bonding processes, and wafer thinning methods to ensure that cooling channels maintain structural integrity during fabrication. Manufacturing compatibility becomes essential because semiconductor production requires extremely high yield rates for commercial viability. Researchers therefore explore fabrication workflows that incorporate microfluidic features without introducing additional defects into the silicon substrate. This challenge has driven significant research into microfabrication techniques capable of supporting complex three-dimensional structures within semiconductor wafers. The successful integration of these manufacturing methods will determine the scalability of chip-integrated cooling systems in future processor generations.

Collaborative chip and cooling design ultimately shifts thermal management from a reactive discipline to a proactive engineering strategy. Cooling systems no longer serve merely as external infrastructure attached to completed hardware components. Semiconductor architects increasingly treat heat removal pathways as part of the computational platform itself. Processor performance improvements often depend on maintaining stable operating temperatures under extreme workloads. Integrated cooling architectures allow engineers to design processors that operate closer to their theoretical performance limits. This shift toward co-design reflects a broader evolution in computing infrastructure where energy efficiency and thermal engineering shape the trajectory of processor innovation.

Materials, Fluids, and Reliability Challenges in Microfluidics

Embedding coolant pathways inside semiconductor devices introduces a set of engineering challenges that extend beyond conventional thermal management systems. Microfluidic structures operate within extremely small geometries where fluid behavior differs from that observed in larger cooling pipes. Surface tension, viscosity effects, and pressure variations influence the stability of fluid flow inside micro-scale channels. Engineers must design channel networks that maintain consistent coolant distribution across the entire processor die. Small variations in channel dimensions can lead to uneven flow patterns that produce temperature differences across the chip. Precision manufacturing therefore becomes essential for maintaining reliable cooling performance in microfluidic systems.
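The sensitivity to channel dimensions can be estimated from the Hagen-Poiseuille relation. As an idealization, assume a circular channel of diameter $D$ and length $L$ carrying laminar flow of a fluid with viscosity $\mu$ under a fixed pressure drop $\Delta P$; real etched channels are rectangular, so the exponent is only indicative.

$$
Q = \frac{\pi D^{4}\,\Delta P}{128\,\mu L}
\quad\Longrightarrow\quad
\frac{\delta Q}{Q} \approx 4\,\frac{\delta D}{D}
$$

Under this assumption, a channel that is 5 percent narrower than its neighbours carries $(0.95)^{4} \approx 0.81$ of their flow, a reduction of roughly 19 percent from a dimensional error that is small in absolute terms.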

Material compatibility represents another critical challenge when coolant circulates directly within semiconductor structures. Silicon substrates, bonding materials, and interconnect metals must remain stable under continuous exposure to cooling fluids. Chemical interactions between coolant and structural materials could lead to corrosion or contamination over long operational lifetimes. Engineers therefore conduct extensive compatibility testing when evaluating potential coolant candidates for microfluidic systems. Protective coatings and passivation layers may be applied to channel surfaces to prevent chemical reactions with the fluid medium. These material engineering strategies ensure that embedded cooling channels remain structurally stable throughout years of continuous processor operation.

Fluid selection also plays a decisive role in determining the long-term reliability of embedded cooling architectures. Cooling fluids must exhibit favorable thermal conductivity and heat capacity to absorb energy efficiently from the silicon substrate. Electrical insulation properties remain equally important because conductive fluids could damage semiconductor circuitry if leakage occurs. Engineers evaluate dielectric liquids that combine safe electrical characteristics with adequate thermal transport properties. Water-based coolants may also be considered when strong electrical isolation barriers exist between fluid pathways and electronic components. Each fluid candidate undergoes rigorous testing to determine its compatibility with micro-scale channel structures and semiconductor materials. These evaluations help engineers identify coolant formulations suitable for long-term operation within chip-integrated systems.

Leakage prevention represents one of the most critical reliability concerns associated with chip-integrated cooling systems. Micro-scale channels operate under pressure as coolant circulates through the silicon substrate. Structural defects or bonding failures could allow coolant to escape into surrounding electronic regions. Engineers address this risk through advanced wafer bonding techniques that create extremely strong seals between silicon layers. Redundant sealing structures and pressure monitoring systems may also be integrated into the cooling architecture. Real-time monitoring sensors allow engineers to detect abnormal pressure conditions before they lead to system failure. These protective measures ensure that embedded cooling structures maintain safe operation throughout the processor lifecycle.
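A pressure monitoring check of the kind described above might look like the following sketch. The interpretation of the readings is an assumption: with a pump delivering roughly constant flow, a sustained drop in inlet pressure is treated as a possible leak and a sustained rise as a possible blockage. The function name, thresholds, and sample readings are all hypothetical.

```python
def classify_pressure(readings_kpa, nominal_kpa, tolerance_kpa, window=3):
    """Compare the mean of the most recent readings against a nominal band."""
    if not readings_kpa:
        raise ValueError("at least one pressure reading is required")
    recent = readings_kpa[-window:]
    mean_pressure = sum(recent) / len(recent)
    deviation = mean_pressure - nominal_kpa
    if deviation < -tolerance_kpa:
        return "possible leak"
    if deviation > tolerance_kpa:
        return "possible blockage"
    return "normal"


# Hypothetical inlet pressure traces in kPa.
print(classify_pressure([50.0, 50.5, 49.8], nominal_kpa=50.0, tolerance_kpa=5.0))
print(classify_pressure([50.0, 42.0, 41.0, 40.0], nominal_kpa=50.0, tolerance_kpa=5.0))
print(classify_pressure([50.0, 58.0, 59.0, 60.0], nominal_kpa=50.0, tolerance_kpa=5.0))
```

Averaging over a short window keeps a single noisy sample from triggering an alarm; a real system would tune the window and tolerance to the sensor and pump characteristics.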

Pressure management within microfluidic cooling networks introduces additional engineering complexity. Narrow channel geometries increase hydraulic resistance and require carefully controlled pumping systems to maintain stable coolant flow. Excessive pressure could damage fragile semiconductor structures or weaken bonding interfaces between silicon layers. Engineers therefore design fluid circulation systems that operate within tightly controlled pressure limits. Computational models help researchers predict how fluid pressure will evolve across complex channel networks embedded within the chip. These simulations guide the design of inlet manifolds, outlet pathways, and pumping mechanisms that support stable fluid movement. Effective pressure management ensures that microfluidic cooling systems remain both efficient and structurally safe during long-term operation.
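A first-pass pressure budget can be sketched by treating the cooling network as hydraulic resistances: an inlet manifold in series with a bank of identical parallel channels and an outlet manifold. The example below assumes laminar flow in circular channels (Hagen-Poiseuille), and the manifold resistances, channel count, flow rate, and 100 kPa pressure limit are hypothetical values chosen only for illustration.

```python
import math


def tube_resistance(diameter_m, length_m, viscosity=1.0e-3):
    """Hagen-Poiseuille hydraulic resistance of a circular channel in Pa s/m^3."""
    return 128.0 * viscosity * length_m / (math.pi * diameter_m ** 4)


def network_pressure_drop(flow_m3_s, r_inlet, r_channel, n_channels, r_outlet):
    """Pressure drop (Pa): manifolds in series with n identical parallel channels."""
    r_total = r_inlet + r_channel / n_channels + r_outlet
    return flow_m3_s * r_total


r_channel = tube_resistance(diameter_m=100e-6, length_m=0.01)
pressure_pa = network_pressure_drop(
    flow_m3_s=1.0e-6,     # 60 mL/min total flow (hypothetical)
    r_inlet=5.0e9,        # hypothetical manifold resistance
    r_channel=r_channel,
    n_channels=100,
    r_outlet=5.0e9,       # hypothetical manifold resistance
)

pressure_limit_pa = 100_000.0   # assumed safe limit for the bonded structure
within_limit = pressure_pa <= pressure_limit_pa

print(f"Network pressure drop: {pressure_pa / 1000:.1f} kPa")
print(f"Within assumed limit: {within_limit}")
```

Because channel resistance scales with the inverse fourth power of diameter, small reductions in channel size can consume the pressure budget quickly, which is why these estimates are normally refined with detailed simulation.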

Reliability testing plays a central role in determining whether chip-integrated cooling systems can transition from laboratory research into commercial semiconductor products. Engineers subject experimental prototypes to extensive stress testing under high temperature, pressure, and vibration conditions. Long-duration experiments evaluate how cooling channels respond to continuous fluid circulation over extended operational periods. Researchers also analyze the impact of thermal cycling that occurs when processors repeatedly transition between idle and peak workloads. These evaluations reveal potential failure mechanisms such as channel blockage, material fatigue, or bonding degradation. Comprehensive reliability assessments help engineers refine designs that can withstand the demanding conditions present in large-scale computing environments.

From Data Centres to Edge AI: Deployment Pathways for Chip-Level Cooling

Experimental research into chip-integrated cooling architectures has begun to influence discussions about future computing infrastructure across hyperscale data centres and distributed edge systems. Studies evaluating embedded cooling demonstrate that placing coolant pathways close to heat sources can sustain higher processor power densities compared with traditional surface cooling methods. Researchers often examine these designs in the context of high-performance computing environments where processors operate under continuous heavy workloads. Such investigations indicate that embedded cooling could support increased compute density if manufacturing and reliability challenges are addressed. Hyperscale operators monitor these developments because thermal limits already constrain the scaling of accelerator-based servers used for artificial intelligence training. Ongoing pilot research therefore evaluates how chip-integrated cooling concepts might interact with existing liquid cooling infrastructure within large computing facilities.

Edge computing environments represent another scenario frequently examined in research discussions around advanced thermal management. Compact edge systems must often deliver significant processing capability within physically constrained hardware platforms. Conventional air cooling approaches may struggle in small enclosures operating outside controlled data centre environments. Researchers therefore investigate whether embedded cooling technologies could help maintain stable processor temperatures in compact computing hardware. Experimental studies suggest that localized heat extraction can improve thermal efficiency for high-power electronic devices operating in limited spaces. These findings remain largely exploratory because commercial deployment of chip-integrated cooling technologies has not yet occurred at scale.

Hybrid cooling architectures may combine chip-integrated cooling with established liquid cooling technologies already deployed in modern data centres. Cold plates, immersion cooling tanks, and facility water loops provide large-scale heat transport away from server hardware. Microfluidic channels embedded within processors would handle the initial stage of heat extraction directly at the silicon level. Heated coolant from these microchannels could then transfer energy to secondary cooling loops connected to existing infrastructure. This layered cooling architecture allows each system component to operate within its optimal performance range. Engineers consider such hybrid solutions practical pathways for gradually introducing chip-integrated cooling into existing computing facilities.

The progression from laboratory demonstrations to commercial deployment depends heavily on manufacturing scalability and long-term reliability validation. Embedded cooling channels introduce additional fabrication complexity because semiconductor wafers must accommodate both electronic circuitry and microfluidic structures. Researchers continue investigating wafer bonding methods, micro-etching processes, and packaging strategies capable of producing these structures without reducing fabrication yield. Current demonstrations typically occur within research laboratories or prototype development environments rather than mass-produced semiconductor products. Academic reviews consistently highlight manufacturing compatibility and reliability testing as critical barriers that must be addressed before widespread adoption. These challenges explain why chip-integrated cooling remains an active research field rather than a commercially deployed cooling standard in modern processors.

The evolution of artificial intelligence workloads will strongly influence how quickly these cooling technologies move toward real-world deployment. Training large neural networks demands enormous computational throughput and sustained processor performance. High-performance processors operating under continuous heavy workloads generate persistent heat that stresses conventional cooling infrastructure. Chip-integrated cooling technologies offer a pathway for sustaining these workloads without sacrificing reliability or efficiency. Engineers continue to evaluate how such cooling architectures could support future generations of AI hardware with significantly higher power densities. These evaluations will shape the thermal strategies used in both centralized data centres and distributed computing environments in the coming decades.

The Future of Thermal Architecture in AI Computing

Thermal management has gradually evolved from facility-level infrastructure toward deeper integration within computing hardware itself. Early data centres relied almost entirely on airflow systems that removed heat from server racks through room-scale cooling equipment. The rise of high-performance computing and artificial intelligence workloads introduced heat densities that demanded more efficient liquid-based cooling technologies. Chip-integrated cooling architectures extend this evolution further by targeting heat removal at the silicon level. Micro-scale fluid channels embedded within semiconductor structures shorten the thermal pathway between the heat source and the cooling medium. This architectural shift reflects a broader recognition that thermal engineering must evolve alongside semiconductor innovation.

Future processor architectures will likely depend on integrated thermal solutions that support extreme computational density without compromising energy efficiency. Semiconductor designers already face constraints where heat generation limits achievable performance improvements. Embedded cooling networks offer a mechanism for maintaining stable operating temperatures even as transistor density continues to increase. Integrated cooling may also enable new processor architectures that would otherwise remain impractical due to thermal limitations. Continued research across materials science, fluid mechanics, and semiconductor engineering will determine how quickly these systems mature. Engineers across academia and industry therefore continue exploring how micro-scale fluid systems could redefine the thermal architecture of advanced computing platforms.

Advances in semiconductor packaging, microfabrication technology, and fluid engineering continue to expand experimental research into chip-integrated cooling systems. Universities, research laboratories, and several technology companies have developed prototype processors that incorporate micro-scale fluid channels for heat removal. These demonstrations illustrate the technical feasibility of extracting heat directly from silicon structures under controlled conditions. However, researchers still evaluate long-term reliability, manufacturing scalability, and integration with existing semiconductor production workflows. Review studies emphasize that these engineering challenges must be resolved before such technologies can enter large-scale commercial semiconductor manufacturing. Current work therefore focuses on validating reliability and fabrication compatibility rather than immediate industry deployment.

The trajectory of artificial intelligence infrastructure suggests that thermal innovation will remain a central factor in sustaining computational growth. Processor power densities continue increasing as researchers develop more capable machine learning models and training systems. Cooling technologies that operate directly at the silicon level could provide the thermal headroom required for these advancements. Microfluidic cooling represents one approach that aligns with the long-term direction of semiconductor scaling and advanced packaging. Engineers continue refining these systems to address reliability, manufacturability, and integration challenges within commercial hardware platforms. The convergence of semiconductor design and fluid engineering may therefore define the next generation of thermal architecture for high-performance computing systems.