The Myth of the Infinite Cloud

Grounded Cloud Computing challenges the notion of cloud as a limitless, abstract resource, emphasizing the alignment of infrastructure, software, and strategy with physical realities. Cloud computing has often been portrayed as an on-demand platform that developers and organizations can draw upon without concern for the underlying hardware. Early cloud narratives highlighted elasticity and instant scalability, fostering the perception that capacity could expand or contract without consequences. These abstractions relied on virtualization layers, containerization, and software-defined networking, which masked the reality that computing resources depend on tangible components such as servers, storage, and network hardware. Grounded Cloud Computing reframes these assumptions by recognizing that physical infrastructure, energy, and operational constraints shape performance and reliability. By integrating material realities into cloud design, organizations can achieve predictable, efficient, and sustainable outcomes.

The idea of infinite cloud capacity also reinforced misconceptions around deployment planning, energy usage, and latency, suggesting that applications could operate anywhere, anytime, without physical constraints. In practice, the demand for high-density computation, storage, and network throughput reveals that every cloud deployment is grounded in energy, cooling, and spatial realities. Over time, the illusion of unlimited scalability has given way to an acknowledgment that cloud computing, while highly flexible, is bounded by infrastructure and operational limitations.

Understanding the Limits Behind Cloud Abstraction

Engineers design cloud infrastructure around tangible assets that require careful planning and operational oversight, despite the perception of boundless flexibility. Data centers deploy power delivery systems, cooling infrastructure, network interconnects, and physical racks that determine where and how workloads execute. Developers interact with virtualized interfaces, but orchestration systems actively balance hardware capabilities, energy consumption, and thermal dynamics. Abstraction layers enable seamless provisioning, yet they reveal constraints when workloads scale and stress the system. Organizations running performance-sensitive applications, such as AI training or high-frequency computation, now confront the physical limits of cloud resources. Recognizing these constraints reframes cloud computing as an industrial system rather than a purely digital abstraction, requiring both technical insight and operational strategy to deliver predictable results.

Cloud computing’s promise of abstraction also masked the interdependencies between compute, storage, and networking systems. Performance variability, energy draw, and thermal considerations were often invisible to software developers, creating misalignment between workload expectations and infrastructure capabilities. Early cloud frameworks assumed generic workload profiles that could be distributed anywhere without accounting for locality, latency, or resource contention. In reality, the deployment of high-intensity workloads exposes limitations in bandwidth, processing capacity, and thermal dissipation, forcing operators to consider infrastructure as a first-class design element. This growing awareness has spurred a shift toward cloud systems that integrate physical constraints into operational and architectural decisions. The shift underscores a fundamental truth: while cloud virtualization abstracts many complexities, it cannot eliminate the influence of energy, cooling, and spatial realities on performance and reliability.

From Virtual to Tangible: The Neo Cloud Transition

Modern cloud deployments reflect a shift in which engineers design infrastructure around physical realities. These next-generation architectures, referred to here as Neo Clouds, integrate constraints such as energy delivery, thermal management, and physical footprint directly into orchestration and scheduling. Operators map workloads to resources that meet their performance requirements and fit within infrastructure capabilities. Engineers incorporate physical context into scheduling algorithms, enabling deterministic deployment strategies that consider hardware availability, network latency, and power limits. By transitioning from abstract to tangible infrastructure, designers resolve inefficiencies in generic elasticity models and align allocation with real-world limitations. Teams now orchestrate workloads with a dual focus on software intent and physical feasibility, recognizing that infrastructure cannot scale infinitely without consequence.

Modern cloud deployments require mapping workload profiles to infrastructure capabilities. Operators evaluate energy consumption, cooling efficiency, rack density, and network topology to ensure reliable execution. Engineers configure scheduling systems to apply constraints like thermal limits, peak energy availability, and proximity to compute nodes. Architects embed physical parameters into orchestration layers, reducing resource contention and preventing unpredictable performance. This proactive approach contrasts with traditional clouds that deferred physical considerations to reactive operations. By actively designing with material awareness, teams maintain predictable, efficient, and optimized cloud environments.
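The constraint checks described above can be illustrated with a minimal sketch. It assumes a scheduler that treats power headroom and a thermal limit as hard constraints before choosing a rack. The `Rack` and `Workload` types, their field names, and the "most headroom wins" tie-break are hypothetical choices made for illustration, not a description of any particular orchestrator.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rack:
    name: str
    power_headroom_kw: float  # unused power budget (hypothetical field)
    inlet_temp_c: float       # latest inlet temperature reading
    max_inlet_temp_c: float   # thermal limit for this rack


@dataclass
class Workload:
    name: str
    power_draw_kw: float      # expected sustained draw


def feasible(rack: Rack, job: Workload) -> bool:
    """A rack qualifies only if it has enough power headroom and is below its thermal limit."""
    return (rack.power_headroom_kw >= job.power_draw_kw
            and rack.inlet_temp_c < rack.max_inlet_temp_c)


def place(job: Workload, racks: list[Rack]) -> Optional[Rack]:
    """Pick the feasible rack with the most power headroom, or None if none qualifies."""
    candidates = [r for r in racks if feasible(r, job)]
    if not candidates:
        return None
    chosen = max(candidates, key=lambda r: r.power_headroom_kw)
    chosen.power_headroom_kw -= job.power_draw_kw  # reserve the power for this job
    return chosen
```

Returning `None` instead of forcing a placement is the point of the sketch: a job that does not fit the physical envelope is rejected or queued rather than scheduled onto hardware that cannot sustain it.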

Neo Clouds also emphasize the interaction between software deployment strategies and tangible infrastructure conditions. Workloads are scheduled not only according to logical resource requirements but also with consideration for power availability, cooling efficiency, and geographic placement. This ensures that applications perform consistently even under high computational load or constrained energy environments. Infrastructure-aware orchestration systems monitor real-time conditions, such as temperature fluctuations and network congestion, adjusting placements dynamically to maintain service levels. The focus on physical grounding reshapes cloud design from abstract planning to context-sensitive execution, integrating operational realities into architectural choices. Neo Clouds therefore offer a bridge between the virtual flexibility developers expect and the material constraints operators must manage.
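As a rough illustration of adjusting placements from real-time conditions, the sketch below plans migrations away from sites whose temperature reading has reached a limit. The function name, the dictionary shapes, and the policy of sending everything to the single coolest site are assumptions made for brevity; a real system would also weigh capacity, network congestion, and migration cost.

```python
def plan_migrations(placements, temps_c, limit_c):
    """Return {workload: target_site} for workloads running on sites at or above limit_c.

    placements: dict mapping workload name -> current site
    temps_c:    dict mapping site -> latest temperature reading (every site is assumed present)
    """
    hot = {site for site, temp in temps_c.items() if temp >= limit_c}
    cool = [site for site in temps_c if site not in hot]
    if not cool:
        return {}  # nowhere cooler to go; leave placements unchanged
    target = min(cool, key=lambda site: temps_c[site])
    return {job: target for job, site in placements.items() if site in hot}
```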

The Workload-First Imperative

Modern cloud design increasingly centers workloads as the primary driver of infrastructure decisions. Traditional elasticity-focused clouds assumed generic, fungible workloads that could be shifted or scaled without impacting system efficiency. Today, high-demand applications, especially those involving AI, analytics, or real-time processing, require specialized infrastructure profiles tailored to their compute, memory, and networking needs. Workload-first cloud design maps software requirements to physical resources, ensuring that execution aligns with energy availability, cooling capacity, and latency constraints. This approach allows operators to anticipate performance bottlenecks and optimize placement, rather than relying on reactive scaling that may overload physical systems. By treating workloads as the architectural starting point, cloud environments achieve predictable performance and operational efficiency under diverse conditions.

Workload-first principles extend to orchestration policies and deployment automation. Scheduling engines incorporate metrics related to energy use, thermal limits, and proximity to critical network paths. Specialized instance types are designed to match the unique requirements of specific workloads, whether AI training clusters with high interconnect demands or edge inference nodes for low-latency computation. Placement decisions now balance performance objectives with physical constraints, ensuring that infrastructure resources are used efficiently and sustainably. The shift toward workload-centric design reduces reliance on overprovisioning and highlights the importance of integrating physical realities into cloud architecture planning. By making workloads the primary lens through which cloud environments are designed, operators can harmonize software performance with infrastructure capabilities.

Physical Constraints Are the New Architects

Physical realities are increasingly the principal factors guiding cloud infrastructure design, challenging assumptions that software abstractions alone can dictate architecture. Energy availability, cooling capacity, land scarcity, and hardware limits now shape decisions about where and how data centers are built. High-density computing clusters demand stable power delivery systems and advanced thermal management to maintain consistent performance and prevent hardware degradation. Network topology, physical rack layout, and proximity to power grids or renewable energy sources influence workload placement, making infrastructure planning a complex balance between physical feasibility and operational efficiency. Operators now design facilities with these constraints as first-order parameters rather than secondary concerns, integrating them into orchestration strategies and long-term deployment roadmaps. By treating energy, thermodynamics, and spatial availability as architectural levers, cloud infrastructure evolves into a system where physical constraints define both capability and growth potential.

Energy, Heat, and Spatial Planning

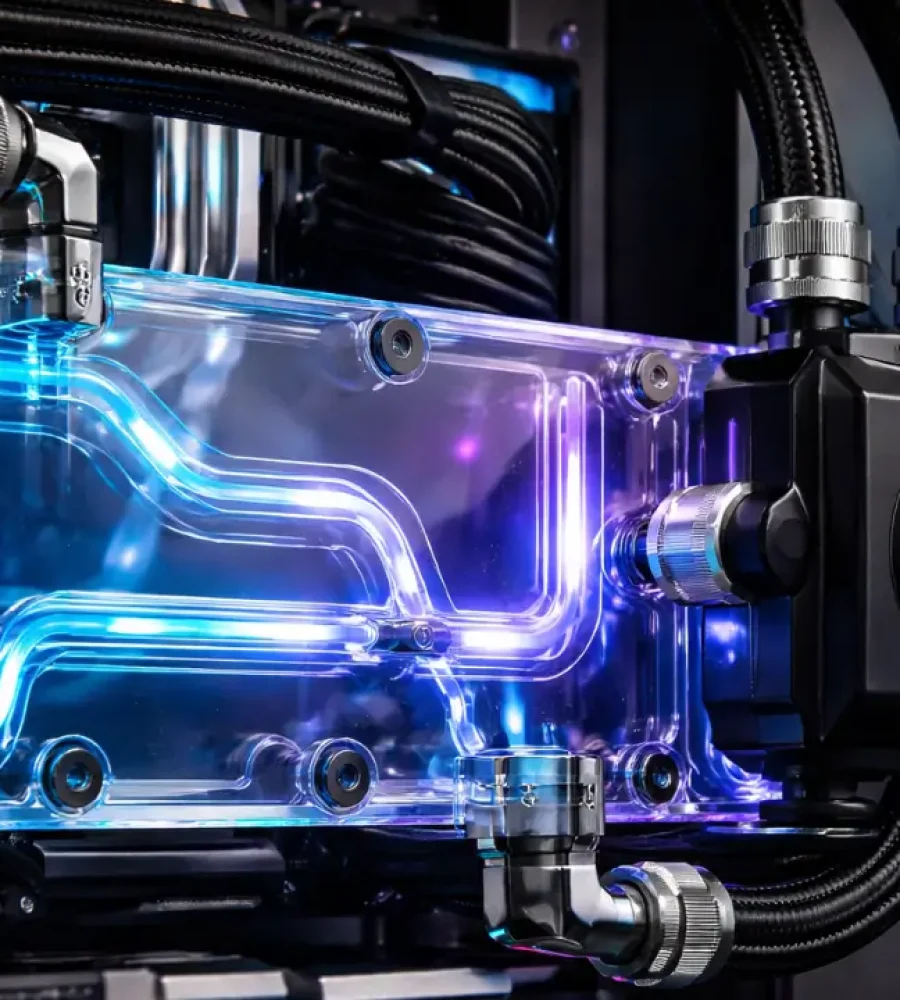

Modern data centers rely on energy management systems that monitor consumption patterns and adjust workload distribution in response to dynamic constraints. Cooling infrastructure, whether air-based or liquid-cooled, dictates rack density and spatial layout, influencing both efficiency and performance. Physical proximity to network backbones and end users affects latency and throughput, integrating geographic and operational realities into infrastructure planning. Hardware choices are also constrained by thermal limits and energy efficiency targets, leading to careful selection of processors, accelerators, and storage devices to maximize performance within material limitations. Resource orchestration now incorporates these parameters, aligning software deployments with the tangible realities of the physical environment. By embedding energy and spatial considerations into design and operation, cloud infrastructure becomes more predictable, resilient, and efficient.
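The link between cooling and rack density comes down to simple arithmetic. The sketch below assumes that essentially all power drawn by a server must be removed as heat, so a rack can hold only as many servers as its cooling capacity and its physical space both allow. The function name and the example figures are hypothetical.

```python
def servers_per_rack(cooling_capacity_w: int, server_draw_w: int,
                     rack_units: int, server_units: int) -> int:
    """Number of servers a rack can hold, limited by cooling and by physical space."""
    thermal_limit = cooling_capacity_w // server_draw_w  # servers the cooling can support
    space_limit = rack_units // server_units             # servers that physically fit
    return min(thermal_limit, space_limit)
```

With illustrative numbers, a 42U rack of 2U servers drawing 800 W each holds 18 servers when cooling is capped at 15 kW, so cooling is the binding constraint. Raising cooling capacity to 40 kW makes space the limit, at 21 servers.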

Cloud design is no longer dictated solely by virtual abstraction layers; instead, physical constraints act as architects, shaping decisions around site selection, rack layout, and network topology. Thermodynamics influence cooling system design, while energy availability affects both hardware utilization and operational scaling. Even land scarcity and regulatory zoning impact where cloud facilities can be deployed, emphasizing that cloud expansion is bounded by environmental and logistical realities. These considerations force providers to balance efficiency, resilience, and scalability while respecting the immutable laws of physics. Infrastructure planning now involves multi-disciplinary collaboration among engineers, architects, and operators to ensure that workloads can run reliably within material limits. Recognizing physical constraints as central to architecture marks a fundamental shift toward grounded cloud computing, bridging the gap between software intent and hardware reality.

Software Meets Reality

As infrastructure becomes materially grounded, software development practices evolve to respect these constraints rather than assuming limitless virtual capacity. Traditional cloud-native applications decoupled software logic from hardware specifics, relying on orchestration layers to allocate resources transparently. By contrast, engineers now design deployment strategies that balance application performance with operational feasibility, placing workloads where infrastructure can support them. Operators integrate these constraints into orchestration tools, dynamically adjusting placement and execution according to real-time data about energy, cooling, and network conditions. This approach improves predictability, reduces the risk of resource contention, and aligns software behavior with the capabilities of the underlying physical environment. By embedding knowledge of material constraints into software design, organizations achieve performance consistency and operational resilience across diverse cloud environments.

Infrastructure-Aware Application Strategies

Grounded software design includes partitioning workloads to respect locality and physical constraints, such as placing latency-sensitive components near users while locating compute-heavy tasks where energy and cooling resources are optimal. Developers collaborate with infrastructure teams to encode policies into orchestration engines that enforce resource-aware execution. Techniques such as workload profiling, adaptive scaling, and regional placement enable applications to operate efficiently across heterogeneous infrastructure. Software systems now anticipate potential bottlenecks arising from energy or thermal limitations, integrating these considerations into CI/CD pipelines and deployment planning. Infrastructure-aware strategies also improve resource utilization and operational efficiency by ensuring that workloads do not exceed the capabilities of physical assets.
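One way to picture the partitioning described above is a small policy that sorts application components into tiers by their latency budget. This is a sketch under stated assumptions: the tier names `edge` and `core`, the 50 ms threshold, and the component names in the usage note are all hypothetical.

```python
def assign_tiers(latency_budgets_ms, edge_threshold_ms=50.0):
    """Map each component to 'edge' (near users) or 'core' (where power and cooling are best).

    latency_budgets_ms: dict mapping component name -> tolerable response time in milliseconds
    """
    return {
        name: "edge" if budget_ms <= edge_threshold_ms else "core"
        for name, budget_ms in latency_budgets_ms.items()
    }
```

For example, a component with a 30 ms budget would land in the `edge` tier, while a batch training job that tolerates minutes of delay would be assigned to `core`.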

Modern orchestration systems continuously evaluate infrastructure conditions and adjust workloads in real time to maintain stability and service levels. This includes monitoring energy consumption, thermal dynamics, and network performance, feeding that data back into placement and scaling decisions. Software engineers now collaborate with operators to define placement rules and scheduling policies that optimize both application outcomes and infrastructure efficiency. Applications become adaptive, responding to changing physical conditions rather than relying solely on pre-configured virtual abstractions. This integration of software logic with material awareness marks a shift in cloud design philosophy, where operational constraints directly inform software architecture. Grounded cloud computing thus redefines the developer-operator relationship, embedding infrastructure knowledge into every stage of the application lifecycle.

Rethinking Hyperscale Assumptions

Traditional hyperscale cloud models prioritized vast pooling of resources, generic scalability, and multi-tenancy as the solution for efficiency and reliability. These approaches often relied on overprovisioning, assuming that excess capacity could absorb unexpected workload spikes without considering energy, cooling, or spatial limitations. Modern high-demand workloads, particularly AI and analytics, reveal inefficiencies in such generalized models, including resource contention, virtualization overhead, and unpredictable performance.

Neo Clouds challenge these assumptions by prioritizing efficiency and workload alignment over raw scale, designing infrastructure that reflects operational realities rather than theoretical elasticity. Predictability, sustainability, and performance consistency become central goals, with orchestration systems making placement decisions that respect both application and physical constraints. By reevaluating hyperscale ideology, Neo Clouds demonstrate that strategic infrastructure design can deliver better outcomes than simply expanding capacity indefinitely.

Purpose-Built Infrastructure Over Generic Pooling

Hyperscale overprovisioning assumes that excess resources solve both performance and resilience challenges, but specialized workloads demand tailored solutions. Neo Clouds adopt purpose-built infrastructure, aligning compute, storage, and networking with the specific needs of workload types. Training-intensive AI clusters, for example, are co-located with high-bandwidth interconnects and advanced cooling, while inference or edge workloads prioritize proximity and low latency. This strategic alignment reduces energy waste, improves reliability, and ensures consistent performance. Infrastructure is designed with workload characteristics in mind, turning energy, thermal, and network constraints from operational challenges into inputs for architectural planning. By focusing on purpose-built design rather than generic pooling, cloud environments become both efficient and resilient, supporting diverse application requirements while respecting physical limits.

Hyperscale assumptions also fail to account for locality and jurisdictional constraints that affect deployment options. Neo Clouds integrate geographic, regulatory, and operational considerations into infrastructure planning, resulting in systems optimized for both performance and compliance. Data centers are no longer homogeneous global pools but are engineered according to workload type, energy availability, cooling capability, and legal requirements. This results in a more modular, adaptive, and efficient cloud ecosystem that balances scale with operational feasibility. By redefining the principles of hyperscale, cloud architects create systems that perform predictably, consume resources responsibly, and adapt to the diverse conditions of the modern computing landscape.

Locality and Latency: Geography Matters

Cloud computing is no longer abstracted from geography; the location of infrastructure now directly impacts performance, latency, and reliability. Users interacting with applications experience response times that are dictated by network paths, distances, and the physical placement of compute resources. To optimize application behavior, modern cloud architectures distribute workloads across geographically diverse nodes, situating latency-sensitive components closer to users and placing bulk compute tasks where power, cooling, and space are optimal. This approach requires planners to account for fiber availability, backbone redundancy, energy access, and regional regulations, integrating physical and policy constraints into deployment decisions. Geographic awareness transforms cloud strategy from a purely virtual design into a context-sensitive orchestration that balances performance, energy efficiency, and operational feasibility. The cloud, while still virtualized for the end user, must now acknowledge that every resource is tethered to a physical location, which becomes a critical determinant of system behavior.

Proximity-Driven Deployment

Infrastructure placement prioritizes proximity as a lever to improve performance and reliability. Edge data centers, regional clusters, and micro-hubs allow workloads to operate closer to users or connected systems, reducing latency while ensuring that energy and cooling constraints are respected. Network topology, fiber route redundancy, and interconnect capacity guide placement decisions alongside regulatory and environmental considerations. Scheduling engines integrate these parameters to place tasks in locations that balance speed, efficiency, and compliance, ensuring applications meet both operational and service level requirements. This approach redefines elasticity by incorporating geography into workload decisions rather than assuming uniform performance across all locations. Cloud architects now treat spatial awareness as a critical design input, making physical context a first-class factor in both planning and execution.
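Why proximity matters can be shown with a back-of-the-envelope calculation. Light in optical fiber travels at roughly two thirds of its speed in vacuum, or about 200 km per millisecond, so distance alone sets a floor on round-trip time. The sketch below uses that figure to filter candidate sites. The function names, site names, and distances are hypothetical, and real latency will be higher once routing, queuing, and processing are included.

```python
FIBER_KM_PER_MS = 200.0  # approximate propagation speed of light in optical fiber


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2.0 * distance_km / FIBER_KM_PER_MS


def sites_within_budget(distances_km, rtt_budget_ms):
    """Return the sites whose propagation-only round trip fits the latency budget."""
    return sorted(
        site for site, km in distances_km.items()
        if min_rtt_ms(km) <= rtt_budget_ms
    )
```

A site 1,000 km from its users cannot deliver a round trip under 10 ms regardless of how much capacity it has, which is why latency-sensitive work gravitates to edge and regional facilities.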

Geographic considerations also influence software design and orchestration strategies. Applications must adapt dynamically to the characteristics of the regions in which they run, including network latency, energy availability, and local infrastructure reliability. Multi-region deployments are planned to maintain performance while minimizing resource conflicts and energy inefficiencies. By embedding locality constraints into orchestration logic, cloud operators achieve predictable performance across diverse environments. Geography becomes not just a constraint but a parameter that shapes the architecture of the cloud, ensuring that virtual flexibility aligns with physical realities. Recognizing spatial factors in workload placement is a defining aspect of grounded cloud computing, reinforcing the principle that infrastructure cannot exist independent of location.

Neo Clouds and the AI Workload Divide

AI workloads increasingly shape cloud infrastructure, requiring operators to design systems tailored to different computational demands. Engineers build dense clusters with high-bandwidth interconnects for AI training, while inference workloads run closer to users to minimize latency. Operators allocate these workload-specific resources based on energy availability, thermal limits, and network topology. Scheduling systems now monitor real-time conditions and adjust placement dynamically, ensuring consistent performance for both training and inference tasks. By aligning infrastructure with workload characteristics, architects optimize efficiency, reduce operational risk, and maintain predictable outcomes. These approaches turn AI workload management into a combined software-infrastructure challenge, emphasizing the need for material awareness in cloud design.

Tailored Infrastructure for Training and Inference

AI workloads introduce unique pressures on data centers that challenge traditional abstractions. Training workloads generate sustained, high-power draw, often requiring advanced cooling solutions and careful energy planning. Inference workloads, by contrast, prioritize response time and distributed deployment, necessitating proximity to users and reliable network connectivity. Neo Clouds accommodate these divergent requirements through specialized instance types, regional deployment strategies, and orchestrated resource allocation. Energy, cooling, and physical proximity constraints are integrated into scheduling policies, ensuring that AI applications operate efficiently without exceeding infrastructure capabilities. The physical realities of running AI workloads drive innovation in both hardware design and cloud operations, reinforcing the necessity of grounding cloud strategies in material constraints.

High-density AI clusters influence decisions about site selection, rack layout, and interconnect design. Architects design facilities to support intensive thermal loads and sustained energy demands, integrating liquid cooling and airflow optimization. Operators coordinate workload placement across clusters to avoid overloading resources while meeting latency and throughput requirements. Engineers implement orchestration policies that distinguish between training and inference needs, enhancing resource utilization and operational efficiency. These strategies ensure that AI workloads execute reliably without exceeding infrastructure limits. By combining workload awareness with material constraints, operators create cloud systems that deliver high performance predictably and sustainably.

Sovereignty in the Cloud Era

National regulations increasingly influence cloud deployment, requiring operators to keep specific data within country borders. Engineers design infrastructure to comply with sovereignty laws while maintaining performance and reliability. Decision-makers select data center locations based on local energy, network availability, and legal compliance. Architects coordinate workloads across regions, ensuring that tasks execute in jurisdictions that satisfy regulatory mandates. Teams now integrate political, legal, and operational factors into planning, aligning physical infrastructure with policy requirements. By embedding sovereignty considerations into deployment strategies, operators balance compliance, efficiency, and operational resilience.

Policy-Driven Deployment Decisions

Legal and policy constraints guide multi-region strategies, influencing hybrid and distributed cloud deployments. Engineers plan site placement to satisfy data residency, energy sourcing, and regulatory conditions. Infrastructure teams enforce compliance through workload scheduling and orchestration policies. Architects adapt network topology and resource allocation to meet jurisdictional requirements without compromising performance. By actively managing these factors, teams ensure predictable operation while adhering to sovereignty mandates. These practices demonstrate that physical and legal realities jointly shape modern cloud infrastructure.
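Enforcing residency through scheduling can be as simple as filtering candidate regions before any performance-based choice is made. The following sketch assumes each region is tagged with a jurisdiction code and each workload carries a set of permitted jurisdictions. The function name, region identifiers, and country codes are hypothetical examples.

```python
def eligible_regions(allowed_jurisdictions, region_to_jurisdiction):
    """Return regions where a workload may run under its residency policy.

    allowed_jurisdictions: set of jurisdiction codes, or None if the workload is unrestricted
    region_to_jurisdiction: dict mapping region name -> jurisdiction code
    """
    if allowed_jurisdictions is None:
        return sorted(region_to_jurisdiction)
    return sorted(
        region for region, jurisdiction in region_to_jurisdiction.items()
        if jurisdiction in allowed_jurisdictions
    )
```

Applying the filter first means that latency or energy optimizations can only choose among regions that are already compliant, so a faster but non-compliant region is never selected.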

Culture and Philosophy of Grounded Cloud Design

Grounded cloud computing demands a cultural shift where engineers and operators adopt infrastructure-conscious practices. Teams now incorporate energy, cooling, and spatial constraints into design and orchestration decisions. Cross-functional collaboration ensures that software behavior aligns with physical realities. Organizations establish feedback loops to evaluate real-time performance, energy usage, and thermal conditions. Developers and operators actively integrate monitoring data into deployment strategies, improving predictability and efficiency. By embedding this mindset, organizations align technological innovation with operational feasibility and resource constraints.

Embedding Physical Awareness in Teams

Developers and operators adopt tools and practices that integrate infrastructure awareness into workflows. Continuous feedback loops allow teams to evaluate energy consumption, thermal performance, and network constraints as part of deployment planning. Design reviews incorporate questions about resource locality, cooling efficiency, and energy sourcing, reinforcing the connection between software intent and material feasibility. Cross-functional teams develop policies for workload placement, orchestration, and scaling that align operational efficiency with business goals. By instilling infrastructure-conscious thinking across roles, organizations cultivate a philosophy where technology decisions respect both digital abstraction and physical reality. The culture of grounded cloud design thus embeds operational intelligence into every layer of development, from code to hardware orchestration, ensuring alignment with material constraints.

Organizational mindset also affects long-term strategy and innovation. Grounded thinking drives investment in energy-efficient hardware, intelligent cooling systems, and location-aware deployment strategies. Decision-makers consider how operational practices influence sustainability, performance, and reliability, embedding these values into corporate strategy. The culture reinforces that cloud computing is not only about delivering software functionality but also about maintaining harmony with the physical environment and regional regulations. Teams learn to anticipate operational limits, integrating knowledge of energy, thermal, and spatial constraints into the earliest stages of application design. By embracing this philosophy, organizations achieve a balance between technological ambition and practical implementation, fostering a cloud ecosystem that is both adaptive and responsible.

Sustainability as a Design Principle

Cloud architects now integrate sustainability into infrastructure planning, designing systems that optimize energy use and reduce environmental impact. Operators select renewable energy sources and implement efficient thermal management to maintain reliability. Scheduling systems actively assign workloads to minimize energy consumption while meeting performance requirements. Engineers adjust deployment strategies to align with operational constraints and sustainability goals. By making energy efficiency and material limits central to design, teams improve both operational efficiency and environmental responsibility. These practices ensure that infrastructure and software function in harmony with physical and ecological realities.

Resource-Conscious Workload Orchestration

Architects evaluate hardware and facility choices to maximize efficiency, selecting energy-efficient processors and optimized cooling systems. Operators plan site locations considering renewable energy availability and environmental conditions. Engineers embed sustainability into orchestration logic, ensuring workloads execute where resources are most efficient. Teams monitor energy, thermal, and network metrics in real time, adjusting placement proactively. By integrating sustainability into every layer of design and operation, organizations reduce environmental impact without compromising performance. These strategies demonstrate how cloud innovation can advance responsibly while respecting physical constraints.
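To make "where resources are most efficient" concrete, the sketch below uses power usage effectiveness (PUE), the ratio of total facility energy to IT equipment energy, as the efficiency measure. It picks the site with the lowest PUE among those that still meet a latency requirement. The function name, site records, and figures are hypothetical, and PUE is only one of several metrics an operator might use.

```python
def pick_efficient_site(sites, it_energy_kwh, max_latency_ms):
    """Choose the lowest-PUE site that meets the latency requirement.

    sites: dict mapping site name -> {"pue": float, "latency_ms": float}
    Returns (site_name, estimated_facility_energy_kwh), or None if no site qualifies.
    """
    eligible = {
        name: info for name, info in sites.items()
        if info["latency_ms"] <= max_latency_ms
    }
    if not eligible:
        return None
    best = min(eligible, key=lambda name: eligible[name]["pue"])
    # Facility energy = IT energy x PUE (cooling and power-delivery overhead included).
    return best, it_energy_kwh * eligible[best]["pue"]
```

The latency filter runs before the efficiency comparison, so the most efficient site overall is skipped when it cannot meet the performance requirement.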

Sustainability also shapes hardware and facility design over the long term. Alongside efficient processors and cooling, intelligent power distribution reduces resource consumption while maintaining performance, and site selection weighs long-term operational feasibility as well as renewable energy availability and local environmental conditions. Software, infrastructure, and operational practices converge to create a system that respects both material constraints and planetary boundaries. Grounded cloud computing thus exemplifies how technological innovation can be aligned with environmental responsibility, delivering performance without compromising the broader ecosystem.

Embracing a Grounded Future

Cloud computing has transitioned from abstract myths to a reality grounded in physical, operational, and workload constraints. Engineers and architects design infrastructure to respect energy, cooling, spatial, and geographic limitations. Software teams develop applications that actively align with material realities and performance requirements. Organizations now coordinate infrastructure, workloads, and strategy to achieve predictable, efficient, and resilient outcomes. By integrating physical constraints into every layer of planning and operation, teams bridge the gap between virtual elasticity and real-world feasibility. This approach ensures that technological innovation proceeds responsibly, balancing ambition with operational and environmental considerations.

Grounded cloud computing represents a philosophical realignment of how technology, infrastructure, and human decision-making intersect. Engineers consider energy, thermodynamics, geography, and regulatory constraints as primary inputs for design. Software development becomes infrastructure-aware, workload-sensitive, and context-driven. Teams adopt sustainable, efficient, and predictable strategies to align performance with operational and environmental limits. Decision-makers embed responsibility and material awareness into every level of planning. By embracing this philosophy, organizations create cloud ecosystems that are robust, adaptive, and aligned with both physical realities and strategic objectives.