Modern compute systems confront a rising imperative: neither chips nor racks can tolerate the heat generated by escalating workload complexity and density. Engineers once assumed that scaling transistor counts meant proportionally scaling heat mitigation through faster fans and beefier air conditioners, yet that paradigm falters as compute architectures demand tighter packing and higher sustained performance. Air cooling, once ubiquitous, now runs up against physical limits; even advanced liquid-to-chip techniques strain under the concentrated heat loads that accompany artificial intelligence (AI), high-performance computing (HPC), and emerging accelerator arrays. What once seemed like incremental thermal refinement has become a central challenge of infrastructure design, and immersion cooling emerges not as a marginal tweak but as a conceptual departure that submerges entire computing assemblies in dielectric fluids. This shift in thermal strategy shapes both design direction and performance potential at the architectural level.

Compute workloads grow not by increments but by leaps in parallel intensity and heterogeneity, driven by AI training, scientific simulations, and real-time analytics. These applications compel data center and hardware designers to squeeze more compute elements into ever-tighter volumes, pushing integrated circuits and server racks into thermal stress territory where legacy cooling struggles to keep pace. Heat no longer accumulates uniformly; it emerges unevenly across densely packed cores, accelerators, and memory units, challenging simplistic assumptions about airflow and temperature gradients. Conventional cooling strategies also confront an unyielding physical boundary: air’s low heat capacity and limited convective potential make it increasingly difficult to move heat away from its sources in a way that sustains performance and reliability.

The Limits of Air Cooling

Air cooling relies on forced convection to transfer heat from hot components into moving air streams that carry energy toward heat exchangers or vents. Air’s low thermal conductivity and low volumetric heat capacity mean that it warms quickly for every joule it absorbs, so the typical solution involves large fans, ducts, and refrigeration units to keep enough air moving. As server trays grow denser and compute units concentrate thermal load into localized hotspots, the airflow needed to carry that heat away grows rapidly, demanding ever more fan capacity and facility infrastructure just to sustain safe operating conditions. This dependency on airflow introduces inefficiencies and spatial overhead that become untenable in high-density racks, forcing operators to ask whether air’s innate physical limits can ever match emerging thermal loads without radical redesign.
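The gap between air and liquid can be made concrete with the sensible-heat balance Q = ρ · c_p · V̇ · ΔT, solved for the volumetric flow V̇ needed to carry a given load. The sketch below assumes a hypothetical 30 kW rack, a 10 K allowable coolant temperature rise, and approximate room-temperature property values; none of these figures come from a specific deployment.

```python
# Sensible-heat balance: Q = rho * cp * V_dot * dT, rearranged for V_dot.
# Property values are approximate room-temperature figures (illustrative only).
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg*K)
WATER_DENSITY = 997.0    # kg/m^3
WATER_CP = 4186.0        # J/(kg*K)


def required_flow_m3_per_s(heat_w: float, density: float, cp: float, delta_t_k: float) -> float:
    """Volumetric flow needed to carry heat_w while the coolant warms by delta_t_k."""
    return heat_w / (density * cp * delta_t_k)


rack_heat_w = 30_000.0   # hypothetical 30 kW rack
delta_t_k = 10.0         # assumed allowable coolant temperature rise

air_flow = required_flow_m3_per_s(rack_heat_w, AIR_DENSITY, AIR_CP, delta_t_k)
water_flow = required_flow_m3_per_s(rack_heat_w, WATER_DENSITY, WATER_CP, delta_t_k)

print(f"air:   {air_flow:.2f} m^3/s")
print(f"water: {water_flow * 1000:.2f} L/s")
print(f"air needs about {air_flow / water_flow:,.0f}x the volume flow")
```

Under these assumptions the air stream works out to roughly 2.5 m³/s against well under 1 L/s of water, a volume ratio in the thousands. That ratio is the physical root of the fans, ducts, and air handlers the paragraph describes.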

Targeted Heat Extraction

Liquid-to-chip cooling, often realized through cold plates or direct liquid channels over CPUs and GPUs, brings the coolant closer to the primary heat source. Fluids such as water or engineered coolants have far higher thermal conductivity and volumetric heat capacity than air, enabling them to capture heat more effectively at the chip surface and carry it out of the package with less energy overhead. This approach shortens the thermal path compared to air cooling, allowing data centers to push higher rack densities while keeping peak die temperatures under control. Engineers implementing direct liquid systems can exploit the superior heat transfer properties of liquids to mitigate thermal hotspots and improve overall system stability under strenuous workloads.
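One way to see why a liquid captures heat "more effectively at the chip surface" is Newton's law of cooling, Q = h · A · ΔT. The minimal sketch below solves for the wetted area A needed to move a hypothetical 500 W load at an assumed 40 K surface-to-coolant difference. The two heat transfer coefficients are assumed points inside commonly quoted textbook ranges for forced convection, not measurements of any particular heat sink or cold plate.

```python
# Newton's law of cooling, Q = h * A * dT, rearranged for the wetted area A
# needed to move a given heat load at a given surface-to-coolant difference.
# The h values are hypothetical points inside commonly quoted textbook ranges:
# roughly 25-250 W/(m^2*K) for forced-convection gases and roughly
# 100-20,000 W/(m^2*K) for forced-convection liquids.

def required_area_m2(heat_w: float, h_w_per_m2k: float, delta_t_k: float) -> float:
    """Surface area needed so that h * A * delta_t equals heat_w."""
    return heat_w / (h_w_per_m2k * delta_t_k)


heat_w = 500.0      # hypothetical processor package load
delta_t_k = 40.0    # assumed surface-to-coolant temperature difference

area_air = required_area_m2(heat_w, h_w_per_m2k=100.0, delta_t_k=delta_t_k)
area_liquid = required_area_m2(heat_w, h_w_per_m2k=5_000.0, delta_t_k=delta_t_k)

print(f"forced air:    {area_air * 1e4:.0f} cm^2 of surface")
print(f"forced liquid: {area_liquid * 1e4:.0f} cm^2 of surface")
```

The large finned heat sinks on air-cooled processors exist to supply that extra area. A cold plate can work with far less because its coefficient h is much higher.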

Yet even liquid-to-chip systems have finite headroom. As processing elements cluster more tightly and their interconnects carry ever more traffic, heat production can outpace what single-phase coolant loops and cold plates can efficiently carry away. Liquid flow must be maintained at sufficient pressure and velocity to prevent stagnation zones that lead to localized warming, requiring external pumps and distribution manifolds that complicate system design. Moreover, because liquid-to-chip cooling extracts heat only where cold plates make contact rather than enveloping the full assembly, areas beyond the primary dies, such as memory modules, voltage regulators, and power delivery circuits, often receive uneven cooling. This leaves designers balancing localized effectiveness against overall system thermal coherence.

Power Density and Its Implications

Power density, the rate at which electrical power is dissipated as heat per unit volume (or, at the chip level, per unit area), governs the nature of the thermal challenge facing compute designers. As packaging techniques place transistors more densely on silicon, and systems aggregate more sockets and accelerators per rack, the heat generated per unit volume escalates sharply. These concentrated heat sources defy the assumptions embedded in legacy cooling designs, which anticipated broader heat distribution and lower heat flux. Because the temperature rise across any thermal path equals the heat flowing through it multiplied by that path’s thermal resistance, elevated power density drives larger temperature differences across the same material interfaces and cooling paths, making uniform temperature fields harder to maintain and forcing designers to accommodate this fundamental shift.
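The two views of power density, and the relation ΔT = P · R_th that links them to temperature, reduce to simple arithmetic. The sketch below uses hypothetical figures chosen only for illustration: a 700 W device on an 8 cm² die, a 40 kW rack in a 0.6 × 1.2 × 2.0 m envelope, and an assumed 0.05 K/W junction-to-coolant path.

```python
# Two views of power density, plus the temperature rise it produces across a path.

def heat_flux_w_per_cm2(power_w: float, area_cm2: float) -> float:
    """Areal power density at the die or package surface."""
    return power_w / area_cm2


def volumetric_density_kw_per_m3(power_kw: float, volume_m3: float) -> float:
    """Power dissipated per unit volume, e.g. of a rack."""
    return power_kw / volume_m3


def temperature_rise_k(power_w: float, resistance_k_per_w: float) -> float:
    """dT = P * R_th for heat flowing through a path of thermal resistance R_th."""
    return power_w * resistance_k_per_w


# Hypothetical figures, chosen only to illustrate the arithmetic.
print(f"die heat flux: {heat_flux_w_per_cm2(700.0, 8.0):.1f} W/cm^2")
print(f"rack density:  {volumetric_density_kw_per_m3(40.0, 0.6 * 1.2 * 2.0):.1f} kW/m^3")

path_r = 0.05  # K/W, assumed resistance from junction to coolant
for power_w in (350.0, 700.0):
    print(f"{power_w:.0f} W -> {temperature_rise_k(power_w, path_r):.1f} K rise")
```

Doubling the power through an unchanged path doubles the temperature rise, which is why rising density strains the same interfaces that were adequate at lower loads.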

Traditional air cooling and targeted liquid transfer both depend on the temperature difference between heat source and sink. As power density rises, the same bounded difference, between the junction temperature limit and the coolant supply temperature, must move more heat, and airflow and liquid convection reach diminishing returns. Air cooling’s effectiveness drops significantly because air warms rapidly as it passes over successive high-density components, while directed liquid cooling remains constrained by the capacity of fluid circuits and heat exchange hardware. This makes stable thermal equilibrium without throttling or component distress increasingly difficult to achieve under modern compute loads.
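The preheating effect can be sketched by walking an air stream past components in series, with each one raising the stream temperature by q / (ṁ · c_p). This minimal model assumes the air fully mixes between components. The server layout, the 0.05 m³/s flow, and the component loads are all hypothetical.

```python
# Air picks up heat from each component it passes, so downstream parts see warmer air.
# Assumes the air fully mixes across the duct between components (a simplification).
AIR_DENSITY = 1.2   # kg/m^3, approximate
AIR_CP = 1005.0     # J/(kg*K), approximate


def local_inlet_temps(inlet_c: float, flow_m3_s: float, component_heat_w: list[float]):
    """Return the air temperature arriving at each component and the exhaust temperature."""
    capacity_rate = AIR_DENSITY * flow_m3_s * AIR_CP   # W/K carried by the stream
    temps = []
    t = inlet_c
    for q in component_heat_w:
        temps.append(t)              # air temperature this component "sees"
        t += q / capacity_rate       # stream warms by q / (m_dot * cp)
    return temps, t


# Hypothetical server: 0.05 m^3/s of 25 C inlet air over four parts in series.
seen, exhaust = local_inlet_temps(25.0, 0.05, [300.0, 300.0, 200.0, 150.0])
for i, t in enumerate(seen):
    print(f"component {i}: inlet air {t:.1f} C")
print(f"exhaust: {exhaust:.1f} C")
```

With these assumptions the last component breathes air roughly 13 K warmer than the first, so it has that much less temperature difference available to shed its own heat.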

Thermal Gradients – The Hidden Enemy

Thermal gradients are differences in temperature between adjacent regions within compute hardware. These disparities strain silicon, memory stacks, and power delivery networks because materials expand and contract at different rates as temperature changes, creating mechanical and operational stress. In extreme cases, the hotspots that sustain persistent gradients trigger thermal throttling, in which processing cores automatically reduce clock rates to avoid damage, degrading performance just when demand peaks. Designers striving to maintain tight thermal control find that even small gradients, repeated across many heating and cooling cycles, accumulate into reliability issues and shortened component lifespans.
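The mechanical side of this argument is the expansion-mismatch displacement δ = |α_a − α_b| · ΔT · L, where L is the distance from the package's neutral point. The same arithmetic applies whether the mismatch comes from two materials undergoing one temperature swing or from one material held at different temperatures across a gradient. The coefficients below are approximate textbook values, and the 20 mm corner distance and temperature swings are hypothetical.

```python
# Displacement a joint must absorb when two bonded materials expand differently:
# delta = |alpha_a - alpha_b| * dT * L, with L the distance from the neutral point.
# CTE values are approximate room-temperature figures and vary with grade and direction.
CTE_PPM_PER_K = {
    "silicon": 2.6,
    "copper": 17.0,
    "fr4_in_plane": 16.0,
}


def mismatch_displacement_um(mat_a: str, mat_b: str, delta_t_k: float, dnp_mm: float) -> float:
    d_alpha = abs(CTE_PPM_PER_K[mat_a] - CTE_PPM_PER_K[mat_b]) * 1e-6   # 1/K
    return d_alpha * delta_t_k * dnp_mm * 1000.0                         # mm -> um


# Hypothetical: a corner joint 20 mm from the package center.
for swing_k in (60.0, 30.0):
    d = mismatch_displacement_um("silicon", "fr4_in_plane", swing_k, 20.0)
    print(f"{swing_k:.0f} K swing -> {d:.1f} um relative displacement")
```

Halving the temperature swing halves the displacement each joint must absorb per cycle. Real packages spread this strain through underfill and intermediate substrates, so the figures only indicate scale.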

Unmanaged gradients also compromise performance consistency across multi-chip modules and accelerator fabrics. Uneven cooling forces firmware and schedulers to balance workloads across components running at different temperatures, introducing inefficiencies that cascade into higher latency and reduced throughput. These effects are particularly acute in HPC and AI contexts, where sustained high performance confers a competitive advantage and any thermal limitation translates directly into slower execution or underused silicon.

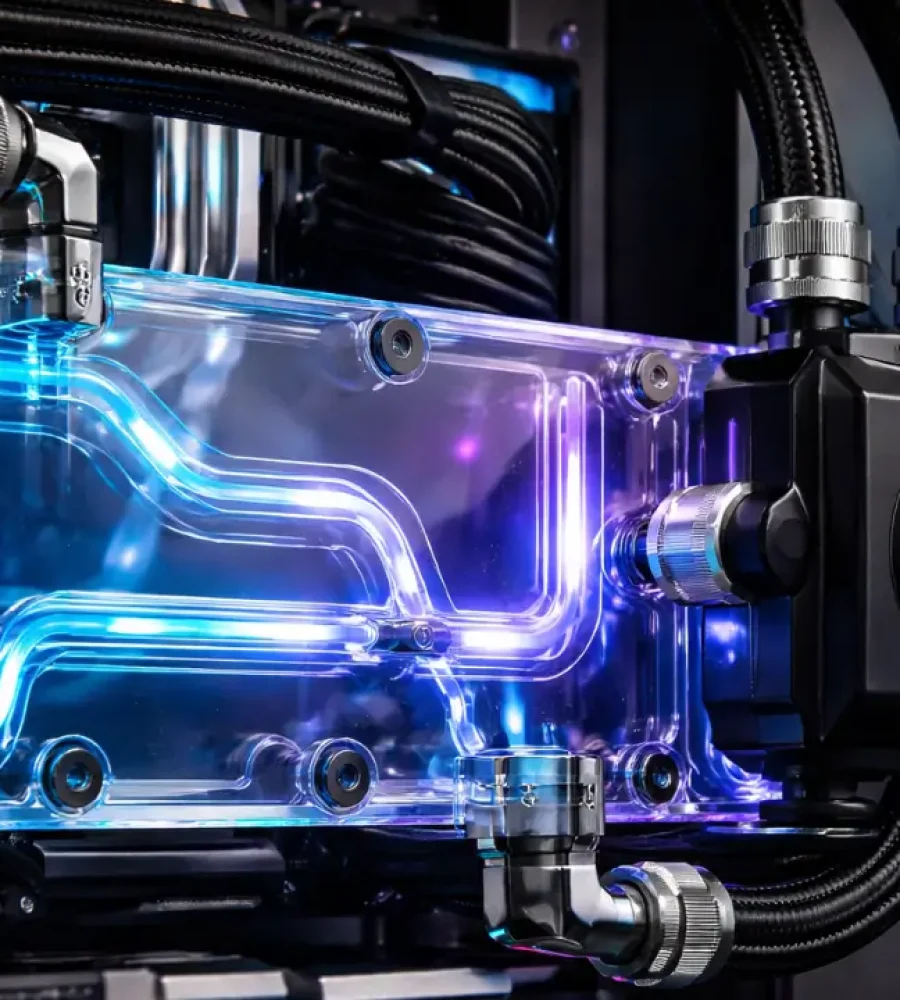

Immersion Cooling – A Paradigm Shift

Immersion cooling abandons the premise that heat must travel through air corridors or narrowly defined cold plates before reaching a cooling medium. Instead, it submerges entire servers or component assemblies in electrically non-conductive dielectric fluids that directly contact every exposed heat-producing surface. This approach removes many of the external thermal interfaces that typically impede heat transfer, enabling convection to occur across processors, memory modules, and power circuitry alike. Because dielectric fluids possess far higher heat capacity than air and more stable thermal behavior, they absorb and redistribute energy more evenly within the enclosure. Designers therefore shift from channeling heat away from isolated hotspots toward maintaining a balanced thermal environment across the entire hardware assembly.

Rethinking Thermal Pathways

Traditional cooling methods rely on layered heat transfer paths that include heat spreaders, thermal interface materials, airflow channels, and remote exchangers, each contributing incremental thermal resistance between silicon and ambient rejection systems. Immersion cooling reduces several external thermal boundaries by removing the air interface and eliminating the need for discrete heat sinks or cold plates at the board level. The dielectric fluid establishes direct convective contact with exposed component surfaces, thereby minimizing resistance associated with external surface interfaces. Internal package interfaces such as die-to-spreader bonds and multilayer conduction pathways within the semiconductor stack remain physically present and continue to influence junction temperatures. Immersion therefore does not eliminate intrinsic package-level resistance, but it significantly reduces external thermal impedance beyond the chip package boundary. This distinction reframes immersion as a reduction of external thermal barriers rather than a complete removal of all intermediary interfaces.
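The distinction between package-internal and external resistance can be sketched as a series resistance network, T_junction = T_coolant + P · ΣR_i. Every resistance value below is a hypothetical placeholder rather than measured data. Both cases also use the same coolant temperature so that only the path differs.

```python
# Series thermal-resistance model: T_junction = T_coolant + P * sum(R_i).
# All resistance values are hypothetical placeholders, not measured data.

AIR_COOLED_PATH = {
    "junction_to_case": 0.05,      # inside the package: present in both cases
    "tim_to_heat_sink": 0.03,
    "heat_sink_conduction": 0.02,
    "sink_to_air": 0.06,
}

IMMERSION_PATH = {
    "junction_to_case": 0.05,      # unchanged: immersion does not touch package internals
    "case_to_fluid": 0.07,         # assumed convective resistance at the wetted surface
}


def junction_temp_c(coolant_c: float, power_w: float, path: dict[str, float]) -> float:
    return coolant_c + power_w * sum(path.values())


power_w = 400.0
coolant_c = 30.0  # same coolant temperature for both, to isolate the path effect
for name, path in (("air", AIR_COOLED_PATH), ("immersion", IMMERSION_PATH)):
    internal_share = path["junction_to_case"] / sum(path.values())
    print(f"{name:9s} Tj = {junction_temp_c(coolant_c, power_w, path):.1f} C, "
          f"package-internal share of R = {internal_share:.0%}")
```

Removing external stages lowers the total. The junction-to-case term stays fixed, however, and so becomes a larger share of what remains, which matches the paragraph's point that package-level resistance is untouched.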

From Surface to Surround – How Immersion Changes Heat Flow

Air and liquid-to-chip systems primarily target surfaces that interface directly with cooling hardware, leaving peripheral components dependent on indirect airflow or secondary conduction paths. Immersion surrounds each element with fluid, enabling convective currents to form naturally around every exposed surface and reducing the likelihood of localized overheating. This all-around cooling effect distributes thermal load more evenly and diminishes the formation of steep gradients between neighboring components. Heat disperses across the fluid volume before exiting through a heat exchanger, preventing concentration at single extraction points. The result is a thermally coherent environment in which hotspots lose prominence because no component remains isolated from the cooling medium.

Immersion systems rely on fluid dynamics rather than forced airflow as the primary transport mechanism for heat. In single-phase immersion, pumps circulate fluid to sustain uniform temperature distribution, while two-phase designs exploit phase change properties that allow the coolant to absorb energy during vaporization and release it upon condensation. Such mechanisms enable efficient heat transport without the acoustic and mechanical complexity of high-speed fans. Designers can calibrate fluid properties and flow patterns to match the thermal signature of specific hardware configurations, integrating cooling into the computational architecture itself. This symbiosis between fluid behavior and electronic function marks a decisive shift in thermal engineering philosophy.

Design Philosophy – Cooling as Part of Architecture

Air-cooled systems require spacing allowances for airflow channels, ducting, and fan assemblies, which limit how densely hardware can be arranged. Immersion cooling removes these airflow dependencies, permitting closer component placement without sacrificing thermal performance. Engineers can design boards and chassis with reduced spacing between modules, as the fluid envelops each surface and limits heat buildup even in confined geometries. This flexibility enables higher compute density within the same physical footprint while maintaining stable operating conditions. Cooling therefore transitions from an external constraint to an intrinsic design parameter that shapes hardware architecture.

Conventional racks align components to optimize front-to-back airflow, often constraining innovation in board orientation and cable routing. Immersion systems decouple layout from airflow considerations, granting engineers greater freedom in positioning accelerators, memory banks, and power distribution units. Hardware designers can experiment with novel stacking methods or vertical module arrangements because cooling efficacy no longer depends on maintaining unobstructed front-to-back air streams. This architectural liberation encourages exploration of compact, modular compute pods that align more naturally with workload demands. Thermal management thus evolves from a limiting factor into a catalyst for creative system configuration.

Operational Simplicity – Rethinking Maintenance

Fewer Moving Parts

Air-cooled infrastructures depend heavily on fans and air handling units, components that introduce mechanical wear and acoustic considerations into the maintenance equation. Immersion cooling reduces reliance on such moving parts within the server enclosure, which simplifies the mechanical landscape and reduces vibration sources that can affect sensitive electronics. Pumps and heat exchangers remain essential, yet they operate within a closed fluid loop rather than across dispersed airflow networks. The reduced mechanical complexity within each compute node streamlines inspection and replacement processes. Maintenance shifts from managing airflow obstructions to monitoring fluid integrity and circulation pathways.

Air systems continually circulate external air through hardware, exposing components to dust accumulation and particulate interference that degrade heat sink efficiency over time. Immersion enclosures isolate hardware from ambient contaminants, as sealed tanks contain dielectric fluid that does not carry dust or moisture in the manner of external airflow. This containment preserves surface cleanliness and maintains consistent thermal contact between fluid and components. Engineers can focus on fluid quality control rather than debris removal, altering the rhythm of routine servicing. Operational attention pivots toward chemical stability and heat exchanger performance rather than air filtration logistics.

Compatibility with High-Demand Workloads

Artificial intelligence accelerators and HPC clusters operate under sustained, intensive computational loads that produce concentrated thermal output across densely packed silicon. Immersion cooling accommodates these conditions by surrounding accelerators and interconnect fabrics with a medium capable of absorbing rapid thermal flux without forming steep gradients. The uniform cooling envelope supports consistent clock speeds and reduces the need for throttling during peak workload cycles. Designers integrating GPUs, tensor cores, and advanced memory subsystems can maintain performance stability because the fluid moderates temperature fluctuations across interconnected modules. Immersion thereby aligns with the architectural realities of contemporary compute platforms that prioritize parallel intensity and sustained throughput.

Sustained Performance Potential

High-demand workloads often stress both compute cores and supporting components such as network adapters and power regulators, which collectively generate distributed heat. Immersion cooling addresses this holistic thermal profile by enveloping not only primary processors but also secondary electronics in the same controlled environment. This comprehensive approach ensures that auxiliary systems do not become weak links in the thermal chain. Stable temperature fields across the entire server reduce variability in execution behavior and contribute to predictable system response under continuous load. Performance potential thus becomes constrained more by silicon design than by cooling limitations.

Modern compute platforms increasingly consolidate heterogeneous processors within compact physical boundaries, integrating CPUs, GPUs, high-bandwidth memory stacks, and specialized accelerators into unified assemblies. This architectural consolidation increases inter-component communication efficiency but simultaneously intensifies localized heat generation because multiple high-activity elements operate in close proximity. Designers no longer distribute heat across spacious board layouts; instead, they cluster processing units tightly to reduce latency and signal loss, which compounds thermal concentration. Electrical energy consumed during sustained workloads converts directly into heat at silicon junctions, and the surrounding materials must immediately conduct that energy away to preserve stability. As packaging techniques evolve toward three-dimensional stacking and chiplet integration, heat escapes through increasingly constrained pathways that traditional cooling strategies struggle to accommodate. Thermal management therefore becomes inseparable from semiconductor packaging innovation rather than a peripheral afterthought.

Air cooling, designed for earlier generations of spatially distributed components, encounters structural inefficiencies when applied to such compact assemblies. Engineers attempt to increase airflow velocity to compensate for rising heat concentration, yet higher velocities raise pressure drop and fan power steeply, and flow around obstructions and cabling becomes harder to predict, creating microenvironments where heat accumulates unexpectedly. Faster fans also increase vibration exposure across boards, which can affect connector integrity and long-term reliability. Physical duct expansion competes with valuable compute real estate, forcing compromises between airflow optimization and hardware density. This tension illustrates why air-based convection struggles to keep pace with the trajectory of compute miniaturization.
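Why faster fans become expensive so quickly follows from the fan affinity laws. For a fixed fan operating on an unchanged system curve, flow scales with speed, pressure with speed squared, and shaft power with speed cubed. The sketch assumes that, at a fixed allowable air temperature rise, required flow scales in proportion to heat load; the load multipliers are illustrative.

```python
# Fan affinity laws for a fixed fan and an unchanged system (same ducts, same obstructions):
# flow scales with speed, pressure with speed^2, and shaft power with speed^3.

def affinity_scaling(speed_ratio: float) -> dict[str, float]:
    return {
        "flow": speed_ratio,
        "pressure": speed_ratio ** 2,
        "power": speed_ratio ** 3,
    }


# At a fixed allowable air temperature rise, required flow scales with heat load,
# so 30% more heat means roughly 30% more flow and therefore 30% more fan speed.
for heat_increase in (1.1, 1.3, 1.5):
    s = affinity_scaling(heat_increase)
    print(f"{heat_increase:.1f}x heat -> {s['pressure']:.2f}x pressure, {s['power']:.2f}x fan power")
```

A 30% increase in heat load therefore costs roughly 2.2 times the fan power under these assumptions, before any added ducting is considered.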

Material Interfaces and Thermal Resistance

Every cooling strategy must overcome thermal resistance that arises at the interface between silicon and its surrounding materials. Heat spreads from transistor junctions into integrated heat spreaders, then into interface compounds, and finally into the external cooling medium, creating cumulative resistance along the path. Each boundary introduces microscopic imperfections that impede conduction and elevate localized temperatures. Engineers refine interface materials and surface finishes, yet no configuration eliminates resistance entirely because contact surfaces remain imperfect at microscopic scales. Liquid-to-chip cooling shortens this path but does not remove the layered structure that separates the heat source from the cooling circuit. Immersion cooling alters the equation by removing several of the external intermediary steps, though not those inside the package, thereby reducing the number of resistive interfaces and improving direct convective exchange.
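Each layer along that path contributes a one-dimensional conduction resistance R = t / (k · A). The sketch compares a thin interface layer with a much thicker copper spreader. The thicknesses, conductivities, and the 8 cm² footprint are illustrative assumptions. Spreading and the contact resistance at each boundary, which the paragraph emphasizes, are not modeled and would add to these figures.

```python
# One-dimensional conduction resistance of a flat layer: R = t / (k * A).
# Ignores spreading and the contact resistance at each boundary, which add to these figures.
# Thicknesses, conductivities, and area are illustrative assumptions.

def slab_resistance_k_per_w(thickness_m: float, conductivity_w_mk: float, area_m2: float) -> float:
    return thickness_m / (conductivity_w_mk * area_m2)


area_m2 = 8e-4  # 8 cm^2 footprint, hypothetical
layers = {
    "thermal interface material (50 um, k=5)": (50e-6, 5.0),
    "copper spreader (2 mm, k=390)": (2e-3, 390.0),
}

power_w = 700.0
for name, (t, k) in layers.items():
    r = slab_resistance_k_per_w(t, k, area_m2)
    print(f"{name}: R = {r:.4f} K/W, drop at {power_w:.0f} W = {power_w * r:.1f} K")
```

Although the interface layer is forty times thinner than the copper, it costs more temperature under these assumptions because its conductivity is far lower.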

Thermal resistance becomes particularly problematic when multiple high-power devices share a confined substrate. Concentrated heat sources can saturate localized regions of a heat spreader, reducing its effectiveness and creating asymmetric temperature distribution. Such asymmetry forces control firmware to moderate performance unevenly across cores, undermining architectural balance. Airflow cannot directly address microscopic resistance at material boundaries because it interacts only with external surfaces. Even direct liquid cooling remains dependent on mechanical fastening and surface uniformity to achieve optimal thermal contact. Immersion systems bypass some of these constraints by surrounding heat-generating elements with fluid that conforms naturally to surface irregularities, enhancing contact quality.

The Escalation of Accelerator-Centric Architectures

Contemporary compute development emphasizes accelerator-centric platforms in which GPUs, tensor processors, and custom AI silicon dominate the power envelope. These accelerators operate under sustained high utilization, producing continuous thermal output rather than the intermittent spikes associated with traditional workloads. Thermal management must therefore accommodate persistent heat generation without relying on idle intervals for recovery. Air cooling systems historically assumed fluctuating loads, yet accelerator clusters maintain a steady thermal intensity that overwhelms airflow-based dissipation strategies. Liquid-to-chip designs improve direct extraction from accelerator dies, yet auxiliary circuitry still accumulates heat under prolonged stress. Immersion cooling aligns more naturally with accelerator-dense boards because it equalizes temperature across both primary and secondary components simultaneously.
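The difference between bursty and sustained loads can be illustrated with a single lumped thermal mass, C · dT/dt = P(t) − (T − T_ambient) / R, integrated with explicit Euler steps. This is a minimal sketch. R, C, and both load profiles are hypothetical, giving a time constant of R · C = 5 s.

```python
# Lumped thermal model: C * dT/dt = P(t) - (T - T_ambient) / R, integrated with explicit Euler.
# R, C, and the load profiles are hypothetical; tau = R * C = 5 s here.

def simulate(power_fn, r_k_per_w, c_j_per_k, ambient_c, duration_s, dt_s=0.01):
    """Return (final temperature, peak temperature) for a time-varying power input."""
    temp_c = ambient_c
    peak_c = temp_c
    for i in range(round(duration_s / dt_s)):
        p = power_fn(i * dt_s)
        d_temp = (p - (temp_c - ambient_c) / r_k_per_w) / c_j_per_k
        temp_c += d_temp * dt_s
        peak_c = max(peak_c, temp_c)
    return temp_c, peak_c


R, C, AMBIENT = 0.1, 50.0, 25.0


def sustained(t):
    return 300.0                                   # accelerator-style constant load


def bursty(t):
    return 300.0 if (t % 2.0) < 1.0 else 0.0       # same peak power, 50% duty cycle


for name, fn in (("sustained", sustained), ("bursty", bursty)):
    final_c, peak_c = simulate(fn, R, C, AMBIENT, duration_s=60.0)
    print(f"{name:9s} peak {peak_c:.1f} C")
```

With the same peak power, the bursty profile never approaches the full P · R rise because idle intervals let the mass cool. The sustained load settles at that full rise, which is the regime the paragraph describes for accelerators.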

Interconnect fabrics that bind accelerators together also contribute to thermal complexity. High-speed links generate their own heat while transmitting massive volumes of data between processors. These communication pathways often reside near compute cores, compounding localized thermal concentration. Designers cannot isolate link heat from core heat because both coexist within compact substrates. Air-based convection struggles to penetrate narrow interconnect corridors effectively. Immersion fluid, by contrast, flows around connectors and transceivers with minimal obstruction, enabling more uniform dissipation across the communication layer.

Thermal Stability and Clock Behavior

Processing frequency depends heavily on temperature stability because semiconductor switching characteristics shift with thermal variation and localized heat accumulation. Elevated temperatures increase electrical resistance within conductive pathways, which can narrow operational margins and prompt firmware-level safeguards. System firmware may reduce clock speeds when thermal thresholds are approached, thereby protecting silicon integrity under sustained load conditions. Immersion cooling contributes to more uniform external temperature conditions across the assembly, which can reduce abrupt thermal fluctuations that trigger throttling events. Clock behavior, however, remains governed by silicon design, voltage regulation strategy, firmware policy, and workload characteristics independent of the cooling medium. Immersion therefore supports thermal stability that can enable sustained performance, but it does not inherently guarantee higher clock speeds or architectural performance gains.
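Threshold-based throttling of the kind the paragraph mentions can be sketched as a governor with hysteresis. It steps the clock down when a sensor reading reaches an upper threshold and steps it back up only after the reading falls to a lower one. The class, frequency steps, and thresholds below are hypothetical; real processors use vendor-specific, often hardware-managed, policies.

```python
from dataclasses import dataclass

# Hypothetical threshold governor with hysteresis: step the clock down when the
# sensor reaches throttle_at_c, step it back up only after cooling to resume_at_c.
# Real processors use vendor-specific, often hardware-managed, policies.


@dataclass
class ThermalGovernor:
    freqs_mhz: tuple[int, ...] = (1200, 1500, 1800, 2100)
    throttle_at_c: float = 90.0
    resume_at_c: float = 80.0
    level: int = 3                       # index into freqs_mhz; start at the top clock

    def step(self, temp_c: float) -> int:
        if temp_c >= self.throttle_at_c and self.level > 0:
            self.level -= 1              # too hot: drop one frequency step
        elif temp_c <= self.resume_at_c and self.level < len(self.freqs_mhz) - 1:
            self.level += 1              # cooled enough: recover one step
        return self.freqs_mhz[self.level]  # between thresholds: hold


gov = ThermalGovernor()
readings = [85, 92, 93, 88, 82, 79, 78]
clocks = [gov.step(t) for t in readings]
print(clocks)  # [2100, 1800, 1500, 1500, 1500, 1800, 2100]
```

A cooling environment with smaller temperature swings crosses the upper threshold less often. That is the sense in which more uniform temperatures can mean fewer throttling events, without changing the silicon's own clock limits.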

Stable temperature fields also improve predictability in multi-processor clusters. Workload schedulers rely on consistent performance characteristics across nodes to balance parallel execution efficiently. Uneven thermal environments create subtle disparities that complicate scheduling logic and introduce variability in task completion times. Immersion systems reduce these disparities by minimizing localized temperature divergence within each server. Engineers therefore achieve greater uniformity across cluster nodes, enhancing computational symmetry without modifying silicon architecture. Cooling design thus indirectly influences distributed computing efficiency through its impact on thermal consistency.
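The scheduling point can be illustrated with a bulk-synchronous step, in which every node must finish before the next step begins, so one slow node sets the pace for all. The node counts and relative clock speeds below are hypothetical.

```python
# Bulk-synchronous step: every node must finish before the next step starts,
# so the step time is set by the slowest node. Speeds are hypothetical relative clocks.

def step_time(work_per_node: float, node_speeds: list[float]) -> float:
    return max(work_per_node / s for s in node_speeds)


def idle_fraction(work_per_node: float, node_speeds: list[float]) -> float:
    """Share of total node-time spent waiting at the barrier."""
    t = step_time(work_per_node, node_speeds)
    busy = sum(work_per_node / s for s in node_speeds)
    return 1.0 - busy / (t * len(node_speeds))


one_hot_node = [1.0] * 7 + [0.8]   # seven nodes at full clock, one throttled to 80%
uniform_slower = [0.9] * 8         # all eight nodes uniformly at 90%

for name, speeds in (("one throttled node", one_hot_node), ("uniform 90%", uniform_slower)):
    print(f"{name:18s} step {step_time(1.0, speeds):.3f}, idle {idle_fraction(1.0, speeds):.1%}")
```

In this example the uniformly slower cluster finishes each step sooner than the mostly faster one with a single straggler, and it wastes no time waiting at the barrier.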

Acoustic and Mechanical Considerations

Air-cooled systems require substantial fan arrays to maintain airflow across densely packed racks, and high rotational speeds generate both acoustic output and mechanical vibration within server enclosures. Persistent vibration can influence connector seating and long-term structural stability in sensitive electronic assemblies. Immersion cooling removes the need for high-speed internal server fans within submerged compute nodes, thereby reducing airflow-induced vibration at the board level. External pumps and heat exchangers remain part of immersion architectures and introduce their own mechanical dynamics, although these components typically operate outside the immediate electronics enclosure. This spatial separation alters how vibration interacts with compute hardware rather than eliminating mechanical motion entirely. Immersion therefore reduces vibration exposure within the server chassis itself while still relying on external fluid circulation systems.

Noise considerations extend beyond comfort because acoustic energy reflects underlying mechanical strain. Elevated acoustic output signals increased airflow resistance and mechanical effort, which correlate with higher energy consumption. Immersion tanks operate without reliance on internal air turbulence, thereby shifting thermal transport to fluid convection that produces minimal acoustic disturbance. External heat exchangers and pumps remain operational, yet they typically reside outside direct proximity to electronic boards. This redistribution of mechanical components changes the stress profile within the compute enclosure itself. Cooling strategy therefore shapes not only thermal conditions but also mechanical dynamics inside modern compute systems.

Energy Pathways and Heat Rejection

Every cooling method must ultimately reject captured heat into a secondary environment, whether through ambient air exchange or liquid-based heat exchangers. Air systems expel warm air into controlled facility spaces where additional infrastructure removes residual energy. Liquid-to-chip approaches transfer heat from cold plates into facility water loops, introducing complexity in plumbing and leak mitigation. Immersion cooling channels absorbed heat through sealed dielectric circulation toward external exchangers without exposing electronics to conductive fluids. Designers can integrate immersion tanks with closed-loop secondary systems that isolate compute hardware from facility-level fluctuations. This layered separation refines control over heat rejection pathways and enhances thermal predictability.
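The coupling between an immersion tank's dielectric loop and a facility water loop can be sketched with the standard effectiveness-NTU method for a counterflow heat exchanger. The sketch assumes constant fluid properties. The flow rates, heat capacities, UA value, and inlet temperatures are hypothetical.

```python
import math

# Counterflow heat exchanger, effectiveness-NTU method (constant properties assumed).
# Hot side: the tank's primary dielectric loop. Cold side: facility water.
# Flow rates, heat capacities, and UA are hypothetical.


def counterflow_effectiveness(ntu: float, cr: float) -> float:
    if math.isclose(cr, 1.0):
        return ntu / (1.0 + ntu)
    e = math.exp(-ntu * (1.0 - cr))
    return (1.0 - e) / (1.0 - cr * e)


def exchanger_duty_w(c_hot: float, c_cold: float, ua: float, t_hot_in: float, t_cold_in: float) -> float:
    """Heat transferred from the primary loop to the facility loop, in watts."""
    c_min, c_max = min(c_hot, c_cold), max(c_hot, c_cold)
    eff = counterflow_effectiveness(ua / c_min, c_min / c_max)
    return eff * c_min * (t_hot_in - t_cold_in)


c_dielectric = 2.0 * 2000.0   # kg/s * J/(kg*K): assumed primary-loop capacity rate, W/K
c_water = 0.5 * 4186.0        # assumed facility-loop capacity rate, W/K
q_w = exchanger_duty_w(c_dielectric, c_water, ua=6000.0, t_hot_in=45.0, t_cold_in=30.0)

print(f"duty: {q_w / 1000:.1f} kW")
print(f"dielectric returns to tank at {45.0 - q_w / c_dielectric:.1f} C")
print(f"facility water leaves at {30.0 + q_w / c_water:.1f} C")
```

The facility water never touches the electronics. Only the exchanger couples the two loops, which is the isolation the paragraph attributes to closed-loop secondary systems.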

Thermal rejection efficiency influences architectural scalability. When cooling systems struggle to expel accumulated heat effectively, designers must constrain compute density to prevent thermal saturation. Immersion systems distribute heat evenly within fluid before transferring it outward, reducing the risk of concentrated rejection points that overwhelm facility interfaces. This balanced transfer process supports incremental scaling of compute modules without redesigning airflow corridors. Engineers can replicate immersion units modularly while maintaining stable thermal rejection characteristics. The cooling framework therefore evolves alongside compute expansion rather than constraining it.

Reliability Over Lifecycle

Electronic reliability depends heavily on consistent thermal conditions across operational lifecycles. Repeated exposure to steep thermal cycles accelerates material fatigue and solder joint degradation. Air cooling systems often experience broader temperature oscillations due to variable airflow patterns and ambient fluctuations. Liquid-to-chip designs moderate primary die temperatures yet may not fully stabilize surrounding components. Immersion cooling fosters more uniform temperature behavior, which reduces differential expansion across materials and promotes longevity. Engineers who prioritize lifecycle stability increasingly evaluate cooling strategies as determinants of durability rather than mere performance enablers.
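The link between smaller temperature swings and longer life is often expressed with a simplified Coffin-Manson relation, in which cycles to failure scale as ΔT raised to the power −n. In the sketch below, the exponent n = 2 is an assumption, since the true value depends on material and failure mode. Fuller models such as Norris-Landzberg add cycling-frequency and peak-temperature terms that this sketch omits.

```python
# Simplified Coffin-Manson scaling for thermal-cycling fatigue: N_f is proportional to dT^(-n).
# The exponent n depends on material and failure mode; n = 2.0 here is an assumption.
# Fuller models (e.g. Norris-Landzberg) add cycling-frequency and peak-temperature terms.

def relative_cycles_to_failure(delta_t_ref_k: float, delta_t_k: float, exponent: float = 2.0) -> float:
    """Cycles survived at delta_t_k, relative to cycles survived at delta_t_ref_k."""
    return (delta_t_ref_k / delta_t_k) ** exponent


for swing in (40.0, 30.0, 20.0):
    print(f"{swing:.0f} K swings: {relative_cycles_to_failure(40.0, swing):.2f}x the cycles of 40 K swings")
```

Under this assumed exponent, halving the swing from 40 K to 20 K quadruples the number of cycles a joint survives.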

Long-term maintenance considerations also intersect with thermal reliability. Dust accumulation in air-cooled systems degrades heat sink effectiveness and requires periodic cleaning to restore efficiency. Immersion tanks isolate electronics from airborne contaminants, preserving surface cleanliness and maintaining consistent thermal conductivity over time. Fluid quality management replaces dust mitigation as the primary maintenance concern, shifting operational focus toward chemical stability and filtration. This transition alters lifecycle planning and component servicing protocols. Cooling choice therefore reshapes reliability management strategies across the hardware lifespan.

Two-Phase Immersion and Phase-Change Dynamics

Two-phase immersion cooling introduces a thermodynamic mechanism that differs fundamentally from both air convection and single-phase liquid circulation. Dielectric fluid in these systems absorbs heat from component surfaces until it reaches its boiling point, at which stage it transitions into vapor directly at the heat interface. Vapor bubbles rise naturally away from the component, carrying latent heat upward where a condenser surface returns the vapor to liquid form. This cyclical phase transition allows heat absorption without requiring large temperature differentials between the component and the cooling medium. Because the energy transfer leverages phase change rather than temperature rise alone, heat removal occurs with remarkable thermal stability across the immersed assembly. Designers therefore achieve a self-regulating heat transfer process that adapts dynamically to workload intensity without introducing abrupt temperature spikes.
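The advantage of latent heat can be quantified by comparing the mass flow each regime needs for the same load. A single-phase fluid requires ṁ = Q / (c_p · ΔT), while an evaporating fluid requires ṁ = Q / h_fg and stays near its saturation temperature. The fluid properties below are assumed round values for illustration, not data for any specific product, and the 10 kW load is hypothetical.

```python
# Mass flow needed to absorb a heat load:
#   single-phase (sensible): m_dot = Q / (cp * dT)
#   two-phase (latent):      m_dot = Q / h_fg   (fluid stays near its saturation temperature)
# Fluid properties are assumed round values for illustration, not data for a specific product.

def sensible_mass_flow_kg_s(heat_w: float, cp_j_kgk: float, delta_t_k: float) -> float:
    return heat_w / (cp_j_kgk * delta_t_k)


def latent_mass_flow_kg_s(heat_w: float, h_fg_j_kg: float) -> float:
    return heat_w / h_fg_j_kg


heat_w = 10_000.0                                   # hypothetical 10 kW of components
single = sensible_mass_flow_kg_s(heat_w, cp_j_kgk=2000.0, delta_t_k=10.0)
two_phase = latent_mass_flow_kg_s(heat_w, h_fg_j_kg=100_000.0)

print(f"single-phase circulation: {single:.2f} kg/s with a 10 K rise")
print(f"two-phase evaporation:    {two_phase:.2f} kg/s, condensed and returned")
```

With these assumed values, each kilogram that evaporates absorbs five times the energy of a kilogram warmed by 10 K. It does so without the bulk fluid temperature rising.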

Phase-change behavior also modifies how thermal gradients propagate across densely packed electronics. Instead of forcing energy through rigid conduction layers toward a remote cold plate, two-phase systems dissipate heat locally as vaporization begins at the hottest points first. This selective boiling mechanism inherently targets hotspots without requiring active sensor-based redistribution of cooling capacity. Vapor formation equalizes temperature differences by accelerating heat removal exactly where flux intensifies, creating a form of localized equilibrium. Air systems cannot replicate this responsiveness because airflow must traverse the entire enclosure regardless of hotspot distribution. Liquid-to-chip approaches similarly lack intrinsic phase-change modulation unless explicitly engineered for it, which complicates plumbing and control design.
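The self-regulating behavior has a simple quantitative form in nucleate boiling. The Rohsenow correlation makes heat flux roughly proportional to the cube of the wall superheat, so superheat grows only with the cube root of flux, whereas single-phase convection grows linearly. In the sketch below, the constant C, the single-phase coefficient, and the critical heat flux limit are hypothetical calibration values. Above critical heat flux, vapor blankets the surface and this relation no longer holds.

```python
# Nucleate boiling: the Rohsenow correlation gives heat flux roughly proportional to
# the cube of the wall superheat (T_surface - T_saturation). Inverting that, superheat
# grows only with the cube root of flux. C and the critical heat flux (CHF) below are
# hypothetical calibration values; C is set so that 10 W/cm^2 needs 10 K of superheat.

def boiling_superheat_k(flux_w_cm2: float, c: float = 0.01, chf_w_cm2: float = 20.0) -> float:
    if flux_w_cm2 >= chf_w_cm2:
        raise ValueError("above critical heat flux: vapor blankets the surface, model invalid")
    return (flux_w_cm2 / c) ** (1.0 / 3.0)


def single_phase_rise_k(flux_w_cm2: float, h_w_cm2k: float = 1.0) -> float:
    """Linear convection for comparison, also calibrated to 10 K at 10 W/cm^2."""
    return flux_w_cm2 / h_w_cm2k


for flux in (5.0, 10.0, 15.0):
    print(f"{flux:4.1f} W/cm^2: boiling superheat {boiling_superheat_k(flux):5.2f} K, "
          f"single-phase rise {single_phase_rise_k(flux):5.2f} K")
```

Tripling the local flux raises the boiling superheat by only about 44% in this model, compared with tripling the rise under linear convection. This is why hotter spots are cooled harder without a proportional temperature penalty.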

Semiconductor Packaging Convergence with Cooling Strategy

Advanced semiconductor packaging now integrates stacked dies, embedded memory layers, and heterogeneous chiplets within compact substrates. These multi-layered assemblies shorten electrical paths but complicate thermal extraction because inner layers rely on conduction through outer silicon strata. Traditional cooling methods focus primarily on the top surface of a processor package, leaving lateral and sub-surface heat diffusion dependent on internal conduction pathways. Immersion cooling changes the thermal boundary conditions around the package by ensuring that every exposed surface participates in convective exchange. Even though internal die layers still rely on conduction to reach the exterior, the surrounding fluid removes energy uniformly once it arrives at any boundary. This consistency reduces the burden on a single extraction interface and complements the direction of packaging innovation.

Three-dimensional packaging intensifies the need for volumetric cooling strategies. Vertical stacking increases silicon density within a confined footprint, concentrating thermal output into smaller cross-sectional areas. Air cooling cannot penetrate internal layers and therefore depends entirely on surface heat spreading, which struggles under stacked configurations. Liquid-to-chip cooling enhances surface extraction but still focuses primarily on designated cold-plate contact zones. Immersion fluid surrounds the entire module, enabling sidewall and underside convective participation that supplements top-surface conduction. Cooling philosophy therefore converges with packaging design, encouraging holistic integration rather than isolated optimization.
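Adding sidewall and underside paths amounts to placing extra thermal resistances in parallel with the top surface, where 1 / R_total = Σ 1 / R_i. The values below are hypothetical. The side and bottom paths are deliberately assumed much weaker than the top, reflecting that internal layers still reach those surfaces only by conduction.

```python
# Heat leaving a package through several parallel paths: 1/R_total = sum(1/R_i).
# Resistances are hypothetical; side and bottom paths are assumed much weaker than the top.

def parallel_resistance(resistances: list[float]) -> float:
    return 1.0 / sum(1.0 / r for r in resistances)


top_only = [0.15]                 # K/W, top surface to coolant
all_sides = [0.15, 0.6, 0.8]      # plus sidewalls and underside in contact with fluid

power_w = 300.0
for name, paths in (("top surface only", top_only), ("top + sides + bottom", all_sides)):
    r = parallel_resistance(paths)
    print(f"{name:20s} R = {r:.3f} K/W, rise at {power_w:.0f} W = {power_w * r:.1f} K")
```

Even weak supplementary paths lower the combined resistance, which is the sense in which volumetric cooling supplements top-surface conduction rather than replacing it.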

System-Level Thermodynamics and Heat Distribution

Compute systems operate as thermodynamic ecosystems in which processors, memory, storage, and power regulation units interact continuously. Heat generated in one component influences neighboring elements because shared substrates and enclosure materials conduct energy laterally. Air-based systems often create temperature stratification within racks, with warmer air rising and cooler air settling unevenly, complicating uniform cooling delivery. Liquid-to-chip solutions mitigate die temperatures but may leave vertical stratification effects within the broader enclosure unaddressed. Immersion cooling submerges all elements in a single thermally cohesive medium, reducing vertical layering of temperature zones inside the tank. This integrated environment simplifies thermodynamic modeling and enhances predictability at the system level.

Uniform heat distribution influences not only hardware stability but also facility-level integration. When heat exits a server enclosure unevenly, downstream rejection systems must compensate for fluctuating thermal loads. Air systems channel warm exhaust into broader HVAC infrastructure, introducing variability that complicates facility control loops. Immersion tanks concentrate heat extraction into defined fluid circuits that can be monitored and regulated with greater precision. This structured thermal pathway improves synchronization between compute modules and external rejection equipment. System-level thermodynamics therefore benefit from the containment and predictability inherent in immersion architectures.

Integration with Emerging Compute Paradigms

Quantum-inspired accelerators, neuromorphic processors, and specialized inference engines represent emerging compute paradigms that may exhibit unconventional thermal signatures. Some architectures operate with bursts of synchronized activity across numerous cores, generating heat flux that is spatially distributed yet simultaneous. Traditional airflow systems respond sluggishly to synchronized surges because fans must ramp up and the air mass needs time to redistribute, while air itself offers little heat capacity to buffer the spike. Liquid-to-chip cooling improves local response but may not equalize distributed flux across an entire assembly instantly. Immersion cooling accommodates these synchronized behaviors because every submerged surface is already in contact with fluid, so convective support is present across the whole assembly the moment a surge begins. Such adaptability positions immersion as a versatile partner for experimental compute architectures.

Edge computing deployments also present unique thermal challenges due to constrained physical environments. Compact enclosures often lack the spatial allowances required for robust airflow corridors. Immersion systems can operate within sealed units that isolate electronics from environmental contaminants and temperature variability. Fluid-based convection inside a closed tank reduces dependence on ambient airflow conditions, enhancing deployment flexibility. Designers exploring distributed compute nodes increasingly evaluate immersion for environments where air management proves impractical. Thermal resilience thus extends beyond centralized facilities into diverse compute landscapes.

Environmental and Fluid Engineering Considerations

Dielectric fluids used in immersion systems require careful formulation to balance thermal conductivity, chemical stability, and material compatibility. Engineers select fluids that resist oxidation and maintain consistent viscosity under prolonged thermal cycling. Material compatibility testing ensures that seals, connectors, and substrate coatings do not degrade when immersed for extended durations. Air systems avoid fluid compatibility concerns but introduce issues related to humidity control and particulate filtration. Liquid-to-chip cooling demands strict management of conductive fluids to prevent leakage and corrosion. Immersion engineering shifts focus toward fluid lifecycle management rather than airflow conditioning.
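Fluid lifecycle management often comes down to trending a few properties over time. As a minimal sketch, assuming periodic samples measured at a fixed reference temperature, the check below flags samples whose kinematic viscosity has drifted from the fresh-fluid baseline by more than a relative tolerance. The function name, the 10% default tolerance, and the sample values are illustrative assumptions, not a published acceptance criterion; a real program would use the fluid vendor's limits and would also track other properties such as dielectric strength.

```python
from typing import List, Tuple


def viscosity_drift_alerts(baseline_cst: float,
                           samples: List[Tuple[str, float]],
                           tolerance: float = 0.10) -> List[str]:
    """Return labels of samples whose viscosity drifted beyond the tolerance.

    baseline_cst: kinematic viscosity of fresh fluid (cSt) at a reference temperature.
    samples: (label, measured viscosity in cSt at the same reference temperature).
    tolerance: allowed relative change; 0.10 is a placeholder, not a standard.
    """
    if baseline_cst <= 0:
        raise ValueError("baseline viscosity must be positive")
    alerts = []
    for label, measured in samples:
        relative_change = abs(measured - baseline_cst) / baseline_cst
        if relative_change > tolerance:
            alerts.append(label)
    return alerts


if __name__ == "__main__":
    # Hypothetical quarterly samples from one tank.
    history = [("month-3", 5.1), ("month-6", 5.3), ("month-9", 5.6)]
    print(viscosity_drift_alerts(5.0, history))
```

Using a relative rather than absolute threshold keeps the same check usable across fluids with very different base viscosities.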

Fluid engineering also intersects with sustainability goals in compute infrastructure design. Closed-loop immersion systems reduce evaporative losses and minimize direct interaction between electronics and atmospheric conditions. Controlled circulation allows engineers to maintain stable chemical composition over time. External heat exchangers can integrate with secondary systems without exposing compute hardware to environmental fluctuations. This separation of concerns improves operational predictability and reduces contamination risk. Thermal management therefore becomes an exercise in fluid stewardship as much as mechanical design.

Rethinking Compute Density Without Thermal Fear

Historically, thermal limits constrained how aggressively designers pursued density improvements. Engineers often introduced spacing buffers or reduced component clustering to maintain airflow viability. Immersion cooling relaxes these constraints by decoupling density from airflow requirements. Hardware architects can pursue compact layouts aligned with electrical efficiency rather than thermal compromise. This freedom encourages experimentation with unconventional board geometries and integrated module stacking. Compute density evolves in tandem with fluid-based cooling capacity rather than in opposition to it.

Removing thermal fear from density planning alters innovation trajectories. Designers previously constrained by fan placement and duct clearance can prioritize signal routing and latency optimization. Immersion systems absorb the thermal consequences of such optimizations without requiring external air corridors. This shift promotes architectural boldness in processor and accelerator integration. Cooling ceases to dictate layout limitations and instead becomes an enabling substrate for experimentation. The narrative of thermal battles transforms from defensive mitigation to proactive design empowerment.

Rethinking the Thermal Narrative

Compute evolution now hinges as much on thermal imagination as on silicon advancement. Air cooling remains rooted in convection limits that struggle under concentrated modern workloads. Liquid-to-chip systems improve proximity to heat sources yet retain dependence on discrete extraction interfaces. Immersion cooling reframes heat management as a volumetric equilibrium process that surrounds rather than targets components. Uniform fluid contact reduces gradients, supports architectural flexibility, and aligns naturally with accelerator-dense platforms. Redefining thermal battles in compute cooling therefore signals not incremental improvement but a structural shift in how designers conceive the relationship between energy, heat, and performance.