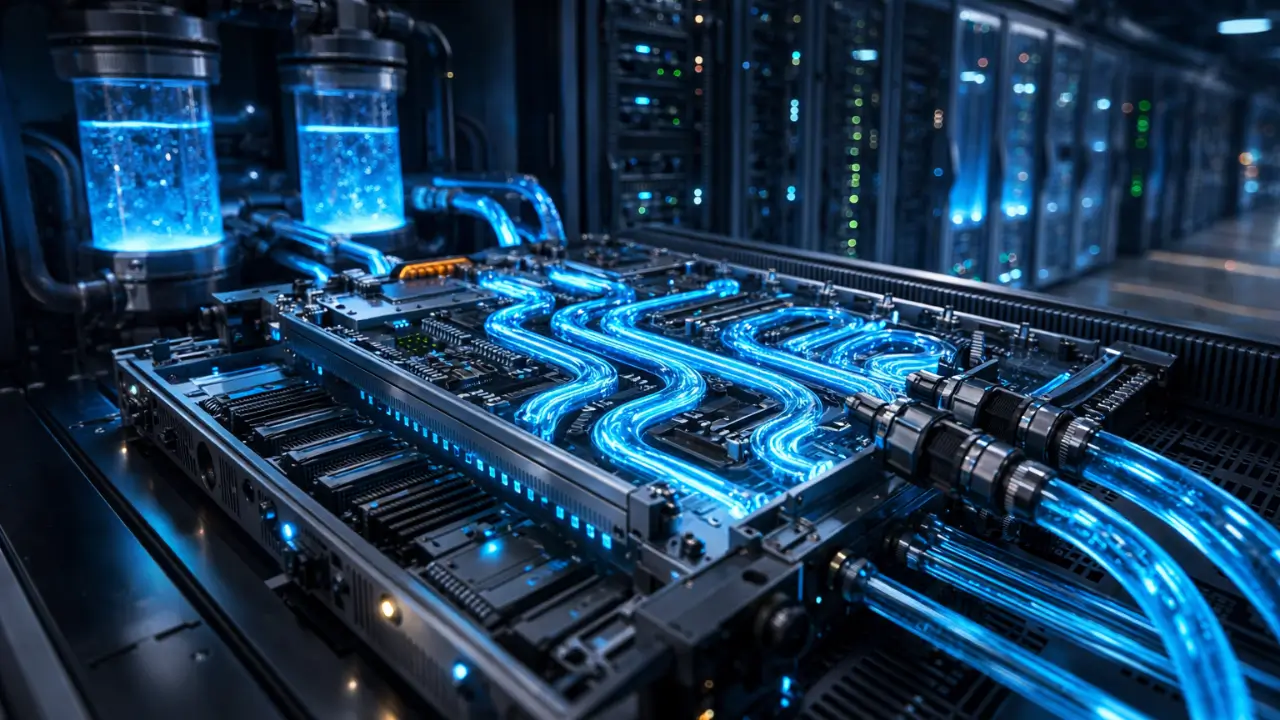

At the upcoming NVIDIA GTC 2026, ASUS will move beyond silicon announcements and focus squarely on infrastructure physics. The company has introduced its ASUS Optimized Liquid-Cooling Solutions alongside a structured partner ecosystem, directly targeting the mounting heat, power, and density constraints reshaping next-generation AI and HPC facilities.

As AI workloads intensify, operators confront unprecedented rack densities, soaring thermal loads, and rising power draw. Rather than treating cooling as an auxiliary system, ASUS reframes it as a core architectural layer: integrated, modular, and partner-driven. The company pairs this launch with a strategic ecosystem framework aimed at aligning compute, thermal, and infrastructure layers from design through deployment.

Engineering for NVIDIA’s Next AI Reference Designs

The new cooling architecture aligns with upcoming ASUS AI POD SKUs built on the NVIDIA Vera Rubin NVL72 design. According to ASUS, the solution removes heat more effectively and improves efficiency across dense accelerator racks and high-speed interconnect fabrics. As a result, operators can target lower energy consumption, a projected power usage effectiveness (PUE) of 1.18, and improved total cost of ownership.
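To put the projected figure in perspective: PUE is the ratio of total facility power to IT equipment power, so a value of 1.18 means roughly 0.18 W of cooling, power-conversion, and other overhead for every watt delivered to compute. The short Python sketch below works through that arithmetic. The 1,000 kW IT load and the 1.5 comparison PUE are hypothetical values chosen only for illustration; neither comes from ASUS, and the function names are invented for this example.

```python
def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value.

    PUE = total facility power / IT equipment power,
    so total facility power = IT load * PUE.
    """
    if it_load_kw < 0:
        raise ValueError("IT load cannot be negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


def overhead_power_kw(it_load_kw: float, pue: float) -> float:
    """Non-IT power (cooling, power conversion, lighting, and so on)."""
    return facility_power_kw(it_load_kw, pue) - it_load_kw


if __name__ == "__main__":
    IT_LOAD_KW = 1000.0    # hypothetical IT load, not an ASUS figure
    PROJECTED_PUE = 1.18   # projection quoted in the article
    COMPARISON_PUE = 1.5   # hypothetical comparison point, for illustration only

    for label, pue in (("projected", PROJECTED_PUE), ("comparison", COMPARISON_PUE)):
        total = facility_power_kw(IT_LOAD_KW, pue)
        overhead = overhead_power_kw(IT_LOAD_KW, pue)
        print(f"{label}: PUE {pue:.2f} -> total {total:.0f} kW, overhead {overhead:.0f} kW")
```

Under these assumptions, a 1,000 kW IT load at a PUE of 1.18 implies about 1,180 kW at the facility level, of which about 180 kW is overhead. Actual results depend on climate, facility design, and utilization, none of which this sketch models.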

Importantly, ASUS avoids a monolithic strategy. Instead, the company deploys direct-to-chip (D2C) cooling, in-row CDU-based architectures, and hybrid configurations tailored to workload profiles and facility constraints. This flexibility allows operators to scale from single-rack AI deployments to full POD-level clusters without redesigning core thermal infrastructure.

Infrastructure Partnerships Move to the Forefront

ASUS does not operate in isolation. The company collaborates with infrastructure specialists such as Schneider Electric and Vertiv to coordinate facility-level integration, and it integrates precision thermal components from Auras Technology and Cooler Master.

Through this ecosystem model, ASUS aims to deliver purpose-built, scalable cooling systems that sustain extreme rack density while maintaining operational stability. The strategic logic is clear: AI infrastructure now demands vertically aligned partnerships that unify compute silicon, mechanical design, and power distribution into a single performance envelope.

Early Deployment Signals Regional Ambition

One early deployment underscores the platform’s ambition. ASUS has implemented the solution with the National Center for High-performance Computing under Taiwan’s National Institute of Applied Research. The system features a dual-compute architecture combining the NVIDIA HGX H200 cluster and the NVIDIA GB200 NVL72.

This deployment marks Taiwan’s first fully liquid-cooled AI supercomputer built on this architecture. More significantly, it signals a shift in regional AI infrastructure strategy: nations now compete not only on silicon access but also on the ability to sustain thermal equilibrium at scale.

Cooling as Competitive Infrastructure

As AI models expand and inference scales across industries, thermal management becomes a strategic differentiator rather than a mechanical afterthought. ASUS positions AI liquid cooling as a competitive enabler, one that reduces operational risk, improves energy efficiency, and prepares data centers for the next acceleration wave.

Consequently, the conversation around AI infrastructure is shifting. Compute density no longer hinges solely on chip design; it depends equally on how effectively operators remove heat from increasingly compact silicon environments. In that equation, ASUS wants to anchor itself at the intersection of performance and thermodynamic control.