Edge systems now operate in environments that refuse to stay predictable, forcing engineers to confront thermal behavior as a dynamic variable rather than a fixed constraint. Robotics platforms, autonomous vehicles, and distributed inference nodes push silicon into constantly shifting workloads that generate uneven heat patterns. Traditional cooling assumptions become less effective under these conditions because they rely on relatively stable airflow, predictable duty cycles, and stationary deployments. Thermal design has moved from a background engineering concern into a primary determinant of system reliability and performance. The industry has started to recognize that processing capability alone cannot sustain edge intelligence without equally advanced heat management strategies. This shift increasingly reframes cooling as an active system layer rather than solely a passive support mechanism.

Heat Doesn’t Sit Still Anymore

Thermal load in edge deployments behaves like a moving target shaped by motion, terrain variability, and real-time workload fluctuations. Mobile robots operating across uneven surfaces experience airflow disruptions that can alter heat dissipation efficiency over short time intervals. Autonomous systems running perception stacks generate burst-driven thermal spikes when sensors feed high-density data into processing units. Static cooling architectures respond less effectively to these rapid changes because they assume relatively uniform heat generation across predictable intervals. Engineers now model thermal behavior using transient simulations that account for movement, vibration, and environmental exposure simultaneously. These models reveal that heat distribution shifts continuously across components, which can limit the effectiveness of fixed cooling pathways under sustained dynamic operation.
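A transient model of this kind can be as small as a single lumped thermal node. The sketch below is a minimal illustration, not a validated simulation: it integrates C * dT/dt = P(t) - (T - T_ambient) / R(t) with forward Euler. The function name, parameter names, and every numeric value are assumptions chosen for the example. Letting the thermal resistance R change per step stands in for airflow that shifts as a platform moves.

```python
def simulate_temperature(power_w, r_th_c_per_w, c_th_j_per_c, t_ambient_c, dt_s):
    """Integrate C * dT/dt = P(t) - (T - T_ambient) / R(t) with forward Euler.

    power_w and r_th_c_per_w are equal-length sequences sampled every dt_s
    seconds. Returns the node temperature (deg C) after each step.
    """
    temp_c = t_ambient_c  # assumption: the node starts at ambient temperature
    trace = []
    for power, r_th in zip(power_w, r_th_c_per_w):
        heat_out_w = (temp_c - t_ambient_c) / r_th  # heat leaving the node
        temp_c += (power - heat_out_w) / c_th_j_per_c * dt_s
        trace.append(temp_c)
    return trace


# Hypothetical scenario: a 10 W perception burst while airflow is disrupted
# (thermal resistance rises from 2 to 4 deg C/W) for the middle 20 seconds.
steps = 600  # 60 s at dt = 0.1 s
power = [10.0 if 200 <= i < 400 else 3.0 for i in range(steps)]
r_th = [4.0 if 200 <= i < 400 else 2.0 for i in range(steps)]
trace = simulate_temperature(power, r_th, c_th_j_per_c=5.0,
                             t_ambient_c=25.0, dt_s=0.1)
print(f"peak temperature: {max(trace):.1f} deg C")
```

A real board would need several coupled nodes and measured R and C values, but even this single-node form shows why a cooling path sized for the steady 3 W case can fall short during a burst that coincides with poor airflow.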

Environmental factors amplify this instability because edge systems rarely operate in controlled conditions the way data center hardware does. Dust accumulation, humidity variation, and ambient temperature swings directly change the thermal resistance of surfaces and interfaces. Systems deployed outdoors or in industrial zones encounter rapid temperature changes that keep hardware assemblies from settling into thermal equilibrium. These conditions force cooling systems to respond dynamically rather than rely on steady-state assumptions. Designers increasingly integrate sensors that monitor localized temperature variations across boards and enclosures in real time. This approach allows systems to detect thermal drift early and adjust cooling behavior before performance degradation begins.
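One simple way to detect drift early is to watch the trend of a sensor instead of its absolute value. The sketch below fits a least-squares slope to a sliding window of readings and flags a sustained rise. The class name, window size, and slope limit are hypothetical choices for illustration, not values from any particular platform.

```python
from collections import deque


class DriftDetector:
    """Flags sustained temperature rise using a least-squares slope over a window."""

    def __init__(self, window=30, sample_period_s=1.0, slope_limit_c_per_s=0.05):
        self.readings = deque(maxlen=window)  # oldest reading drops out automatically
        self.sample_period_s = sample_period_s
        self.slope_limit_c_per_s = slope_limit_c_per_s

    def slope_c_per_s(self):
        """Least-squares slope of the buffered readings, in deg C per second."""
        n = len(self.readings)
        if n < 2:
            return 0.0
        times = [i * self.sample_period_s for i in range(n)]
        mean_t = sum(times) / n
        mean_y = sum(self.readings) / n
        num = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, self.readings))
        den = sum((t - mean_t) ** 2 for t in times)
        return num / den

    def update(self, temp_c):
        """Add one reading; return True once a full window trends upward too fast."""
        self.readings.append(temp_c)
        window_full = len(self.readings) == self.readings.maxlen
        return window_full and self.slope_c_per_s() > self.slope_limit_c_per_s
```

Running one detector per sensor lets a system react to a hotspot that is still well below its absolute limit but climbing steadily, which is the situation a fixed threshold misses.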

Cooling Has to Think, Not Just Flow

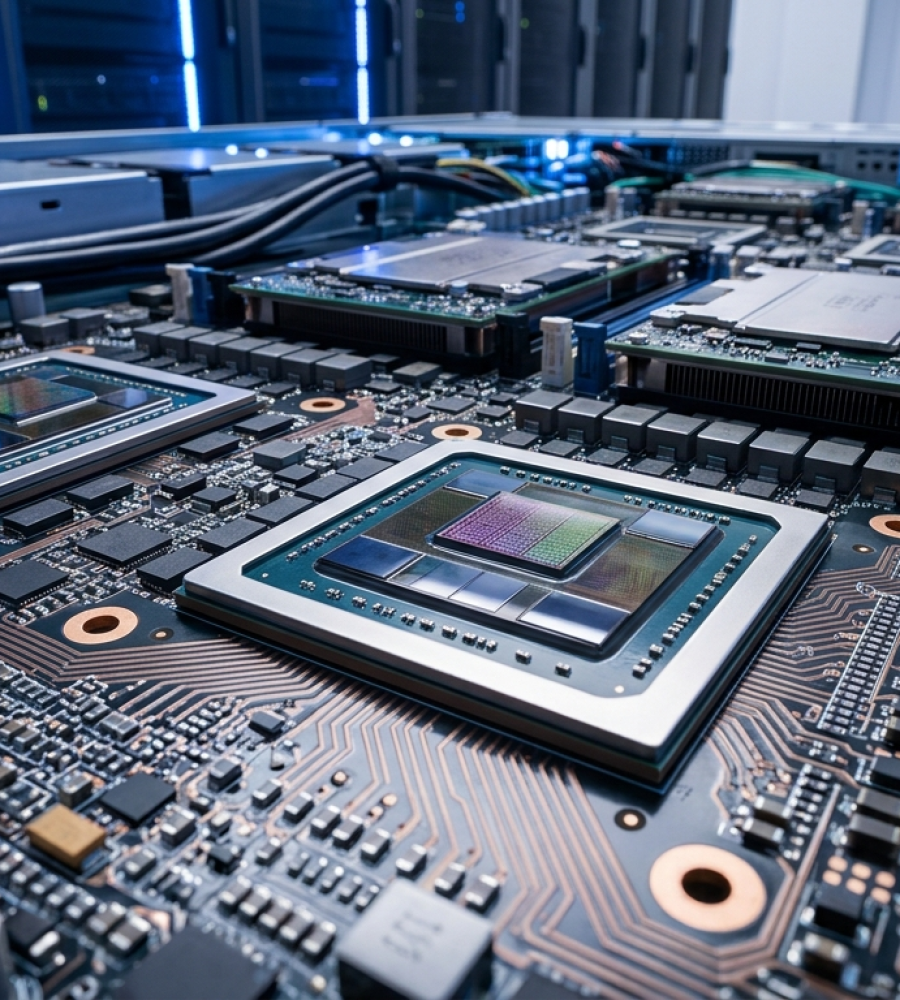

Modern edge architectures demand cooling systems that operate with embedded intelligence rather than fixed mechanical responses. Passive heat sinks and constant-speed fans are often less effective at handling workload variability that shifts within milliseconds across processing units. Adaptive cooling layers now incorporate control algorithms that adjust airflow, liquid circulation, and thermal pathways based on real-time system telemetry. These systems interpret workload intensity, component stress, and environmental inputs to determine optimal cooling actions continuously. Engineers design these control loops to minimize latency between thermal detection and response, ensuring that heat does not accumulate beyond safe thresholds. This transformation increasingly positions cooling as an active participant in system operation rather than only a static infrastructure element.
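As a rough illustration of such a control loop, the sketch below implements a proportional-integral (PI) controller that maps a temperature reading to a fan duty cycle. PI control is one common choice, not necessarily what any given product uses. The class name, gains, and duty limits are assumptions, and a real controller would be tuned against the actual thermal response of the hardware.

```python
class FanController:
    """PI controller mapping temperature error to a fan duty cycle."""

    def __init__(self, setpoint_c, kp, ki, min_duty=0.2, max_duty=1.0):
        self.setpoint_c = setpoint_c
        self.kp = kp
        self.ki = ki
        self.min_duty = min_duty
        self.max_duty = max_duty
        self.integral = 0.0  # accumulated error, in deg C * seconds

    def step(self, temp_c, dt_s):
        """Return the duty cycle for the latest reading, dt_s seconds after the last."""
        error = temp_c - self.setpoint_c  # positive when the device is too hot
        candidate = self.integral + error * dt_s
        unclamped = self.kp * error + self.ki * candidate
        duty = min(max(unclamped, self.min_duty), self.max_duty)
        # Anti-windup: keep integrating only if the output is not saturated,
        # or if the error is pulling the output back toward the valid range.
        saturated_high = unclamped > self.max_duty
        saturated_low = unclamped < self.min_duty
        if not (saturated_high and error > 0) and not (saturated_low and error < 0):
            self.integral = candidate
        return duty


# Hypothetical usage: call step() once per telemetry sample.
controller = FanController(setpoint_c=70.0, kp=0.05, ki=0.01)
duty = controller.step(temp_c=75.0, dt_s=1.0)
```

The anti-windup check matters in this setting because bursty workloads can saturate the fan for long stretches. Without it, the integral term keeps growing and the fan stays at full speed well after the device has cooled.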

Control-driven thermal systems also enable energy-efficient operation by aligning cooling effort with actual demand instead of worst-case scenarios. Fixed cooling systems often overcompensate, consuming excess power even during low-intensity workloads. Intelligent cooling reduces unnecessary energy expenditure by scaling thermal response to system activity. Holding temperatures steadier can also extend hardware lifespan, because it reduces the repeated thermal cycling that stresses components over time. Designers increasingly treat cooling algorithms as part of system firmware, integrating them with workload schedulers and hardware management layers in advanced implementations. This convergence ensures that thermal decisions align directly with processing priorities and system objectives.

Performance Drops Before Systems Fail

Edge AI systems rarely exhibit catastrophic failure when thermal limits approach critical levels, which makes heat-related issues difficult to detect. Instead, systems begin to throttle processing speed, reduce throughput, and introduce latency inconsistencies that degrade overall performance. These changes often occur silently, without triggering explicit fault conditions or alerts. Thermal throttling mechanisms activate to protect hardware, but they compromise system output quality in subtle ways. Engineers must identify these performance shifts as indicators of thermal stress rather than isolated anomalies. Monitoring tools in advanced systems increasingly focus on detecting gradual degradation patterns rather than waiting for failure events.
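A monitoring check for this kind of silent degradation can correlate latency with temperature instead of waiting for a fault. The sketch below smooths per-request latency with an exponentially weighted moving average and flags samples where the smoothed value exceeds a baseline while the device is hot. The function name, thresholds, and smoothing factor are hypothetical, and the baseline is assumed to come from measurements taken on a cool device.

```python
def flag_thermal_degradation(samples, baseline_latency_ms, latency_ratio=1.2,
                             temp_threshold_c=80.0, alpha=0.2):
    """Return indices where smoothed latency is elevated while the device is hot.

    samples is a sequence of (latency_ms, temp_c) pairs in time order.
    """
    flagged = []
    smoothed = None
    for index, (latency_ms, temp_c) in enumerate(samples):
        if smoothed is None:
            smoothed = latency_ms  # seed the average with the first sample
        else:
            smoothed = alpha * latency_ms + (1 - alpha) * smoothed
        slow = smoothed > latency_ratio * baseline_latency_ms
        hot = temp_c >= temp_threshold_c
        if slow and hot:
            flagged.append(index)
    return flagged
```

Requiring both conditions keeps the check from blaming heat for slowdowns that have another cause, such as input backlog on a cool device. The smoothing filters out single slow requests so that only sustained shifts are reported.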

Latency-sensitive applications such as autonomous navigation and real-time analytics cannot tolerate these hidden performance drops. Even minor delays or inconsistencies can disrupt decision-making pipelines and reduce operational reliability. Thermal buildup introduces variability that propagates through the system, affecting timing, synchronization, and output accuracy. Designers now prioritize thermal stability as a prerequisite for consistent performance rather than treating it as a secondary concern. This shift requires deeper integration between thermal monitoring systems and performance management frameworks. As a result, heat management becomes directly linked to maintaining deterministic system behavior under varying conditions.

Form Factor Is Now a Thermal Problem

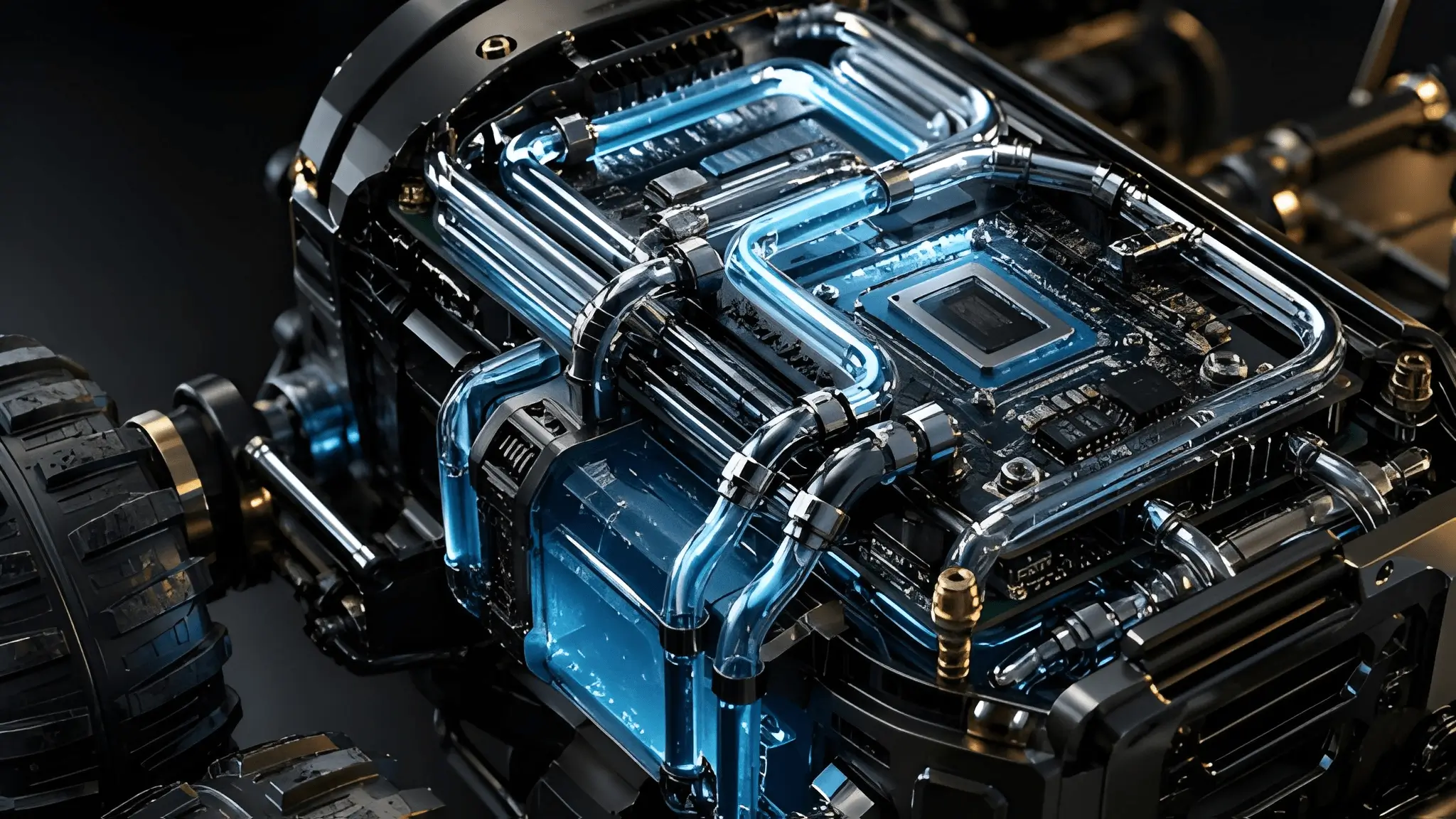

Compact edge devices concentrate processing power within increasingly constrained physical spaces, creating dense heat zones that challenge traditional cooling strategies. Miniaturization reduces the available surface area for heat dissipation while increasing power density across components. Designers must balance size, weight, and thermal capacity without compromising system performance. Enclosures that prioritize compactness often restrict airflow, forcing engineers to explore alternative cooling methods such as vapor chambers and advanced materials. These solutions aim to distribute heat more evenly across limited spaces, preventing localized hotspots from forming. The physical design of the system now directly influences its thermal behavior and operational limits.

Material selection plays a critical role in managing heat within compact form factors. Engineers evaluate thermal conductivity, structural integrity, and weight constraints when choosing materials for enclosures and internal components. Advanced composites and phase-change materials offer new possibilities for passive heat distribution in constrained environments. High-conductivity composites spread heat across a larger area, while phase-change materials absorb heat as they melt and release it as they resolidify, which buffers short thermal spikes and reduces the burden on active cooling systems. Designers integrate these materials strategically to create thermal pathways that guide heat away from critical components. This approach turns the physical structure of the device into a working part of the heat management system.
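To get a feel for how much a phase-change material (PCM) can buffer, a first-order estimate divides the latent heat stored in the material by the excess power it has to absorb. The sketch below is a back-of-the-envelope calculation only. The function name is hypothetical, and the example values (50 g of material, 200 kJ/kg latent heat, 10 W of excess power) are assumed round numbers, not data for a specific product.

```python
def pcm_buffer_seconds(mass_kg, latent_heat_j_per_kg, excess_power_w):
    """Seconds a fully solid PCM can absorb excess_power_w while melting.

    Counts only latent heat (Q = m * L). Sensible heating before and after
    the phase change is ignored, so this is a rough first-order estimate.
    """
    if excess_power_w <= 0:
        raise ValueError("excess_power_w must be positive")
    return mass_kg * latent_heat_j_per_kg / excess_power_w


# Assumed example values; real materials and geometries will differ.
seconds = pcm_buffer_seconds(mass_kg=0.05,
                             latent_heat_j_per_kg=200_000.0,
                             excess_power_w=10.0)
print(f"buffer time: {seconds:.0f} s")
```

With these assumed numbers the buffer lasts on the order of 1,000 seconds. That suits burst workloads but not sustained overload: once the material has fully melted, it no longer holds the temperature down.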

Intelligence Needs Thermal Awareness Built In

Nearly every watt consumed by an edge system ends up as heat that must be managed within the same physical boundary. Power efficiency directly influences thermal load, making energy management inseparable from cooling strategy. Engineers cannot optimize one without addressing the other because inefficiencies in power usage amplify thermal challenges. Systems that draw more power need correspondingly more cooling capacity to maintain stability. The relationship runs in both directions: lower power draw reduces thermal stress, and lower operating temperatures reduce leakage power in the silicon. Designers now evaluate power and heat together during system architecture planning rather than treating them as separate concerns.

Energy-aware cooling strategies focus on minimizing total system load rather than addressing heat after it forms. Workload distribution, hardware utilization, and power scaling mechanisms all contribute to reducing thermal output at the source. These strategies complement adaptive cooling systems by lowering the baseline heat generation across components. Engineers integrate power management frameworks with thermal control systems to create unified optimization layers. This integration ensures that energy consumption aligns with thermal capacity in real time. Consequently, system endurance improves as both energy usage and heat generation remain within manageable limits.
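One way to align energy consumption with thermal capacity is to derive a power budget from the current thermal headroom and pick the highest power state that fits. The sketch below uses the steady-state relation P_max = (T_limit - T_ambient) / R_th. The function names, the list of power states, and the thermal resistance value are all assumptions for illustration.

```python
def max_sustainable_power_w(t_limit_c, t_ambient_c, r_th_c_per_w):
    """Steady-state power budget that keeps the device at or below t_limit_c."""
    return max(0.0, (t_limit_c - t_ambient_c) / r_th_c_per_w)


def pick_power_state(states_w, t_limit_c, t_ambient_c, r_th_c_per_w):
    """Choose the highest power state within the budget, else the lowest state."""
    budget_w = max_sustainable_power_w(t_limit_c, t_ambient_c, r_th_c_per_w)
    fitting = [p for p in states_w if p <= budget_w]
    return max(fitting) if fitting else min(states_w)


# Hypothetical device: four power states, 85 deg C limit, 4 deg C/W to ambient.
states = [5.0, 10.0, 15.0, 25.0]
for ambient_c in (25.0, 45.0, 80.0):
    chosen = pick_power_state(states, t_limit_c=85.0,
                              t_ambient_c=ambient_c, r_th_c_per_w=4.0)
    print(f"ambient {ambient_c:.0f} deg C -> {chosen:.0f} W state")
```

Because the budget is recomputed from the measured ambient temperature, the same device backs off on a hot day without any change to the cooling hardware. A fuller implementation would also account for transient headroom and not rely on steady state alone.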

The evolution of edge systems shows that thermal behavior can define operational boundaries alongside raw processing capability, particularly in constrained environments. Designers must treat heat as a core system variable that influences performance, reliability, and longevity. Cooling systems now require intelligence that matches the complexity of the workloads they support. This shift demands integration across hardware design, control algorithms, and energy management frameworks. Future edge deployments will depend on their ability to anticipate and adapt to thermal conditions continuously. Intelligent thermal design will determine which systems sustain performance under real-world conditions and which fail silently under pressure.