The Paradox at the Core: GenAI as Both Load Multiplier and Load Manager

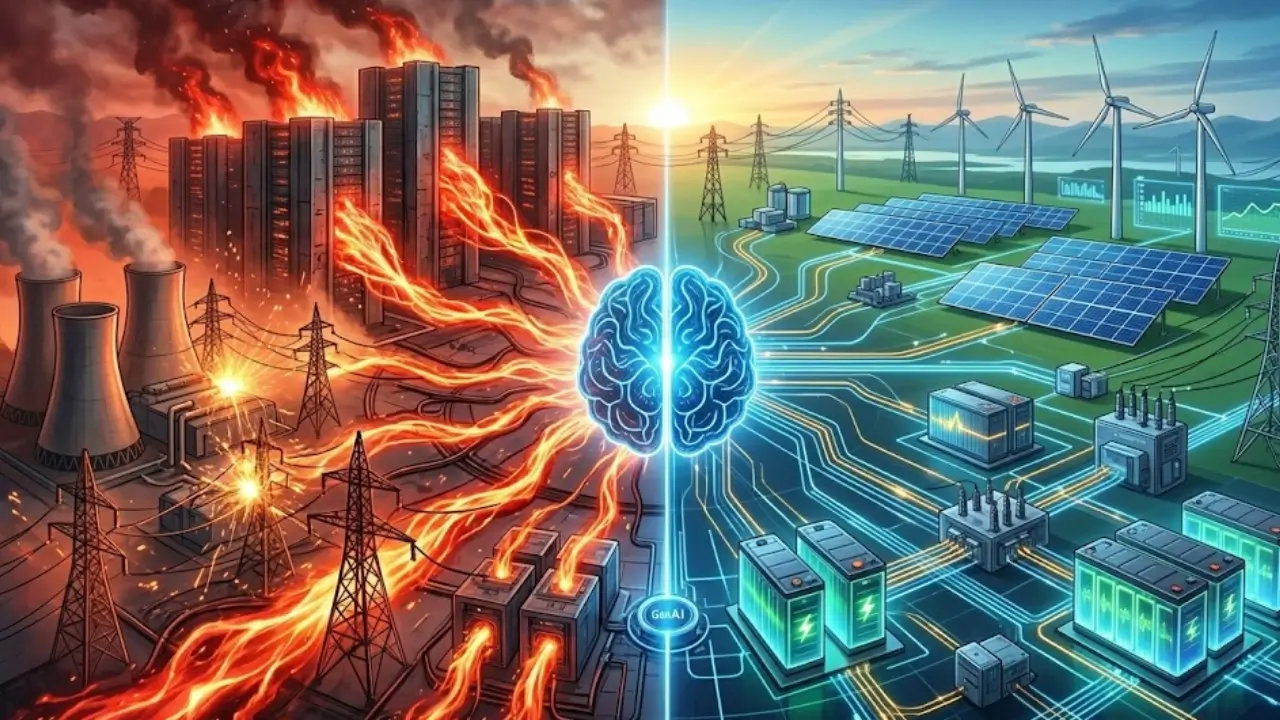

Generative AI does not enter infrastructure ecosystems as a neutral workload because it fundamentally alters how demand emerges and how it gets controlled. Systems that deploy large-scale models immediately experience intensified compute density that translates into concentrated energy consumption patterns. At the same time, these systems introduce intelligent orchestration layers that can redistribute and reshape portions of that demand in near real time within controlled and advanced deployments. This duality creates a structural contradiction where the source of volatility can also act as a potential mechanism for stability in systems that implement advanced orchestration capabilities. Infrastructure design increasingly needs to account for systems that generate load while incorporating mechanisms to partially regulate it under specific operational conditions. The result is a tightly coupled interaction between compute intensity and energy intelligence that cannot be separated into independent domains.

Traditional infrastructure models treated demand as an external variable that could be forecasted, provisioned, and passively supported through capacity expansion. Generative AI disrupts this assumption by embedding decision-making capabilities directly within the demand layer itself. Load no longer behaves as a predictable outcome of user activity because it is also shaped by model training cycles, inference bursts, and orchestration logic. This shift forces engineers to reconsider how systems respond to demand under dynamic conditions. Infrastructure must now accommodate a feedback loop in which demand generation and elements of demand control coexist within the same system boundary. This transformation introduces both optimization potential and systemic uncertainty that did not exist in earlier compute paradigms.

The paradox extends beyond technical architecture into operational strategy, where organizations must balance competing objectives without isolating one from the other. Increasing compute capacity to support AI workloads inherently raises energy demand, while underusing orchestration capabilities can leave efficiency gains unrealized in certain operational contexts. This creates a design challenge where neither dimension can dominate without creating inefficiencies elsewhere. Engineers increasingly approach system design with the expectation that this contradiction will persist, requiring architectures that, in advanced implementations, manage expansion and optimization together through coordinated control mechanisms. This condition defines the foundational tension shaping next-generation AI-driven environments.

Reframing Infrastructure Around Dual-Function Systems

The emergence of dual-function systems requires a redefinition of infrastructure roles across the compute stack. Hardware, software, and energy systems must operate as interconnected components that respond to both demand generation and demand orchestration signals. This integration introduces complexity but also enables more granular control over resource utilization. AI systems influence how hardware operates, how workloads get scheduled, and how energy flows through the system. This level of coordination moves infrastructure toward more adaptive systems that can respond to internal and external conditions in environments with sufficient orchestration maturity. The boundaries between compute and energy management begin to narrow as both domains increasingly interact within integrated operational frameworks.

Designing for dual-function systems requires a departure from linear planning approaches that assume stable relationships between inputs and outputs. Engineers must adopt models that account for recursive interactions where outputs influence future inputs in real time. This approach introduces a level of dynamism that challenges traditional engineering methodologies. Systems must remain stable under conditions where demand patterns shift unpredictably while orchestration mechanisms attempt to restore equilibrium. The ability to maintain stability within this dynamic environment becomes a critical success factor. Infrastructure design is gradually shifting toward managing continuous adaptation alongside traditional optimization approaches, particularly in advanced AI-driven environments.

Generative AI introduces demand patterns that diverge sharply from the assumptions underlying conventional load forecasting models. Utilities and infrastructure planners historically relied on incremental growth trends and predictable consumption cycles to guide capacity planning. AI workloads disrupt these patterns by generating sudden, high-intensity bursts of activity that do not align with established temporal rhythms. These bursts originate from training cycles, model updates, and large-scale inference requests that can occur independently of traditional usage drivers. The result is a demand profile characterized by volatility rather than stability. Forecasting models that depend on historical continuity can face challenges in capturing this new behavior, although many are evolving to improve accuracy.

The unpredictability of AI-driven demand introduces challenges that extend beyond magnitude into timing and distribution. Load spikes may occur during periods that previously exhibited low consumption, creating mismatches between expected and actual demand. This misalignment can complicate grid balancing efforts and, in certain scenarios, increase the risk of localized stress within energy systems. Infrastructure operators must now account for events where some workloads can resemble industrial-scale consumption within digital environments. These events lack the regularity that traditional models depend on, making them difficult to anticipate using existing tools. The need for more adaptive forecasting approaches is becoming increasingly evident as AI adoption accelerates.

The spatial dimension of demand also changes under AI-driven workloads, as compute tasks can migrate across distributed infrastructure based on optimization criteria. This mobility introduces a layer of complexity where demand does not remain confined to a single geographic location. Instead, in distributed cloud environments, it can shift dynamically in response to factors such as latency requirements, energy availability, and system performance. Grid operators increasingly need to consider interconnected networks of consumption rather than relying solely on isolated nodes. This transformation challenges the foundational assumptions of localized forecasting models. The shift toward networked demand requires new analytical frameworks capable of capturing distributed behavior.

From Predictive Models to Adaptive Forecasting Systems

Forecasting systems are gradually evolving from static predictive models into more adaptive frameworks that incorporate real-time data and machine learning techniques. These systems analyze live inputs from infrastructure, energy markets, and environmental conditions to generate dynamic forecasts. The integration of AI into forecasting introduces a recursive element where prediction models continuously refine themselves based on new data. This approach enhances accuracy but also increases system complexity. Engineers must ensure that forecasting systems remain reliable under conditions of uncertainty and rapid change. The transition to adaptive forecasting represents a fundamental shift in how demand gets understood and managed.
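
The recursive refinement described here can be illustrated with a deliberately small sketch. It uses simple exponential smoothing as a stand-in for a real forecasting model; the class name `AdaptiveForecaster`, the field `forecast_kw`, and the smoothing factor `alpha` are assumptions made for illustration, not part of any system the article describes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdaptiveForecaster:
    """One-step-ahead load forecast that refines itself on every new reading."""

    alpha: float = 0.3  # hypothetical smoothing factor, 0 < alpha <= 1
    forecast_kw: Optional[float] = None

    def update(self, observed_kw: float) -> float:
        """Fold the latest observation into the forecast and return it."""
        if self.forecast_kw is None:
            # First reading: nothing to correct yet, so adopt it as the forecast.
            self.forecast_kw = observed_kw
        else:
            # Move the forecast toward the observation by a fraction of the error.
            error_kw = observed_kw - self.forecast_kw
            self.forecast_kw += self.alpha * error_kw
        return self.forecast_kw
```

A larger `alpha` tracks sudden bursts more quickly but also follows noise more closely, which is one small instance of the accuracy versus complexity trade-off noted above.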

Adaptive forecasting systems enable infrastructure operators to respond more effectively to non-linear demand patterns. These systems provide insights that support proactive decision-making rather than reactive adjustments. Operators can anticipate potential demand spikes and implement strategies to mitigate their impact before they occur. This capability enhances the resilience of both compute and energy systems. The integration of forecasting and orchestration creates a more cohesive approach to managing demand. Infrastructure evolves toward systems that, in advanced implementations, can not only respond to demand but also anticipate and influence it under certain conditions.

From Static Loads to Programmable Demand Systems

Reframing Infrastructure as Adaptive Energy Consumers

Infrastructure is beginning to move beyond passive energy consumption as generative AI introduces control loops that can shape how and when power gets consumed in advanced deployments. Systems that once followed fixed consumption patterns can now respond dynamically to internal orchestration signals in environments where advanced scheduling and control mechanisms are implemented. This transformation is gradually shifting the role of data centers from static load entities toward programmable demand systems capable of real-time adaptation in certain operational contexts. Engineers are beginning to design architectures where energy usage can align more closely with operational logic rather than rigid capacity assumptions. This capability enables infrastructure to participate in energy optimization without sacrificing computational throughput. The emergence of programmable demand marks a decisive break from deterministic consumption models.

Programmable demand systems rely on deep integration between workload orchestration platforms and energy management frameworks that continuously exchange data. Scheduling engines can evaluate compute priority, system utilization, and external energy conditions before allocating resources across available infrastructure. This coordination ensures that workloads execute under conditions that balance performance with energy efficiency. The system increasingly treats energy as a partially controllable parameter rather than solely as an unavoidable constraint in advanced orchestration environments. Operators gain the ability to shift consumption across time and infrastructure layers without interrupting service continuity. This adaptability introduces a level of operational flexibility that traditional systems could not achieve.

The transition to programmable demand also alters how infrastructure interacts with external energy systems and grid operators. Data centers can adjust consumption in response to demand response signals, creating a bidirectional relationship with the grid. This interaction allows infrastructure to support grid stability by reducing load during periods of stress or increasing consumption when excess energy becomes available. The system effectively becomes an active participant in energy markets rather than a passive endpoint. This integration has the potential to enhance both economic efficiency and system resilience where such participation is implemented. Programmable demand systems redefine the role of compute infrastructure within the broader energy ecosystem.
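
As a rough illustration of this bidirectional relationship, the sketch below maps a grid signal to a target facility load. The signal names (`shed`, `normal`, `absorb`), the fractions in `FLEX_FRACTION`, and the split between firm and flexible load are all hypothetical; real demand response programs define their own signal vocabularies and contractual limits.

```python
# Hypothetical share of flexible load to run under each grid signal.
FLEX_FRACTION = {"shed": 0.0, "normal": 0.5, "absorb": 1.0}


def target_load_kw(firm_kw, flexible_kw, signal):
    """Return the facility load to aim for given a demand response signal.

    firm_kw is load that cannot move (for example latency-critical inference);
    flexible_kw is load that can be deferred or pulled forward.
    """
    try:
        fraction = FLEX_FRACTION[signal]
    except KeyError:
        raise ValueError(f"unknown grid signal: {signal!r}") from None
    return firm_kw + fraction * flexible_kw
```

Under these assumed numbers, a site with 600 kW of firm load and 400 kW of flexible load would aim for 600 kW during a `shed` event and 1000 kW when asked to `absorb` surplus energy.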

Dynamic Workload Prioritization and Execution Control

Generative AI enables infrastructure to differentiate between workloads based on urgency, computational intensity, and strategic importance, which directly influences energy allocation decisions. Critical inference tasks receive immediate execution priority, while non-essential processes can be deferred or redistributed without affecting overall system performance. This prioritization allows infrastructure to smooth demand peaks by controlling when specific workloads consume resources. Systems effectively create an internal hierarchy that governs how energy gets distributed across competing tasks. This approach enhances efficiency while maintaining service reliability under fluctuating demand conditions. The ability to control execution timing becomes a defining feature of programmable demand systems.
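
One way to picture this internal hierarchy is a priority-ordered admission pass under a power budget. The following is a minimal sketch under stated assumptions: the `Workload` fields, the numeric priorities, and the single `power_budget_kw` figure are illustrative, and a production scheduler would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    priority: int      # lower number means more urgent (assumed convention)
    power_kw: float
    deferrable: bool


def plan_execution(workloads, power_budget_kw):
    """Split workloads into those to run now and those to defer.

    Non-deferrable workloads always run, even if that exceeds the budget;
    deferrable ones run only while budget remains.
    """
    run_now, deferred = [], []
    remaining_kw = power_budget_kw
    for w in sorted(workloads, key=lambda w: w.priority):
        if not w.deferrable or w.power_kw <= remaining_kw:
            run_now.append(w.name)
            remaining_kw -= w.power_kw
        else:
            deferred.append(w.name)
    return run_now, deferred
```

For example, with an 80 kW budget, a 40 kW critical inference task and a 30 kW fine-tuning job would run, while a 50 kW batch job with lower priority would be deferred to a later window.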

Execution control mechanisms extend beyond simple scheduling into continuous optimization processes that adjust workloads in response to evolving conditions. Systems monitor performance metrics, energy availability, and grid signals to refine execution strategies in real time. This iterative process ensures that infrastructure operates at optimal efficiency without compromising output quality. AI models contribute to this optimization by learning from historical data and improving decision-making over time. The result is a self-adjusting system that balances competing objectives dynamically. Infrastructure evolves into a responsive environment that continuously aligns compute activity with energy conditions.

Adaptive Consumption as a Native Capability

Generative AI systems introduce the ability to reshape their own energy consumption patterns without requiring external intervention, which fundamentally alters how load profiles behave over time. These systems analyze real-time conditions and adjust their operational behavior to maintain equilibrium between demand and resource availability. Instead of generating fixed demand curves, AI-driven environments produce fluid consumption patterns that evolve continuously. This capability enables infrastructure to respond proactively to potential imbalances before they escalate into critical issues. Engineers design systems that treat load profiles as adjustable outputs rather than static characteristics. The concept of self-shaping load profiles becomes central to modern infrastructure strategy.

Self-shaping load profiles rely on predictive intelligence that evaluates both internal system states and external environmental factors. AI models forecast potential demand spikes and implement preemptive adjustments to mitigate their impact. These adjustments may include delaying non-critical workloads, redistributing compute tasks, or modifying execution intensity. This proactive approach reduces the likelihood of sudden demand surges that could strain energy systems. Infrastructure gains the ability to maintain stability even under highly variable conditions. The system effectively becomes capable of managing its own consumption dynamics.

The implementation of self-shaping load profiles requires robust infrastructure capable of supporting rapid decision-making and seamless workload transitions. Systems must integrate advanced monitoring tools, high-speed networking, and sophisticated orchestration frameworks to enable this level of adaptability. Engineers must ensure that these components operate cohesively to avoid performance degradation or instability. The complexity of these systems increases as they balance multiple objectives simultaneously. Despite these challenges, the benefits of adaptive consumption justify the investment in advanced capabilities. Self-shaping load profiles represent a critical advancement in the evolution of AI-driven infrastructure.

Flattening Peaks Through Intelligent Redistribution

AI systems can actively flatten demand peaks by redistributing workloads across time and infrastructure layers, which reduces the intensity of energy consumption during critical periods. This capability allows infrastructure to maintain consistent performance without overloading energy systems. Workloads shift to periods of lower demand or to locations with greater resource availability, creating a more balanced consumption profile. This redistribution enhances system stability and reduces the risk of localized stress within the grid. Infrastructure operators benefit from smoother demand curves that align more closely with available capacity. The ability to manage peaks dynamically becomes a key advantage of AI-driven systems.
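
The redistribution across time described above can be sketched with a simple greedy heuristic: place each flexible job into whichever hour currently has the lowest load. This is an illustrative simplification, not a method attributed to any particular system; the function name `flatten_peak` and the assumption that every job lasts exactly one hour are hypothetical.

```python
def flatten_peak(base_load_kw, flexible_jobs_kw):
    """Greedily place one-hour flexible jobs into the least-loaded hours.

    base_load_kw: inflexible load per hour.
    flexible_jobs_kw: power draw of each movable one-hour job.
    Returns the resulting hourly load and a list of (job_kw, hour) placements.
    """
    load = list(base_load_kw)
    placements = []
    # Place the largest jobs first so they land in the deepest troughs.
    for job_kw in sorted(flexible_jobs_kw, reverse=True):
        hour = min(range(len(load)), key=load.__getitem__)
        load[hour] += job_kw
        placements.append((job_kw, hour))
    return load, placements
```

With a base profile of `[50, 90, 60, 40]` kW and flexible jobs of 30, 20, and 10 kW, the heuristic fills the troughs and yields `[70, 90, 70, 70]`, leaving the original 90 kW peak unchanged even though total energy use has grown.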

Peak flattening also contributes to improved efficiency by reducing the need for excess capacity that would otherwise remain underutilized during normal operations. Systems can operate closer to optimal capacity levels without risking overload during peak periods. This approach enhances both economic and operational efficiency. AI-driven redistribution ensures that resources get utilized more effectively across the entire infrastructure network. The system achieves a balance between performance and sustainability that static models cannot replicate. Intelligent redistribution represents a fundamental shift in how demand gets managed within modern compute environments.

When Energy Becomes an Input Variable in Model Training

Generative AI introduces a shift where energy considerations move from operational constraints into core decision-making variables within model training processes. Systems now evaluate when and where to train models based on energy availability and grid conditions rather than relying solely on computational readiness. This approach enables infrastructure to align training cycles with favorable energy conditions, improving overall efficiency. AI models incorporate energy signals into their optimization frameworks, allowing them to adapt execution strategies dynamically. This integration transforms energy into an active parameter that influences computational workflows. Training processes become more responsive to environmental and systemic factors.

Energy-aware training allows systems to adjust parameters such as workload intensity, scheduling, and resource allocation based on real-time conditions. These adjustments ensure that training processes operate efficiently without exceeding available capacity. AI models can pause or redistribute tasks during periods of high demand, reducing strain on energy systems. This flexibility enhances the sustainability of large-scale training operations. Infrastructure becomes capable of balancing performance objectives with energy considerations in a more integrated manner. The shift toward energy-aware training reflects a broader trend toward intelligent resource management.
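
The pause-and-resume behavior can be illustrated with a toy loop that skips training steps whenever a grid stress signal crosses a threshold. Everything here is an assumption for illustration: the `grid_stress` values on a 0 to 1 scale, the `stress_threshold` default, and the idea that one tick equals one training step. A real training job would also checkpoint model state before pausing.

```python
def run_training(total_steps, grid_stress, stress_threshold=0.8):
    """Advance training one step per tick unless the grid is under stress.

    grid_stress: iterable of stress readings, one per tick (hypothetical 0-1 scale).
    Returns the number of completed steps and a per-tick action log.
    """
    completed = 0
    log = []
    for stress in grid_stress:
        if completed >= total_steps:
            break
        if stress >= stress_threshold:
            log.append("pause")   # a real job would checkpoint before idling
        else:
            completed += 1
            log.append("train")
    return completed, log
```

The job still finishes all of its steps; it simply takes more wall-clock ticks when the grid is strained, which is the trade-off energy-aware training accepts.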

The adoption of energy as an input variable also influences how organizations approach infrastructure deployment and geographic distribution. Training jobs can, in some implementations, be placed in regions and time windows with favorable energy conditions. This flexibility reduces dependence on localized resources and enhances system resilience. Organizations can optimize operations by aligning compute activity with energy availability across different regions. The integration of energy into decision-making processes introduces a new dimension of optimization. This capability reshapes how AI systems interact with the energy ecosystem.

The Feedback Loop: AI Optimizing the Infrastructure That Powers It

Generative AI introduces a recursive operational structure where systems continuously observe, evaluate, and refine the infrastructure that sustains their execution. This closed-loop architecture transforms optimization from a periodic activity into a continuous process embedded within the system itself. Sensors, telemetry pipelines, and monitoring frameworks feed real-time data into AI models that analyze energy usage, thermal conditions, and compute efficiency. These models generate actionable decisions that immediately influence workload distribution and infrastructure behavior. The system evolves through iterative adjustments that improve performance and efficiency over time. This feedback loop establishes a dynamic equilibrium between demand generation and resource optimization.

Closed-loop intelligence systems enable rapid responsiveness to changing conditions without relying on manual intervention or static policies. AI-driven controllers can adjust cooling mechanisms, reallocate workloads, and optimize hardware utilization based on current system states. These actions reduce inefficiencies that would otherwise accumulate in traditional infrastructure environments. Continuous feedback ensures that optimization strategies remain aligned with real-time conditions rather than outdated assumptions. This capability enhances both operational resilience and energy efficiency across large-scale systems. Infrastructure transitions into a self-regulating environment where optimization becomes intrinsic to its operation.
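
The observe, decide, act cycle can be shown in miniature with a proportional controller that adjusts a power cap to hold an inlet temperature target. This is a sketch under heavy assumptions: the linear thermal model in `toy_inlet_temp_c`, the 27 °C target, and the gain are invented for the example, and an AI-driven controller would replace the fixed rule with a learned policy.

```python
def control_step(power_cap_kw, inlet_temp_c, target_temp_c=27.0, gain_kw_per_c=5.0):
    """Proportional control: lower the power cap when the room runs hot."""
    error_c = inlet_temp_c - target_temp_c
    return power_cap_kw - gain_kw_per_c * error_c


def toy_inlet_temp_c(power_kw, ambient_c=20.0, c_per_kw=0.1):
    """Hypothetical plant model: temperature rises linearly with power draw."""
    return ambient_c + c_per_kw * power_kw


def run_loop(initial_cap_kw, steps):
    """Repeat observe -> decide -> act and return the cap after each step."""
    cap_kw = initial_cap_kw
    history = []
    for _ in range(steps):
        temp_c = toy_inlet_temp_c(cap_kw)      # observe
        cap_kw = control_step(cap_kw, temp_c)  # decide and act
        history.append(cap_kw)
    return history
```

With these toy numbers, a cap that starts at 100 kW settles toward 70 kW, the point where the modeled temperature equals the target. An overly large gain would make the same loop overshoot, which is why stability analysis matters in closed-loop designs.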

The implementation of closed-loop systems introduces new engineering challenges related to system stability and decision accuracy. AI models must operate reliably under diverse conditions while avoiding unintended consequences that could destabilize infrastructure. Engineers must design safeguards that validate decisions and prevent cascading failures within interconnected systems. Redundancy mechanisms and fail-safe protocols become essential components of these architectures. Despite these complexities, closed-loop intelligence provides significant advantages in managing dynamic workloads. The ability to continuously refine system behavior represents a major advancement in infrastructure design.
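
One common form of safeguard is a guard that sits between the model and the actuator, limiting how far and how fast any setpoint may move. The sketch below is illustrative only; the function name `guard_setpoint` and its parameters are hypothetical, and real fail-safe designs typically add alarms, fallbacks, and human review on top.

```python
def guard_setpoint(current, proposed, lower, upper, max_step):
    """Rate-limit a proposed change, then clamp it to the safe operating range.

    current: the setpoint in effect now.
    proposed: the value an AI controller wants to apply.
    lower, upper: hard bounds the setpoint must never leave.
    max_step: largest change allowed in a single control cycle.
    """
    step = max(-max_step, min(max_step, proposed - current))
    return max(lower, min(upper, current + step))
```

If a model proposes dropping a 100 kW cap straight to 20 kW, a guard with `max_step=10` allows only a move to 90 kW, giving operators and downstream systems time to react before any further change.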

Recursive Optimization and System Evolution

Recursive optimization allows AI systems to improve their performance incrementally by learning from historical data and operational outcomes. Each optimization cycle generates new insights that inform subsequent decisions, creating a compounding effect over time. This process enhances the system’s ability to anticipate demand patterns and adjust resource allocation proactively. Infrastructure becomes more efficient as it adapts to recurring patterns and emerging trends. The system’s intelligence evolves alongside its operational environment, leading to continuous improvement. This adaptive capability distinguishes AI-driven infrastructure from traditional static systems.

The recursive nature of these systems also introduces a level of unpredictability that requires careful management. As AI models evolve, their decision-making processes may change in ways that are not immediately transparent to operators. Engineers must maintain visibility into system behavior to ensure that optimization efforts remain aligned with operational objectives. This requires advanced monitoring tools and robust governance frameworks. The balance between autonomy and control becomes a critical consideration in system design. Managing this balance ensures that recursive optimization enhances performance without introducing instability.

Decoupling Compute Growth from Peak Load Growth

Generative AI challenges the long-standing assumption that increases in computational capacity inevitably lead to proportional increases in peak electricity demand. Intelligent orchestration systems enable infrastructure to scale compute resources while managing how and when energy gets consumed. This decoupling allows systems to expand their capabilities without placing equivalent strain on energy infrastructure. AI-driven scheduling distributes workloads across time and geography to minimize peak demand intensity. This approach redefines scalability by separating compute growth from energy spikes. Infrastructure evolves toward a model where efficiency and expansion coexist without direct proportionality.

The decoupling process relies on the ability to smooth demand curves through dynamic workload allocation and execution timing. Systems analyze usage patterns and adjust resource distribution to avoid concentrated demand peaks. This strategy reduces the need for excess capacity that would otherwise remain idle during normal operations. Infrastructure operates more efficiently by utilizing available resources more evenly over time. The result is a more balanced energy profile that supports sustainable growth. This shift represents a departure from traditional scaling laws that assumed fixed relationships between compute and energy consumption.

The implications of decoupling extend to both infrastructure design and energy system planning. Reduced peak demand alleviates pressure on grid infrastructure and minimizes the need for costly capacity expansions. Utilities can manage resources more effectively when demand patterns become less volatile. This alignment between compute systems and energy infrastructure enhances overall system stability. The ability to scale without proportional energy impact introduces new opportunities for innovation. Decoupling becomes a key principle guiding the evolution of AI-driven infrastructure.

Sustaining Growth Without Infrastructure Strain

Sustaining compute growth without increasing peak load requires continuous coordination between orchestration systems and infrastructure components. AI models must evaluate multiple variables, including workload urgency, resource availability, and energy conditions, to optimize execution strategies. This coordination ensures that growth does not translate into unsustainable demand patterns. Systems can maintain high levels of performance while operating within energy constraints. This capability enhances the long-term viability of large-scale AI deployments. Infrastructure becomes more resilient as it adapts to increasing demand without exceeding capacity limits.

The ability to sustain growth also depends on integrating advanced technologies that support dynamic resource management. High-speed networking, distributed computing frameworks, and real-time analytics enable seamless workload redistribution across infrastructure layers. These technologies allow systems to respond quickly to changing conditions and maintain optimal performance. Engineers must design architectures that support this level of flexibility without introducing excessive complexity. The balance between adaptability and simplicity becomes a key consideration. Sustaining growth without strain represents a critical objective for next-generation infrastructure systems.

The Rise of Temporal Energy Intelligence

Generative AI introduces a temporal dimension to energy management where the timing of compute execution becomes as important as the execution itself. Systems can schedule workloads based on temporal variables such as energy availability, grid conditions, and operational priorities. This approach transforms time into a strategic resource that influences how infrastructure operates. AI-driven scheduling systems evaluate these variables to determine optimal execution windows for different workloads. This capability allows infrastructure to align compute activity with favorable conditions. Temporal intelligence enhances both efficiency and system stability.

Time-based optimization enables systems to avoid periods of high demand by shifting workloads to more favorable intervals. This flexibility reduces strain on energy systems and improves overall efficiency. AI models can delay non-critical tasks without affecting long-term outcomes, allowing infrastructure to operate more effectively under varying conditions. The ability to adjust execution timing introduces a new level of control over energy consumption. Systems become more responsive to external signals and internal priorities. Temporal energy intelligence represents a significant advancement in resource management strategies.
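
Choosing an execution window can be sketched as a small search: given an hourly forecast of some grid signal, find the start hour that minimizes the total over the job's duration while still meeting a deadline. The forecast values, the use of carbon intensity as the signal, and the function name `best_start_hour` are assumptions for illustration.

```python
def best_start_hour(carbon_intensity, duration_h, deadline_h):
    """Return the start hour with the lowest summed intensity before the deadline.

    carbon_intensity: hourly forecast values (hypothetical gCO2/kWh).
    duration_h: how many consecutive hours the job needs.
    deadline_h: hour index by which the job must have finished.
    """
    latest_start = min(deadline_h, len(carbon_intensity)) - duration_h
    if latest_start < 0:
        raise ValueError("job cannot finish before the deadline")

    def window_cost(start):
        return sum(carbon_intensity[start:start + duration_h])

    return min(range(latest_start + 1), key=window_cost)
```

The deadline is what keeps deferral from affecting long-term outcomes: a tighter deadline shrinks the set of candidate windows, so the scheduler may have to accept a less favorable interval.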

The integration of temporal intelligence requires infrastructure capable of supporting flexible scheduling and rapid workload transitions. Systems must maintain high levels of performance while adapting to changing conditions. This capability depends on robust orchestration frameworks and real-time data integration. Engineers must design architectures that enable seamless coordination across distributed environments. The complexity of these systems increases as they incorporate temporal variables into decision-making processes. Despite these challenges, temporal intelligence provides substantial benefits in optimizing energy usage.

Synchronizing Compute with Energy Cycles

Synchronizing compute activity with energy cycles allows infrastructure to operate in harmony with external conditions rather than in isolation. AI systems can align workloads with periods of favorable energy availability, improving efficiency and reducing environmental impact. This synchronization enhances the integration between compute systems and energy infrastructure. Systems become capable of responding to fluctuations in energy supply and demand dynamically. This capability supports more sustainable and efficient operations. Synchronization represents a key aspect of temporal energy intelligence.

The process of synchronization involves continuous monitoring and analysis of both internal and external variables. AI models must evaluate energy conditions, workload requirements, and system performance to determine optimal execution strategies. This analysis enables infrastructure to adapt to changing conditions in real time. Engineers must ensure that synchronization mechanisms operate reliably under diverse scenarios. The integration of these capabilities enhances the overall resilience of infrastructure systems. Synchronizing compute with energy cycles represents a critical step toward more adaptive and efficient systems.

From Energy Efficiency to Energy Orchestration

Energy efficiency once defined the primary benchmark for infrastructure performance, yet generative AI shifts the focus toward a more dynamic and system-wide concept of orchestration. Systems no longer aim solely to reduce total energy consumption because optimization now depends on when, where, and how energy gets used. This transition reflects a broader recognition that static efficiency metrics cannot capture the complexity of AI-driven workloads. AI systems coordinate multiple variables simultaneously, including workload priority, grid conditions, and infrastructure state. This coordination allows infrastructure to achieve balanced outcomes across performance, cost, and sustainability dimensions. Energy orchestration emerges as the governing framework for modern compute environments.

Energy orchestration involves integrating intelligence across the entire infrastructure stack, from hardware components to software platforms and external energy systems. AI-driven controllers continuously analyze data streams to make decisions that align with both operational objectives and environmental conditions. These decisions influence how workloads get scheduled, how resources get allocated, and how energy flows through the system. The result is a coordinated environment where every component contributes to optimized performance. Infrastructure evolves into a system that actively manages its own energy dynamics rather than reacting to them. This shift introduces a higher level of sophistication in system design and operation.

The move toward orchestration also requires redefining how success gets measured within infrastructure systems. Traditional metrics must expand to include factors such as adaptability, responsiveness, and integration with external systems. Engineers must develop new frameworks that capture the dynamic behavior of AI-driven environments. This approach ensures that optimization efforts reflect real-world conditions rather than simplified models. Organizations must adopt a holistic perspective that considers both technical and environmental factors. Energy orchestration represents a comprehensive approach to managing complex systems.

Coordinating Multi-Layered Energy Decisions

Coordinating energy decisions across multiple layers of infrastructure requires seamless integration between different system components and operational domains. AI systems must align decisions made at the application level with those at the hardware and energy levels. This alignment ensures that optimization efforts remain consistent across the entire system. Disjointed decision-making could lead to inefficiencies or conflicts that undermine overall performance. Engineers must design architectures that support unified control across all layers. This coordination enhances the effectiveness of energy orchestration strategies.

Multi-layer coordination also depends on the ability to process and interpret large volumes of data in real time. AI models must synthesize information from diverse sources to make informed decisions. This capability enables infrastructure to respond quickly to changing conditions and maintain optimal performance. The integration of data across multiple layers introduces complexity but also provides greater insight into system behavior. Engineers must ensure that data flows remain accurate and reliable. Coordinating energy decisions across layers becomes a critical requirement for modern infrastructure systems.

Grid Visibility Becomes a Compute Requirement

Generative AI systems increasingly depend on real-time visibility into grid conditions to optimize their operation effectively. This requirement transforms grid data into a fundamental input for compute decision-making processes. Information such as energy pricing signals, congestion indicators, and carbon intensity levels directly influences how workloads get scheduled and executed. AI systems integrate this data into their orchestration frameworks to enhance efficiency and sustainability. This integration creates a tightly coupled relationship between compute infrastructure and energy systems. Grid visibility becomes a core requirement rather than an optional feature.
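As a rough illustration of how pricing, congestion, and carbon intensity could feed a scheduling decision, the following sketch picks a start slot for a deferrable job. The `GridSlot` fields, the equal default weights, and the sample values are assumptions made for the example, not data from a real grid operator.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class GridSlot:
    hour: int                # hour of day the slot starts
    price_per_mwh: float     # energy price signal
    carbon_g_per_kwh: float  # carbon intensity signal
    congested: bool          # congestion indicator


def pick_slot(slots: list[GridSlot], price_weight: float = 0.5,
              carbon_weight: float = 0.5) -> GridSlot | None:
    """Pick the uncongested slot with the lowest weighted price/carbon score.

    Assumes non-negative prices and intensities; returns None if every
    slot is congested.
    """
    candidates = [s for s in slots if not s.congested]
    if not candidates:
        return None
    # Normalize each signal by its maximum so the two weights are comparable.
    max_price = max(s.price_per_mwh for s in candidates) or 1.0
    max_carbon = max(s.carbon_g_per_kwh for s in candidates) or 1.0

    def score(s: GridSlot) -> float:
        return (price_weight * s.price_per_mwh / max_price
                + carbon_weight * s.carbon_g_per_kwh / max_carbon)

    return min(candidates, key=score)


slots = [
    GridSlot(hour=14, price_per_mwh=80.0, carbon_g_per_kwh=400.0, congested=False),
    GridSlot(hour=2, price_per_mwh=40.0, carbon_g_per_kwh=300.0, congested=False),
    GridSlot(hour=3, price_per_mwh=20.0, carbon_g_per_kwh=200.0, congested=True),
]
best = pick_slot(slots)
print(best.hour if best else "no uncongested slot")
```

In this sample the 03:00 slot has the best price and carbon figures but is excluded because it is congested, so the job lands at 02:00. Treating congestion as a hard filter and the other two signals as a weighted score is a design choice for the sketch; a real scheduler might weigh them differently.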

Embedding real-time awareness requires data integration capabilities that allow systems to process and respond to external signals with minimal delay. AI models must continuously monitor grid conditions and adjust their behavior accordingly. This capability enables infrastructure to align compute activity with energy availability and system constraints. The result is a more responsive and adaptive environment that tracks external conditions instead of ignoring them. Engineers must design systems that can handle the complexity of integrating multiple data sources. Real-time awareness can improve both efficiency and system resilience.

The reliance on grid visibility also introduces new challenges related to data reliability and system integration. Inaccurate or delayed data could lead to suboptimal decisions that affect system performance. Engineers must implement mechanisms to validate data and ensure its accuracy under varying conditions. This requires robust data management frameworks and continuous monitoring processes. Despite these challenges, the benefits of integrating grid visibility remain significant. The ability to make informed decisions based on real-time conditions represents a critical advancement in infrastructure design.
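A minimal sketch of such a validation mechanism, assuming a single numeric signal with a timestamp, might reject readings that are stale or outside a plausible range and fall back to a conservative value. The `Reading` type, the 300-second age limit, and the 0 to 1500 range are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    value: float      # e.g. carbon intensity in gCO2/kWh (assumed unit)
    timestamp: float  # seconds since epoch when the reading was taken


def validated(reading: Reading | None, now: float, max_age_s: float = 300.0,
              lo: float = 0.0, hi: float = 1500.0,
              fallback: float | None = None) -> float | None:
    """Return reading.value if it is fresh and plausible, else `fallback`."""
    if reading is None:
        return fallback
    age = now - reading.timestamp
    # Reject readings from the future (clock skew) and stale readings.
    if age < 0 or age > max_age_s:
        return fallback
    # The chained comparison is False for NaN, so NaN is rejected too.
    if not (lo <= reading.value <= hi):
        return fallback
    return reading.value


fresh = validated(Reading(420.0, timestamp=1000.0), now=1100.0)
stale = validated(Reading(420.0, timestamp=1000.0), now=2000.0, fallback=600.0)
```

Choosing a pessimistic fallback (here a higher intensity than the stale reading) means that missing data pushes the scheduler toward caution instead of toward an optimistic guess.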

Compute Systems as Grid-Aware Entities

Compute systems evolve into grid-aware entities that actively respond to external conditions rather than operating in isolation. AI-driven infrastructure can adjust workloads based on grid signals, which can contribute to grid stability. This capability enables data centers to participate in energy markets and demand response programs. Systems become more integrated with the broader energy ecosystem, creating opportunities for enhanced efficiency and sustainability. Engineers must design architectures that support this level of interaction. Grid-aware systems represent a significant step forward in infrastructure evolution.
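To make the demand response idea concrete, the sketch below shows one possible way a facility could react to a curtailment target: protect critical load and trim only deferrable load. The function name, the split into critical and deferrable load, and the numbers are assumptions for illustration and do not describe any particular program's rules.

```python
def respond_to_curtailment(critical_kw: float, deferrable_kw: float,
                           target_kw: float) -> tuple[float, float]:
    """Return (deferrable_kw_allowed, shortfall_kw) for a site power target.

    Critical load is never shed here. shortfall_kw is how far critical
    load alone exceeds the target, which the site cannot resolve by
    deferring work.
    """
    headroom = target_kw - critical_kw
    allowed = max(0.0, min(deferrable_kw, headroom))
    shortfall = max(0.0, -headroom)
    return allowed, shortfall


# Assumed example: 600 kW of inference that must keep running,
# 400 kW of batch training that can wait, and an 800 kW target.
allowed_kw, shortfall_kw = respond_to_curtailment(600.0, 400.0, 800.0)
```

With these inputs, 200 kW of the deferrable load keeps running and the rest is postponed. A nonzero shortfall signals that the target cannot be met by deferral alone and needs a different response.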

The transition to grid-aware systems also changes how organizations approach infrastructure planning and deployment. Systems must be capable of adapting to varying grid conditions across different regions. This requires flexible architectures that support distributed operations and real-time decision-making. Organizations can optimize performance by aligning compute activity with local energy conditions. This approach enhances both efficiency and resilience. Grid awareness becomes a defining characteristic of modern AI infrastructure.

The New Risk: When Intelligence Fails the Load It Created

The increasing reliance on AI-driven orchestration introduces systemic risks that stem from the complexity and autonomy of these systems. Failures in optimization mechanisms can propagate across interconnected infrastructure, amplifying their impact. During periods of high demand or grid stress, these failures could lead to significant disruptions in both compute and energy systems. AI systems that misinterpret data or execute incorrect decisions may exacerbate existing challenges. This risk highlights the importance of designing systems that remain stable under adverse conditions. Engineers must address these vulnerabilities to ensure reliable operation.

Systemic vulnerabilities also arise from the interdependence between different components within AI-driven infrastructure. A failure in one subsystem can trigger cascading effects that affect the entire system. This interconnectedness increases the potential for widespread disruptions. Engineers must implement safeguards that isolate failures and prevent them from spreading. These safeguards include redundancy, fault tolerance mechanisms, and continuous monitoring. The goal is to maintain system stability even when individual components fail. Addressing these vulnerabilities becomes a critical aspect of infrastructure design.
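One common pattern for isolating a misbehaving component is a circuit breaker. The sketch below applies that pattern to a hypothetical optimizer: after a set number of consecutive failures, the breaker stops calling the optimizer and uses a static fallback until an operator resets it. The class, its parameters, and the manual reset policy are assumptions, not a description of any specific product.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stop calling a failing optimizer after repeated consecutive errors."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures seen so far

    @property
    def is_open(self) -> bool:
        # An open breaker means the optimizer is isolated from the system.
        return self.failures >= self.max_failures

    def call(self, optimizer: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.is_open:
            return fallback()
        try:
            result = optimizer()
        except Exception:
            self.failures += 1
            return fallback()
        self.failures = 0  # a success clears the consecutive-failure count
        return result

    def reset(self) -> None:
        """Close the breaker again, e.g. after an operator has investigated."""
        self.failures = 0
```

The fallback would typically be a simple, well-understood policy such as a fixed power cap, so that a fault in the optimization layer degrades efficiency without spreading to the workloads it manages.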

The complexity of AI systems makes it challenging to predict all possible failure scenarios. Engineers must adopt a proactive approach to risk management that includes continuous testing and validation. This approach ensures that systems remain resilient under diverse conditions. Organizations must invest in developing robust governance frameworks that oversee AI-driven operations. These frameworks help ensure that optimization efforts align with overall system objectives. Managing systemic risks represents a key challenge in the evolution of AI-driven infrastructure.

Balancing Autonomy with Control

Balancing the autonomy of AI systems with the need for human oversight becomes a critical consideration in infrastructure design. Fully autonomous systems may achieve high levels of efficiency but also introduce risks if they operate without sufficient controls. Engineers must design mechanisms that allow for intervention when necessary. These mechanisms ensure that systems can respond effectively to unexpected conditions. The balance between autonomy and control influences both system performance and reliability. Achieving this balance requires careful design and continuous monitoring.

Human oversight plays a crucial role in ensuring that AI systems operate within acceptable parameters. Operators must have visibility into system behavior and the ability to intervene when needed. This capability requires intuitive interfaces and robust monitoring tools. Engineers must design systems that provide clear insights into decision-making processes. Transparency becomes essential for maintaining trust in AI-driven infrastructure. Balancing autonomy with control ensures that systems remain both efficient and reliable.
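A simple way to express this balance in code is a gate that applies small adjustments automatically, routes larger ones to an operator, and records every decision. The sketch below is a hypothetical illustration: `OversightGate`, the 50 kW threshold, and the `approve` callback are assumptions for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

Approver = Callable[[float, float, str], bool]


@dataclass
class OversightGate:
    auto_limit_kw: float  # largest change applied without a human
    audit_log: list[dict] = field(default_factory=list)

    def submit(self, current_kw: float, proposed_kw: float, reason: str,
               approve: Approver | None = None) -> float:
        """Return the power setpoint to apply after the oversight check."""
        needs_human = abs(proposed_kw - current_kw) > self.auto_limit_kw
        if needs_human:
            # With no approver available, the change is rejected by default.
            approved = approve is not None and bool(
                approve(current_kw, proposed_kw, reason))
        else:
            approved = True
        # Every decision is logged with its reason for later review.
        self.audit_log.append({
            "current_kw": current_kw,
            "proposed_kw": proposed_kw,
            "reason": reason,
            "needs_human": needs_human,
            "approved": approved,
        })
        return proposed_kw if approved else current_kw


gate = OversightGate(auto_limit_kw=50.0)
small = gate.submit(500.0, 530.0, "price dip")         # applied automatically
large = gate.submit(500.0, 700.0, "forecast surplus")  # held: no approver
```

Logging the stated reason next to each decision gives operators the visibility into decision-making that the text calls for, and rejecting large changes when no approver responds keeps the default on the side of control.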

Generative AI establishes a persistent duality within infrastructure systems where demand creation and demand orchestration coexist as inseparable functions. This condition does not resolve into a stable equilibrium because both aspects remain essential to system performance. Infrastructure must therefore evolve to operate within this paradox rather than attempting to eliminate it. Engineers must design systems that accommodate continuous tension between expansion and optimization. This approach requires embracing complexity as a fundamental characteristic of modern infrastructure. The ability to operate within duality becomes a defining feature of successful systems.

Operating Within the Paradox

Operating within this paradox involves integrating advanced technologies with robust design principles that support adaptability and resilience. AI-driven orchestration provides the tools needed to manage dynamic demand patterns effectively. However, these tools must operate within frameworks that ensure stability and reliability under varying conditions. Engineers must balance innovation with caution to avoid introducing new vulnerabilities. This balance shapes the evolution of infrastructure design in AI-driven environments. The focus shifts from solving the paradox to managing it effectively.

The future of infrastructure will depend on the ability to harness the dual role of generative AI as both a load creator and a load orchestrator. Systems that successfully integrate these functions will achieve higher levels of efficiency and resilience. This integration creates opportunities for innovation while also introducing new challenges. Engineers must continue to refine their approaches to managing this dynamic relationship. The interplay between compute and energy systems will shape the trajectory of technological development. Designing for duality rather than resolution represents the path forward in this evolving landscape.