Air cooling built the data center industry. For decades it worked well enough. Operators pushed cold air under raised floors, directed it toward hot aisles, and exhausted the heat through the facility envelope. The physics were manageable. The equipment was familiar. The economics made sense. That model is not failing gradually. It is hitting a hard wall, and the wall is the AI chip. Direct-to-chip liquid cooling has moved from an experimental option to a baseline requirement in any facility built for serious AI workloads. The question is no longer whether to deploy it. The question is how fast and at what cost.

Why Air Cooling Cannot Follow AI Compute Into the Next Generation

The thermal challenge of AI compute is not just about total heat output. It is about heat density. A modern GPU cluster generates heat in a concentrated volume at intensities that air cannot remove quickly enough. Chips fall outside safe operating temperature ranges before airflow can carry the load away. Conventional air cooling becomes less effective as rack power density rises. The volume of air required to carry the heat away grows faster than the physical space available to move it. Beyond a certain density threshold, air cooling stops being an engineering problem. It becomes a physics problem. That threshold has already been crossed for the most power-intensive AI hardware in production today.

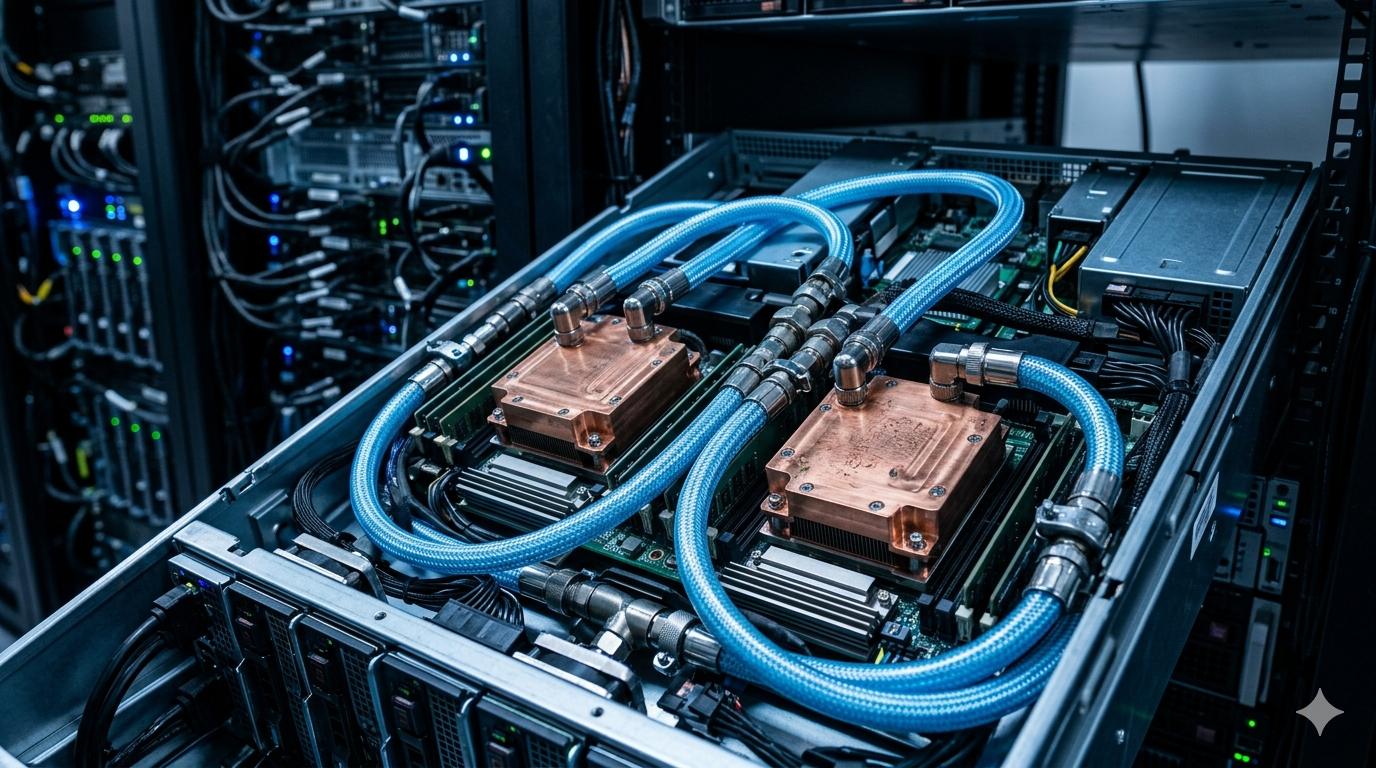

Direct-to-chip cooling addresses this by delivering coolant directly to a cold plate mounted on the processor. The cold plate absorbs heat at the source before it dissipates into the surrounding air. Coolant carries the heat away through a closed loop to a coolant distribution unit. From there it transfers to facility water or a dry cooler. The efficiency advantage over air cooling is substantial. Liquid carries far more thermal energy per unit volume than air. The same cooling effect requires far less mechanical energy and far less physical infrastructure. For operators managing the economics of AI infrastructure at scale, that efficiency gap affects total cost of ownership in ways that compound over a multi-year deployment.

Where the Deployment Complexity Actually Lives

The technical case for direct-to-chip cooling is settled. The deployment challenge is not physics. It is integration. Liquid cooling requires piping, manifolds, coolant distribution units, leak detection systems, and facility water infrastructure. Most existing colocation environments were not built to support any of that. Retrofitting a live facility introduces risks that greenfield deployment does not face. Operators must route piping through active spaces and install new mechanical systems without disrupting existing customers. Leak detection requires validation before liquid infrastructure goes anywhere near compute hardware.

Leak risk slows adoption most consistently. A leak in a direct-to-chip cooling system can damage servers that represent significant capital investment. The engineering response has been the development of dry-break connectors, pressurised monitoring systems, and secondary containment designs. These reduce but do not eliminate the possibility of fluid escape. Facilities that deploy direct-to-chip cooling at scale build detection and response capability into operations from the start. Treating leak management as an edge case is a mistake that operators in this space cannot afford to make.

The Market Has Already Decided

Hyperscalers are not waiting for the retrofit question to resolve itself. New AI campus builds increasingly specify liquid cooling as a baseline requirement rather than an optional upgrade. The supply chain for direct-to-chip cooling components has responded accordingly. Coolant distribution unit manufacturers, cold plate designers, and facility piping specialists are seeing demand that reflects a market that has made its decision. The debate is over in the segment where AI infrastructure investment concentrates most heavily.

The remaining question concerns the large installed base of air-cooled colocation capacity built for a different generation of compute hardware. Retrofitting some of it is viable. Other facilities will serve workloads that do not require liquid cooling. The rest will become stranded as AI customers migrate to purpose-built liquid-cooled facilities. As AI density continues to outpace infrastructure reality, operators who move fastest on liquid cooling infrastructure are not just solving a thermal problem. They are positioning for a market transition that is already underway and will not reverse.

The Infrastructure Investment That Cannot Be Deferred

Direct-to-chip cooling is not a feature that operators can add later without cost. Facilities designed from the ground up with liquid cooling infrastructure have fundamentally different economics than those that retrofit it afterward. Piping architecture, floor loading capacity, mechanical room sizing, and facility water systems all require specification at the design stage. Retrofitting them later means expensive rework and operational disruption. Operators who defer this investment because their current customers do not yet require it are making a decision that future customers will price into their procurement choices.

The colocation market is already sorting itself along this line. AI-native customers evaluating data center capacity treat liquid cooling readiness as a qualification criterion rather than a preference. A facility that cannot support direct-to-chip cooling for high-density GPU clusters is simply not in consideration for those workloads. Location, connectivity, pricing, and operational track record all matter less than the ability to support the compute hardware the customer intends to deploy. Operators without the infrastructure investment are not losing on price. They are losing before the conversation starts.

Why This Decision Defines the Next Phase of Data Center Competition

The investment case for direct-to-chip cooling has closed. Facilities built today for AI workloads treat it as a standard specification rather than a premium option. Operators still treating it as optional are not behind the curve on a technical preference. They are behind on a market requirement that their most valuable potential customers have already established as non-negotiable.

The competitive implications extend beyond individual facility decisions. Operators who build liquid cooling capability early accumulate operational expertise that latecomers cannot quickly replicate. Running direct-to-chip systems at scale requires engineering knowledge that the labor market does not supply in large quantities. Facilities that develop it internally hold an advantage that compounds as AI workload density continues to rise. The 600kW rack problem will not be solved by air cooling under any configuration. The operators who have already built direct-to-chip capability will be the ones positioned to support whatever comes next.